## Grouped Bar Chart: Mean Ratio Comparison Between Two Language Models

### Overview

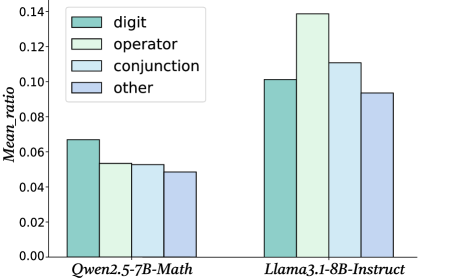

The image is a grouped bar chart comparing the "Mean ratio" of four token categories across two large language models: **Qwen2.5-7B-Math** and **Llama3.1-8B-Instruct**. Llama3.1-8B-Instruct has a higher mean ratio than Qwen2.5-7B-Math in every category, often by a wide margin.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:**

  * **Label:** "Mean ratio"

  * **Scale:** Linear scale from 0.00 to 0.14, with major tick marks at intervals of 0.02 (0.00, 0.02, 0.04, 0.06, 0.08, 0.10, 0.12, 0.14).

* **X-Axis:**

  * **Categories (Models):** Two primary groups labeled "Qwen2.5-7B-Math" (left group) and "Llama3.1-8B-Instruct" (right group).

* **Legend:**

  * **Position:** Top-left corner of the chart area.

  * **Categories & Colors:**

    1. `digit`: teal (approximate hex: #7fcdbb)

    2. `operator`: light green (approximate hex: #c7e9b4)

    3. `conjunction`: light blue (approximate hex: #a1d9f4)

    4. `other`: lavender/light purple (approximate hex: #d0d1e6)

### Detailed Analysis

The chart presents the mean ratio for four token types for each model. Values are approximate based on visual inspection against the y-axis.

**For Qwen2.5-7B-Math (Left Group):**

* **Trend:** All four bars are relatively short, close in height, and below the 0.08 gridline; `digit` is the tallest.

* **Data Points (Approximate):**

  * `digit` (Teal): ~0.068

  * `operator` (Light Green): ~0.054

  * `conjunction` (Light Blue): ~0.054

  * `other` (Lavender): ~0.049

**For Llama3.1-8B-Instruct (Right Group):**

* **Trend:** All four bars are noticeably taller than their counterparts in the Qwen group. The `operator` bar is the tallest, followed by `conjunction`, then `digit`, and finally `other`.

* **Data Points (Approximate):**

  * `digit` (Teal): ~0.102

  * `operator` (Light Green): ~0.139

  * `conjunction` (Light Blue): ~0.111

  * `other` (Lavender): ~0.094
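The approximate readings above can be stored in a small dictionary so that the trend statements for each group can be checked mechanically. This is a minimal sketch that assumes the eyeballed values listed above; they are visual estimates, not the underlying data, and the names `MEAN_RATIO` and `trend_checks` are illustrative only.

```python
# Approximate mean ratios read off the chart by eye (not the source data).
MEAN_RATIO = {
    "Qwen2.5-7B-Math": {"digit": 0.068, "operator": 0.054, "conjunction": 0.054, "other": 0.049},
    "Llama3.1-8B-Instruct": {"digit": 0.102, "operator": 0.139, "conjunction": 0.111, "other": 0.094},
}


def trend_checks(data):
    """Return whether each trend statement in the text holds for the estimates."""
    qwen = data["Qwen2.5-7B-Math"]
    llama = data["Llama3.1-8B-Instruct"]
    return {
        # Every Qwen bar sits below the 0.08 gridline.
        "qwen_all_below_0.08": all(v < 0.08 for v in qwen.values()),
        # Every Llama bar is taller than the matching Qwen bar.
        "llama_taller_everywhere": all(llama[cat] > qwen[cat] for cat in qwen),
        # Llama ordering from tallest to shortest: operator, conjunction, digit, other.
        "llama_order": sorted(llama, key=llama.get, reverse=True)
        == ["operator", "conjunction", "digit", "other"],
    }


for name, holds in trend_checks(MEAN_RATIO).items():
    print(f"{name}: {holds}")
```

With these estimates, all three checks print `True`. Because the values are read from the image, small reading errors could change a check that depends on a narrow gap.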

### Key Observations

1. **Model Disparity:** The most prominent observation is the large gap in magnitude between the two models. In every token category, Llama3.1-8B-Instruct's mean ratio is roughly 1.5 to 2.6 times that of Qwen2.5-7B-Math, from about 1.5 times for `digit` (~0.102 vs. ~0.068) to about 2.6 times for `operator` (~0.139 vs. ~0.054).

2. **Category Ranking:** The internal ranking of categories differs between models.

   * For **Qwen**, `digit` is the highest, followed by a tie between `operator` and `conjunction`, with `other` the lowest.

   * For **Llama**, `operator` is the highest, followed by `conjunction`, then `digit`, with `other` again the lowest.

3. **Operator Emphasis:** The `operator` category changes the most between models, in both absolute terms (about +0.085) and relative terms (about 2.6 times). It moves from a middle position in Qwen, tied with `conjunction` at ~0.054, to the top position in Llama.
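The three observations reduce to simple arithmetic on the approximate readings: a per-category Llama-to-Qwen ratio, a within-model ranking, and a per-category absolute increase. The sketch below assumes the eyeballed values from the Detailed Analysis (not the underlying data); `MEAN_RATIO` and `dense_rank` are hypothetical names, and dense ranking is one chosen way to represent ties.

```python
# Approximate mean ratios read off the chart by eye (not the source data).
MEAN_RATIO = {
    "Qwen2.5-7B-Math": {"digit": 0.068, "operator": 0.054, "conjunction": 0.054, "other": 0.049},
    "Llama3.1-8B-Instruct": {"digit": 0.102, "operator": 0.139, "conjunction": 0.111, "other": 0.094},
}


def dense_rank(values):
    """Rank categories from highest (1) to lowest; equal values share a rank."""
    distinct = sorted(set(values.values()), reverse=True)
    return {cat: distinct.index(v) + 1 for cat, v in values.items()}


qwen = MEAN_RATIO["Qwen2.5-7B-Math"]
llama = MEAN_RATIO["Llama3.1-8B-Instruct"]

# Observation 1: how many times larger each Llama bar is than its Qwen counterpart.
ratios = {cat: llama[cat] / qwen[cat] for cat in qwen}
# Observation 2: within-model rankings (Qwen's operator/conjunction tie shares rank 2).
qwen_rank, llama_rank = dense_rank(qwen), dense_rank(llama)
# Observation 3: absolute gain from Qwen to Llama.
increase = {cat: llama[cat] - qwen[cat] for cat in qwen}

for cat in qwen:
    print(f"{cat:<12} ratio={ratios[cat]:.2f} increase={increase[cat]:.3f} "
          f"rank Qwen={qwen_rank[cat]} rank Llama={llama_rank[cat]}")
```

With these estimates the ratios run from about 1.50 (`digit`) to about 2.57 (`operator`), and `operator` also has the largest absolute increase. Dense ranking keeps the Qwen tie visible as a shared rank instead of imposing an arbitrary order on two equal bars.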

### Interpretation

The chart does not define "Mean ratio," so its meaning has to be inferred. It likely visualizes a metric related to how each model uses or attends to tokens of each type, possibly during mathematical reasoning tasks (given the "Math" in Qwen's name and token categories such as `digit` and `operator`).

* **What the data suggests:** The consistently higher mean ratio for Llama3.1-8B-Instruct across all categories could have several explanations: a different tokenization strategy, a higher density or frequency of these token types in its outputs or internal representations, or a different architectural approach to processing mathematical language. That `operator` tokens are the most prominent in Llama might suggest that it places relatively more emphasis on procedural or operational steps in its reasoning than Qwen does.

* **Relationship between elements:** Plotting the same four categories side by side for both models makes the comparison easy to read, and the legend is needed to map each bar color to its token category. The comparison is not controlled, however: the two models differ in family, size (7B vs. 8B), and training (a math-specialized model vs. a general instruction-tuned model), so the chart alone cannot attribute the gap to any single factor such as architecture or training.

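* **Notable anomalies:** The reversal in the ranking of `digit` and `operator` is a key finding: `digit` leads in Qwen, while in Llama `operator` leads and `digit` falls to third. This may point to a difference in how the two models treat core components of mathematical language. The `other` category is the lowest in both models, which suggests the measured effect is somewhat stronger for the three named categories (digit, operator, conjunction) than for the remaining tokens. Because each bar is a per-category mean rather than a share of a total, however, the chart does not by itself show that these three categories drive any overall value.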