TECHNICAL ASSET FINGERPRINT

2984dad06a7127dcf8ddffb3

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

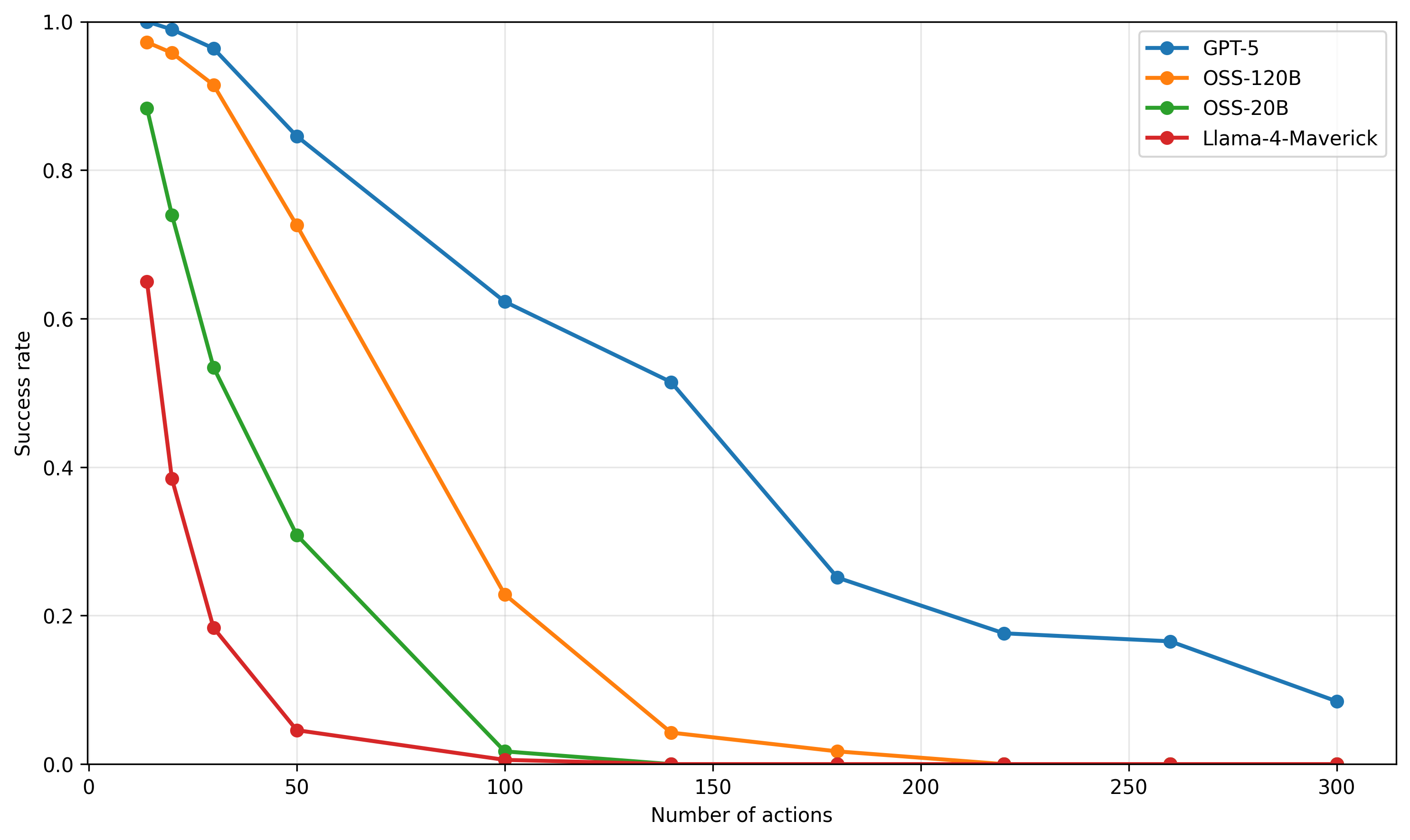

## Line Chart: Success Rate vs. Number of Actions for Different Language Models

### Overview

The image is a line chart comparing the success rate of four different language models (GPT-5, OSS-120B, OSS-20B, and Llama-4-Maverick) as the number of actions increases. The chart plots the success rate on the y-axis against the number of actions on the x-axis. Each model is represented by a different colored line with circular markers.

### Components/Axes

* **X-axis:** Number of actions, ranging from 0 to 300 in increments of 50.

* **Y-axis:** Success rate, ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend (Top-Right):**

  * Blue: GPT-5

  * Orange: OSS-120B

  * Green: OSS-20B

  * Red: Llama-4-Maverick

### Detailed Analysis

Approximate data points are listed as (number of actions, success rate).

* **GPT-5 (Blue):** The success rate starts at approximately 1.0 and gradually decreases as the number of actions increases.

  * (0, 1.0)

  * (25, 0.98)

  * (50, 0.84)

  * (100, 0.62)

  * (150, 0.51)

  * (200, 0.18)

  * (250, 0.16)

  * (300, 0.08)

* **OSS-120B (Orange):** The success rate starts near 1.0 and decreases more rapidly than GPT-5.

  * (0, 0.98)

  * (25, 0.92)

  * (50, 0.72)

  * (100, 0.22)

  * (150, 0.02)

  * (200, 0.01)

  * (250, 0.00)

  * (300, 0.00)

* **OSS-20B (Green):** The success rate drops sharply as the number of actions increases.

  * (0, 0.88)

  * (25, 0.73)

  * (50, 0.30)

  * (100, 0.01)

  * (150, 0.00)

  * (200, 0.00)

  * (250, 0.00)

  * (300, 0.00)

* **Llama-4-Maverick (Red):** The success rate decreases very rapidly and plateaus near zero.

  * (0, 0.65)

  * (25, 0.38)

  * (50, 0.18)

  * (100, 0.03)

  * (150, 0.00)

  * (200, 0.00)

  * (250, 0.00)

  * (300, 0.00)
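To make the listed readings easier to compare, the following is a minimal sketch that estimates where each curve first falls to a success rate of 0.5. It assumes straight-line segments between the approximate points above; the 0.5 threshold and the names `READINGS` and `crossing_point` are illustrative choices, not part of the chart.

```python
# Approximate readings from the list above: (number of actions, success rate).
READINGS = {
    "GPT-5": [(0, 1.0), (25, 0.98), (50, 0.84), (100, 0.62),
              (150, 0.51), (200, 0.18), (250, 0.16), (300, 0.08)],
    "OSS-120B": [(0, 0.98), (25, 0.92), (50, 0.72), (100, 0.22),
                 (150, 0.02), (200, 0.01), (250, 0.00), (300, 0.00)],
    "OSS-20B": [(0, 0.88), (25, 0.73), (50, 0.30), (100, 0.01),
                (150, 0.00), (200, 0.00), (250, 0.00), (300, 0.00)],
    "Llama-4-Maverick": [(0, 0.65), (25, 0.38), (50, 0.18), (100, 0.03),
                         (150, 0.00), (200, 0.00), (250, 0.00), (300, 0.00)],
}


def crossing_point(points, threshold=0.5):
    """Interpolated action count where the curve first drops below `threshold`.

    Returns None if no segment goes from at-or-above the threshold to below it
    (for example, if the curve never drops that far within the plotted range).
    """
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 >= threshold > y1:
            # Linear interpolation inside the segment that straddles the threshold.
            return x0 + (y0 - threshold) / (y0 - y1) * (x1 - x0)
    return None


if __name__ == "__main__":
    for model, points in READINGS.items():
        print(model, crossing_point(points))
```

Because the inputs are visual estimates, the resulting crossing points are only as precise as the readings themselves.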

### Key Observations

* GPT-5 maintains the highest success rate across all numbers of actions compared to the other models.

* Llama-4-Maverick and OSS-20B experience the most rapid decline in success rate.

* OSS-120B, OSS-20B, and Llama-4-Maverick reach a success rate of approximately zero by 150 actions; GPT-5 declines to about 0.08 at 300 actions.

### Interpretation

The chart illustrates how well each language model maintains its success rate as the number of actions increases. GPT-5 is the most robust, sustaining a higher success rate over longer action sequences. The other models, particularly Llama-4-Maverick and OSS-20B, degrade much sooner. This could reflect differences in model architecture, training data, or optimization strategies. The decline of every model at higher action counts (GPT-5 falls to about 0.08 at 300 actions, and the others reach approximately zero by 150) suggests a common limitation in handling very long sequences, possibly due to errors accumulating across steps.
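One way to build intuition for error accumulation is a toy model in which every action succeeds independently with the same probability `p`, so a task of `n` actions succeeds with probability `p ** n`. This independence assumption is not stated anywhere in the chart; the sketch below only shows what it would imply, using the GPT-5 reading of about 0.62 at 100 actions. The function names are hypothetical.

```python
def per_step_success(success_rate, num_actions):
    """Per-action success probability implied by an overall success rate,
    assuming independent, identically reliable actions (a toy assumption)."""
    return success_rate ** (1.0 / num_actions)


def predicted_success(per_step, num_actions):
    """Overall success probability after `num_actions` independent actions."""
    return per_step ** num_actions


if __name__ == "__main__":
    # GPT-5 reading from the analysis above: about 0.62 at 100 actions.
    p = per_step_success(0.62, 100)
    print(f"implied per-action success: {p:.5f}")
    print(f"toy prediction at 300 actions: {predicted_success(p, 300):.3f}")
```

Under this toy model the prediction at 300 actions is 0.62 cubed, roughly 0.24, which is higher than the reading of about 0.08. The constant-rate picture is therefore only a rough intuition, not a fit to the chart.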

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

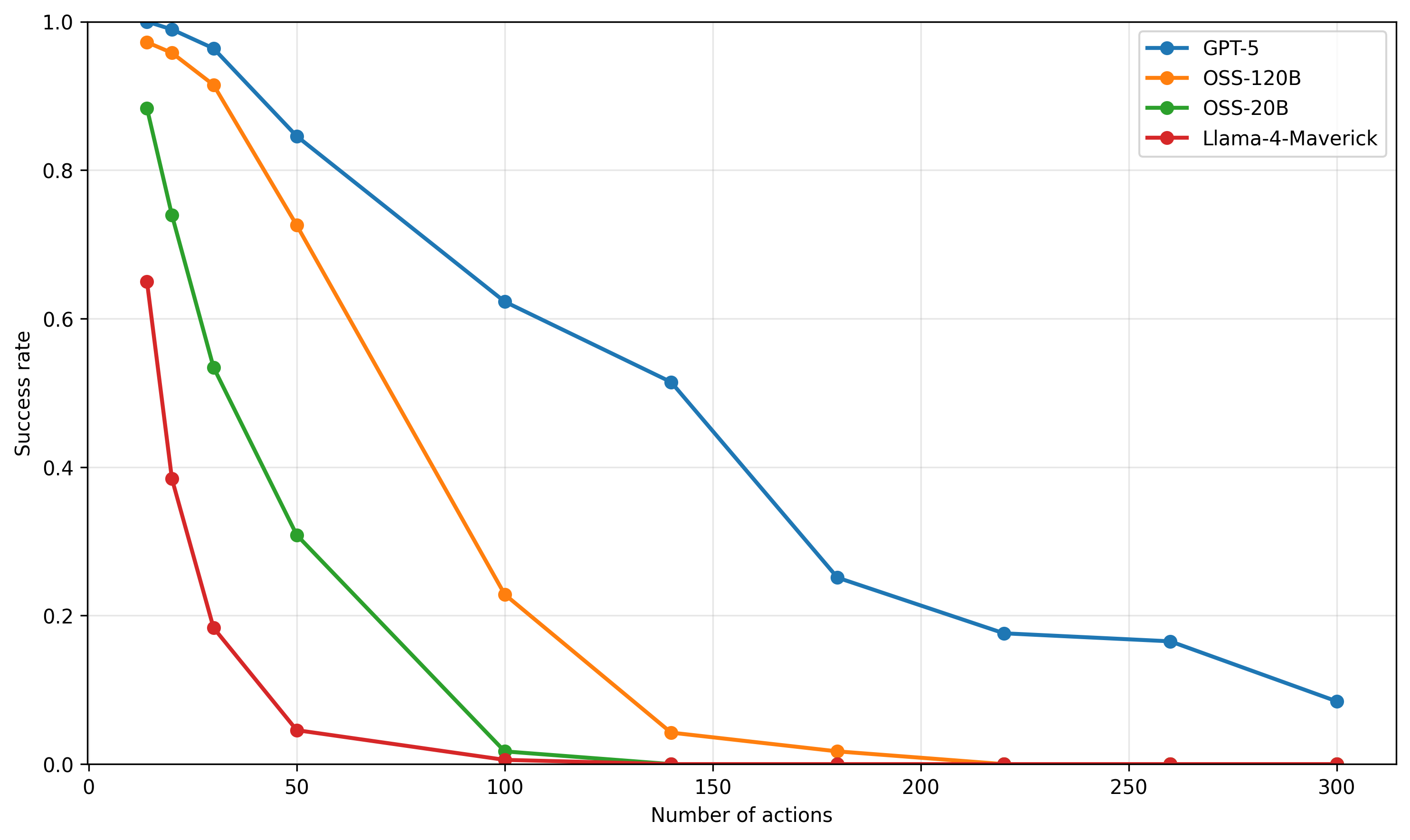

## Line Chart: Success Rate vs. Number of Actions

### Overview

This image displays a 2D line chart illustrating the relationship between "Success rate" (Y-axis) and "Number of actions" (X-axis) for four different models or systems: GPT-5, OSS-120B, OSS-20B, and Llama-4-Maverick. Each line represents a different model, showing how its success rate decreases as the number of actions increases. The chart uses a white background with a light grey grid for readability.

### Components/Axes

The chart is structured with a horizontal X-axis at the bottom and a vertical Y-axis on the left.

* **X-axis (Horizontal)**: Labeled "Number of actions".

* Range: From 0 to 300.

* Major ticks are marked at 0, 50, 100, 150, 200, 250, and 300.

* Minor grid lines are visible, suggesting intervals of 25 units.

* **Y-axis (Vertical)**: Labeled "Success rate".

* Range: From 0.0 to 1.0.

* Major ticks are marked at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* Minor grid lines are visible, suggesting intervals of 0.1 units.

* **Grid**: Light grey horizontal and vertical grid lines extend across the plotting area, aiding in data point estimation.

* **Legend**: Located in the top-right corner of the chart. It identifies the four data series by color and marker type (all using circular markers).

* **Blue line with circle marker**: GPT-5

* **Orange line with circle marker**: OSS-120B

* **Green line with circle marker**: OSS-20B

* **Red line with circle marker**: Llama-4-Maverick

### Detailed Analysis

The chart presents four distinct data series, each showing a generally decreasing trend in success rate as the number of actions increases.

1. **GPT-5 (Blue Line with Circle Markers)**:

* **Trend**: This line starts with the highest success rate and shows the most gradual decline among all models. It maintains a relatively high success rate for a larger number of actions before its decline steepens slightly and then flattens out at lower success rates.

* **Data Points**:

* At approximately 10 actions, the success rate is 1.0.

* At approximately 25 actions, the success rate is around 0.95.

* At 50 actions, the success rate is approximately 0.85.

* At 100 actions, the success rate is around 0.62.

* At approximately 140 actions, the success rate is about 0.52.

* At approximately 180 actions, the success rate is around 0.25.

* At approximately 220 actions, the success rate is about 0.18.

* At approximately 260 actions, the success rate is around 0.17.

* At 300 actions, the success rate is approximately 0.08.

2. **OSS-120B (Orange Line with Circle Markers)**:

* **Trend**: This line starts with a high success rate, slightly below GPT-5, and exhibits a steeper initial decline. Its success rate drops significantly faster than GPT-5, approaching zero around 200 actions.

* **Data Points**:

* At approximately 10 actions, the success rate is around 0.95.

* At approximately 25 actions, the success rate is about 0.90.

* At 50 actions, the success rate is approximately 0.72.

* At 100 actions, the success rate is around 0.23.

* At approximately 140 actions, the success rate is about 0.05.

* At approximately 180 actions, the success rate is around 0.01.

* From approximately 220 actions onwards, the success rate is effectively 0.00.

3. **OSS-20B (Green Line with Circle Markers)**:

* **Trend**: This line starts with a lower success rate compared to GPT-5 and OSS-120B and shows a very rapid decline. Its success rate drops to near zero much faster than the previous two models, reaching this point before 150 actions.

* **Data Points**:

* At approximately 10 actions, the success rate is around 0.88.

* At approximately 25 actions, the success rate is about 0.75.

* At approximately 40 actions, the success rate is around 0.55.

* At 50 actions, the success rate is approximately 0.31.

* At 100 actions, the success rate is around 0.01.

* From approximately 140 actions onwards, the success rate is effectively 0.00.

4. **Llama-4-Maverick (Red Line with Circle Markers)**:

* **Trend**: This line starts with the lowest initial success rate among all models and demonstrates the steepest and fastest decline. Its success rate plummets to near zero very quickly, reaching this point well before 100 actions.

* **Data Points**:

* At approximately 10 actions, the success rate is around 0.65.

* At approximately 25 actions, the success rate is about 0.38.

* At approximately 40 actions, the success rate is around 0.18.

* At 50 actions, the success rate is approximately 0.05.

* At 100 actions, the success rate is around 0.01.

* From approximately 140 actions onwards, the success rate is effectively 0.00.

### Key Observations

* **Performance Hierarchy**: GPT-5 consistently outperforms all other models across the entire range of "Number of actions," maintaining the highest success rate.

* **Rate of Decline**: Llama-4-Maverick shows the most rapid degradation in success rate, followed by OSS-20B, then OSS-120B, and finally GPT-5, which has the most resilient performance.

* **Threshold for Zero Success**:

  * Llama-4-Maverick's success rate drops to near zero (about 0.05) by 50 actions and effectively 0.00 by 140 actions.

  * OSS-20B's success rate drops to near zero by 100 actions and effectively 0.00 by 140 actions.

  * OSS-120B's success rate drops to near zero by 180 actions and effectively 0.00 by 220 actions.

  * GPT-5's success rate remains above 0.05 even at 300 actions, indicating superior robustness.

* **Initial Performance**: While GPT-5 starts at a perfect 1.0 success rate, OSS-120B is very close at 0.95, and OSS-20B is also strong at 0.88 for 10 actions. Llama-4-Maverick starts significantly lower at 0.65.

### Interpretation

This chart likely demonstrates the robustness or capability of different models (GPT-5, OSS-120B, OSS-20B, Llama-4-Maverick) in performing a task as the complexity or length of the task (represented by "Number of actions") increases. The "Success rate" can be interpreted as the probability or percentage of successfully completing the task.

The data suggests that:

* **GPT-5 is the most capable and robust model** among those tested. It maintains a high success rate even when faced with a large number of actions, indicating superior long-term coherence, memory, or planning abilities for complex tasks.

* **Model size or architecture (implied by names like 120B, 20B, Llama-4)** appears to correlate with performance. OSS-120B (presumably a larger model than OSS-20B) performs better and degrades more slowly than OSS-20B. This aligns with common observations in AI where larger models often exhibit better performance and generalization.

* **Llama-4-Maverick is the least effective** for tasks involving a higher number of actions, with its performance rapidly deteriorating. This could imply limitations in its ability to handle sequential dependencies, maintain context, or plan over extended sequences.

* The steepness of the curves indicates how quickly a model's performance degrades under increasing task complexity. A flatter curve (like GPT-5) signifies greater resilience.

* The point at which each curve approaches zero success rate can be considered a practical limit for that model's utility in tasks requiring that many actions. For instance, Llama-4-Maverick is practically unusable for tasks exceeding 100 actions, whereas GPT-5 still offers a non-trivial success rate even at 300 actions.
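The idea that a flatter curve signifies greater resilience can be summarized with a single number per model. The sketch below computes the mean success rate over the plotted range using the trapezoid rule on the approximate data points listed in this analysis. Two things are assumptions made for illustration: the final `(300, 0.00)` points, which extend the "effectively 0.00 onwards" readings to the end of the axis, and the names `POINTS` and `mean_success_rate`.

```python
# Approximate (number of actions, success rate) readings from the analysis above.
POINTS = {
    "GPT-5": [(10, 1.0), (25, 0.95), (50, 0.85), (100, 0.62), (140, 0.52),
              (180, 0.25), (220, 0.18), (260, 0.17), (300, 0.08)],
    "OSS-120B": [(10, 0.95), (25, 0.90), (50, 0.72), (100, 0.23), (140, 0.05),
                 (180, 0.01), (220, 0.00), (300, 0.00)],  # (300, 0.00) assumed
    "OSS-20B": [(10, 0.88), (25, 0.75), (40, 0.55), (50, 0.31), (100, 0.01),
                (140, 0.00), (300, 0.00)],  # (300, 0.00) assumed
    "Llama-4-Maverick": [(10, 0.65), (25, 0.38), (40, 0.18), (50, 0.05),
                         (100, 0.01), (140, 0.00), (300, 0.00)],  # assumed
}


def mean_success_rate(points):
    """Area under the piecewise-linear curve divided by the x-range covered."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0  # trapezoid for one segment
    return area / (points[-1][0] - points[0][0])


if __name__ == "__main__":
    for model, pts in POINTS.items():
        print(f"{model}: {mean_success_rate(pts):.3f}")
```

A higher value means the model sustains its success rate over more of the 10 to 300 action range; the ranking, rather than the exact figures, is the meaningful part given how approximate the readings are.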

In essence, the chart provides a comparative benchmark of these models' ability to sustain performance under increasing operational demands, highlighting GPT-5's significant advantage in handling complex, multi-step tasks.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Success Rate vs. Number of Actions

### Overview

This line chart depicts the success rate of four different models (GPT-5, OSS-120B, OSS-20B, and Llama-4-Maverick) as a function of the number of actions taken. The success rate is plotted on the y-axis, ranging from 0 to 1.0, while the number of actions is plotted on the x-axis, ranging from 0 to 300. The chart illustrates how the performance of each model degrades as the number of actions increases.

### Components/Axes

* **X-axis Title:** "Number of actions"

* **Y-axis Title:** "Success rate"

* **Legend:** Located in the top-right corner of the chart.

* **GPT-5:** Blue line with circle markers.

* **OSS-120B:** Orange line with circle markers.

* **OSS-20B:** Teal line with circle markers.

* **Llama-4-Maverick:** Red line with circle markers.

* **Gridlines:** Present to aid in reading values.

* **Data Range (X-axis):** 0 to 300

* **Data Range (Y-axis):** 0 to 1.0

### Detailed Analysis

Here's a breakdown of each model's performance, with approximate values extracted from the chart:

* **GPT-5 (Blue):** The line starts at approximately 0.98 at 0 actions. It slopes downward, relatively slowly.

* At 50 actions: ~0.85

* At 100 actions: ~0.70

* At 150 actions: ~0.55

* At 200 actions: ~0.35

* At 250 actions: ~0.20

* At 300 actions: ~0.10

* **OSS-120B (Orange):** The line begins at approximately 0.80 at 0 actions and declines rapidly.

* At 50 actions: ~0.30

* At 100 actions: ~0.10

* At 150 actions: ~0.02

* From 150 to 300 actions: Remains very close to 0.

* **OSS-20B (Teal):** Starts at approximately 0.95 at 0 actions and declines at a moderate rate.

* At 50 actions: ~0.75

* At 100 actions: ~0.60

* At 150 actions: ~0.45

* At 200 actions: ~0.30

* At 250 actions: ~0.20

* At 300 actions: ~0.10

* **Llama-4-Maverick (Red):** Starts at approximately 0.40 at 0 actions and declines very rapidly.

* At 50 actions: ~0.05

* At 100 actions: ~0.01

* From 100 to 300 actions: Remains very close to 0.

### Key Observations

* GPT-5 exhibits the highest success rate across all action counts, demonstrating the most robust performance.

* Llama-4-Maverick has the lowest success rate, and its performance degrades extremely quickly with increasing actions.

* OSS-120B declines rapidly, while OSS-20B declines at a more moderate rate and, in the values listed above, stays above OSS-120B at every action count.

* All models experience a decrease in success rate as the number of actions increases, indicating a challenge in maintaining performance with complex tasks.

### Interpretation

The chart demonstrates the scalability and robustness of different language models in performing sequential tasks. The success rate is used as a metric to evaluate the model's ability to achieve a desired outcome after a series of actions. GPT-5 clearly outperforms the other models, suggesting it is better equipped to handle complex, multi-step processes. The rapid decline in performance for OSS-120B, OSS-20B, and especially Llama-4-Maverick indicates that these models struggle with tasks requiring a large number of coordinated actions. This could be due to limitations in their ability to maintain context, reason about long-term dependencies, or avoid accumulating errors over multiple steps. The data suggests that model size and architecture play a significant role in the ability to perform complex tasks effectively. The chart highlights the importance of considering the number of actions required for a task when selecting a language model.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: Success Rate vs. Number of Actions for AI Models

### Overview

The image is a line chart comparing the performance of four different AI models. It plots the "Success rate" (y-axis) against the "Number of actions" (x-axis), showing how each model's performance degrades as the task complexity (number of actions) increases. All data series show a downward trend.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**

* **Label:** "Number of actions"

* **Scale:** Linear, ranging from 0 to 300.

* **Major Ticks:** 0, 50, 100, 150, 200, 250, 300.

* **Y-Axis:**

* **Label:** "Success rate"

* **Scale:** Linear, ranging from 0.0 to 1.0.

* **Major Ticks:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend:** Located in the top-right corner of the chart area. It contains four entries, each with a colored line segment and a circular marker:

1. **Blue line with circle marker:** "GPT-5"

2. **Orange line with circle marker:** "OSS-120B"

3. **Green line with circle marker:** "OSS-20B"

4. **Red line with circle marker:** "Llama-4-Maverick"

* **Grid:** A light gray grid is present, aligned with the major ticks on both axes.

### Detailed Analysis

The chart displays four distinct data series, each representing a model's success rate at different action counts. The following data points are approximate, read from the chart's grid.

**1. GPT-5 (Blue Line)**

* **Trend:** The line slopes downward consistently from left to right, indicating a steady decrease in success rate as the number of actions increases. It maintains the highest success rate among all models at every data point.

* **Approximate Data Points:**

* 0 actions: ~1.00

* ~25 actions: ~0.99

* ~35 actions: ~0.96

* 50 actions: ~0.85

* 100 actions: ~0.62

* 140 actions: ~0.51

* 180 actions: ~0.25

* 220 actions: ~0.18

* 260 actions: ~0.17

* 300 actions: ~0.08

**2. OSS-120B (Orange Line)**

* **Trend:** The line slopes downward, starting very high but declining more steeply than GPT-5. It crosses below the 0.5 success rate mark between 50 and 100 actions.

* **Approximate Data Points:**

* 0 actions: ~0.97

* ~25 actions: ~0.95

* ~35 actions: ~0.91

* 50 actions: ~0.72

* 100 actions: ~0.23

* 140 actions: ~0.04

* 180 actions: ~0.02

* 220 actions: ~0.00 (appears to be at or near zero)

* 260 actions: ~0.00

* 300 actions: ~0.00

**3. OSS-20B (Green Line)**

* **Trend:** The line shows a very steep initial decline, dropping below a 0.5 success rate before 50 actions. It approaches zero success rate by 100 actions.

* **Approximate Data Points:**

* 0 actions: ~0.88

* ~20 actions: ~0.74

* ~35 actions: ~0.53

* 50 actions: ~0.31

* 100 actions: ~0.02

* 140 actions: ~0.00

* 180 actions: ~0.00

* 220 actions: ~0.00

* 260 actions: ~0.00

* 300 actions: ~0.00

**4. Llama-4-Maverick (Red Line)**

* **Trend:** The line exhibits the most severe and rapid decline. It starts at the lowest initial success rate and plummets to near-zero performance by 50 actions.

* **Approximate Data Points:**

* 0 actions: ~0.65

* ~20 actions: ~0.39

* ~35 actions: ~0.18

* 50 actions: ~0.05

* 100 actions: ~0.01

* 140 actions: ~0.00

* 180 actions: ~0.00

* 220 actions: ~0.00

* 260 actions: ~0.00

* 300 actions: ~0.00

### Key Observations

1. **Universal Negative Correlation:** All four models demonstrate a clear negative correlation between the number of actions and success rate. Performance universally degrades with increased task length/complexity.

2. **Performance Hierarchy:** A consistent performance hierarchy is maintained across the entire range: GPT-5 > OSS-120B > OSS-20B > Llama-4-Maverick.

3. **Divergence in Decay Rates:** The models differ significantly in how quickly their performance decays. GPT-5 has the most gradual slope, while Llama-4-Maverick has the steepest.

4. **Convergence to Zero:** Three of the four models (OSS-120B, OSS-20B, Llama-4-Maverick) reach a success rate at or near zero by 100-150 actions. GPT-5 is the only model that maintains a measurable, albeit low, success rate (≈0.08) at 300 actions.

5. **Initial Performance Gap:** There is a significant spread in initial success rates (at 0 actions), ranging from ~0.65 (Llama-4-Maverick) to ~1.00 (GPT-5).
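The performance hierarchy in observation 2 can be checked mechanically against the approximate data points listed in this analysis. The sketch below uses only the action counts that all four series share (0, 50, 100, 140, 180, 220, 260, 300) and treats ties at 0.00 as consistent with the ordering; the names `RATES`, `ORDER`, and `hierarchy_holds` are illustrative.

```python
ACTIONS = [0, 50, 100, 140, 180, 220, 260, 300]

# Approximate success rates at each entry of ACTIONS, from the lists above.
RATES = {
    "GPT-5":            [1.00, 0.85, 0.62, 0.51, 0.25, 0.18, 0.17, 0.08],
    "OSS-120B":         [0.97, 0.72, 0.23, 0.04, 0.02, 0.00, 0.00, 0.00],
    "OSS-20B":          [0.88, 0.31, 0.02, 0.00, 0.00, 0.00, 0.00, 0.00],
    "Llama-4-Maverick": [0.65, 0.05, 0.01, 0.00, 0.00, 0.00, 0.00, 0.00],
}

ORDER = ["GPT-5", "OSS-120B", "OSS-20B", "Llama-4-Maverick"]


def hierarchy_holds(rates, order):
    """True if each model is at least as good as the next one at every point."""
    for better, worse in zip(order, order[1:]):
        if any(b < w for b, w in zip(rates[better], rates[worse])):
            return False
    return True


if __name__ == "__main__":
    print(hierarchy_holds(RATES, ORDER))
```

Note that the ordering is strict only at lower action counts; from 220 actions onward the three lower series are all read as approximately 0.00.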

### Interpretation

This chart likely illustrates the results of a benchmark evaluating AI models on sequential decision-making or multi-step reasoning tasks. The "Number of actions" represents the length or complexity of the task sequence required for completion.

* **What the data suggests:** The data strongly suggests that maintaining performance over long action sequences is a major challenge for current AI models. The ability to handle extended context or maintain coherence over many steps appears to be a key differentiator between models, with GPT-5 showing significantly greater robustness.

* **Relationship between elements:** The x-axis (complexity) is the independent variable causing the change in the y-axis (performance). The different colored lines represent different model architectures or sizes, isolating the variable of model capability. The steepness of each line is a direct visual measure of that model's "contextual robustness" or "planning horizon."

* **Notable patterns and anomalies:**

* The most striking pattern is the **exponential-like decay** for OSS-20B and Llama-4-Maverick, suggesting a critical failure point is reached relatively early in the action sequence.

* The **near-perfect initial performance** of GPT-5 and OSS-120B at 0 actions indicates they can solve the base task flawlessly, but the challenge lies entirely in scaling that success.

* The **plateauing of GPT-5's curve** between 220-260 actions (≈0.18 to ≈0.17) before a final drop is a minor anomaly that could indicate a subset of tasks solvable within that action range or a measurement artifact.
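To probe the "exponential-like decay" description, one option is a least-squares fit of a straight line to the logarithm of the success rate. The sketch below does this for the non-zero OSS-20B readings listed in this analysis. The functional form `y = a * exp(b * x)` is an assumption made for illustration, and the helper names are hypothetical; the chart itself does not state that the decay is exponential.

```python
import math

# Non-zero approximate OSS-20B readings from the list above.
OSS_20B = [(0, 0.88), (20, 0.74), (35, 0.53), (50, 0.31), (100, 0.02)]


def fit_exponential(points):
    """Fit y = a * exp(b * x) by ordinary least squares on ln(y).

    Points with y <= 0 are skipped because their logarithm is undefined.
    Returns the pair (a, b).
    """
    usable = [(x, math.log(y)) for x, y in points if y > 0]
    n = len(usable)
    mean_x = sum(x for x, _ in usable) / n
    mean_ly = sum(ly for _, ly in usable) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in usable)
    sxy = sum((x - mean_x) * (ly - mean_ly) for x, ly in usable)
    b = sxy / sxx
    a = math.exp(mean_ly - b * mean_x)
    return a, b


def half_life(b):
    """Number of actions over which the fitted success rate halves (b < 0)."""
    return math.log(2) / -b


if __name__ == "__main__":
    a, b = fit_exponential(OSS_20B)
    print(f"a = {a:.3f}, b = {b:.4f}, half-life = {half_life(b):.1f} actions")
```

A fitted half-life gives a compact way to compare decay rates between models, but with only a handful of visually estimated points the fit should be read as indicative at best.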

In summary, the chart provides a clear, quantitative comparison showing that while all models struggle with longer tasks, there is a substantial performance gap, with larger or more advanced models (like GPT-5) demonstrating a markedly superior ability to sustain performance as task complexity grows.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Success Rate vs. Number of Actions

### Overview

The chart illustrates the success rate of four AI models (GPT-5, OSS-120B, OSS-20B, Llama-4-Maverick) as the number of actions increases from 0 to 300. Success rate is plotted on the y-axis (0–1.0), while the x-axis represents the number of actions. All models show declining success rates with increasing actions, but the rate of decline varies significantly.

### Components/Axes

- **X-axis**: "Number of actions" (0–300, linear scale).

- **Y-axis**: "Success rate" (0–1.0, linear scale).

- **Legend**: Located in the top-right corner, mapping colors to models:

- Blue: GPT-5

- Orange: OSS-120B

- Green: OSS-20B

- Red: Llama-4-Maverick

### Detailed Analysis

1. **GPT-5 (Blue Line)**:

   - Starts at **1.0 success rate** at 0 actions.

   - Declines steadily, reaching **~0.62 at 100 actions**, **~0.25 at 200 actions**, and **~0.08 at 300 actions**.

   - Maintains the highest success rate throughout.

2. **OSS-120B (Orange Line)**:

   - Begins at **~0.98 at 0 actions**.

   - Drops sharply to **~0.22 at 100 actions**, then plateaus near **0.01** by 300 actions.

   - Steeper decline than GPT-5, and starts slightly lower.

3. **OSS-20B (Green Line)**:

   - Starts at **~0.88 at 0 actions**.

   - Declines sharply to **~0.02 at 100 actions**, then near **0.0** by 300 actions.

   - Faster decline than OSS-120B, and a lower initial success rate.

4. **Llama-4-Maverick (Red Line)**:

   - Begins at **~0.65 at 0 actions**.

   - Plummets to **~0.0** by 50 actions, remaining at 0 thereafter.

   - Most drastic decline, with no success beyond 50 actions.

### Key Observations

- **GPT-5** consistently outperforms all models across all action counts.

- **OSS-120B** and **OSS-20B** show similar initial performance but diverge in decline rate.

- **Llama-4-Maverick** fails catastrophically after 50 actions, suggesting poor scalability.

- No lines intersect, indicating no model overtakes another in performance.

### Interpretation

The data suggests **GPT-5** is the most robust model for handling increasing action counts, possibly due to architectural advantages or differences in training. The OSS models (120B and 20B) degrade more rapidly, and Llama-4-Maverick's abrupt failure points to a limited ability to sustain long action sequences. The trends also suggest that parameter count alone does not determine robustness: OSS-120B outlasts OSS-20B, yet both fall well short of GPT-5 at higher action counts.
