## Diagram: Coconut (k=1) and Coconut (k=2) Thought Processes

### Overview

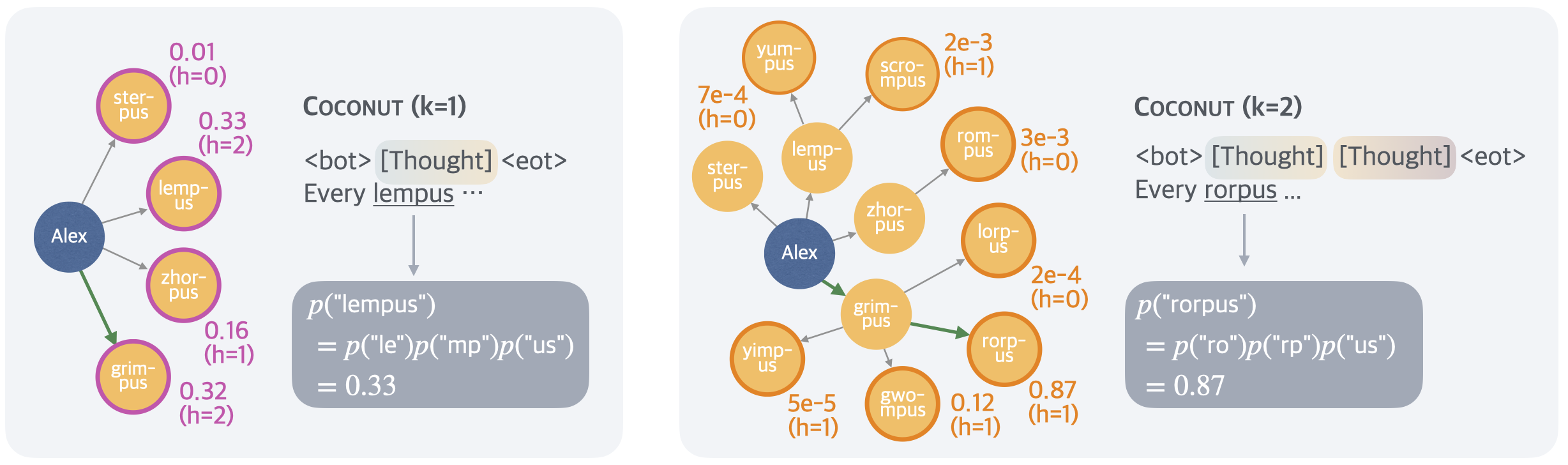

The image depicts two diagrams illustrating thought processes labeled "Coconut (k=1)" and "Coconut (k=2)". Both diagrams show a network of interconnected nodes representing words or concepts, with probabilities associated with transitions between them. The diagrams appear to model a generative process, starting with "Alex" and progressing through a sequence of words.

### Components/Axes

Each diagram consists of:

* **Nodes:** Represented by yellow circles, labeled with words like "ster-pus", "lemp-us", "zhor-pus", "pus", "yum-pus", "scro-mpus", "rom-pus", "lorp-us", "grim-pus", "gwo-mpus", "rorp-us".

* **Edges:** Arrows connecting the nodes, labeled with probabilities (e.g., "0.01", "0.33", "0.16", "0.32", "2e-3", "7e-4", "3e-3", "2e-4", "5e-5", "0.12", "0.87"). Each edge also has a value in parentheses (h=0, h=1, h=2).

* **Starting Node:** "Alex" is the initial node in both diagrams.

* **Text Blocks:** Each diagram contains a text block with a probability equation and a final probability value.

* **Thought Blocks:** Each diagram contains a text block labeled `<bot> [Thought] <eot>`, followed by a sentence.

### Detailed Analysis

**Coconut (k=1) - Left Diagram**

* **Starting Point:** "Alex"

* **Transitions:**

  * Alex -> ster-pus: Probability = 0.01 (h=0)

  * Alex -> lemp-us: Probability = 0.33 (h=2)

  * Alex -> zhor-pus: Probability = 0.16 (h=1)

  * Alex -> pus: Probability = 0.32 (h=2)

* **Text Block 1:** `p("lempus") = p("le")p("mp")p("us") = 0.33`

* **Thought Block:** `<bot> [Thought] <eot> Every lempus ...`

**Coconut (k=2) - Right Diagram**

* **Starting Point:** "Alex"

* **Transitions:**

  * Alex -> yum-pus: Probability = 2e-3 (h=1)

  * Alex -> scro-mpus: Probability = 7e-4 (h=0)

  * Alex -> lemp-us: Probability = 3e-3 (h=0)

  * Alex -> zhor-pus: Probability = 2e-4 (h=0)

  * Alex -> grim-pus: Probability = 5e-5 (h=1)

  * Alex -> gwo-mpus: Probability = 0.12 (h=1)

  * Alex -> rorp-us: Probability = 0.87 (h=1)

* **Text Block 2:** `p("rorpus") = p("ro")p("rp")p("us") = 0.87`

* **Thought Block:** `<bot> [Thought] <eot> Every rorpus ...`
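
As a quick consistency check on the values transcribed above, the following Python sketch stores the listed edge probabilities for each diagram and looks up the largest one. The names `K1_EDGES`, `K2_EDGES`, and `top_candidate` are hypothetical, and treating the hyphens in node labels as token boundaries is an assumption. The sketch only confirms that the word in each text block is the highest-probability node listed; it does not claim to reproduce how the model selects a word.

```python
# Edge probabilities transcribed from the two diagrams described above.
K1_EDGES = {"ster-pus": 0.01, "lemp-us": 0.33, "zhor-pus": 0.16, "pus": 0.32}
K2_EDGES = {
    "yum-pus": 2e-3, "scro-mpus": 7e-4, "lemp-us": 3e-3, "zhor-pus": 2e-4,
    "grim-pus": 5e-5, "gwo-mpus": 0.12, "rorp-us": 0.87,
}

def top_candidate(edges):
    """Return (word, probability) for the highest-probability node.

    Hyphens are assumed to mark token boundaries and are removed
    to recover the full word.
    """
    label = max(edges, key=edges.get)
    return label.replace("-", ""), edges[label]

print(top_candidate(K1_EDGES))  # ('lempus', 0.33)
print(top_candidate(K2_EDGES))  # ('rorpus', 0.87)
```

Both results match the words named in Text Block 1 and Text Block 2.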

### Key Observations

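* Most probabilities in the "Coconut (k=2)" diagram (5e-5 to 3e-3) are orders of magnitude smaller than those in the "Coconut (k=1)" diagram (0.01 to 0.33). The exceptions are gwo-mpus (0.12) and rorp-us (0.87), so the k=2 probability mass is concentrated on far fewer nodes.

* The "Coconut (k=2)" diagram shows a more complex network, with seven listed transitions versus four in the k=1 diagram.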

* The text blocks show a decomposition of the probability of a word into the probabilities of its constituent parts (e.g., "le", "mp", "us").

* The "Thought Blocks" suggest that the diagrams represent a generative model for creating sentences.

* The (h=0), (h=1), and (h=2) values associated with each edge are not explained.

### Interpretation

The diagrams illustrate a probabilistic model for generating language, showing how a sequence of words can be produced from a starting point ("Alex") according to probabilistic rules. They may represent a simplified view of a neural network or a Markov chain. The "Coconut (k=1)" and "Coconut (k=2)" labels likely refer to different model configurations or levels of complexity; k could be, for example, the order of a Markov chain or the number of layers in a neural network, but the image does not say. The probabilities on the edges represent the likelihood of transitioning from one word to another, and the (h=0), (h=1), and (h=2) values could represent hidden states or some other internal parameter of the model.

The text blocks compute the probability of a word as the product of the probabilities of its components. The "Thought Blocks" suggest that the model can generate coherent, if simple, sentences. The difference in probability scales between the two diagrams suggests that the k=2 model is more constrained or requires more specific conditions to generate output.
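
The word-level probability in the text blocks is written as a product of per-token probabilities, e.g. `p("lempus") = p("le")p("mp")p("us")`. A minimal Python sketch of that arithmetic follows. The function names are hypothetical, and the per-token values in the example are invented for illustration only: the image reports just the final products (0.33 and 0.87), not the individual factors.

```python
import math

def word_probability(token_probs):
    """Probability of a word as the product of its token probabilities."""
    return math.prod(token_probs)

def word_log_probability(token_probs):
    """Same quantity in log space, which avoids underflow for long sequences."""
    return sum(math.log(p) for p in token_probs)

# Hypothetical per-token probabilities; NOT taken from the figure.
example_tokens = [0.5, 0.8, 0.9]

p = word_probability(example_tokens)
log_p = word_log_probability(example_tokens)

print(round(p, 4))                     # product of the three factors, about 0.36
print(math.isclose(math.exp(log_p), p))  # the two forms agree
```

Summing log-probabilities is the usual choice when many small factors are multiplied, since values such as the 5e-5 seen in the k=2 diagram shrink quickly under repeated multiplication.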