## Diagram: Agent-Environment Interaction

### Overview

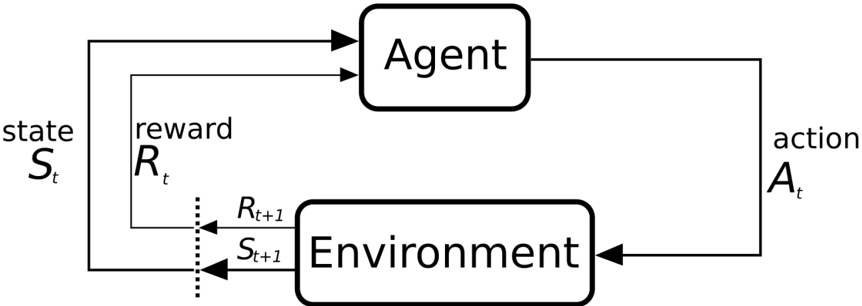

The image is a diagram illustrating the interaction between an agent and its environment in a reinforcement learning setting. It depicts a cyclical flow of information between the agent and the environment, showing how the agent's actions influence the environment's state and how the environment provides feedback to the agent in the form of rewards and updated states.

### Components

* **Agent:** A rectangular box with rounded corners labeled "Agent" at the top center of the diagram.

* **Environment:** A rectangular box with rounded corners labeled "Environment" at the bottom center of the diagram.

* **Action (*A<sub>t</sub>*):** Located on the right side of the diagram. The agent sends this action to the environment.

* **State (*S<sub>t</sub>*):** Located on the left side of the diagram. The environment sends this state to the agent.

* **Reward (*R<sub>t</sub>*):** Located on the left side of the diagram. The environment provides this reward to the agent.

* **Next reward and state (*R<sub>t+1</sub>*, *S<sub>t+1</sub>*):** Located between the agent and the environment, indicating the reward and state at the next time step.

### Detailed Analysis

The diagram illustrates the following flow:

1. The agent receives the current state (*S<sub>t</sub>*) and reward (*R<sub>t</sub>*) from the environment.

2. Based on this information, the agent takes an action (*A<sub>t</sub>*).

3. The action is sent to the environment.

4. The environment updates its state based on the agent's action and provides a new state (*S<sub>t+1</sub>*) and reward (*R<sub>t+1</sub>*) to the agent.

5. This cycle repeats.

The arrows indicate the direction of information flow. The arrow from the Agent to the Environment is labeled "action *A<sub>t</sub>*". The arrows from the Environment to the Agent are labeled "state *S<sub>t</sub>*" and "reward *R<sub>t</sub>*". There are also dotted arrows from the environment to the agent labeled *R<sub>t+1</sub>* and *S<sub>t+1</sub>*.
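The flow described above can be sketched as a short loop. This is a minimal illustration, not a reference implementation: `CorridorEnv`, `RandomAgent`, and `run_episode` are hypothetical names, the corridor task and its reward of 1.0 at the goal are invented for the example, and treating the reward before the first action as 0.0 is an assumption.

```python
import random
from typing import List, Tuple


class CorridorEnv:
    """Hypothetical toy environment: positions 0..length-1, goal at the right end."""

    def __init__(self, length: int = 5) -> None:
        self.length = length
        self.position = 0

    def reset(self) -> int:
        self.position = 0
        return self.position  # initial state S_0

    def step(self, action: int) -> Tuple[int, float, bool]:
        # action is -1 (left) or +1 (right); clamp to the corridor bounds
        self.position = max(0, min(self.length - 1, self.position + action))
        done = self.position == self.length - 1
        reward = 1.0 if done else 0.0
        return self.position, reward, done  # S_{t+1}, R_{t+1}, end-of-episode flag


class RandomAgent:
    """Hypothetical agent that ignores its inputs and moves at random."""

    def __init__(self, seed: int = 0) -> None:
        self.rng = random.Random(seed)

    def act(self, state: int, reward: float) -> int:
        return self.rng.choice([-1, 1])


def run_episode(env, agent, max_steps: int = 1000) -> List[Tuple[int, int, float, int]]:
    state = env.reset()
    reward = 0.0  # assumption: no reward precedes the first action
    trajectory = []
    for _ in range(max_steps):
        action = agent.act(state, reward)                 # agent picks A_t from S_t, R_t
        next_state, next_reward, done = env.step(action)  # environment returns S_{t+1}, R_{t+1}
        trajectory.append((state, action, next_reward, next_state))
        state, reward = next_state, next_reward           # t+1 becomes the new t
        if done:
            break
    return trajectory


if __name__ == "__main__":
    for s, a, r, s_next in run_episode(CorridorEnv(), RandomAgent(seed=0)):
        print(f"S_t={s} A_t={a:+d} R_t+1={r} S_t+1={s_next}")
```

The line `state, reward = next_state, next_reward` is where the values labeled *t+1* in the diagram become the values labeled *t* for the next pass through the loop.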

### Key Observations

* The diagram clearly shows the cyclical nature of the agent-environment interaction.

* The agent's actions directly influence the environment's state.

* The environment provides feedback to the agent in the form of rewards and updated states.

* The use of *t* and *t+1* subscripts indicates the temporal aspect of the interaction, highlighting the sequential nature of actions, states, and rewards.

### Interpretation

The diagram represents a fundamental concept in reinforcement learning. It illustrates how an agent learns to interact with an environment by taking actions and observing the resulting rewards and state changes. The agent's goal is to learn a policy that maximizes its cumulative reward over time. The diagram emphasizes the importance of feedback loops in the learning process, where the agent's actions influence the environment, and the environment's response shapes the agent's future actions. The dotted line indicates the transition from the current time step (*t*) to the next time step (*t+1*), emphasizing the dynamic and iterative nature of the interaction.
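"Cumulative reward" can be made concrete with a small helper. The sketch below computes the discounted return, one common formalization; the function name `discounted_return` and the discount factor `gamma` are assumptions introduced here and do not appear in the diagram. With `gamma=1.0` the result is the plain sum of rewards.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float = 1.0) -> float:
    """Return R_1 + gamma * R_2 + gamma^2 * R_3 + ... for one episode's rewards."""
    total = 0.0
    # Accumulate from the last reward backwards so each step needs one multiply.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


if __name__ == "__main__":
    print(discounted_return([0.0, 0.0, 1.0]))             # undiscounted sum
    print(discounted_return([0.0, 0.0, 1.0], gamma=0.5))  # later rewards count less
```

A policy that maximizes this quantity prefers reaching rewards sooner whenever `gamma` is below 1, since each step of delay scales the reward by another factor of `gamma`.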