
## Diagram: Reinforcement Learning Agent-Environment Interaction

### Overview

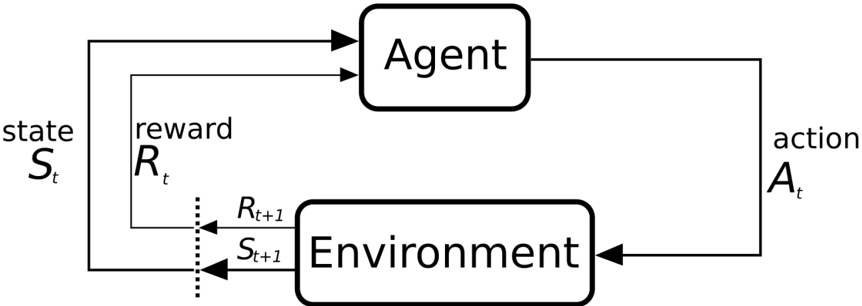

The diagram illustrates the interaction between an Agent and an Environment in a Reinforcement Learning (RL) system. It shows the flow of information (states, actions, and rewards) between these two core components, with arrows indicating the direction of each interaction.

### Components/Axes

The diagram consists of two main rectangular blocks labeled "Agent" (top) and "Environment" (bottom). Five key variables are labeled:

* **S<sub>t</sub>**: State at time t. Located on the left side of the diagram.

* **A<sub>t</sub>**: Action at time t. Located on the right side of the diagram.

* **R<sub>t</sub>**: Reward at time t. Located in the upper-left corner, within a rectangular box.

* **R<sub>t+1</sub>**: Reward at time t+1. Located in the lower-left corner, within a rectangular box.

* **S<sub>t+1</sub>**: State at time t+1. Located in the lower-left corner, within a rectangular box.

Arrows indicate the flow of information:

* From "Agent" to "Environment" labeled "action A<sub>t</sub>".

* From "Environment" to "Agent" labeled "state S<sub>t</sub>".

* From "Environment" to "Agent" labeled "reward R<sub>t</sub>".

* From "Environment" to "Agent" with a dotted line, indicating the next state S<sub>t+1</sub> and reward R<sub>t+1</sub>.

### Detailed Analysis or Content Details

The diagram illustrates a closed-loop system. The Agent receives a state (S<sub>t</sub>) from the Environment and, based on this state, takes an action (A<sub>t</sub>). This action affects the Environment, which transitions to a new state (S<sub>t+1</sub>) and provides a reward (R<sub>t+1</sub>) back to the Agent. Alongside S<sub>t</sub>, the Agent also receives the reward R<sub>t</sub>, which resulted from the previous state and action. The subscripts t and t+1 denote the current and next time steps, respectively.

The dotted line connecting the Environment to the Agent represents the transition to the next state and reward, indicating that the Agent receives information about the consequences of its action in the subsequent time step.
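The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not something taken from the diagram: `ToyEnvironment`, `RandomAgent`, and `run_episode` are hypothetical names, and the corridor task (five states, reward 1.0 at the last one) is an assumption chosen only to make the state, action, and reward flow visible.

```python
import random


class ToyEnvironment:
    """Hypothetical 1-D corridor with states 0..goal; reward 1.0 on reaching the goal."""

    def __init__(self, goal=4):
        self.goal = goal
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state  # initial state S_0

    def step(self, action):
        # action is -1 (left) or +1 (right); the state is clamped to the corridor.
        self.state = max(0, min(self.goal, self.state + action))
        done = self.state == self.goal
        reward = 1.0 if done else 0.0
        return self.state, reward, done  # S_{t+1}, R_{t+1}, episode-finished flag


class RandomAgent:
    """Hypothetical agent that ignores the state and picks a direction at random."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)

    def act(self, state):
        return self.rng.choice([-1, 1])  # A_t


def run_episode(env, agent, max_steps=1000):
    """Run one episode and return a list of (S_t, A_t, R_{t+1}, S_{t+1}) tuples."""
    trajectory = []
    state = env.reset()
    for _ in range(max_steps):
        action = agent.act(state)                    # Agent -> Environment: A_t
        next_state, reward, done = env.step(action)  # Environment -> Agent: S_{t+1}, R_{t+1}
        trajectory.append((state, action, reward, next_state))
        state = next_state                           # S_{t+1} becomes the next step's S_t
        if done:
            break
    return trajectory
```

Calling `run_episode(ToyEnvironment(), RandomAgent())` returns the sequence of transitions. Each tuple's last element equals the next tuple's first element, which is the hand-off from S<sub>t+1</sub> to S<sub>t</sub> that the diagram depicts.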

### Key Observations

The diagram emphasizes the sequential nature of the interaction between the Agent and the Environment. The Agent learns by receiving rewards and observing the resulting state transitions. The use of time indices (t and t+1) highlights the temporal aspect of the learning process.

### Interpretation

This diagram represents a fundamental concept in Reinforcement Learning: an agent learns to make decisions in an environment so as to maximize cumulative reward. The agent's goal is to learn a policy, a mapping from states to actions, that yields the highest possible long-term reward. The diagram highlights the core elements of an RL problem: the agent, the environment, states, actions, and rewards. The feedback loop is crucial, because the agent uses the rewards to adjust its policy and improve its performance over time. The dotted line most plausibly marks the boundary between time steps, where the S<sub>t+1</sub> and R<sub>t+1</sub> produced by the Environment become the S<sub>t</sub> and R<sub>t</sub> that the Agent receives next; it does not by itself imply that rewards are delayed. Delayed reward is nonetheless common in RL: the immediate reward may not reflect the long-term consequences of an action, so the agent must learn to anticipate future rewards.
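The idea of cumulative, long-term reward can be made concrete with a discounted return, one common way of summing the rewards that follow time step t. The sketch below is illustrative only: the discount factor `gamma` and the function name `discounted_return` are assumptions that do not appear in the diagram.

```python
def discounted_return(rewards, gamma=0.9):
    """Return R_{t+1} + gamma * R_{t+2} + gamma**2 * R_{t+3} + ... for a finite reward list.

    `rewards` holds the rewards received after time t, in order.
    `gamma` (assumed here, not shown in the diagram) weights later rewards less.
    """
    g = 0.0
    # Accumulate from the last reward backwards: G = r + gamma * G_next.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# A reward of 1.0 that arrives only on the fourth step still contributes to the
# return at the first step, scaled by gamma**3 (about 0.729 for gamma = 0.9).
delayed = discounted_return([0.0, 0.0, 0.0, 1.0], gamma=0.9)
```

An action whose immediate reward is zero can therefore still have a positive return, which is why an agent has to look beyond the reward it receives at the current step.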