TECHNICAL ASSET FINGERPRINT

2a194de2f3bf0f66f493b67c



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

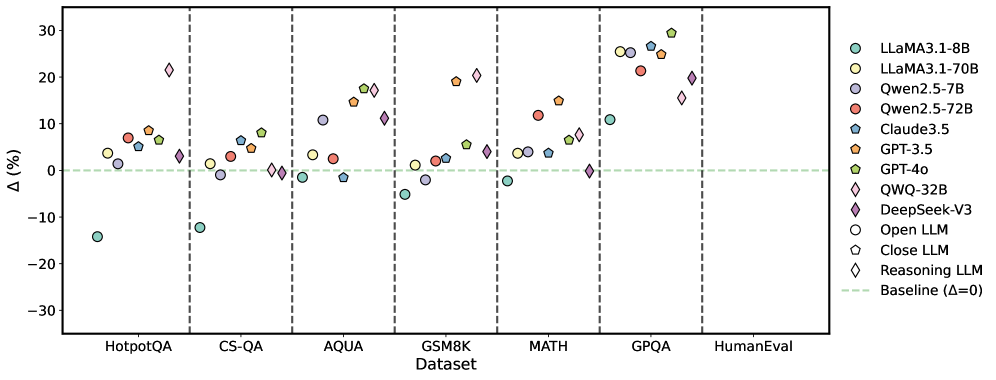

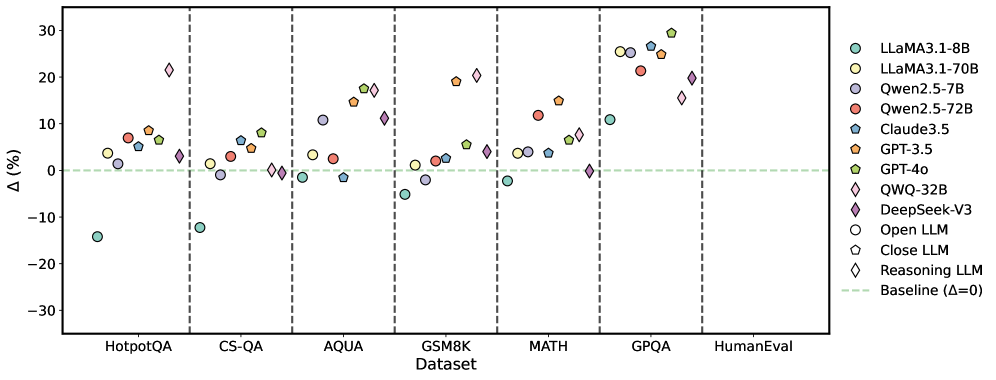

## Scatter Plot: LLM Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance of various Large Language Models (LLMs) across different datasets. The y-axis represents the percentage difference (Δ (%)), and the x-axis represents the datasets. Each LLM is represented by a unique color and marker. A horizontal dashed line indicates the baseline performance (Δ = 0).

### Components/Axes

* **X-axis:** Datasets: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval

* **Y-axis:** Δ (%) - Percentage difference, ranging from -30 to 30, with tick marks at -30, -20, -10, 0, 10, 20, and 30.

* **Legend:** Located on the right side of the plot, mapping colors and markers to specific LLMs:

* Light Blue Circle: LLaMA3.1-8B

* Yellow Circle: LLaMA3.1-70B

* Dark Blue Circle: Qwen2.5-7B

* Red Circle: Qwen2.5-72B

* Teal Pentagon: Claude3.5

* Orange Pentagon: GPT-3.5

* Green Pentagon: GPT-4o

* Light Blue Diamond: QWQ-32B

* Purple Diamond: DeepSeek-V3

* White Circle: Open LLM

* White Pentagon: Close LLM

* White Diamond: Reasoning LLM

* Light Green Dashed Line: Baseline (Δ=0)

### Detailed Analysis

**LLaMA3.1-8B (Light Blue Circle):**

* HotpotQA: Approximately -14%

* CS-QA: Approximately 0%

* AQUA: Approximately 0%

* GSM8K: Approximately -5%

* MATH: Approximately 0%

* GPQA: Approximately 1%

* HumanEval: Approximately -1%

**LLaMA3.1-70B (Yellow Circle):**

* HotpotQA: Approximately 7%

* CS-QA: Approximately 2%

* AQUA: Approximately 15%

* GSM8K: Approximately 2%

* MATH: Approximately 3%

* GPQA: Approximately 2%

* HumanEval: Approximately 5%

**Qwen2.5-7B (Dark Blue Circle):**

* HotpotQA: Approximately 3%

* CS-QA: Approximately 0%

* AQUA: Approximately 1%

* GSM8K: Approximately 3%

* MATH: Approximately 1%

* GPQA: Approximately 3%

* HumanEval: Approximately 3%

**Qwen2.5-72B (Red Circle):**

* HotpotQA: Approximately 8%

* CS-QA: Approximately 4%

* AQUA: Approximately 3%

* GSM8K: Approximately 4%

* MATH: Approximately 4%

* GPQA: Approximately 4%

* HumanEval: Approximately 4%

**Claude3.5 (Teal Pentagon):**

* HotpotQA: Approximately 5%

* CS-QA: Approximately 2%

* AQUA: Approximately 1%

* GSM8K: Approximately 3%

* MATH: Approximately 3%

* GPQA: Approximately 5%

* HumanEval: Approximately 6%

**GPT-3.5 (Orange Pentagon):**

* HotpotQA: Approximately 9%

* CS-QA: Approximately 6%

* AQUA: Approximately 16%

* GSM8K: Approximately 12%

* MATH: Approximately 12%

* GPQA: Approximately 28%

* HumanEval: Approximately 6%

**GPT-4o (Green Pentagon):**

* HotpotQA: Approximately 6%

* CS-QA: Approximately 5%

* AQUA: Approximately 2%

* GSM8K: Approximately 5%

* MATH: Approximately 5%

* GPQA: Approximately 7%

* HumanEval: Approximately 7%

**QWQ-32B (Light Blue Diamond):**

* HotpotQA: Approximately 0%

* CS-QA: Approximately 3%

* AQUA: Approximately 18%

* GSM8K: Approximately 19%

* MATH: Approximately 19%

* GPQA: Approximately 29%

* HumanEval: Approximately 7%

**DeepSeek-V3 (Purple Diamond):**

* HotpotQA: Approximately 1%

* CS-QA: Approximately 11%

* AQUA: Approximately 12%

* GSM8K: Approximately 2%

* MATH: Approximately 2%

* GPQA: Approximately 22%

* HumanEval: Approximately 16%

**Open LLM (White Circle):**

* HotpotQA: Approximately 0%

* CS-QA: Approximately 0%

* AQUA: Approximately 0%

* GSM8K: Approximately 0%

* MATH: Approximately 0%

* GPQA: Approximately 1%

* HumanEval: Approximately 1%

**Close LLM (White Pentagon):**

* HotpotQA: Approximately 0%

* CS-QA: Approximately 0%

* AQUA: Approximately 0%

* GSM8K: Approximately 0%

* MATH: Approximately 0%

* GPQA: Approximately 0%

* HumanEval: Approximately 0%

**Reasoning LLM (White Diamond):**

* CS-QA: Approximately 3%

* AQUA: Approximately 3%

* GSM8K: Approximately 3%

* MATH: Approximately 3%

* GPQA: Approximately 3%

* HumanEval: Approximately 3%

### Key Observations

* LLaMA3.1-8B performs poorly on HotpotQA compared to other datasets.

* GPT-3.5 and QWQ-32B show high performance on GPQA.

* The performance of most models improves from HotpotQA to HumanEval.

* The "Reasoning LLM" consistently scores around 3% across all datasets.

* The "Close LLM" consistently scores around 0% across all datasets.

* The "Open LLM" consistently scores around 0% across all datasets.
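The GPQA observation above can be checked directly against the values listed in the Detailed Analysis. The following is a minimal sketch, assuming the approximate readings transcribed in this description; the names `gpqa_delta` and `top_k` are illustrative and not part of the source.

```python
# Approximate GPQA values (in %), copied from the per-model lists above.
gpqa_delta = {
    "LLaMA3.1-8B": 1,
    "LLaMA3.1-70B": 2,
    "Qwen2.5-7B": 3,
    "Qwen2.5-72B": 4,
    "Claude3.5": 5,
    "GPT-3.5": 28,
    "GPT-4o": 7,
    "QWQ-32B": 29,
    "DeepSeek-V3": 22,
}


def top_k(deltas, k):
    """Return the k (model, delta) pairs with the largest delta."""
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)[:k]


top_two = top_k(gpqa_delta, 2)
print(top_two)  # [('QWQ-32B', 29), ('GPT-3.5', 28)]
```

Since the inputs are visual estimates, the ranking is only as reliable as the readings themselves.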

### Interpretation

The scatter plot visualizes the relative performance of different LLMs on various benchmark datasets. The percentage difference (Δ) likely represents the improvement or decline in performance compared to a baseline model or a specific metric. The plot highlights the strengths and weaknesses of each model across different tasks. For example, LLaMA3.1-8B struggles with HotpotQA, while GPT-3.5 and QWQ-32B excel at GPQA. The consistent performance of the "Reasoning LLM" suggests it might be specifically designed for reasoning tasks, while the "Open LLM" and "Close LLM" may be base models or control groups. The general trend of increasing performance from HotpotQA to HumanEval could indicate the increasing complexity or relevance of these datasets.
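The description does not say how Δ (%) is computed. As a rough sketch, the two most common readings are shown below: an absolute difference in percentage points and a relative change against the baseline. Both function names and the example numbers are hypothetical and are not taken from the figure.

```python
def delta_points(score_pct, baseline_pct):
    """Absolute difference in percentage points (assumed reading #1)."""
    return score_pct - baseline_pct


def delta_relative(score_pct, baseline_pct):
    """Relative change as a percentage of the baseline (assumed reading #2)."""
    if baseline_pct == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return 100.0 * (score_pct - baseline_pct) / baseline_pct


# Hypothetical accuracies for illustration only, not read from the plot.
baseline, score = 50.0, 57.0
print(delta_points(score, baseline))    # 7.0
print(delta_relative(score, baseline))  # 14.0
```

Under either reading, a point on the dashed line (Δ = 0) means the model matches the baseline exactly.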


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Scatter Plot: Performance Comparison of Large Language Models

### Overview

This scatter plot compares the performance of several Large Language Models (LLMs) across seven different datasets. The y-axis represents the percentage difference (Δ (%)) in performance relative to a baseline, and the x-axis represents the dataset name. Each LLM is represented by a unique marker and color.

### Components/Axes

* **X-axis:** Dataset - with markers for HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, and HumanEval.

* **Y-axis:** Δ (%) - Percentage difference in performance. Scale ranges from approximately -30% to 30%.

* **Legend (Top-Right):**

* LLaMA3.1-8B (Light Blue Circle)

* LLaMA3.1-70B (Light Orange Circle)

* Qwen2.5-7B (Light Grey Circle)

* Qwen2.5-72B (Red Circle)

* Claude3.5 (Dark Turquoise Diamond)

* GPT-3.5 (Dark Orange Triangle)

* GPT-4o (Dark Green Square)

* QWQ-32B (Purple Diamond)

* DeepSeek-V3 (Dark Purple Hexagon)

* Open LLM (White Circle)

* Close LLM (Light Green Triangle)

* Reasoning LLM (Light Blue Diamond)

* Baseline (Δ=0) (Horizontal Dashed Green Line)

### Detailed Analysis

The plot shows the performance variation of each LLM across the datasets. The baseline is indicated by a horizontal dashed green line at Δ = 0%.

* **HotpotQA:**

* LLaMA3.1-8B: Approximately +5%

* LLaMA3.1-70B: Approximately +8%

* Qwen2.5-7B: Approximately +2%

* Qwen2.5-72B: Approximately +5%

* Claude3.5: Approximately +10%

* GPT-3.5: Approximately +5%

* GPT-4o: Approximately +10%

* QWQ-32B: Approximately +15%

* DeepSeek-V3: Approximately +10%

* Open LLM: Approximately -15%

* Close LLM: Approximately -10%

* Reasoning LLM: Approximately -5%

* **CS-QA:**

* LLaMA3.1-8B: Approximately -5%

* LLaMA3.1-70B: Approximately -2%

* Qwen2.5-7B: Approximately -8%

* Qwen2.5-72B: Approximately -5%

* Claude3.5: Approximately +5%

* GPT-3.5: Approximately +2%

* GPT-4o: Approximately +8%

* QWQ-32B: Approximately +10%

* DeepSeek-V3: Approximately +5%

* Open LLM: Approximately -10%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 0%

* **AQUA:**

* LLaMA3.1-8B: Approximately +5%

* LLaMA3.1-70B: Approximately +10%

* Qwen2.5-7B: Approximately +2%

* Qwen2.5-72B: Approximately +5%

* Claude3.5: Approximately +10%

* GPT-3.5: Approximately +5%

* GPT-4o: Approximately +15%

* QWQ-32B: Approximately +20%

* DeepSeek-V3: Approximately +10%

* Open LLM: Approximately -5%

* Close LLM: Approximately 0%

* Reasoning LLM: Approximately +5%

* **GSM8K:**

* LLaMA3.1-8B: Approximately 0%

* LLaMA3.1-70B: Approximately +5%

* Qwen2.5-7B: Approximately -5%

* Qwen2.5-72B: Approximately 0%

* Claude3.5: Approximately +10%

* GPT-3.5: Approximately +5%

* GPT-4o: Approximately +15%

* QWQ-32B: Approximately +15%

* DeepSeek-V3: Approximately +10%

* Open LLM: Approximately -10%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately +5%

* **MATH:**

* LLaMA3.1-8B: Approximately +5%

* LLaMA3.1-70B: Approximately +10%

* Qwen2.5-7B: Approximately -5%

* Qwen2.5-72B: Approximately 0%

* Claude3.5: Approximately +10%

* GPT-3.5: Approximately +5%

* GPT-4o: Approximately +20%

* QWQ-32B: Approximately +15%

* DeepSeek-V3: Approximately +10%

* Open LLM: Approximately -10%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately +5%

* **GPQA:**

* LLaMA3.1-8B: Approximately +10%

* LLaMA3.1-70B: Approximately +15%

* Qwen2.5-7B: Approximately +5%

* Qwen2.5-72B: Approximately +10%

* Claude3.5: Approximately +15%

* GPT-3.5: Approximately +10%

* GPT-4o: Approximately +25%

* QWQ-32B: Approximately +20%

* DeepSeek-V3: Approximately +15%

* Open LLM: Approximately 0%

* Close LLM: Approximately +5%

* Reasoning LLM: Approximately +10%

* **HumanEval:**

* LLaMA3.1-8B: Approximately +10%

* LLaMA3.1-70B: Approximately +15%

* Qwen2.5-7B: Approximately +5%

* Qwen2.5-72B: Approximately +10%

* Claude3.5: Approximately +15%

* GPT-3.5: Approximately +10%

* GPT-4o: Approximately +20%

* QWQ-32B: Approximately +15%

* DeepSeek-V3: Approximately +10%

* Open LLM: Approximately 0%

* Close LLM: Approximately +5%

* Reasoning LLM: Approximately +10%

### Key Observations

* GPT-4o consistently outperforms other models across all datasets, often achieving the highest percentage difference.

* QWQ-32B and DeepSeek-V3 generally perform well, often close to GPT-4o.

* Open LLMs and Close LLMs consistently underperform compared to the baseline on most datasets.

* The performance difference between LLaMA3.1-8B and LLaMA3.1-70B is noticeable, with the larger model generally performing better.

* Qwen2.5-7B consistently shows lower performance compared to Qwen2.5-72B.
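The two size-related observations above can be checked against the per-dataset values in this description's Detailed Analysis. The sketch below assumes those approximate readings; `DATASETS`, `size_gaps`, and the variable names are illustrative only.

```python
# Dataset order used for every list below.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

# Approximate values (in %) copied from the per-dataset lists above.
llama_8b = [5, -5, 5, 0, 5, 10, 10]
llama_70b = [8, -2, 10, 5, 10, 15, 15]
qwen_7b = [2, -8, 2, -5, -5, 5, 5]
qwen_72b = [5, -5, 5, 0, 0, 10, 10]


def size_gaps(small, large):
    """Per-dataset gap (large minus small), in percentage points."""
    return [l - s for s, l in zip(small, large)]


llama_gaps = size_gaps(llama_8b, llama_70b)
qwen_gaps = size_gaps(qwen_7b, qwen_72b)

for name, gaps in [("LLaMA 3.1", llama_gaps), ("Qwen2.5", qwen_gaps)]:
    print(name, dict(zip(DATASETS, gaps)), "larger always ahead:", all(g > 0 for g in gaps))
```

With these readings, the larger model leads its smaller sibling on every dataset in both families, by 3 to 5 points.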

### Interpretation

The data suggests that model size and architecture significantly impact performance on these datasets. GPT-4o's consistent superiority indicates a strong overall capability. The underperformance of Open LLMs and Close LLMs suggests they may require further development or fine-tuning to achieve competitive results. The consistent improvement from smaller to larger models within the same family (e.g., LLaMA3) highlights the benefits of scaling model size. The variation in performance across datasets indicates that different LLMs excel in different areas, suggesting the importance of selecting the appropriate model for a specific task. The baseline (Δ=0) provides a crucial reference point for evaluating the relative performance of each model. The spread of data points for each model indicates the variability in performance across the datasets, highlighting the need for robust evaluation across a diverse set of benchmarks.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Model Performance Δ (%) Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ, in percentage) of various large language models (LLMs) across seven different benchmark datasets. The plot uses distinct symbols and colors to represent different model families and types (Open, Closed, Reasoning). A horizontal dashed line at Δ=0 serves as the baseline.

### Components/Axes

* **X-Axis (Categorical):** Labeled "Dataset". The categories from left to right are:

1. HotpotQA

2. CS-QA

3. AQUA

4. GSM8K

5. MATH

6. GPQA

7. HumanEval

* **Y-Axis (Numerical):** Labeled "Δ (%)". The scale ranges from -30 to 30, with major tick marks at intervals of 10 (-30, -20, -10, 0, 10, 20, 30).

* **Legend (Top-Right):** Positioned in the top-right corner of the plot area. It defines the following model symbols and colors:

* **LLaMA3.1-8B:** Teal circle (○)

* **LLaMA3.1-70B:** Light green circle (○)

* **Qwen2.5-7B:** Purple circle (○)

* **Qwen2.5-72B:** Red circle (○)

* **Claude3.5:** Blue circle (○)

* **GPT-3.5:** Orange pentagon (⬠)

* **GPT-4o:** Green pentagon (⬠)

* **QWQ-32B:** Pink diamond (◇)

* **DeepSeek-V3:** Purple diamond (◇)

* **Open LLM:** Circle symbol (○) - *This is a category marker, not a specific model.*

* **Close LLM:** Pentagon symbol (⬠) - *This is a category marker, not a specific model.*

* **Reasoning LLM:** Diamond symbol (◇) - *This is a category marker, not a specific model.*

* **Baseline:** A horizontal, light green dashed line at Δ=0, labeled "Baseline (Δ=0)" in the legend.
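The legend's shape convention can be written as a small lookup that groups models by category. This is a minimal sketch that follows the legend exactly as transcribed in this description (including Claude3.5 as a circle, which other readings of the figure may not share); `MARKER_CATEGORY`, `MODEL_MARKER`, and `models_by_category` are illustrative names.

```python
from collections import defaultdict

# Shape-to-category convention from the three category markers in the legend.
MARKER_CATEGORY = {
    "circle": "Open LLM",
    "pentagon": "Close LLM",
    "diamond": "Reasoning LLM",
}

# Marker shape per model, as transcribed in the legend above.
MODEL_MARKER = {
    "LLaMA3.1-8B": "circle",
    "LLaMA3.1-70B": "circle",
    "Qwen2.5-7B": "circle",
    "Qwen2.5-72B": "circle",
    "Claude3.5": "circle",  # as transcribed here; treat with caution
    "GPT-3.5": "pentagon",
    "GPT-4o": "pentagon",
    "QWQ-32B": "diamond",
    "DeepSeek-V3": "diamond",
}


def models_by_category(model_marker, marker_category):
    """Group model names by the category implied by their marker shape."""
    groups = defaultdict(list)
    for model, marker in model_marker.items():
        groups[marker_category[marker]].append(model)
    return dict(groups)


groups = models_by_category(MODEL_MARKER, MARKER_CATEGORY)
for category, models in groups.items():
    print(category, models)
```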

### Detailed Analysis

Data points are plotted for each model within each dataset column. Approximate Δ (%) values are extracted below, grouped by dataset.

**1. HotpotQA**

* **Trend:** Most models show positive Δ, clustered between 0% and 10%. One significant outlier is below -10%.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ -14%

* LLaMA3.1-70B (Light Green ○): ~ +4%

* Qwen2.5-7B (Purple ○): ~ +1%

* Qwen2.5-72B (Red ○): ~ +7%

* Claude3.5 (Blue ○): ~ +6%

* GPT-3.5 (Orange ⬠): ~ +8%

* GPT-4o (Green ⬠): ~ +6%

* QWQ-32B (Pink ◇): ~ +22%

**2. CS-QA**

* **Trend:** Models are tightly clustered near the baseline, with most between -5% and +10%. One model is a clear outlier below -10%.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ -12%

* LLaMA3.1-70B (Light Green ○): ~ +1%

* Qwen2.5-7B (Purple ○): ~ -2%

* Qwen2.5-72B (Red ○): ~ +3%

* Claude3.5 (Blue ○): ~ +6%

* GPT-3.5 (Orange ⬠): ~ +5%

* GPT-4o (Green ⬠): ~ +8%

* DeepSeek-V3 (Purple ◇): ~ 0%

* QWQ-32B (Pink ◇): ~ 0%

**3. AQUA**

* **Trend:** A wider spread of performance. Several models show strong positive gains (10-20%), while others are near or slightly below baseline.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ -1%

* LLaMA3.1-70B (Light Green ○): ~ +4%

* Qwen2.5-7B (Purple ○): ~ +11%

* Qwen2.5-72B (Red ○): ~ +3%

* Claude3.5 (Blue ○): ~ -1%

* GPT-3.5 (Orange ⬠): ~ +15%

* GPT-4o (Green ⬠): ~ +18%

* DeepSeek-V3 (Purple ◇): ~ +11%

* QWQ-32B (Pink ◇): ~ +17%

**4. GSM8K**

* **Trend:** Mixed results. Some models show strong positive Δ (~20%), while others are negative or near zero.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ -5%

* LLaMA3.1-70B (Light Green ○): ~ +1%

* Qwen2.5-7B (Purple ○): ~ -2%

* Qwen2.5-72B (Red ○): ~ +2%

* Claude3.5 (Blue ○): ~ +3%

* GPT-3.5 (Orange ⬠): ~ +19%

* GPT-4o (Green ⬠): ~ +5%

* DeepSeek-V3 (Purple ◇): ~ +4%

* QWQ-32B (Pink ◇): ~ +20%

**5. MATH**

* **Trend:** Generally positive performance, with most models between 0% and +15%. One model is at the baseline.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ -2%

* LLaMA3.1-70B (Light Green ○): ~ +4%

* Qwen2.5-7B (Purple ○): ~ +4%

* Qwen2.5-72B (Red ○): ~ +12%

* Claude3.5 (Blue ○): ~ +6%

* GPT-3.5 (Orange ⬠): ~ +15%

* GPT-4o (Green ⬠): ~ +7%

* DeepSeek-V3 (Purple ◇): ~ 0%

* QWQ-32B (Pink ◇): ~ +8%

**6. GPQA**

* **Trend:** This dataset shows the highest and most consistently positive Δ values across nearly all models, with a cluster between +20% and +30%.

* **Data Points (Approximate):**

* LLaMA3.1-8B (Teal ○): ~ +11%

* LLaMA3.1-70B (Light Green ○): ~ +25%

* Qwen2.5-7B (Purple ○): ~ +26%

* Qwen2.5-72B (Red ○): ~ +21%

* Claude3.5 (Blue ○): ~ +27%

* GPT-3.5 (Orange ⬠): ~ +25%

* GPT-4o (Green ⬠): ~ +30%

* DeepSeek-V3 (Purple ◇): ~ +15%

* QWQ-32B (Pink ◇): ~ +20%

**7. HumanEval**

* **Trend:** Only two data points are visible, both showing positive Δ.

* **Data Points (Approximate):**

* GPT-4o (Green ⬠): ~ +20%

* QWQ-32B (Pink ◇): ~ +19%

### Key Observations

1. **Dataset Difficulty:** GPQA elicits the largest positive performance changes (Δ) across the board, suggesting it may be a benchmark where recent model improvements are most pronounced. Conversely, HotpotQA and CS-QA show more modest or even negative changes for some models.

2. **Model Performance:** GPT-4o (Green ⬠) and QWQ-32B (Pink ◇) are frequently among the top performers, often showing Δ > +15%. LLaMA3.1-8B (Teal ○) is the most consistent negative outlier, showing negative Δ in 5 out of 6 datasets where it appears.

3. **Model Type Trends:** "Reasoning LLMs" (Diamonds: QWQ-32B, DeepSeek-V3) generally show strong positive Δ, particularly on AQUA, GSM8K, and GPQA. "Closed LLMs" (Pentagons: GPT-3.5, GPT-4o) also show strong positive trends, especially on GPQA.

4. **Outliers:** The most significant negative outlier is LLaMA3.1-8B on HotpotQA (~ -14%). The most significant positive outlier is GPT-4o on GPQA (~ +30%).
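The dataset-level and per-model observations above can be reproduced from the approximate values listed in this description. The sketch below assumes those readings; `delta_by_dataset`, `mean_delta`, and the other names are illustrative.

```python
from statistics import mean

# Approximate Δ (%) per dataset and model, copied from the lists above.
delta_by_dataset = {
    "HotpotQA": {"LLaMA3.1-8B": -14, "LLaMA3.1-70B": 4, "Qwen2.5-7B": 1, "Qwen2.5-72B": 7,
                 "Claude3.5": 6, "GPT-3.5": 8, "GPT-4o": 6, "QWQ-32B": 22},
    "CS-QA": {"LLaMA3.1-8B": -12, "LLaMA3.1-70B": 1, "Qwen2.5-7B": -2, "Qwen2.5-72B": 3,
              "Claude3.5": 6, "GPT-3.5": 5, "GPT-4o": 8, "DeepSeek-V3": 0, "QWQ-32B": 0},
    "AQUA": {"LLaMA3.1-8B": -1, "LLaMA3.1-70B": 4, "Qwen2.5-7B": 11, "Qwen2.5-72B": 3,
             "Claude3.5": -1, "GPT-3.5": 15, "GPT-4o": 18, "DeepSeek-V3": 11, "QWQ-32B": 17},
    "GSM8K": {"LLaMA3.1-8B": -5, "LLaMA3.1-70B": 1, "Qwen2.5-7B": -2, "Qwen2.5-72B": 2,
              "Claude3.5": 3, "GPT-3.5": 19, "GPT-4o": 5, "DeepSeek-V3": 4, "QWQ-32B": 20},
    "MATH": {"LLaMA3.1-8B": -2, "LLaMA3.1-70B": 4, "Qwen2.5-7B": 4, "Qwen2.5-72B": 12,
             "Claude3.5": 6, "GPT-3.5": 15, "GPT-4o": 7, "DeepSeek-V3": 0, "QWQ-32B": 8},
    "GPQA": {"LLaMA3.1-8B": 11, "LLaMA3.1-70B": 25, "Qwen2.5-7B": 26, "Qwen2.5-72B": 21,
             "Claude3.5": 27, "GPT-3.5": 25, "GPT-4o": 30, "DeepSeek-V3": 15, "QWQ-32B": 20},
    "HumanEval": {"GPT-4o": 20, "QWQ-32B": 19},
}

# Mean Δ per dataset over the models that have a visible point.
mean_delta = {d: mean(list(v.values())) for d, v in delta_by_dataset.items()}
best_dataset = max(mean_delta, key=mean_delta.get)

# Datasets where LLaMA3.1-8B appears and falls below the baseline.
llama_seen = [d for d, v in delta_by_dataset.items() if "LLaMA3.1-8B" in v]
llama_negative = [d for d in llama_seen if delta_by_dataset[d]["LLaMA3.1-8B"] < 0]

print(best_dataset, round(mean_delta[best_dataset], 1))
print(len(llama_negative), "of", len(llama_seen))
```

With these readings, GPQA has the highest mean Δ, and LLaMA3.1-8B is negative on five of the six datasets where it appears.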

### Interpretation

This chart visualizes the **relative improvement or degradation (Δ)** of various LLMs compared to a baseline (likely a previous model version or a standard prompting method) across diverse reasoning and knowledge benchmarks.

* **What the data suggests:** The positive Δ values for most models on most datasets indicate that the evaluated models generally outperform the baseline. The magnitude of improvement is highly dataset-dependent, with complex reasoning tasks (GPQA, MATH) showing larger gains than others (CS-QA).

* **Relationships:** The plot allows for a direct comparison of model families (e.g., LLaMA vs. Qwen vs. GPT) and types (Open vs. Closed vs. Reasoning) on the same tasks. It highlights that model size alone (e.g., LLaMA3.1-70B vs. 8B) is not the sole determinant of performance gain, as architecture and training (implied by model family) play a crucial role.

* **Anomalies & Insights:** The consistently negative Δ for LLaMA3.1-8B suggests it may be a weaker baseline or that the specific evaluation setup disadvantaged it. The exceptional performance of all models on GPQA is notable and may warrant investigation into the nature of that benchmark—whether it aligns particularly well with current model capabilities or training data. The absence of data for many models on HumanEval limits conclusions for that specific coding task.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Performance Comparison of Language Models Across Datasets

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) across multiple question-answering and reasoning datasets. The y-axis represents the percentage change (Δ%) relative to a baseline (Δ=0), while the x-axis lists datasets such as HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, and HumanEval. Each model is represented by a unique color and shape, with a legend on the right.

### Components/Axes

- **X-axis (Dataset)**: Categories include HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, and HumanEval.

- **Y-axis (Δ (%))**: Percentage change relative to a baseline (Δ=0), marked by a dashed green line.

- **Legend**: Located on the right, mapping colors and shapes to models:

- **LLaMA3.1-8B**: Cyan circles

- **LLaMA3.1-70B**: Yellow circles

- **Qwen2.5-7B**: Purple circles

- **Qwen2.5-72B**: Red circles

- **Claude3.5**: Blue pentagons

- **GPT-3.5**: Orange pentagons

- **GPT-4o**: Green pentagons

- **QWQ-32B**: Pink diamonds

- **DeepSeek-V3**: Purple diamonds

- **Open LLM**: Blue circles

- **Close LLM**: Orange pentagons

- **Reasoning LLM**: Pink diamonds

### Detailed Analysis

- **LLaMA3.1-70B (Yellow Circles)**: Consistently shows positive Δ% across most datasets, with peaks in GPQA (~25%) and HumanEval (~20%). Negative values in HotpotQA (-15%) and CS-QA (-10%).

- **Qwen2.5-72B (Red Circles)**: Moderate gains in MATH (~10%) and GPQA (~15%), with smaller values in other datasets.

- **GPT-4o (Green Pentagons)**: High positive Δ% in GPQA (~25%) and HumanEval (~20%), with smaller gains in other datasets.

- **DeepSeek-V3 (Purple Diamonds)**: Significant gains in GPQA (~20%) and HumanEval (~15%), with moderate values elsewhere.

- **QWQ-32B (Pink Diamonds)**: High gains in GPQA (~25%) and HumanEval (~20%), with smaller values in other datasets.

- **Claude3.5 (Blue Pentagons)**: Moderate gains in MATH (~10%) and GPQA (~15%), with smaller values elsewhere.

- **LLaMA3.1-8B (Cyan Circles)**: Negative Δ% in HotpotQA (-15%) and CS-QA (-10%), but positive in other datasets (e.g., MATH: ~5%, GPQA: ~10%).

- **Reasoning LLM (Pink Diamonds)**: High gains in GPQA (~25%) and HumanEval (~20%), with smaller values elsewhere.

- **Close LLM (Orange Pentagons)**: Moderate gains in MATH (~10%) and GPQA (~15%), with smaller values elsewhere.

- **Open LLM (Blue Circles)**: Negative Δ% in HotpotQA (-15%) and CS-QA (-10%), but positive in other datasets (e.g., MATH: ~5%, GPQA: ~10%).

### Key Observations

1. **Model Size Correlation**: Larger models (e.g., LLaMA3.1-70B, GPT-4o) generally show higher Δ% across datasets, though exceptions exist (e.g., LLaMA3.1-8B underperforms in HotpotQA).

2. **Dataset-Specific Performance**:

- **GPQA and HumanEval**: Most models show strong performance, with LLaMA3.1-70B, GPT-4o, and QWQ-32B leading.

- **HotpotQA and CS-QA**: Some models (e.g., LLaMA3.1-8B, Open LLM) underperform, suggesting dataset-specific challenges.

3. **Baseline Comparison**: The dashed green line (Δ=0) highlights models with negative Δ% (e.g., LLaMA3.1-8B in HotpotQA) and those exceeding the baseline.
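The baseline comparison above amounts to filtering points by their sign relative to Δ = 0. A minimal sketch, using only a few of the approximate values quoted in this description's Detailed Analysis; `BASELINE`, `points`, and `below_baseline` are illustrative names.

```python
BASELINE = 0  # the dashed line at Δ = 0

# (model, dataset, approximate Δ in %) taken from the Detailed Analysis above.
points = [
    ("LLaMA3.1-8B", "HotpotQA", -15),
    ("LLaMA3.1-8B", "CS-QA", -10),
    ("LLaMA3.1-8B", "MATH", 5),
    ("LLaMA3.1-8B", "GPQA", 10),
    ("GPT-4o", "GPQA", 25),
    ("GPT-4o", "HumanEval", 20),
]

# Split the points into those under and those over the baseline.
below_baseline = [(m, d) for m, d, v in points if v < BASELINE]
above_baseline = [(m, d) for m, d, v in points if v > BASELINE]

print("below:", below_baseline)
print("above:", above_baseline)
```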

### Interpretation

The data suggests that model size and architecture significantly influence performance, with larger models (e.g., LLaMA3.1-70B, GPT-4o) generally outperforming smaller ones. However, dataset-specific factors also play a role, as seen in the negative Δ% for LLaMA3.1-8B in HotpotQA and CS-QA. The high performance of models like DeepSeek-V3 and QWQ-32B in GPQA and HumanEval indicates specialized training or reasoning capabilities. The baseline (Δ=0) serves as a critical reference, revealing models that underperform or exceed expectations. This analysis underscores the importance of model design and training data in achieving task-specific efficiency.
