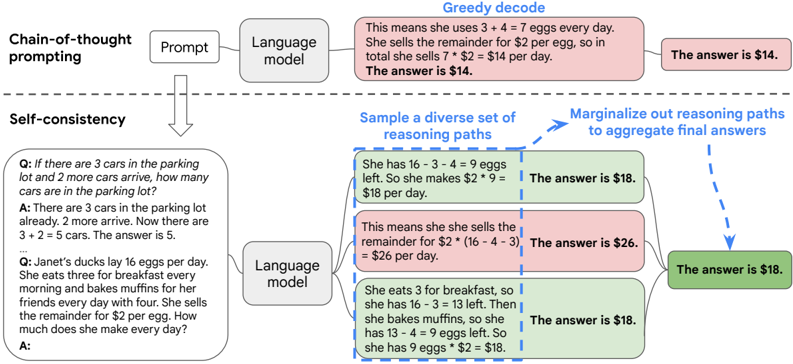

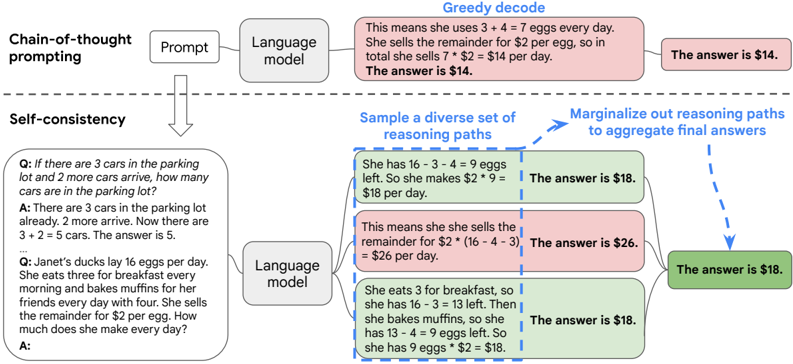

## Diagram: Comparison of Chain-of-Thought Prompting vs. Self-Consistency Prompting

### Overview

The image is a technical diagram illustrating and comparing two methods for improving the reasoning of large language models: **Chain-of-Thought (CoT) prompting** and **Self-Consistency prompting**. It uses a flowchart style with text boxes, arrows, and example Q&A pairs to demonstrate the process and outcomes of each method.

### Components/Axes

The diagram is divided into two primary horizontal sections:

1. **Top Section: Chain-of-thought prompting**

   * **Components (Left to Right):**

     * A box labeled **"Prompt"**.

     * An arrow pointing to a box labeled **"Language model"**.

     * An arrow pointing to a large, pink-shaded box containing a detailed reasoning trace.

     * An arrow pointing to a final, smaller pink box with the answer.

   * **Text Content:**

     * **Prompt Box:** Contains the text: "Q: If there are 3 cars in the parking lot and 2 more cars arrive, how many cars are in the parking lot? A: There are 3 cars in the parking lot already. 2 more arrive. Now there are 3 + 2 = 5 cars. The answer is 5. Q: Janet's ducks lay 16 eggs per day. She eats three for breakfast every morning and bakes muffins for her friends every day with four. She sells the remainder for $2 per egg. How much does she make every day? A:"

     * **Reasoning Box (Pink):** "This means she uses 3 + 4 = 7 eggs every day. She sells the remainder for $2 per egg, so in total she sells 7 * $2 = $14 per day. The answer is $14."

     * **Final Answer Box (Pink):** "The answer is $14."

   * **Label:** The entire top process is labeled **"Chain-of-thought prompting"** on the left and **"Greedy decode"** above the reasoning box.

2. **Bottom Section: Self-consistency**

   * **Components (Left to Right):**

     * A box labeled **"Prompt"** (identical to the one in the top section).

     * An arrow pointing to a box labeled **"Language model"**.

     * Three diverging arrows pointing to three separate reasoning path boxes (shaded in light blue, pink, and light green).

     * Each reasoning path box has an arrow pointing to its own final answer box.

     * All three answer boxes have arrows converging on a single, final green answer box.

   * **Text Content:**

     * **Prompt Box:** Contains the same two Q&A examples as the top section.

     * **Reasoning Path 1 (Light Blue):** "She has 16 - 3 - 4 = 9 eggs left. So she makes $2 * 9 = $18 per day."

     * **Answer Box 1 (Light Blue):** "The answer is $18."

     * **Reasoning Path 2 (Pink):** "This means she she sells the remainder for $2 * (16 - 3 - 4) = $26 per day." *(Note: Contains a typo "she she")*

     * **Answer Box 2 (Pink):** "The answer is $26."

     * **Reasoning Path 3 (Light Green):** "She eats 3 for breakfast, so she has 16 - 3 = 13 left. Then she bakes muffins, so she has 13 - 4 = 9 eggs left. So she has 9 eggs * $2 = $18."

     * **Answer Box 3 (Light Green):** "The answer is $18."

     * **Final Aggregated Answer Box (Green):** "The answer is $18."

   * **Labels & Annotations:**

     * The entire bottom process is labeled **"Self-consistency"** on the left.

     * Above the three reasoning paths: **"Sample a diverse set of reasoning paths"**.

     * Above the converging arrows: **"Marginalize out reasoning paths to aggregate final answers"**.

### Detailed Analysis

* **Process Flow - Chain-of-Thought:** A single prompt is fed into a language model, which generates one chain of reasoning by greedy decoding and therefore a single final answer. In the example, that one chain is wrong: it prices the `3 + 4 = 7` eggs Janet *uses* at $2 each and arrives at **$14**, instead of pricing the `16 - 3 - 4 = 9` eggs she actually sells (`2 * 9 = 18`, i.e., **$18**).

* **Process Flow - Self-Consistency:** The same prompt is given to the language model, but instead of a single greedy decode, several outputs are sampled from the decoder (for example, with temperature sampling) to encourage diversity. The model generates **three distinct reasoning paths**:

  1. Path 1 (Blue): Correctly calculates `16 - 3 - 4 = 9` eggs, leading to **$18**.

  2. Path 2 (Pink): Writes the correct expression `2 * (16 - 3 - 4)` but evaluates it as `$26`. Since `2 * 9 = 18`, the error lies in the final arithmetic step.

  3. Path 3 (Green): Breaks the problem into two steps (`16 - 3 = 13`, then `13 - 4 = 9`), correctly arriving at **$18**.

* **Aggregation:** The final answers from the three paths are collected: `$18`, `$26`, `$18`. The system then "marginalizes out" the reasoning paths, which here means discarding the paths themselves and selecting the most frequent final answer (a majority vote). **$18** appears twice and `$26` once, so the aggregated final answer is **$18**, which is also the correct answer.
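The arithmetic and the vote described above can be checked with a short sketch. It assumes the three sampled answers have already been parsed into integers (the parsing step is not shown in the figure), and `majority_vote` is a hypothetical helper name used only for illustration, not an API from any library or from the original method's code.

```python
from collections import Counter

# Quantities from the figure's question text (Janet's ducks).
EGGS_PER_DAY = 16
EATEN = 3   # eaten for breakfast
BAKED = 4   # used for muffins
PRICE = 2   # dollars per egg sold

# Correct answer: sell the remainder, 2 * (16 - 3 - 4) = 2 * 9 = 18.
correct_revenue = PRICE * (EGGS_PER_DAY - EATEN - BAKED)

# The greedy chain of thought instead prices the eggs it *uses*: 2 * (3 + 4) = 14.
greedy_revenue = PRICE * (EATEN + BAKED)

# Final answers of the three sampled reasoning paths shown in the figure.
sampled_answers = [18, 26, 18]


def majority_vote(answers):
    """Hypothetical helper: return the most frequent answer.

    Counter.most_common orders equal counts by first occurrence,
    so a tie resolves to whichever answer appeared first.
    """
    return Counter(answers).most_common(1)[0][0]


voted = majority_vote(sampled_answers)
print(f"greedy CoT: ${greedy_revenue}, self-consistency: ${voted}, correct: ${correct_revenue}")
```

Running it prints `greedy CoT: $14, self-consistency: $18, correct: $18`. The tie-breaking rule is an arbitrary choice made for this sketch. The figure has no tie, so it does not show how ties are resolved.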

### Key Observations

1. **Divergent Outputs:** The Self-Consistency method explicitly generates multiple, different reasoning paths from the same prompt, whereas Chain-of-Thought generates only one.

2. **Error Correction:** The Self-Consistency diagram shows one reasoning path (Pink) containing a clear mathematical error (`$26`). However, because the other two paths converge on the same correct answer (`$18`), the error is effectively outvoted during aggregation.

3. **Spatial Layout:** The legend/labels ("Sample a diverse set...", "Marginalize out...") are placed directly above the corresponding visual elements (the diverging arrows and converging arrows, respectively), clearly explaining the process steps.

4. **Color Coding:** Colors are used to group related elements (e.g., all elements of a single reasoning path share a background shade) but do not represent data values. The final answer box is green, possibly indicating a "correct" or "selected" outcome.
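Why outvoting helps can be made concrete under a deliberately simplified model, which is an assumption and not something the diagram claims: each sampled path is correct independently with probability `p`, and all wrong paths are lumped together as one opposing answer. Under that model, the chance that a strict majority of `n` paths is correct is a binomial tail sum. `majority_accuracy` is a hypothetical name for this sketch.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n sampled paths is correct.

    Simplifying assumptions (not from the figure): paths are independent,
    each is correct with probability p, and all wrong paths count as a
    single opposing answer. Use odd n so a strict majority always exists.
    """
    need = n // 2 + 1  # smallest strict majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


for n in (1, 3, 5, 11):
    print(n, round(majority_accuracy(0.7, n), 4))
```

With `p = 0.7`, a single path is right 70% of the time, while three paths give `0.7**3 + 3 * 0.7**2 * 0.3 = 0.784`. If `p` falls below 0.5, the same formula shows voting making things worse. Real samples from one model are correlated, and wrong answers often scatter across several values, as `$26` does in the figure. For both reasons, this model is only a rough intuition.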

### Interpretation

This diagram serves as a pedagogical tool to explain the core innovation of the Self-Consistency method over standard Chain-of-Thought prompting.

* **What it demonstrates:** It visually argues that relying on a single reasoning path (greedy decode) is risky: in the figure, the greedy path returns the wrong answer ($14). By sampling multiple diverse reasoning paths and then aggregating their final answers (e.g., via majority vote), the system can achieve more robust and accurate results. The method rests on the idea that, although a model may take different logical routes or make different errors, the correct answer is the one most likely to be reached consistently through valid reasoning.

* **Relationship between elements:** The left side (Prompt) is the constant input. The middle (Language model) is the engine that can produce varied outputs. The right side shows the key difference: single-path vs. multi-path generation and aggregation. The aggregation step ("Marginalize out...") is the critical component that transforms multiple potentially noisy outputs into a single, more reliable answer.

* **Notable Anomaly:** The pink reasoning path in the Self-Consistency section is internally inconsistent. It expresses the daily revenue as `2 * (16 - 3 - 4)`, which equals `2 * 9 = 18`, yet concludes `$26`. This arithmetic slip is central to the diagram's message: it is exactly the kind of single-path error that Self-Consistency aggregation is designed to overcome. The aggregated answer of `$18` is correct, showing the method succeeding despite one flawed reasoning path.
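The "marginalize out reasoning paths" step can be written as a majority vote over final answers. The notation below is an assumption and does not appear in the figure. Suppose $m$ reasoning paths are sampled and their final answers are $a_1, \dots, a_m$. The aggregated answer $\hat{a}$ is then the value that occurs most often, with $\mathbb{1}(\cdot)$ the indicator function. The second line applies this to the figure's three paths.

```latex
\hat{a} = \arg\max_{a} \sum_{i=1}^{m} \mathbb{1}(a_i = a)

% Figure instance: m = 3, (a_1, a_2, a_3) = (18, 26, 18)
\sum_{i=1}^{3} \mathbb{1}(a_i = 18) = 2, \qquad
\sum_{i=1}^{3} \mathbb{1}(a_i = 26) = 1
\;\Longrightarrow\; \hat{a} = 18
```

The reasoning paths themselves never enter the vote. Only their final answers are counted, which is why the paths are said to be "marginalized out."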
