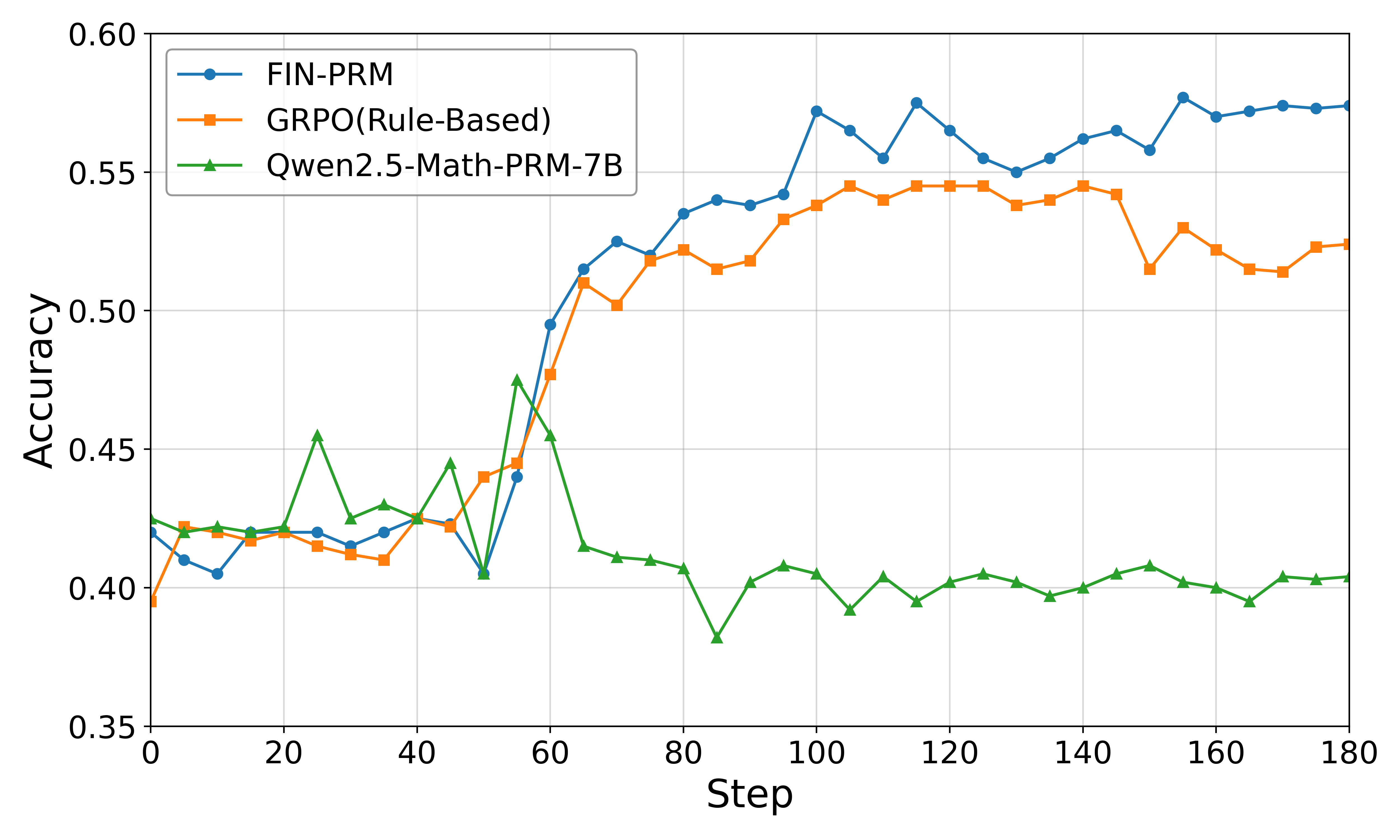

## Line Chart: Model Accuracy Comparison Over Training Steps

### Overview

This image is a line chart comparing the accuracy of three methods over the course of training. It tracks how accuracy changes for each method from step 0 to step 180.

### Components/Axes

* **X-Axis (Horizontal):** Labeled "Step". It represents the progression of training, with major tick marks at intervals of 20, ranging from 0 to 180.

* **Y-Axis (Vertical):** Labeled "Accuracy". It represents the performance metric, with major tick marks at intervals of 0.05, ranging from 0.35 to 0.60.

* **Legend:** Positioned in the top-left corner of the chart area. It contains three entries:

    1. **FIN-PRM:** Represented by a blue line with circular markers.

    2. **GRPO(Rule-Based):** Represented by an orange line with square markers.

    3. **Qwen2.5-Math-PRM-7B:** Represented by a green line with triangular markers.

* **Grid:** A light gray grid is present, aligning with the major tick marks on both axes.

### Detailed Analysis

The chart displays three distinct data series, each with a unique trend.

**1. FIN-PRM (Blue Line, Circle Markers)**

* **Trend Verification:** The line shows a general upward trend, with a period of rapid increase followed by a high-level plateau with minor fluctuations.

* **Data Points (Approximate):**

    * Starts at ~0.42 accuracy at step 0.

    * Dips slightly to ~0.405 at step 10.

    * Begins a steep climb around step 50 (~0.405), crossing 0.50 by step 65.

    * Reaches a local peak of ~0.57 at step 100.

    * Fluctuates between ~0.55 and ~0.58 from step 100 to 180, ending at approximately 0.575.

**2. GRPO(Rule-Based) (Orange Line, Square Markers)**

* **Trend Verification:** The line shows an initial increase, followed by a sustained plateau, and then a slight decline towards the end.

* **Data Points (Approximate):**

    * Starts at ~0.395 at step 0.

    * Rises to ~0.42 by step 20.

    * Experiences a sharp increase starting around step 55 (~0.445), reaching ~0.52 by step 75.

    * Plateaus between ~0.53 and ~0.55 from step 100 to 145.

    * Shows a slight downward trend after step 145, ending at approximately 0.525.

**3. Qwen2.5-Math-PRM-7B (Green Line, Triangle Markers)**

* **Trend Verification:** The line is highly volatile in the first half, with a significant spike, followed by a sharp decline and then a stable, low-level performance in the second half.

* **Data Points (Approximate):**

    * Starts at ~0.425 at step 0.

    * Shows high volatility between steps 20 and 60, with a notable peak of ~0.475 at step 55.

    * Experiences a dramatic drop after step 60, falling to a low of ~0.38 at step 85.

    * Recovers slightly and stabilizes, fluctuating narrowly between ~0.395 and ~0.41 from step 90 to 180, ending at approximately 0.405.
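The approximate readings listed above can be collected into a small data structure for quick comparison. The following is a minimal Python sketch: the values are the eyeballed readings quoted in this description, not the underlying data, and the names `READINGS`, `summarize`, and `SUMMARY` are hypothetical.

```python
# Approximate (step, accuracy) readings quoted in the description.
# These are eyeballed from the chart, not the underlying data.
READINGS = {
    "FIN-PRM": [(0, 0.42), (10, 0.405), (50, 0.405), (100, 0.57), (180, 0.575)],
    "GRPO(Rule-Based)": [(0, 0.395), (20, 0.42), (55, 0.445), (75, 0.52), (180, 0.525)],
    "Qwen2.5-Math-PRM-7B": [(0, 0.425), (55, 0.475), (85, 0.38), (180, 0.405)],
}


def summarize(points):
    """Return the first reading, last reading, and net change for one series."""
    start = points[0][1]
    end = points[-1][1]
    return {"start": start, "end": end, "net_change": round(end - start, 3)}


# Hypothetical summary table keyed by legend entry.
SUMMARY = {name: summarize(points) for name, points in READINGS.items()}

if __name__ == "__main__":
    for name, s in SUMMARY.items():
        print(f"{name}: {s['start']:.3f} -> {s['end']:.3f} ({s['net_change']:+.3f})")
```

Under these readings, FIN-PRM and GRPO(Rule-Based) end above their starting accuracy, while Qwen2.5-Math-PRM-7B ends slightly below it.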

### Key Observations

1. **Performance Divergence:** The curves diverge between roughly steps 50 and 60. FIN-PRM and GRPO(Rule-Based) begin steep climbs (around steps 50 and 55, respectively), while Qwen2.5-Math-PRM-7B drops sharply after step 60.

2. **Peak Performance:** FIN-PRM achieves the highest overall accuracy, peaking near 0.58. GRPO(Rule-Based) peaks around 0.55. Qwen2.5-Math-PRM-7B peaks at ~0.475, roughly 0.1 below FIN-PRM's peak, and does so much earlier, at step 55.

3. **Stability:** In the latter half of training (steps 90-180), FIN-PRM and GRPO maintain relatively high and stable accuracy, while Qwen2.5 stabilizes at a much lower accuracy level.

4. **Initial Phase:** All three models start within a similar accuracy range (~0.395 to 0.425) at step 0.
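The gaps behind these observations are simple arithmetic on the approximate readings. The sketch below, in Python, computes each method's distance from the best final accuracy and how far each method ends below its own peak. The values are the eyeballed figures quoted in this description, and the names `FINAL`, `PEAK`, `gaps_to_best`, `FINAL_GAPS`, and `PEAK_TO_FINAL` are hypothetical.

```python
# Approximate final and peak accuracies quoted in the description
# (eyeballed from the chart, not the underlying data).
FINAL = {"FIN-PRM": 0.575, "GRPO(Rule-Based)": 0.525, "Qwen2.5-Math-PRM-7B": 0.405}
PEAK = {"FIN-PRM": 0.58, "GRPO(Rule-Based)": 0.55, "Qwen2.5-Math-PRM-7B": 0.475}


def gaps_to_best(values):
    """Return each entry's shortfall relative to the highest value."""
    best = max(values.values())
    return {name: round(best - value, 3) for name, value in values.items()}


# Shortfall of each method's final accuracy relative to the best final accuracy.
FINAL_GAPS = gaps_to_best(FINAL)

# How far each method ends below its own peak.
PEAK_TO_FINAL = {name: round(PEAK[name] - FINAL[name], 3) for name in FINAL}

if __name__ == "__main__":
    for name in FINAL:
        print(f"{name}: gap to best = {FINAL_GAPS[name]:.3f}, "
              f"peak minus final = {PEAK_TO_FINAL[name]:.3f}")
```

With these readings, GRPO(Rule-Based) finishes about 0.05 below FIN-PRM and Qwen2.5-Math-PRM-7B about 0.17 below. Qwen2.5-Math-PRM-7B also ends furthest below its own peak, at about 0.07.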

### Interpretation

The chart appears to compare training methods or models on a single task, likely mathematical reasoning given the model name "Qwen2.5-Math-PRM-7B".

* **FIN-PRM** shows the strongest learning curve. After staying flat near 0.405 through step 50, it climbs steeply to ~0.57 by step 100 and holds between ~0.55 and ~0.58 through step 180, which indicates that the gains are retained rather than transient.

* **GRPO(Rule-Based)** also shows strong learning, broadly tracking FIN-PRM's trajectory until about step 100. It then plateaus slightly lower (~0.53 to ~0.55) and declines slightly after step 145. This could indicate a method that learns quickly but has a somewhat lower performance ceiling or less stability in later stages.

* **Qwen2.5-Math-PRM-7B** exhibits a problematic training dynamic. The early volatility and the spike to ~0.475 at step 55 suggest instability, possibly overfitting to early training data. The subsequent drop to ~0.38 and the flat stretch near 0.40, slightly below the ~0.425 starting point, are consistent with a failure to generalize or with catastrophic forgetting, though the chart alone cannot distinguish between these causes. The pattern suggests that this setup is poorly suited to the task compared with the other two methods.

**Overall Implication:** For the task represented by this accuracy metric, FIN-PRM and GRPO(Rule-Based) finish well ahead of Qwen2.5-Math-PRM-7B, ending roughly 0.17 and 0.12 higher, respectively. The chart suggests that the choice of method strongly affects both the learning trajectory and the final accuracy.