## Diagram: Comparison of Generic vs. eXplainable AI Approaches

### Overview

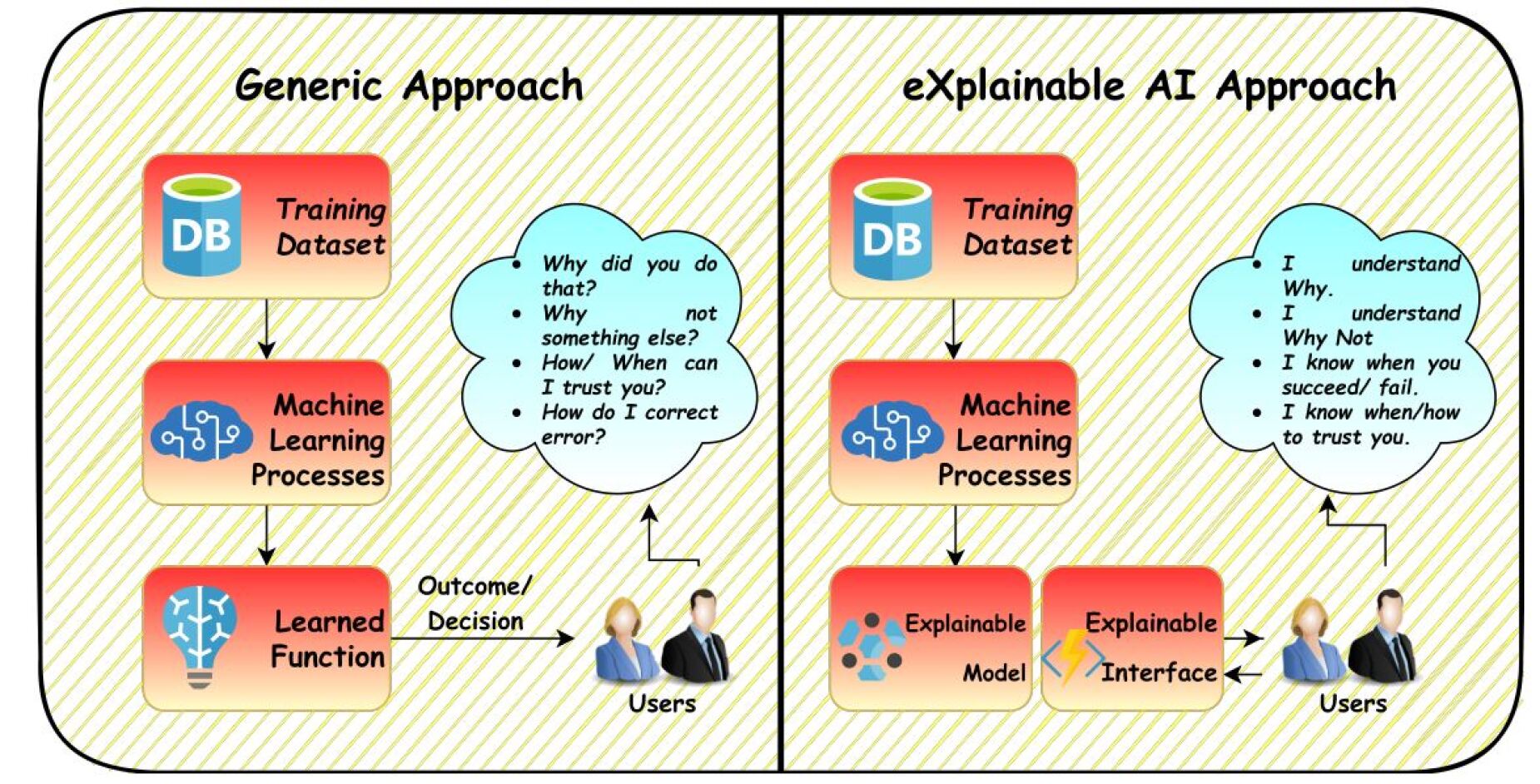

The image is a conceptual diagram comparing two paradigms in machine learning deployment: a "Generic Approach" and an "eXplainable AI Approach." It uses a side-by-side flowchart format to illustrate the process flow and, more importantly, the resulting user experience and understanding in each paradigm. The diagram is informational and conceptual, containing no numerical data or charts.

### Components

The diagram is split into two vertical panels, each with a title at the top:

* **Left Panel Title:** `Generic Approach`

* **Right Panel Title:** `eXplainable AI Approach`

Each panel contains a flowchart with the following common components, represented by icons and text labels:

1. **Training Dataset:** Represented by a blue cylinder icon labeled "DB" and the text "Training Dataset."

2. **Machine Learning Processes:** Represented by a blue brain/network icon and the text "Machine Learning Processes."

3. **Users:** Represented by an icon of two people (a woman and a man in business attire) labeled "Users."

The flow and additional components differ between the two approaches.

### Detailed Analysis

#### **Left Panel: Generic Approach**

* **Flow:** The process is linear and unidirectional.

    * An arrow points from **Training Dataset** down to **Machine Learning Processes**.

    * An arrow points from **Machine Learning Processes** down to a third box labeled **Learned Function** (represented by a blue lightbulb icon).

    * A final arrow, labeled `Outcome/Decision`, points from **Learned Function** to the **Users**.

* **User Interaction (Thought Bubble):** A light blue thought bubble originates from the **Users**. It lists the questions users have about the system's output:

    * `Why did you do that?`

    * `Why not something else?`

    * `How/ When can I trust you?`

    * `How do I correct error?`

* **Spatial Layout:** The flowchart is arranged vertically on the left side of the panel. The user icon and thought bubble are positioned to the right of the "Learned Function" box.
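
The left-panel flow can be sketched in a few lines of Python. This is only an illustrative toy, not something shown in the diagram: the data values, the threshold rule, and the names `TRAINING_DATASET`, `machine_learning_process`, and `learned_function` are all assumptions chosen to mirror the box labels. The point it illustrates is that the user receives only the outcome, with no access to the reasoning behind it.

```python
from typing import Callable, List, Tuple

# Hypothetical toy data: (feature value, label). Not taken from the diagram.
TRAINING_DATASET: List[Tuple[float, int]] = [
    (1.0, 0), (2.0, 0), (3.0, 1), (4.0, 1),
]


def machine_learning_process(
    data: List[Tuple[float, int]],
) -> Callable[[float], int]:
    """Fit a one-feature threshold rule and return it as an opaque callable."""
    negatives = [x for x, y in data if y == 0]
    positives = [x for x, y in data if y == 1]
    # Midpoint between the two classes (assumes the toy data is separable).
    threshold = (max(negatives) + min(positives)) / 2.0

    def learned_function(x: float) -> int:
        # The threshold is hidden inside the closure: callers see only 0 or 1.
        return 1 if x > threshold else 0

    return learned_function


# Training Dataset -> Machine Learning Processes -> Learned Function
learned_function = machine_learning_process(TRAINING_DATASET)

# Learned Function -> Outcome/Decision -> Users
outcome = learned_function(3.5)
print(outcome)
```

Nothing in the returned value tells the user why the decision was made or how close the input was to the alternative, which is the situation the left thought bubble describes.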

#### **Right Panel: eXplainable AI Approach**

* **Flow:** The process incorporates explainability components, creating a more interactive loop.

    * An arrow points from **Training Dataset** down to **Machine Learning Processes**.

    * An arrow points from **Machine Learning Processes** down to a box labeled **Explainable Model** (represented by a blue network icon with highlighted nodes).

    * A separate, adjacent box labeled **Explainable Interface** (represented by a yellow lightning bolt icon) is connected to the **Explainable Model**.

    * **Bidirectional arrows** connect the **Explainable Interface** to the **Users**, indicating a two-way interaction.

* **User Interaction (Thought Bubble):** A light blue thought bubble originates from the **Users**. It contains statements reflecting understanding and trust:

    * `I understand Why.`

    * `I understand Why Not`

    * `I know when you succeed/ fail.`

    * `I know when/how to trust you.`

* **Spatial Layout:** The flowchart is arranged vertically on the left side of the panel. The user icon and thought bubble are positioned to the right of the "Explainable Interface" box.
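
As a rough sketch of the right-panel flow, the toy Python below pairs a model that exposes its reasoning with an interface the user can query and respond to. Everything here is an assumption made for illustration: the class names echo the box labels, but the threshold rule, the `Explanation` fields, the `min_margin` trust heuristic, and the `correct` feedback method are hypothetical and do not appear in the diagram.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Explanation:
    decision: int
    threshold: float
    margin: float  # signed distance of the input from the threshold


class ExplainableModel:
    """Toy threshold classifier that returns its reasoning with each decision."""

    def __init__(self, data: List[Tuple[float, int]]) -> None:
        self.data = list(data)
        self.fit()

    def fit(self) -> None:
        negatives = [x for x, y in self.data if y == 0]
        positives = [x for x, y in self.data if y == 1]
        # Midpoint between the classes (assumes both classes are present).
        self.threshold = (max(negatives) + min(positives)) / 2.0

    def predict(self, x: float) -> Explanation:
        margin = x - self.threshold
        return Explanation(1 if margin > 0 else 0, self.threshold, margin)


class ExplainableInterface:
    """Hypothetical two-way channel between the users and the model."""

    def __init__(self, model: ExplainableModel) -> None:
        self.model = model

    def why(self, x: float) -> str:
        e = self.model.predict(x)
        relation = "above" if e.decision == 1 else "at or below"
        return f"Decision {e.decision}: input {x} is {relation} threshold {e.threshold}."

    def why_not(self, x: float) -> str:
        e = self.model.predict(x)
        return (f"Decision {1 - e.decision} would require crossing "
                f"threshold {e.threshold}, a shift of about {abs(e.margin)}.")

    def can_trust(self, x: float, min_margin: float = 0.5) -> bool:
        # Assumed heuristic: decisions far from the threshold are more reliable.
        return abs(self.model.predict(x).margin) >= min_margin

    def correct(self, x: float, label: int) -> None:
        # User -> system direction of the bidirectional arrow (an assumption).
        self.model.data.append((x, label))
        self.model.fit()


model = ExplainableModel([(1.0, 0), (2.0, 0), (3.0, 1), (4.0, 1)])
interface = ExplainableInterface(model)
print(interface.why(3.5))
print(interface.why_not(3.5))
print(interface.can_trust(2.6))

interface.correct(2.5, 1)  # the user supplies a corrected label; the model refits
print(interface.why(2.5))
```

Each method loosely corresponds to one statement in the right thought bubble: `why` and `why_not` to understanding the decision, and `can_trust` to knowing when to rely on it. Real explanation methods are considerably more involved; the sketch only shows the shape of the interaction.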

### Key Observations

1. **Structural Difference:** The core difference is the replacement of the opaque "Learned Function" with two components: an "Explainable Model" and an "Explainable Interface."

2. **Interaction Model:** The Generic Approach shows a one-way delivery of a decision (`Outcome/Decision`). The eXplainable AI Approach shows a two-way interaction (bidirectional arrows) between the system and the user.

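3. **User State Transformation:** The thought bubbles are the focal point of the comparison. They shift from a state of questioning and uncertainty (Generic) to a state of understanding and informed trust (eXplainable AI). Most questions in the left bubble have a matching statement in the right bubble: `Why did you do that?` pairs with `I understand Why.`, `Why not something else?` with `I understand Why Not`, and `How/ When can I trust you?` with `I know when/how to trust you.` The pairing is not exact, however: `How do I correct error?` has no direct counterpart on the right, and `I know when you succeed/ fail.` has no matching question on the left.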

4. **Visual Metaphors:** Icons are used consistently: a database for data, a brain for processing, a lightbulb for a finalized (but opaque) function, and a network with highlights for an explainable model. The lightning bolt for the interface suggests active communication or revelation.

### Interpretation

This diagram argues that the value of eXplainable AI (XAI) is not limited to technical performance: it changes the relationship between users and the system. It posits that a "Generic" or black-box AI system, while potentially accurate, leaves users in a state of passive reception and doubt, unable to scrutinize, correct, or fully trust the outcomes.

The eXplainable AI approach, by integrating explanation directly into the model and interface, transforms the user from a passive recipient into an active, informed participant. The bidirectional flow suggests a collaborative process in which the system's reasoning is made accessible, allowing users to verify outputs, understand the system's limitations, and calibrate their trust accordingly. The diagram's central message is that explainability is a necessary component, rather than an optional feature, of responsible and effective AI-assisted decision-making, because it addresses questions of accountability, reliability, and user agency.