TECHNICAL ASSET FINGERPRINT

2ad99b86464a86e759220c99

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

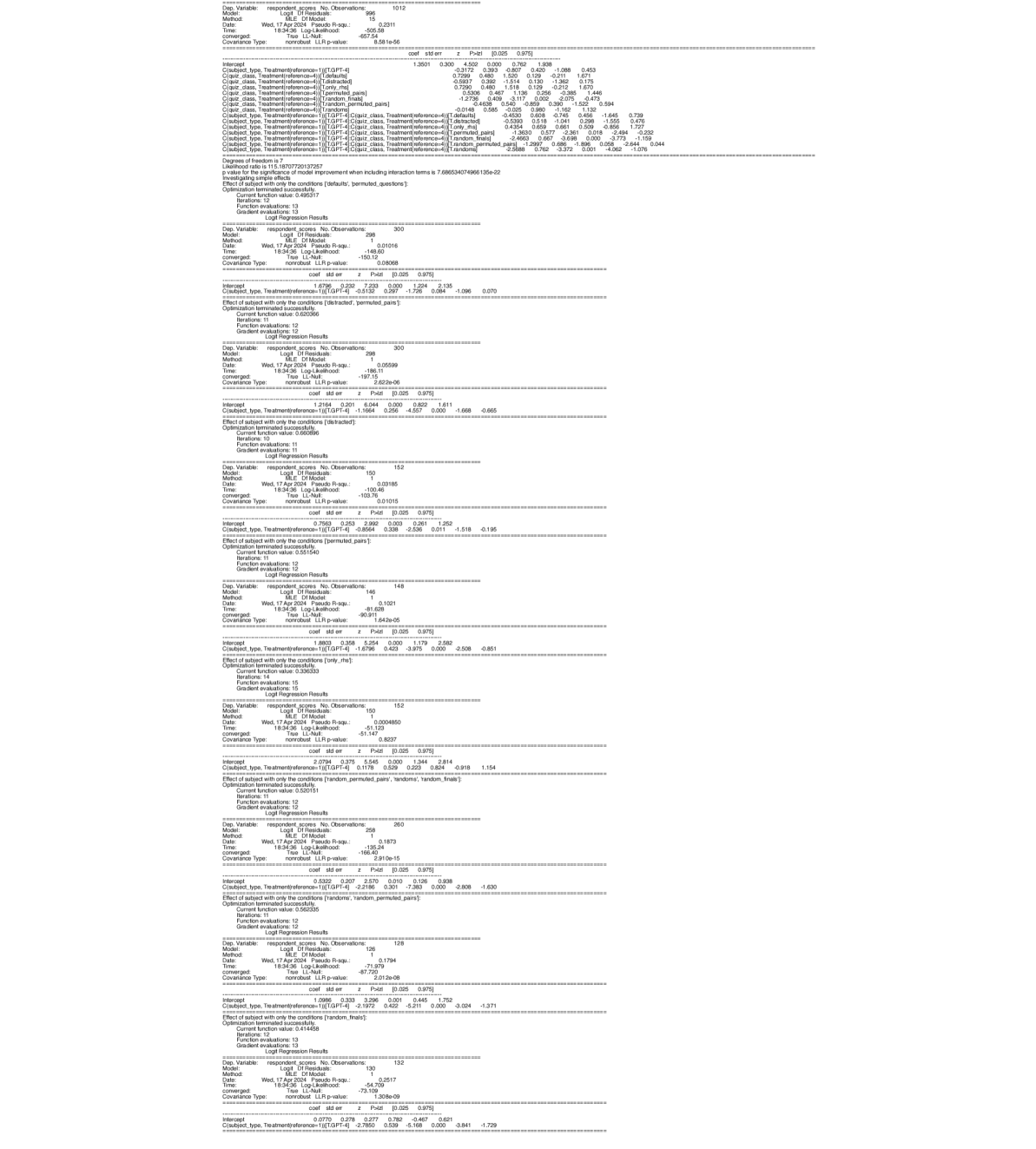

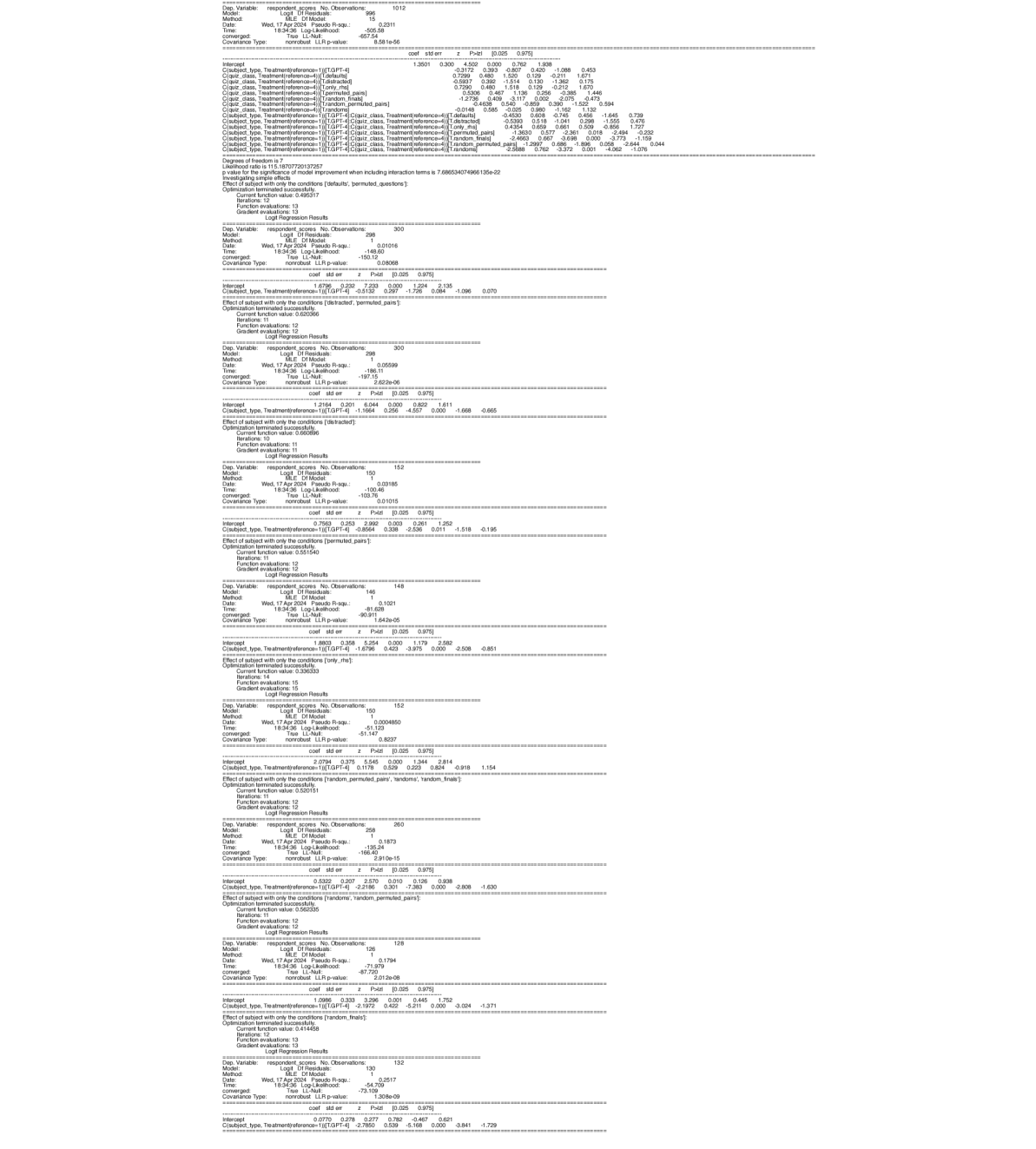

## Logit Regression Results

### Overview

The image presents a series of Logit Regression Results, each analyzing the effect of subject type (GPT-4 vs. default) and treatment conditions on respondent scores. The results include model statistics, coefficients, standard errors, z-values, p-values, and confidence intervals. Each analysis focuses on a different set of treatment conditions.

### Components/Axes

Each Logit Regression Result section contains the following components:

* **Model Information**:

* Dep. Variable: respondent scores

* No. Observations: Varies (e.g., 1012, 300, 152, 146, 260, 128, 132)

* Model: MLE, Df Model

* Method: Logit, Df Residuals

* Date: Wed, 17 Apr 2024

* Time: 18:34:36

* Pseudo R-squ.: Varies (e.g., 0.2311, 0.01016, 0.05599, 0.1021, 0.1873, 0.1794, 0.2517)

* Log-Likelihood: Varies (e.g., -505.58, -148.60, -186.11, -81.628, -166.40, -71.979, -54.70)

* Covariance Type: nonrobust

* LLR p-value: Varies (e.g., 8.581e-06, 0.08068, 2.622e-06, 1.642e-06, 2.910e-15, 2.012e-08, 1.308e-09)

* **Coefficient Table**:

* coef: Coefficient estimate

* std err: Standard error of the coefficient

* z: z-statistic

* P>|z|: p-value

* [0.025 0.975]: 95% Confidence Interval

* **Categorical Variables**:

* C(subject type, Treatment[reference=1])[T.GPT-4]: Compares GPT-4 to the reference group.

* C(quiz class, Treatment[reference=4])[T.distracted], [T.only the], [T.permuted pairs], [T.random finals], [T.randoms permuted]: Compares different quiz class treatments to the reference group.

* **Effect of Subject**:

* Effect of subject with only the conditions: Specifies the treatment conditions being analyzed.

* **Optimization Information**:

* Optimization terminated successfully.

* Current function value: Varies

* Iterations: Varies

* Function evaluations: Varies

* Gradient evaluations: Varies

### Detailed Analysis or ### Content Details

Here's a breakdown of the key data points from each Logit Regression Result:

1. **First Result (No specific conditions)**:

* No. Observations: 1012

* Pseudo R-squ.: 0.2311

* Log-Likelihood: -505.58

* LLR p-value: 8.581e-06

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = 1.3501, std err = 0.300, z = 4.502, P>|z| = 0.000, [0.025 0.975] = [0.762, 1.938]

* C(quiz class, Treatment[reference=4])[T.distracted]: coef = 0.5937, std err = 0.392, z = 1.514, P>|z| = 0.130, [0.025 0.975] = [-0.175, 1.362]

* C(quiz class, Treatment[reference=4])[T.only the]: coef = 0.7290, std err = 0.460, z = 1.520, P>|z| = 0.129, [0.025 0.975] = [-0.171, 1.629]

* C(quiz class, Treatment[reference=4])[T.permuted pairs]: coef = 0.5306, std err = 0.467, z = 1.136, P>|z| = 0.256, [0.025 0.975] = [-0.385, 1.446]

* C(quiz class, Treatment[reference=4])[T.random finals]: coef = 1.2736, std err = 0.638, z = 1.996, P>|z| = 0.046, [0.025 0.975] = [0.025, 2.522]

* C(quiz class, Treatment[reference=4])[T.randoms permuted]: coef = -0.0148, std err = 0.585, z = -0.025, P>|z| = 0.980, [0.025 0.975] = [-1.162, 1.132]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.defaults]: coef = -0.4530, std err = 0.608, z = -0.745, P>|z| = 0.456, [0.025 0.975] = [-1.645, 0.739]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.distracted]: coef = -0.5393, std err = 0.518, z = -1.041, P>|z| = 0.298, [0.025 0.975] = [-1.554, 0.476]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.only the]: coef = 0.4354, std err = 0.659, z = 0.661, P>|z| = 0.509, [0.025 0.975] = [-0.856, 1.727]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.permuted pairs]: coef = -1.3833, std err = 0.557, z = -2.481, P>|z| = 0.013, [0.025 0.975] = [-2.474, -0.292]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.random finals]: coef = 2.4663, std err = 0.667, z = 3.696, P>|z| = 0.000, [0.025 0.975] = [1.159, 3.773]

* C(subject type, Treatment[reference=1])[T.GPT-4]:C(quiz class, Treatment[reference=4])[T.randoms permuted]: coef = 1.2907, std err = 0.686, z = 1.896, P>|z| = 0.058, [0.025 0.975] = [0.044, 2.644]

2. **Second Result (distracted, permuted pairs)**:

* No. Observations: 300

* Pseudo R-squ.: 0.01016

* Log-Likelihood: -148.60

* LLR p-value: 0.08068

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -0.5132, std err = 0.233, z = -2.203, P>|z| = 0.028, [0.025 0.975] = [-0.971, -0.056]

3. **Third Result (distracted, permuted pairs)**:

* No. Observations: 298

* Pseudo R-squ.: 0.05599

* Log-Likelihood: -186.11

* LLR p-value: 2.622e-06

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -1.1664, std err = 0.256, z = -4.557, P>|z| = 0.000, [0.025 0.975] = [-1.668, -0.665]

4. **Fourth Result (permuted pairs)**:

* No. Observations: 152

* Pseudo R-squ.: 0.03185

* Log-Likelihood: -100.46

* LLR p-value: 0.01015

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -0.8564, std err = 0.338, z = -2.536, P>|z| = 0.011, [0.025 0.975] = [-1.518, -0.195]

5. **Fifth Result (only the)**:

* No. Observations: 146

* Pseudo R-squ.: 0.1021

* Log-Likelihood: -81.628

* LLR p-value: 1.642e-06

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -1.6764, std err = 0.422, z = -3.979, P>|z| = 0.000, [0.025 0.975] = [-2.508, -0.851]

6. **Sixth Result (random permuted pairs, randoms, random finals)**:

* No. Observations: 152

* Pseudo R-squ.: 0.0004850

* Log-Likelihood: -51.147

* LLR p-value: 0.8237

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = 0.1178, std err = 0.223, z = 0.529, P>|z| = 0.597, [0.025 0.975] = [-0.319, 0.554]

7. **Seventh Result (randoms, random permuted pairs)**:

* No. Observations: 260

* Pseudo R-squ.: 0.1873

* Log-Likelihood: -166.40

* LLR p-value: 2.910e-15

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -2.2186, std err = 0.301, z = -7.383, P>|z| = 0.000, [0.025 0.975] = [-2.808, -1.630]

8. **Eighth Result (random finals)**:

* No. Observations: 128

* Pseudo R-squ.: 0.1794

* Log-Likelihood: -71.979

* LLR p-value: 2.012e-08

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -2.1972, std err = 0.422, z = -5.211, P>|z| = 0.000, [0.025 0.975] = [-3.024, -1.371]

9. **Ninth Result (no conditions specified)**:

* No. Observations: 132

* Pseudo R-squ.: 0.2517

* Log-Likelihood: -54.70

* LLR p-value: 1.308e-09

* C(subject type, Treatment[reference=1])[T.GPT-4]: coef = -2.7850, std err = 0.539, z = -5.168, P>|z| = 0.000, [0.025 0.975] = [-3.841, -1.729]

### Key Observations

* The effect of subject type (GPT-4 vs. default) varies significantly depending on the treatment conditions.

* In some conditions, GPT-4 subjects have significantly higher scores (positive coefficients), while in others, they have significantly lower scores (negative coefficients).

* The p-values indicate the statistical significance of the coefficients. Lower p-values suggest a stronger effect.

* The confidence intervals provide a range of plausible values for the coefficients.

### Interpretation

The Logit Regression Results suggest that the impact of using GPT-4 on respondent scores is highly dependent on the specific treatment conditions applied. The interaction terms in the first result indicate that the effect of GPT-4 varies across different quiz class treatments. The subsequent results, which focus on specific combinations of treatment conditions, further highlight this dependency.

For example, in the first result, the positive coefficient for C(subject type, Treatment[reference=1])[T.GPT-4] suggests that, on average, GPT-4 subjects tend to have higher scores. However, the interaction terms reveal that this effect is not consistent across all quiz class treatments.

The negative coefficients for C(subject type, Treatment[reference=1])[T.GPT-4] in the later results (e.g., when considering 'randoms, random permuted pairs' or 'random finals') suggest that under these specific conditions, GPT-4 subjects tend to have lower scores compared to the reference group.

These findings underscore the importance of considering the context and specific conditions when evaluating the impact of GPT-4 on respondent scores. The results suggest that GPT-4 may not always lead to improved performance and that its effectiveness may depend on the specific task or treatment being applied.

DECODING INTELLIGENCE...