## Bar Chart: Divider: Time vs Core count

### Overview

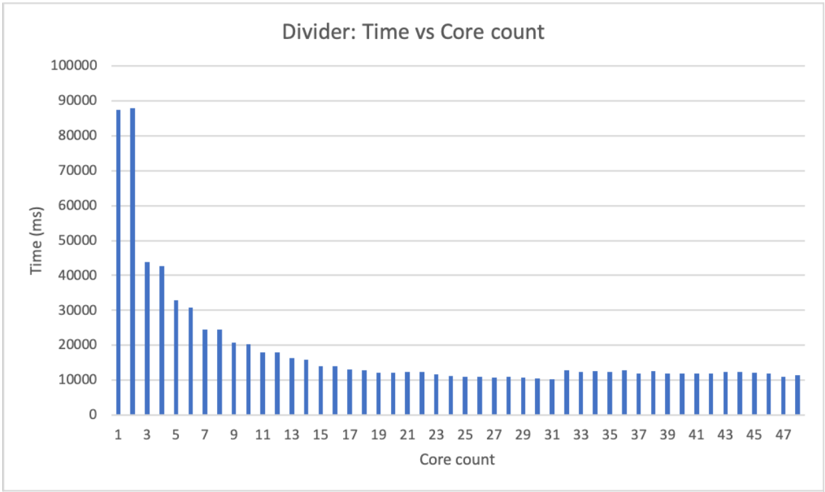

The image displays a vertical bar chart illustrating the relationship between the number of processing cores ("Core count") and the execution time in milliseconds ("Time (ms)") for a computational task labeled "Divider". Execution time falls steeply as cores are added, with diminishing returns at higher core counts and a modest increase from 32 cores onward.

### Components/Axes

* **Chart Title:** "Divider: Time vs Core count" (positioned at the top center).

* **Y-Axis (Vertical):**

    * **Label:** "Time (ms)" (positioned vertically on the left side).

    * **Scale:** Linear scale from 0 to 100,000.

    * **Major Tick Marks:** 0, 10000, 20000, 30000, 40000, 50000, 60000, 70000, 80000, 90000, 100000.

* **X-Axis (Horizontal):**

    * **Label:** "Core count" (positioned at the bottom center).

    * **Scale:** Linear scale representing discrete core counts.

    * **Major Tick Mark Labels:** 1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47. The bars are plotted for every integer core count from 1 to 48.

* **Data Series:** A single series represented by blue vertical bars. There is no legend, as only one data category is present.

### Detailed Analysis

The chart plots execution time against core count. Time declines steeply and non-linearly as cores are added, levels off at roughly 10,000 ms between 24 and 31 cores, and then steps up to about 12,000 ms from 32 cores onward.

**Trend Verification:** The bars slope steeply downward from left (low core count) to right (high core count) and gradually flatten into a near-horizontal line, interrupted by a small upward step at 32 cores.

**Approximate Data Points (Time in ms):**

* **Core 1:** ~88,000 ms

* **Core 2:** ~88,000 ms (similar to Core 1)

* **Core 3:** ~44,000 ms

* **Core 4:** ~43,000 ms

* **Core 5:** ~33,000 ms

* **Core 6:** ~31,000 ms

* **Core 7:** ~25,000 ms

* **Core 8:** ~24,000 ms

* **Core 9:** ~21,000 ms

* **Core 10:** ~20,000 ms

* **Core 11:** ~18,000 ms

* **Core 12:** ~17,000 ms

* **Core 13:** ~16,000 ms

* **Core 14:** ~15,000 ms

* **Core 15:** ~14,000 ms

* **Core 16:** ~13,000 ms

* **Core 17:** ~12,500 ms

* **Core 18:** ~12,000 ms

* **Core 19:** ~11,500 ms

* **Core 20:** ~11,000 ms

* **Core 21:** ~11,000 ms

* **Core 22:** ~10,500 ms

* **Core 23:** ~10,500 ms

* **Core 24:** ~10,000 ms

* **Core 25:** ~10,000 ms

* **Core 26:** ~10,000 ms

* **Core 27:** ~10,000 ms

* **Core 28:** ~10,000 ms

* **Core 29:** ~10,000 ms

* **Core 30:** ~10,000 ms

* **Core 31:** ~10,000 ms

* **Core 32:** ~12,000 ms (Note: an anomalous increase of about 2,000 ms over the preceding plateau)

* **Core 33:** ~12,000 ms

* **Core 34:** ~12,000 ms

* **Core 35:** ~12,000 ms

* **Core 36:** ~12,000 ms

* **Core 37:** ~12,000 ms

* **Core 38:** ~12,000 ms

* **Core 39:** ~12,000 ms

* **Core 40:** ~12,000 ms

* **Core 41:** ~12,000 ms

* **Core 42:** ~12,000 ms

* **Core 43:** ~12,000 ms

* **Core 44:** ~12,000 ms

* **Core 45:** ~12,000 ms

* **Core 46:** ~12,000 ms

* **Core 47:** ~12,000 ms

* **Core 48:** ~11,000 ms
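The readings above can be turned into speedup and parallel-efficiency figures. The following is a minimal sketch, assuming the approximate values listed here are close enough to the plotted bars to be useful; the names `TIMES_MS`, `speedup`, and `efficiency` are illustrative and do not come from the chart or its source.

```python
# Approximate times (ms) read off the chart for 1..48 cores.
# These are visual estimates from the bars, not measured values.
TIMES_MS = (
    [88_000, 88_000, 44_000, 43_000, 33_000, 31_000, 25_000, 24_000,
     21_000, 20_000, 18_000, 17_000, 16_000, 15_000, 14_000, 13_000,
     12_500, 12_000, 11_500, 11_000, 11_000, 10_500, 10_500]  # 1-23 cores
    + [10_000] * 8    # 24-31 cores
    + [12_000] * 16   # 32-47 cores
    + [11_000]        # 48 cores
)


def speedup(cores: int) -> float:
    """Speedup relative to the single-core time."""
    return TIMES_MS[0] / TIMES_MS[cores - 1]


def efficiency(cores: int) -> float:
    """Speedup divided by core count (1.0 would be ideal linear scaling)."""
    return speedup(cores) / cores


for n in (3, 8, 16, 24, 32, 48):
    print(f"{n:2d} cores: speedup {speedup(n):5.2f}, efficiency {efficiency(n):.2f}")
```

On these estimates, speedup peaks at about 8.8 in the 24-31 core range, while efficiency drops from about 0.67 at 3 cores to about 0.37 at 24 cores.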

### Key Observations

1. **Initial Steep Drop:** The most dramatic performance gain occurs when moving from 1-2 cores to 3-4 cores, where time is roughly halved.

2. **Diminishing Returns:** The rate of improvement slows significantly after approximately 16 cores. Going from 16 to 24 cores reduces the time by only about 3,000 ms (from ~13,000 ms to ~10,000 ms), so each additional core yields a progressively smaller reduction in execution time.

3. **Performance Plateau:** From approximately 24 to 31 cores, the execution time stabilizes around 10,000 ms.

4. **Anomalous Increase:** Execution time jumps at 32 cores, from ~10,000 ms to ~12,000 ms (about 20%), and then stays at that level through 47 cores. This breaks the previous plateau and suggests a change in system behavior, resource contention, or a measurement artifact at that specific core count.

5. **Final Value:** At the highest plotted core count (48), the time is approximately 11,000 ms, slightly lower than the anomalous plateau but still higher than the minimum observed at 24-31 cores.
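The observations above can be checked mechanically against the approximate readings. This is a sketch under the assumption that the values listed in the Detailed Analysis section are representative; `TIMES_MS`, `deltas`, and the other names are hypothetical helpers, not part of the original chart.

```python
# Approximate times (ms) read off the chart for 1..48 cores (visual estimates).
TIMES_MS = (
    [88_000, 88_000, 44_000, 43_000, 33_000, 31_000, 25_000, 24_000,
     21_000, 20_000, 18_000, 17_000, 16_000, 15_000, 14_000, 13_000,
     12_500, 12_000, 11_500, 11_000, 11_000, 10_500, 10_500]  # 1-23 cores
    + [10_000] * 8    # 24-31 cores
    + [12_000] * 16   # 32-47 cores
    + [11_000]        # 48 cores
)

# Change in time (ms) when going from n-1 to n cores, keyed by n.
deltas = {n: TIMES_MS[n - 1] - TIMES_MS[n - 2] for n in range(2, len(TIMES_MS) + 1)}

largest_drop = min(deltas, key=deltas.get)   # core count with the biggest improvement
largest_rise = max(deltas, key=deltas.get)   # core count with the biggest regression
# min() returns the first minimum, i.e. the smallest core count reaching the lowest time.
fastest = min(range(1, len(TIMES_MS) + 1), key=lambda n: TIMES_MS[n - 1])

print(f"largest drop at {largest_drop} cores ({deltas[largest_drop]} ms)")
print(f"largest rise at {largest_rise} cores (+{deltas[largest_rise]} ms)")
print(f"lowest time first reached at {fastest} cores ({TIMES_MS[fastest - 1]} ms)")
```

With these estimates, the only step-to-step increase in the whole series is the one at 32 cores.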

### Interpretation

This chart visualizes the **parallel scaling** of a specific computational task ("Divider"). The data suggests the task parallelizes well at low core counts, where adding cores dramatically reduces runtime. The flattening that follows is consistent with **Amdahl's Law** (a fixed serial portion limits the achievable speedup) and with **parallel overhead** such as communication and synchronization, although the chart alone cannot distinguish between these causes.

* **The steep initial decline** indicates that a large portion of the task can be effectively distributed across multiple cores.

* **The flattening curve** reveals that beyond a certain point (~16-24 cores), the overhead of managing parallel execution or the remaining serial portion of the code dominates, making additional cores less effective.

* **The anomalous increase at 32 cores** is a critical point for investigation. It could indicate a hardware boundary (e.g., crossing a NUMA node boundary), a software scheduling issue, or a resource conflict (e.g., memory bandwidth saturation) that introduces new inefficiencies at that specific scale. Because it is the only place where the otherwise non-increasing trend reverses, it is a natural focus for performance optimization.

* **The overall takeaway** is that for this "Divider" task, simply maximizing core count is not optimal. The lowest execution times occur at around 24-31 cores in this test; beyond that, runtime stagnates or rises slightly, possibly due to system-level constraints. This range minimizes runtime rather than maximizing per-core efficiency, since a roughly 8.8x speedup on 24 cores is far from linear scaling. The chart provides empirical data to guide resource allocation for this specific workload.
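To make the Amdahl's Law reading concrete, the sketch below inverts the formula S(n) = 1 / ((1 - p) + p / n) to estimate a parallel fraction p from a single reading, then prints the times the model would predict at other core counts. Calibrating on the 24-core reading is an arbitrary assumption made for illustration, the input times are visual estimates from the chart, and the function names are hypothetical.

```python
T1_MS = 88_000    # approximate single-core time read off the chart
T24_MS = 10_000   # approximate 24-core time read off the chart


def amdahl_speedup(p: float, cores: int) -> float:
    """Amdahl's Law: speedup on `cores` cores for parallel fraction p."""
    return 1.0 / ((1.0 - p) + p / cores)


def parallel_fraction(observed_speedup: float, cores: int) -> float:
    """Invert Amdahl's Law for p, given one observed speedup at `cores` > 1."""
    return (1.0 - 1.0 / observed_speedup) / (1.0 - 1.0 / cores)


# Assumption: calibrate the model on the 24-core reading only.
p = parallel_fraction(T1_MS / T24_MS, 24)
print(f"estimated parallel fraction: {p:.3f}")

for n in (3, 8, 16, 32, 48):
    predicted_ms = T1_MS / amdahl_speedup(p, n)
    print(f"{n:2d} cores: model predicts about {predicted_ms:,.0f} ms")
```

Because Amdahl speedup never decreases as cores are added, this model cannot reproduce the rise at 32 cores; explaining that step would require an additional overhead term or a hardware-level cause.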