
## Line Chart: Inference Accuracy vs. Epoch

### Overview

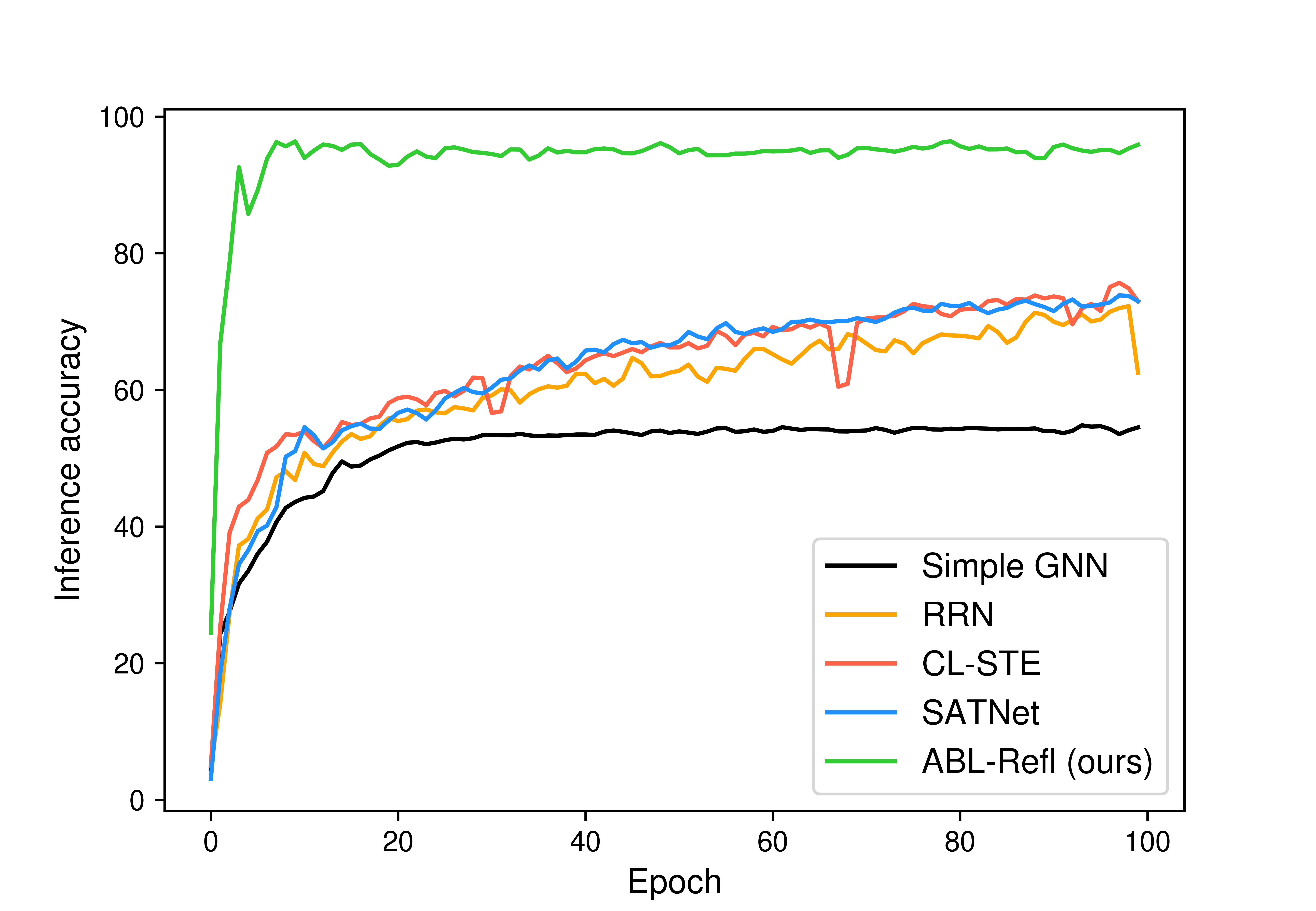

This line chart plots the inference accuracy of five models (Simple GNN, RRN, CL-STE, SATNet, and ABL-Refl) against training epoch. The x-axis runs from epoch 0 to 100, and the y-axis runs from 0 to 100.

### Components/Axes

* **X-axis:** Labeled "Epoch", ranging from 0 to 100.

* **Y-axis:** Labeled "Inference accuracy", ranging from 0 to 100.

* **Legend:** Located in the top-right corner of the chart. It maps each line color to a model name:

    * Black: Simple GNN

    * Orange: RRN

    * Red: CL-STE

    * Blue: SATNet

    * Green: ABL-Refl (ours)

### Detailed Analysis

Here's a breakdown of each model's performance, with approximate values:

* **Simple GNN (Black):** Starts at approximately 5 at epoch 0. The line slopes upward and plateaus at around 58 from roughly epoch 20 through epoch 100.

* **RRN (Orange):** Starts at approximately 10 at epoch 0. The line rises rapidly to around 65 by epoch 10, then fluctuates between 60 and 75 for the remaining epochs, drifting slightly downward toward epoch 100 and ending around 62.

* **CL-STE (Red):** Starts at approximately 5 at epoch 0. The line rises steadily to around 68 by epoch 10, then fluctuates between 65 and 75, with a slight dip around epoch 80, and ends around 70.

* **SATNet (Blue):** Starts at approximately 10 at epoch 0. The line rises rapidly to around 70 by epoch 10, then fluctuates between 68 and 75, with a slight dip around epoch 80, and ends around 72.

* **ABL-Refl (Green):** Starts at approximately 95 at epoch 0. The line dips slightly to around 92 by epoch 5, then stays between 90 and 98 for the remaining epochs, ending around 96.
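The approximate readings above can be collected into a small table to compare the models at the final epoch. The following is a minimal Python sketch, not the authors' code: the numbers are the eyeballed start and end values from the bullets, and the helper names `rank_by_final` and `gap_to_best_baseline` are hypothetical.

```python
# Approximate (epoch-0, final) readings transcribed from the bullets above.
# They are eyeballed from the chart description, not exact measurements.
APPROX_READINGS = {
    "Simple GNN": (5, 58),
    "RRN": (10, 62),
    "CL-STE": (5, 70),
    "SATNet": (10, 72),
    "ABL-Refl": (95, 96),
}


def rank_by_final(readings):
    """Return model names sorted from highest to lowest final accuracy."""
    return sorted(readings, key=lambda name: readings[name][1], reverse=True)


def gap_to_best_baseline(readings, ours="ABL-Refl"):
    """Final-epoch difference between `ours` and the best other model."""
    best_other = max(
        final for name, (_, final) in readings.items() if name != ours
    )
    return readings[ours][1] - best_other


if __name__ == "__main__":
    for name in rank_by_final(APPROX_READINGS):
        start, final = APPROX_READINGS[name]
        print(f"{name:<10} start~{start:>3}  final~{final:>3}  change~{final - start:+d}")
    print("gap to best baseline:", gap_to_best_baseline(APPROX_READINGS))
```

With these readings, ABL-Refl ends roughly 24 points above the best baseline (SATNet), but the figure is only as precise as the visual estimates it is built from.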

### Key Observations

* ABL-Refl has the highest inference accuracy at every epoch, staying at about 90 or above while no other model rises above roughly 75.

* Simple GNN exhibits the lowest and most stable accuracy, plateauing early in training.

* RRN, CL-STE, and SATNet show similar performance, with fluctuating accuracy between 60 and 75.

* CL-STE and SATNet both show a slight dip in accuracy around epoch 80, while RRN instead drifts slightly downward toward epoch 100.

* The initial rapid increase in accuracy for RRN, CL-STE, and SATNet suggests fast learning in the early stages of training.
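If per-epoch accuracy logs were available, a dip such as the one around epoch 80 could be checked numerically rather than by eye. The sketch below is one simple way to do that, assuming accuracy is stored as a list indexed by epoch. The function name `find_dips`, the `window` and `min_drop` defaults, and the synthetic series are all illustrative assumptions and are not taken from the chart.

```python
def find_dips(accuracy, window=5, min_drop=3.0):
    """Return epochs where accuracy falls at least `min_drop` below the
    mean of the preceding `window` epochs.

    `accuracy` is a list indexed by epoch. `window` and `min_drop` are
    illustrative defaults, not values derived from the chart.
    """
    dips = []
    for epoch in range(window, len(accuracy)):
        baseline = sum(accuracy[epoch - window:epoch]) / window
        if baseline - accuracy[epoch] >= min_drop:
            dips.append(epoch)
    return dips


# Made-up series for illustration only: flat at 70 with a drop at epochs 8 and 9.
synthetic = [70.0] * 8 + [64.0, 65.0] + [70.0] * 5

if __name__ == "__main__":
    print(find_dips(synthetic))
```

Comparing each epoch with a trailing mean keeps the check robust to the ordinary epoch-to-epoch fluctuation described above. A larger `window` or `min_drop` makes it stricter.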

### Interpretation

The chart suggests that ABL-Refl reaches substantially higher inference accuracy than the other four models: it stays at about 90 or above from epoch 0 onward, while no baseline rises above roughly 75. The chart alone does not show whether this comes from its architecture, its training methodology, or a combination of the two.

Simple GNN's early plateau at around 58 may indicate a less complex model or one that needs further optimization. The fluctuations in RRN, CL-STE, and SATNet could stem from overfitting, learning-rate adjustments, or inherent variability in the training data. The dip around epoch 80 in CL-STE and SATNet warrants further investigation to identify its cause.

Overall, the spread between the curves shows how strongly model selection and training strategy affect inference accuracy on this task. The "(ours)" label on ABL-Refl indicates that it is the authors' own model, so the figure is presumably intended to highlight its advantage over the baselines.