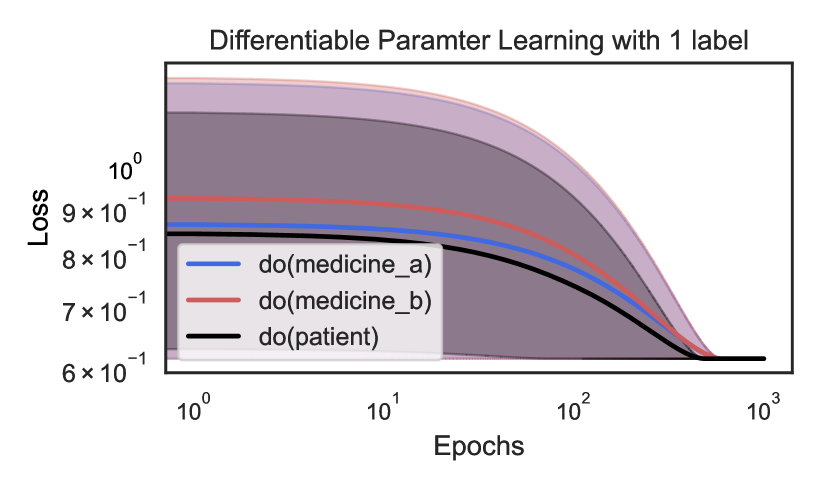

## Line Chart: Differentiable Parameter Learning with 1 label

### Overview

This is a log-log line chart illustrating the reduction in model loss over training epochs for three distinct intervention groups, each with a shaded band representing uncertainty/confidence intervals. The chart tracks learning performance across a logarithmic scale of epochs, showing convergence of all groups to a similar final loss value.

### Components/Axes

- **Title**: "Differentiable Parameter Learning with 1 label" (centered at the top of the chart)

- **Y-axis**: Labeled "Loss", using a logarithmic scale. Axis markers (bottom to top): `6×10⁻¹`, `7×10⁻¹`, `8×10⁻¹`, `9×10⁻¹`, `10⁰`

- **X-axis**: Labeled "Epochs", using a logarithmic scale. Axis markers (left to right): `10⁰`, `10¹`, `10²`, `10³`

- **Legend (bottom-left, overlapping the chart area)**:

  - Blue line: `do(medicine_a)`

  - Red line: `do(medicine_b)`

  - Black line: `do(patient)`

- **Shaded Uncertainty Bands**: Each line has a corresponding shaded region (light purple for `do(medicine_a)`, darker purple for `do(medicine_b)`, dark gray for `do(patient)`) indicating variability/confidence in the loss measurement.
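Because both axes are logarithmic, the tick labels are not evenly spaced on the page. The sketch below maps the tick values listed above to their positions in decades (base-10 logarithms). It uses only the tick values from the description; the helper names `log_positions` and `decade_span` are illustrative, not part of any plotting library.

```python
import math

# Tick values exactly as listed in the axis description above.
Y_TICKS = [0.6, 0.7, 0.8, 0.9, 1.0]  # Loss
X_TICKS = [1, 10, 100, 1000]         # Epochs


def log_positions(ticks: list[float]) -> list[float]:
    """Position of each tick on a base-10 log axis, in decades (rounded)."""
    return [round(math.log10(t), 3) for t in ticks]


def decade_span(ticks: list[float]) -> float:
    """Number of decades between the smallest and largest tick."""
    return math.log10(max(ticks)) - math.log10(min(ticks))


if __name__ == "__main__":
    print("y positions:", log_positions(Y_TICKS))
    print("x positions:", log_positions(X_TICKS))
    print(f"y span: {decade_span(Y_TICKS):.3f} decades")
    print(f"x span: {decade_span(X_TICKS):.3f} decades")
```

The y-axis covers only about 0.22 of a decade, while the x-axis covers three, and on the y-axis the gap from `6×10⁻¹` to `7×10⁻¹` is wider than the gap from `9×10⁻¹` to `10⁰`.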

### Detailed Analysis

1. **Trend Verification**:

   - All three lines trend consistently downward as epochs increase, meaning loss decreases with more training, with the steepest drop occurring between `10²` and `10³` epochs.

   - `do(patient)` (black line): Starts at ~8.5×10⁻¹ at `10⁰` epochs, decreases gradually, then steeply after `10²` epochs, converging to ~6×10⁻¹ at `10³` epochs. Its shaded band is the narrowest, indicating the lowest uncertainty.

   - `do(medicine_a)` (blue line): Starts at ~8.8×10⁻¹ at `10⁰` epochs and follows a downward curve parallel to that of `do(patient)`, converging to ~6×10⁻¹ at `10³` epochs. Its shaded band is wider than that of `do(patient)` but narrower than that of `do(medicine_b)`.

   - `do(medicine_b)` (red line): Starts at ~9.2×10⁻¹ at `10⁰` epochs (the highest initial loss), decreases along a similar curve, and converges to ~6×10⁻¹ at `10³` epochs. Its shaded band is the widest, indicating the highest uncertainty.
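Since all three curves end near the same value, the relative drop in loss depends only on where each one starts. The sketch below computes that fraction from the approximate start and end readings given above. The numbers are visual estimates taken from the chart description, not logged training values, and the function names are hypothetical.

```python
# Approximate (loss at 10^0 epochs, loss at 10^3 epochs) read off the chart.
# These are visual estimates from the description, not recorded measurements.
READINGS = {
    "do(patient)": (0.85, 0.60),
    "do(medicine_a)": (0.88, 0.60),
    "do(medicine_b)": (0.92, 0.60),
}


def relative_reduction(start: float, end: float) -> float:
    """Fraction of the initial loss removed between the first and last epoch."""
    return (start - end) / start


def summarize(readings: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Relative reduction per group, rounded to three decimals."""
    return {
        name: round(relative_reduction(start, end), 3)
        for name, (start, end) in readings.items()
    }


if __name__ == "__main__":
    for name, frac in summarize(READINGS).items():
        print(f"{name}: loss about {frac:.1%} lower at 10^3 epochs than at 10^0")
```

Under these readings, `do(medicine_b)` sheds about 35% of its initial loss and `do(patient)` about 29%. Because the curves share an endpoint, the group that starts highest has to cover the largest relative drop.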

### Key Observations

- All three intervention groups converge to approximately the same final loss (~6×10⁻¹) at `10³` epochs.

- Initial loss is highest for `do(medicine_b)`, followed by `do(medicine_a)`, then `do(patient)`.

- Uncertainty (shaded band width) is highest for `do(medicine_b)` and lowest for `do(patient)`.

- The loss curves have a similar shape across all groups, with the most rapid improvement occurring in the later training stages (`10²` to `10³` epochs).

### Interpretation

This chart demonstrates that differentiable parameter learning with a single label reduces loss for all three intervention groups, which converge to approximately the same loss (~6×10⁻¹) after sufficient training. The `do(patient)` group follows the most stable, predictable trajectory (lowest initial loss and narrowest uncertainty band) and holds a loss advantage in the early epochs. `do(medicine_b)` has the highest initial loss and the widest band, indicating more variable early learning, yet it reaches the same final loss as the other groups. In short, initial loss and stability differ by intervention, but given enough training epochs the model fits all three interventions to a comparable loss level.