TECHNICAL ASSET FINGERPRINT

2b5efd4918894717c40f4a71

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

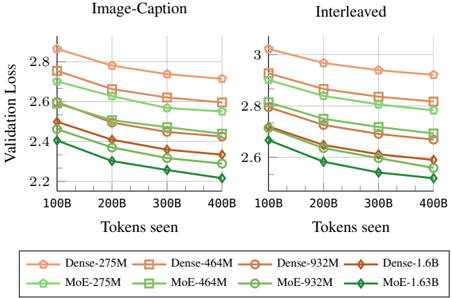

## Chart: Validation Loss vs. Tokens Seen

### Overview

The image presents two line charts comparing the validation loss of different model architectures (Dense and MoE) with varying parameter sizes (275M, 464M, 932M, 1.6B) as a function of the number of tokens seen during training. The left chart is labeled "Image-Caption" and the right chart is labeled "Interleaved". Both charts share the same x and y axes.

### Components/Axes

* **Title (Left Chart):** Image-Caption

* **Title (Right Chart):** Interleaved

* **Y-axis Label:** Validation Loss

  * Scale: 2.2 to 3.0, with tick marks at 2.2, 2.4, 2.6, 2.8, and 3.0.

* **X-axis Label:** Tokens seen

  * Scale: 100B to 400B, with tick marks at 100B, 200B, 300B, and 400B.

* **Legend (Bottom):**

  * Dense-275M (light brown, circle marker)

  * Dense-464M (light brown, square marker)

  * Dense-932M (light brown, no marker)

  * Dense-1.6B (brown, diamond marker)

  * MoE-275M (light green, circle marker)

  * MoE-464M (light green, square marker)

  * MoE-932M (light green, no marker)

  * MoE-1.63B (dark green, diamond marker)

### Detailed Analysis

**Image-Caption Chart (Left)**

* **Dense-275M (light brown, circle):** Starts at approximately 2.85 at 100B tokens and decreases to approximately 2.72 at 400B tokens.

* **Dense-464M (light brown, square):** Starts at approximately 2.78 at 100B tokens and decreases to approximately 2.70 at 400B tokens.

* **Dense-932M (light brown, no marker):** Starts at approximately 2.65 at 100B tokens and decreases to approximately 2.58 at 400B tokens.

* **Dense-1.6B (brown, diamond):** Starts at approximately 2.52 at 100B tokens and decreases to approximately 2.42 at 400B tokens.

* **MoE-275M (light green, circle):** Starts at approximately 2.72 at 100B tokens and decreases to approximately 2.60 at 400B tokens.

* **MoE-464M (light green, square):** Starts at approximately 2.65 at 100B tokens and decreases to approximately 2.52 at 400B tokens.

* **MoE-932M (light green, no marker):** Starts at approximately 2.50 at 100B tokens and decreases to approximately 2.38 at 400B tokens.

* **MoE-1.63B (dark green, diamond):** Starts at approximately 2.40 at 100B tokens and decreases to approximately 2.20 at 400B tokens.

**Interleaved Chart (Right)**

* **Dense-275M (light brown, circle):** Starts at approximately 3.00 at 100B tokens and decreases to approximately 2.80 at 400B tokens.

* **Dense-464M (light brown, square):** Starts at approximately 2.88 at 100B tokens and decreases to approximately 2.75 at 400B tokens.

* **Dense-932M (light brown, no marker):** Starts at approximately 2.75 at 100B tokens and decreases to approximately 2.65 at 400B tokens.

* **Dense-1.6B (brown, diamond):** Starts at approximately 2.68 at 100B tokens and decreases to approximately 2.55 at 400B tokens.

* **MoE-275M (light green, circle):** Starts at approximately 2.80 at 100B tokens and decreases to approximately 2.70 at 400B tokens.

* **MoE-464M (light green, square):** Starts at approximately 2.72 at 100B tokens and decreases to approximately 2.60 at 400B tokens.

* **MoE-932M (light green, no marker):** Starts at approximately 2.60 at 100B tokens and decreases to approximately 2.48 at 400B tokens.

* **MoE-1.63B (dark green, diamond):** Starts at approximately 2.50 at 100B tokens and decreases to approximately 2.30 at 400B tokens.

### Key Observations

* In both charts, validation loss generally decreases as the number of tokens seen increases.

* For both Dense and MoE architectures, larger models (higher parameter counts) tend to have lower validation loss.

* The MoE-1.63B model consistently exhibits the lowest validation loss across both charts.

* The "Interleaved" chart generally shows higher validation loss values compared to the "Image-Caption" chart for all models.
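The observations above can be checked directly against the approximate values listed in the Detailed Analysis. The following is a minimal sketch, not part of the original analysis: the dictionaries transcribe the estimated start (100B) and end (400B) losses, and the helper names are hypothetical.

```python
# Approximate (loss at 100B, loss at 400B) pairs, transcribed from the
# Detailed Analysis above. These are visual estimates, not exact data.
image_caption = {
    "Dense-275M": (2.85, 2.72),
    "Dense-464M": (2.78, 2.70),
    "Dense-932M": (2.65, 2.58),
    "Dense-1.6B": (2.52, 2.42),
    "MoE-275M": (2.72, 2.60),
    "MoE-464M": (2.65, 2.52),
    "MoE-932M": (2.50, 2.38),
    "MoE-1.63B": (2.40, 2.20),
}
interleaved = {
    "Dense-275M": (3.00, 2.80),
    "Dense-464M": (2.88, 2.75),
    "Dense-932M": (2.75, 2.65),
    "Dense-1.6B": (2.68, 2.55),
    "MoE-275M": (2.80, 2.70),
    "MoE-464M": (2.72, 2.60),
    "MoE-932M": (2.60, 2.48),
    "MoE-1.63B": (2.50, 2.30),
}


def loss_decreases(chart):
    """True if every model ends with a lower loss than it starts with."""
    return all(end < start for start, end in chart.values())


def lowest_final(chart):
    """Name of the model with the lowest estimated loss at 400B tokens."""
    return min(chart, key=lambda name: chart[name][1])


def interleaved_is_higher(ic, il):
    """True if every model has higher loss on Interleaved at both endpoints."""
    return all(il[m][0] > ic[m][0] and il[m][1] > ic[m][1] for m in ic)


print(loss_decreases(image_caption), loss_decreases(interleaved))
print(lowest_final(image_caption), lowest_final(interleaved))
print(interleaved_is_higher(image_caption, interleaved))
```

Because only the two endpoints are estimated, this checks the direction of each trend but says nothing about the shape of the curves in between.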

### Interpretation

The charts suggest that increasing the number of tokens seen during training lowers validation loss for all models. Larger models, particularly MoE-1.63B, reach lower validation loss, indicating better performance. The gap between the "Image-Caption" and "Interleaved" charts suggests that the data distribution or task setup in the "Interleaved" scenario is more challenging, leading to higher loss values. The MoE models generally outperform the Dense models of the same size, which suggests that the Mixture of Experts architecture is more effective for this task.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

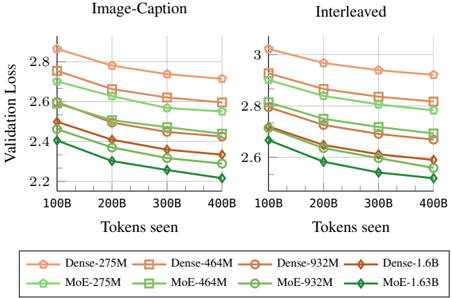

## Chart: Validation Loss vs. Tokens Seen

### Overview

The image presents two line charts comparing validation loss against the number of tokens seen during training. The charts are titled "Image-Caption" and "Interleaved", suggesting different training methodologies. Each chart displays multiple lines, each representing a different model configuration. The x-axis represents "Tokens seen" and the y-axis represents "Validation Loss".

### Components/Axes

* **X-axis:** "Tokens seen" with markers at 100B, 200B, 300B, and 400B. (B = Billion)

* **Y-axis:** "Validation Loss" with a scale ranging from approximately 2.2 to 3.0.

* **Legend:** Located at the bottom of the image, containing the following model configurations:

  * Dense-275M (Orange) - represented by a solid orange line with circle markers.

  * Dense-464M (Light Orange) - represented by a dashed light orange line with square markers.

  * Dense-932M (Brown) - represented by a solid brown line with circle markers.

  * Dense-1.6B (Dark Orange) - represented by a solid dark orange line with diamond markers.

  * MoE-275M (Green) - represented by a solid green line with circle markers.

  * MoE-464M (Light Green) - represented by a dashed light green line with square markers.

  * MoE-932M (Dark Green) - represented by a solid dark green line with circle markers.

  * MoE-1.63B (Bright Green) - represented by a solid bright green line with diamond markers.

### Detailed Analysis

**Image-Caption Chart:**

* **Dense-275M:** Starts at approximately 2.9, decreases to around 2.55 by 400B tokens.

* **Dense-464M:** Starts at approximately 2.8, decreases to around 2.5 by 400B tokens.

* **Dense-932M:** Starts at approximately 2.75, decreases to around 2.45 by 400B tokens.

* **Dense-1.6B:** Starts at approximately 2.85, decreases to around 2.5 by 400B tokens.

* **MoE-275M:** Starts at approximately 2.8, decreases to around 2.4 by 400B tokens.

* **MoE-464M:** Starts at approximately 2.7, decreases to around 2.35 by 400B tokens.

* **MoE-932M:** Starts at approximately 2.65, decreases to around 2.3 by 400B tokens.

* **MoE-1.63B:** Starts at approximately 2.6, decreases to around 2.2 by 400B tokens.

**Interleaved Chart:**

* **Dense-275M:** Starts at approximately 2.95, decreases to around 2.75 by 400B tokens.

* **Dense-464M:** Starts at approximately 2.9, decreases to around 2.7 by 400B tokens.

* **Dense-932M:** Starts at approximately 2.85, decreases to around 2.65 by 400B tokens.

* **Dense-1.6B:** Starts at approximately 2.9, decreases to around 2.7 by 400B tokens.

* **MoE-275M:** Starts at approximately 2.85, decreases to around 2.55 by 400B tokens.

* **MoE-464M:** Starts at approximately 2.8, decreases to around 2.5 by 400B tokens.

* **MoE-932M:** Starts at approximately 2.75, decreases to around 2.45 by 400B tokens.

* **MoE-1.63B:** Starts at approximately 2.7, decreases to around 2.35 by 400B tokens.

### Key Observations

* In both charts, all models exhibit a decreasing trend in validation loss as the number of tokens seen increases, indicating learning and improvement.

* The MoE models consistently demonstrate lower validation loss compared to their Dense counterparts across all token counts.

* Larger models (higher parameter counts) generally achieve lower validation loss within each architecture type (Dense or MoE).

* The rate of loss reduction appears to slow down as the number of tokens seen increases, suggesting diminishing returns from further training.

* The "Interleaved" chart generally shows higher validation loss values than the "Image-Caption" chart for the same models and token counts.
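The size-ordering observation can be tested against the approximate values listed in the Detailed Analysis of this description. The sketch below is illustrative only: the lists transcribe the estimated (start, end) losses in order of model size, and `size_order_violations` is a hypothetical helper name.

```python
# Model sizes from smallest to largest. The largest MoE model is labeled
# MoE-1.63B in the legend; it is grouped under "1.6B" here for comparison.
SIZES = ["275M", "464M", "932M", "1.6B"]

# (loss at start, loss at 400B) per size, transcribed from the estimates above.
image_caption = {
    "Dense": [(2.9, 2.55), (2.8, 2.5), (2.75, 2.45), (2.85, 2.5)],
    "MoE": [(2.8, 2.4), (2.7, 2.35), (2.65, 2.3), (2.6, 2.2)],
}
interleaved = {
    "Dense": [(2.95, 2.75), (2.9, 2.7), (2.85, 2.65), (2.9, 2.7)],
    "MoE": [(2.85, 2.55), (2.8, 2.5), (2.75, 2.45), (2.7, 2.35)],
}


def size_order_violations(chart):
    """List (architecture, smaller, larger) triples where the larger
    model's final loss is not lower than the smaller model's."""
    violations = []
    for arch, values in chart.items():
        for i in range(len(values) - 1):
            if values[i + 1][1] >= values[i][1]:
                violations.append((arch, SIZES[i], SIZES[i + 1]))
    return violations


print(size_order_violations(image_caption))
print(size_order_violations(interleaved))
```

With these estimates, the Dense-1.6B values sit above the Dense-932M values in both charts, which is why the observation holds only "generally". Whether that reflects the chart itself or a reading error in the estimates cannot be determined from the text alone.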

### Interpretation

The charts compare the performance of Dense and Mixture-of-Experts (MoE) models with varying sizes (275M to 1.6B parameters) during training on two different datasets or training strategies ("Image-Caption" and "Interleaved"). The validation loss metric indicates how well the models generalize to unseen data.

The consistent outperformance of MoE models suggests that the Mixture-of-Experts architecture is more effective at capturing the complexity of the data and achieving better generalization. The larger models within each architecture type also perform better, indicating that increasing model capacity can lead to improved performance, up to a point.

The higher validation loss in the "Interleaved" chart suggests that the interleaved training strategy may be more challenging or require more data to achieve comparable performance to the "Image-Caption" strategy. This could be due to the nature of the interleaved data or the training process itself.

The diminishing returns observed at higher token counts suggest that further training may not significantly improve performance and could potentially lead to overfitting. The charts provide valuable insights into the trade-offs between model architecture, size, training strategy, and performance.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Line Charts: Validation Loss vs. Training Tokens for Dense and MoE Models

### Overview

The image displays two side-by-side line charts comparing the validation loss of different model architectures (Dense and Mixture-of-Experts, MoE) across varying model sizes, as a function of the number of training tokens seen. The left chart is titled "Image-Caption" and the right chart is titled "Interleaved". Both charts show a consistent downward trend in validation loss as training progresses (tokens seen increase).

### Components/Axes

* **Chart Titles:**

  * Left Chart: "Image-Caption"

  * Right Chart: "Interleaved"

* **X-Axis (Both Charts):**

  * Label: "Tokens seen"

  * Scale: Linear, with major tick marks at 100B, 200B, 300B, and 400B (B = Billion).

* **Y-Axis (Both Charts):**

  * Label: "Validation Loss"

  * Scale: Linear. The range differs between charts:

    * "Image-Caption" chart: Approximately 2.2 to 2.9.

    * "Interleaved" chart: Approximately 2.5 to 3.1.

* **Legend (Bottom Center, spanning both charts):**

  * The legend defines eight data series, differentiated by color (orange for Dense, green for MoE) and marker shape.

  * **Dense Models (Orange Lines):**

    1. `Dense-275M` - Orange line with circle markers.

    2. `Dense-464M` - Orange line with square markers.

    3. `Dense-932M` - Orange line with pentagon (house-shaped) markers.

    4. `Dense-1.6B` - Orange line with diamond markers.

  * **MoE Models (Green Lines):**

    1. `MoE-275M` - Green line with circle markers.

    2. `MoE-464M` - Green line with square markers.

    3. `MoE-932M` - Green line with pentagon markers.

    4. `MoE-1.6B` - Green line with diamond markers.

### Detailed Analysis

**Trend Verification:** All eight data series in both charts exhibit a clear, monotonic downward trend. The validation loss decreases as the number of tokens seen increases from 100B to 400B.

**Data Point Extraction (Approximate Values):**

*Values are estimated from the chart grid. The first value is at 100B tokens, the last at 400B tokens.*

**Left Chart: "Image-Caption"**

1. **Dense-275M (Orange, Circle):** Starts ~2.88, ends ~2.72.

2. **Dense-464M (Orange, Square):** Starts ~2.78, ends ~2.60.

3. **Dense-932M (Orange, Pentagon):** Starts ~2.72, ends ~2.48.

4. **Dense-1.6B (Orange, Diamond):** Starts ~2.50, ends ~2.32.

5. **MoE-275M (Green, Circle):** Starts ~2.70, ends ~2.58.

6. **MoE-464M (Green, Square):** Starts ~2.60, ends ~2.45.

7. **MoE-932M (Green, Pentagon):** Starts ~2.50, ends ~2.35.

8. **MoE-1.6B (Green, Diamond):** Starts ~2.42, ends ~2.22.

**Right Chart: "Interleaved"**

1. **Dense-275M (Orange, Circle):** Starts ~3.02, ends ~2.92.

2. **Dense-464M (Orange, Square):** Starts ~2.92, ends ~2.80.

3. **Dense-932M (Orange, Pentagon):** Starts ~2.85, ends ~2.70.

4. **Dense-1.6B (Orange, Diamond):** Starts ~2.80, ends ~2.60.

5. **MoE-275M (Green, Circle):** Starts ~2.90, ends ~2.82.

6. **MoE-464M (Green, Square):** Starts ~2.82, ends ~2.72.

7. **MoE-932M (Green, Pentagon):** Starts ~2.75, ends ~2.62.

8. **MoE-1.6B (Green, Diamond):** Starts ~2.68, ends ~2.52.

**Spatial Grounding & Component Isolation:**

* The legend is positioned at the bottom, centered below both charts.

* Within each chart, the lines are layered. The `Dense-275M` (orange circle) line is consistently the highest (worst loss), while the `MoE-1.6B` (green diamond) line is consistently the lowest (best loss).

* For any given model size (e.g., 464M), the green MoE line is always positioned below its corresponding orange Dense line in both charts.

### Key Observations

1. **Consistent Scaling:** For both Dense and MoE architectures, larger model sizes (e.g., 1.6B) consistently achieve lower validation loss than smaller models (e.g., 275M) at every training point.

2. **MoE Advantage:** At every comparable model size and training step, the MoE (green) model outperforms the Dense (orange) model. This performance gap is visible in both the "Image-Caption" and "Interleaved" tasks.

3. **Task Difference:** The "Interleaved" task appears to be more challenging, as all models show higher absolute validation loss values compared to the "Image-Caption" task.

4. **Parallel Trends:** The lines for different model sizes within an architecture (Dense or MoE) are roughly parallel, suggesting similar learning dynamics and scaling laws across sizes.
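One rough way to probe the "parallel trends" observation is to compare how far each series falls between 100B and 400B tokens. The sketch below uses only the start and end estimates listed in the Detailed Analysis; the structure and the helper names `loss_drops` and `drop_spread` are assumptions made for illustration.

```python
# (loss at 100B, loss at 400B), transcribed from the estimates listed above.
CHARTS = {
    "Image-Caption": {
        "Dense": {"275M": (2.88, 2.72), "464M": (2.78, 2.60),
                  "932M": (2.72, 2.48), "1.6B": (2.50, 2.32)},
        "MoE": {"275M": (2.70, 2.58), "464M": (2.60, 2.45),
                "932M": (2.50, 2.35), "1.6B": (2.42, 2.22)},
    },
    "Interleaved": {
        "Dense": {"275M": (3.02, 2.92), "464M": (2.92, 2.80),
                  "932M": (2.85, 2.70), "1.6B": (2.80, 2.60)},
        "MoE": {"275M": (2.90, 2.82), "464M": (2.82, 2.72),
                "932M": (2.75, 2.62), "1.6B": (2.68, 2.52)},
    },
}


def loss_drops(series):
    """Total loss reduction from 100B to 400B tokens for each model size."""
    return {size: round(start - end, 2) for size, (start, end) in series.items()}


def drop_spread(series):
    """Difference between the largest and smallest drop within one architecture."""
    drops = loss_drops(series).values()
    return round(max(drops) - min(drops), 2)


for chart, archs in CHARTS.items():
    for arch, series in archs.items():
        print(chart, arch, loss_drops(series), "spread:", drop_spread(series))
```

Identical drops would indicate lines that are parallel between the endpoints. With these estimates the drops range from 0.08 to 0.24, so "roughly parallel" should be read loosely, and two points per series cannot show curvature in between.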

### Interpretation

The data demonstrates three key findings in neural network training:

1. **Architectural Efficiency:** The Mixture-of-Experts (MoE) architecture provides a consistent and significant efficiency gain over a standard Dense architecture of the same parameter count. This is evidenced by the lower validation loss for every MoE model compared to its Dense counterpart. The implication is that MoE models can achieve better performance with the same number of active parameters, or potentially similar performance with fewer computational resources.

2. **Predictable Scaling:** The parallel, downward-sloping lines confirm the principle of scaling laws in deep learning: increasing both model size and the amount of training data (tokens seen) leads to predictable improvements in model performance (lower loss). The consistent gap between model sizes suggests that performance scales smoothly with parameter count within each architecture.

3. **Task Sensitivity:** The higher loss values for the "Interleaved" task indicate it is a more complex or difficult objective than the "Image-Caption" task for these models. However, the relative benefits of scaling and the MoE architecture hold across both tasks, suggesting these are robust findings.

In summary, the charts provide strong visual evidence for the advantages of MoE architectures and the validity of scaling laws, showing that larger models trained on more data perform better, with MoE models offering a superior performance-to-parameter trade-off.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Chart: Validation Loss vs. Tokens Seen (Image-Caption and Interleaved Tasks)

### Overview

The image is a two-panel line chart comparing validation loss across model architectures (Dense and Mixture-of-Experts [MoE]) and sizes for two tasks: "Image-Caption" (left) and "Interleaved" (right). The x-axis represents tokens seen (100B to 400B), and the y-axis represents validation loss (2.2 to 3.0). Each model is represented by a colored line with markers, and trends are consistent across both tasks.

---

### Components/Axes

- **X-Axis (Horizontal)**: "Tokens seen" (100B, 200B, 300B, 400B).

- **Y-Axis (Vertical)**: "Validation Loss" (2.2 to 3.0).

- **Legend (Bottom)**:

  - **Dense Models**:

    - Dense-275M (orange circles)

    - Dense-464M (orange squares)

    - Dense-932M (orange diamonds)

    - Dense-1.6B (orange triangles)

  - **MoE Models**:

    - MoE-275M (green circles)

    - MoE-464M (green squares)

    - MoE-932M (green diamonds)

    - MoE-1.63B (green triangles)

- **Sections**:

  - Left: "Image-Caption" task.

  - Right: "Interleaved" task.

---

### Detailed Analysis

#### Image-Caption Task (Left)

- **Dense Models**:

  - **Dense-275M**: Starts at ~2.85 (100B tokens), decreases to ~2.65 (400B tokens).

  - **Dense-464M**: Starts at ~2.75, decreases to ~2.55.

  - **Dense-932M**: Starts at ~2.65, decreases to ~2.45.

  - **Dense-1.6B**: Starts at ~2.55, decreases to ~2.35.

- **MoE Models**:

  - **MoE-275M**: Starts at ~2.75, decreases to ~2.55.

  - **MoE-464M**: Starts at ~2.65, decreases to ~2.45.

  - **MoE-932M**: Starts at ~2.55, decreases to ~2.35.

  - **MoE-1.63B**: Starts at ~2.45, decreases to ~2.25.

#### Interleaved Task (Right)

- **Dense Models**:

  - **Dense-275M**: Starts at ~3.0, decreases to ~2.8.

  - **Dense-464M**: Starts at ~2.9, decreases to ~2.7.

  - **Dense-932M**: Starts at ~2.8, decreases to ~2.6.

  - **Dense-1.6B**: Starts at ~2.7, decreases to ~2.5.

- **MoE Models**:

  - **MoE-275M**: Starts at ~2.9, decreases to ~2.7.

  - **MoE-464M**: Starts at ~2.8, decreases to ~2.6.

  - **MoE-932M**: Starts at ~2.7, decreases to ~2.5.

  - **MoE-1.63B**: Starts at ~2.6, decreases to ~2.4.

---

### Key Observations

1. **Consistent Trends**: All models show decreasing validation loss as tokens increase, indicating improved performance with more data.

2. **MoE Superiority**: MoE models consistently outperform Dense models of the same size in both tasks. In the estimates above, the gap is about 0.10 at both 100B and 400B tokens.

3. **Task-Specific Performance**:

   - In "Image-Caption", MoE-1.63B achieves ~2.25 loss at 400B tokens.

   - In "Interleaved", MoE-1.63B achieves ~2.4 loss at 400B tokens.

4. **Scalability**: Larger models achieve lower loss within each architecture. In the estimates above, each step up in model size lowers the loss by about 0.10.
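The size of the MoE advantage can be computed from the approximate values in the Detailed Analysis of this description. This is a minimal sketch under the assumption that those start and end estimates are taken at face value; the `gaps` helper and the data layout are hypothetical.

```python
# Sizes from smallest to largest. The largest MoE model is labeled
# MoE-1.63B in the legend; it is paired with Dense-1.6B here.
SIZES = ["275M", "464M", "932M", "1.6B"]

# (loss at 100B, loss at 400B) per size, transcribed from the estimates above.
DENSE = {
    "Image-Caption": [(2.85, 2.65), (2.75, 2.55), (2.65, 2.45), (2.55, 2.35)],
    "Interleaved": [(3.0, 2.8), (2.9, 2.7), (2.8, 2.6), (2.7, 2.5)],
}
MOE = {
    "Image-Caption": [(2.75, 2.55), (2.65, 2.45), (2.55, 2.35), (2.45, 2.25)],
    "Interleaved": [(2.9, 2.7), (2.8, 2.6), (2.7, 2.5), (2.6, 2.4)],
}


def gaps(task):
    """Return {size: (gap at 100B, gap at 400B)}, where gap = Dense loss - MoE loss."""
    result = {}
    for size, (d_start, d_end), (m_start, m_end) in zip(SIZES, DENSE[task], MOE[task]):
        result[size] = (round(d_start - m_start, 2), round(d_end - m_end, 2))
    return result


for task in DENSE:
    print(task, gaps(task))
```

A positive gap means the MoE model has the lower loss. Comparing the two numbers for each size shows whether the estimated gap widens, narrows, or stays the same between 100B and 400B tokens.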

---

### Interpretation

The data suggests that **MoE architectures are more efficient** than Dense models for both tasks, maintaining lower validation loss throughout training. This is consistent with MoE's modular design (activating only a subset of the network for each input) offering better resource utilization. In the estimates above, the gap between Dense and MoE models stays roughly constant from 100B to 400B tokens, which implies that MoE's advantage persists as more data is seen. The higher loss of all models on the "Interleaved" task may reflect greater task complexity, but MoE still maintains a relative advantage. These findings are in line with reports of MoE's scalability in large language models.
