EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

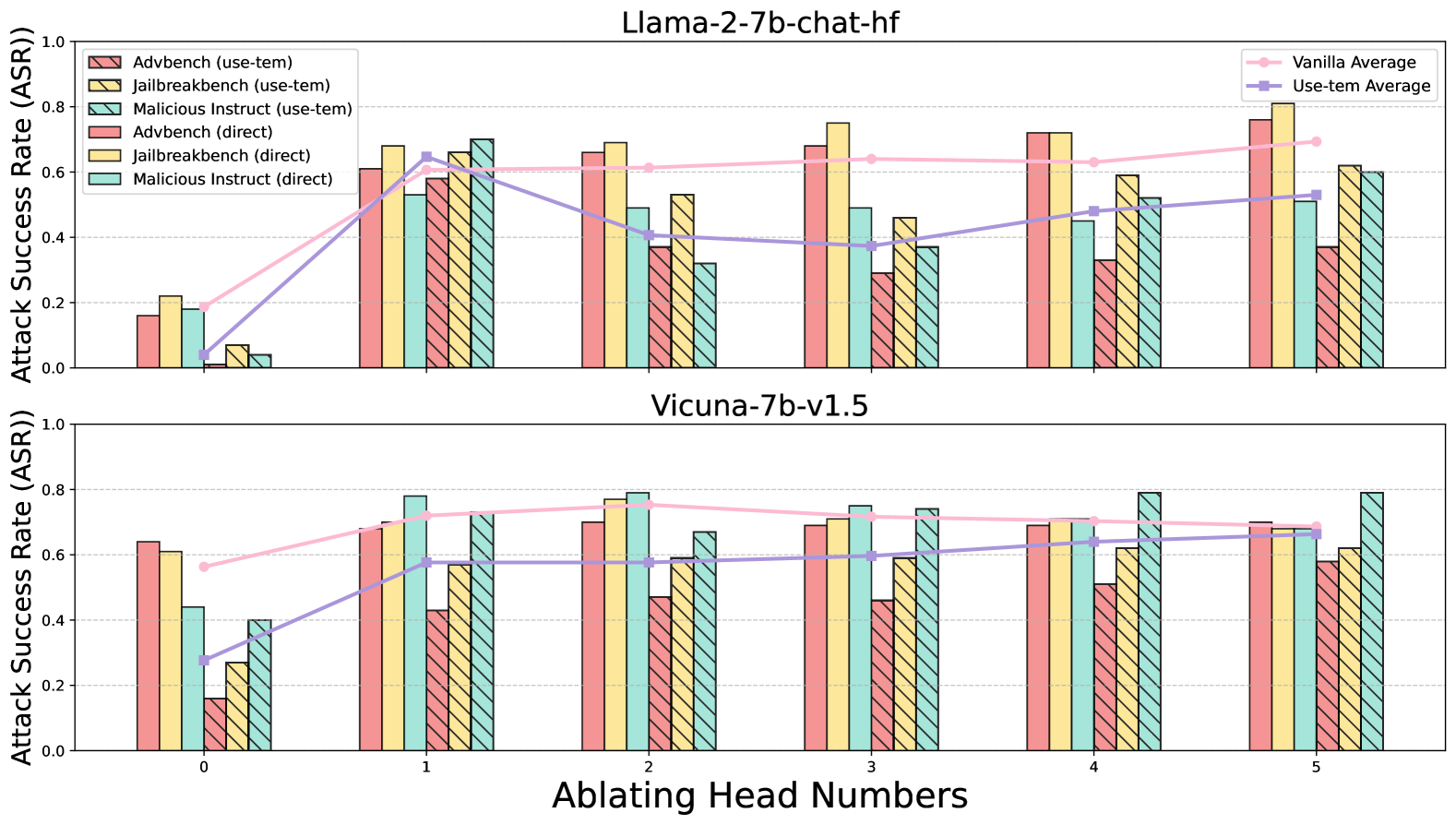

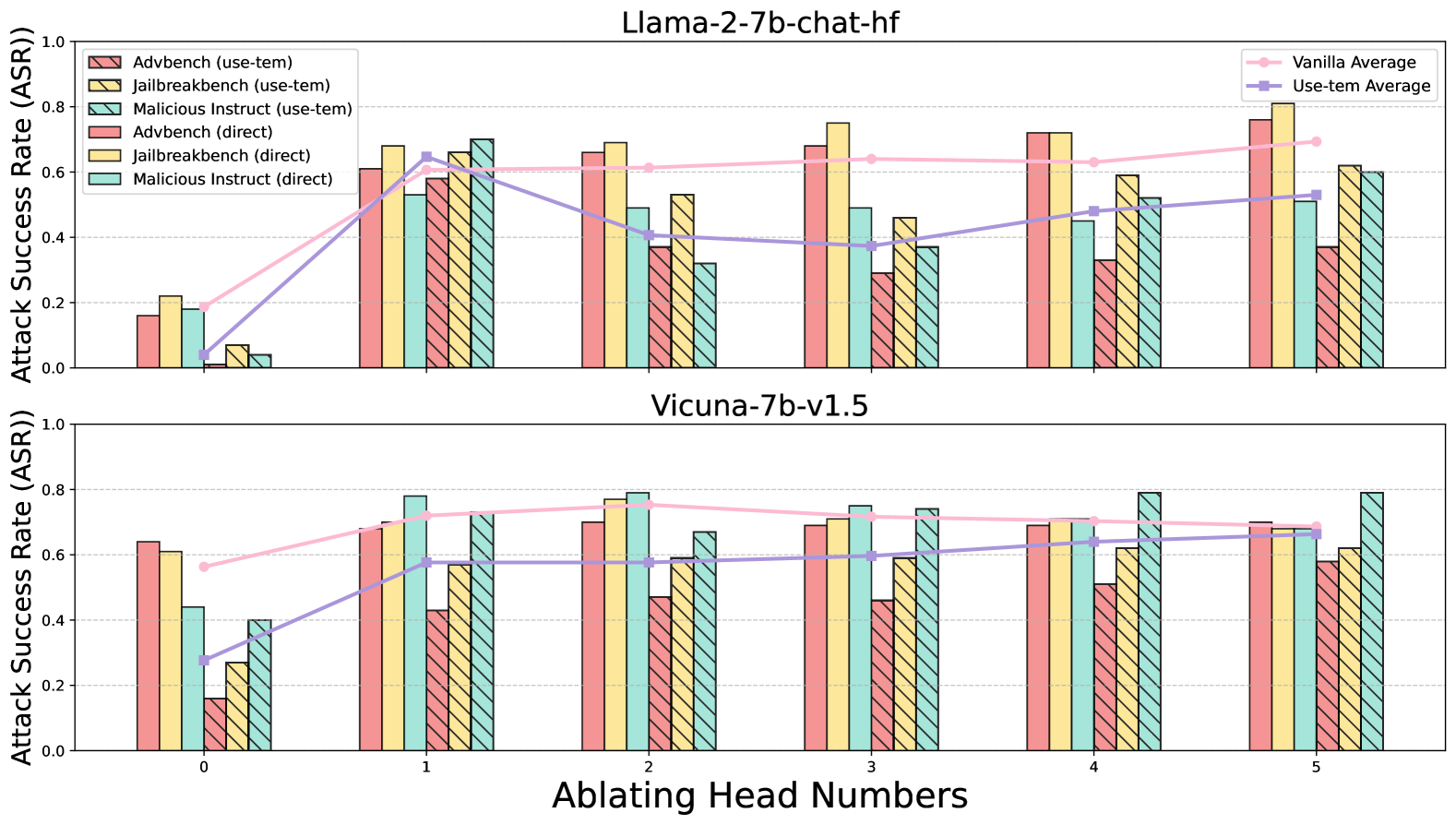

## Bar Chart: Attack Success Rate (ASR) vs. Ablating Head Numbers

### Overview

This image presents two bar charts showing the Attack Success Rate (ASR) on two language models, Llama-2-7b-chat-hf and Vicuna-7b-v1.5, as the number of ablated (removed) heads increases from 0 to 5. Each chart reports three benchmarks (Advbench, Jailbreakbench, and Malicious Instruct) under two prompting conditions labeled "use-tem" and "direct". Two lines, "Vanilla Average" and "Use-tem Average", are overlaid on the bars.

### Components/Axes

* **X-axis:** Ablating Head Numbers (0, 1, 2, 3, 4, 5)

* **Y-axis:** Attack Success Rate (ASR) - Scale from 0.0 to 1.0

* **Legend (Top-Right):**

    * Red: Advbench (use-tem)

    * Orange: Jailbreakbench (use-tem)

    * Light Red: Malicious Instruct (use-tem)

    * Yellow: Advbench (direct)

    * Green: Jailbreakbench (direct)

    * Light Green: Malicious Instruct (direct)

    * Pink: Vanilla Average (Line)

    * Blue: Use-tem Average (Line)

* **Titles:**

    * Top Chart: "Llama-2-7b-chat-hf"

    * Bottom Chart: "Vicuna-7b-v1.5"
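
The chart gives ASR on a 0.0 to 1.0 scale but does not say how a successful attack is judged. Assuming the usual definition, ASR is the fraction of harmful prompts whose response is judged to comply. The sketch below illustrates only that arithmetic; the function name and the example judgements are hypothetical, not taken from the source.

```python
def attack_success_rate(judgements):
    """Return the fraction of prompts judged as successful attacks.

    `judgements` is a sequence of booleans, one per prompt
    (True = the response was judged harmful/compliant).
    How that judgement is made is NOT specified by the chart.
    """
    if not judgements:
        raise ValueError("need at least one judged prompt")
    return sum(1 for judged in judgements if judged) / len(judgements)


# Hypothetical judgements for 8 prompts; 2 of them succeeded.
example_judgements = [True, False, False, True, False, False, False, False]
example_asr = attack_success_rate(example_judgements)
print(f"ASR = {example_asr:.2f}")
```

Each bar in the charts would correspond to one such value, computed for one benchmark, one prompting condition, and one ablation level.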

### Detailed Analysis

**Llama-2-7b-chat-hf Chart:**

* **Advbench (use-tem) - Red Bars:** About 0.15 with 0 heads ablated, peaking at around 0.55 with 2 heads ablated, then declining to about 0.45 with 5 heads ablated.

* **Jailbreakbench (use-tem) - Orange Bars:** About 0.10 with 0 heads ablated, peaking at around 0.60 with 1 head ablated, then declining to about 0.40 with 5 heads ablated.

* **Malicious Instruct (use-tem) - Light Red Bars:** About 0.10 with 0 heads ablated, peaking at around 0.50 with 2 heads ablated, then declining to about 0.40 with 5 heads ablated.

* **Advbench (direct) - Yellow Bars:** About 0.05 with 0 heads ablated, peaking at around 0.40 with 2 heads ablated, then declining to about 0.30 with 5 heads ablated.

* **Jailbreakbench (direct) - Green Bars:** About 0.10 with 0 heads ablated, peaking at around 0.50 with 1 head ablated, then declining to about 0.35 with 5 heads ablated.

* **Malicious Instruct (direct) - Light Green Bars:** About 0.05 with 0 heads ablated, peaking at around 0.40 with 2 heads ablated, then declining to about 0.30 with 5 heads ablated.

* **Vanilla Average - Pink Line:** About 0.20 with 0 heads ablated, peaking at around 0.55 with 3 heads ablated, then declining to about 0.50 with 5 heads ablated.

* **Use-tem Average - Blue Line:** About 0.15 with 0 heads ablated, peaking at around 0.55 with 2 heads ablated, then declining to about 0.45 with 5 heads ablated.

**Vicuna-7b-v1.5 Chart:**

* **Advbench (use-tem) - Red Bars:** About 0.50 with 0 heads ablated, then relatively stable at around 0.60-0.70 for the remaining ablation levels.

* **Jailbreakbench (use-tem) - Orange Bars:** About 0.40 with 0 heads ablated, peaking at around 0.75 with 1 head ablated, then declining to about 0.60 with 5 heads ablated.

* **Malicious Instruct (use-tem) - Light Red Bars:** About 0.30 with 0 heads ablated, peaking at around 0.65 with 1 head ablated, then declining to about 0.55 with 5 heads ablated.

* **Advbench (direct) - Yellow Bars:** About 0.30 with 0 heads ablated, then relatively stable at around 0.50-0.60 for the remaining ablation levels.

* **Jailbreakbench (direct) - Green Bars:** About 0.30 with 0 heads ablated, peaking at around 0.60 with 1 head ablated, then declining to about 0.50 with 5 heads ablated.

* **Malicious Instruct (direct) - Light Green Bars:** About 0.20 with 0 heads ablated, peaking at around 0.55 with 1 head ablated, then declining to about 0.45 with 5 heads ablated.

* **Vanilla Average - Pink Line:** About 0.40 with 0 heads ablated, peaking at around 0.75 with 1 head ablated, then declining to about 0.65 with 5 heads ablated.

* **Use-tem Average - Blue Line:** About 0.35 with 0 heads ablated, peaking at around 0.70 with 1 head ablated, then declining to about 0.60 with 5 heads ablated.
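
As a rough sanity check on the readings above, the sketch below compares the reported Use-tem Average line with the mean of the three use-tem bars at the same ablation level. It assumes the line is the arithmetic mean of those bars, which the chart does not confirm. All numbers are the approximate values listed above, and only the points where all three bars have a stated value are included.

```python
from statistics import mean

# (model, heads ablated) -> (use-tem bar readings, reported Use-tem Average)
# Bar order: Advbench, Jailbreakbench, Malicious Instruct.
readings = {
    ("Llama-2-7b-chat-hf", 0): ([0.15, 0.10, 0.10], 0.15),
    ("Llama-2-7b-chat-hf", 5): ([0.45, 0.40, 0.40], 0.45),
    ("Vicuna-7b-v1.5", 0): ([0.50, 0.40, 0.30], 0.35),
}


def line_minus_bar_mean(table):
    """Gap between the reported line value and the mean of the bars."""
    return {
        key: round(line_value - mean(bars), 3)
        for key, (bars, line_value) in table.items()
    }


gaps = line_minus_bar_mean(readings)
for (model, heads), gap in gaps.items():
    print(f"{model}, {heads} heads ablated: line - bar mean = {gap:+.3f}")
```

Gaps of a few hundredths are within what one would expect from values read off a chart by eye, so they neither confirm nor rule out the assumed definition of the average.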

### Key Observations

* For Llama-2-7b-chat-hf, ASR generally increases with ablation up to a certain point (around 2 heads ablated) and then decreases.

* For Vicuna-7b-v1.5, ASR generally increases with ablation up to a certain point (around 1 head ablated) and then remains relatively stable or declines slightly.

* "Use-tem" prompting generally results in higher ASR compared to "direct" prompting for both models.

* The "Vanilla Average" and "Use-tem Average" lines give reference levels against which the individual bars can be compared; how each average is computed is not indicated.

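* Jailbreakbench reaches the highest peak ASR on both models (around 0.60 on Llama-2-7b-chat-hf and 0.75 on Vicuna-7b-v1.5, both under use-tem), but it does not lead at every ablation level: with 0 heads ablated, Advbench (use-tem) is higher on both models.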
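
The peak positions behind the first two observations can be tallied directly from the per-series readings. The sketch below does this with the ablation levels at which each bar series is reported to peak. The two Vicuna Advbench series are left out because they are described as stable rather than as having a single peak. The dictionary layout and function name are illustrative choices.

```python
from collections import Counter

# Number of ablated heads at which each bar series peaks, per the readings above.
peak_heads = {
    "Llama-2-7b-chat-hf": {
        "Advbench (use-tem)": 2,
        "Jailbreakbench (use-tem)": 1,
        "Malicious Instruct (use-tem)": 2,
        "Advbench (direct)": 2,
        "Jailbreakbench (direct)": 1,
        "Malicious Instruct (direct)": 2,
    },
    "Vicuna-7b-v1.5": {
        "Jailbreakbench (use-tem)": 1,
        "Malicious Instruct (use-tem)": 1,
        "Jailbreakbench (direct)": 1,
        "Malicious Instruct (direct)": 1,
    },
}


def most_common_peak(series_peaks):
    """Return (ablation level, number of series peaking there)."""
    return Counter(series_peaks.values()).most_common(1)[0]


for model, series_peaks in peak_heads.items():
    level, count = most_common_peak(series_peaks)
    print(f"{model}: {count} of {len(series_peaks)} series peak at {level} head(s) ablated")
```

On these readings, most Llama-2-7b-chat-hf series peak at 2 ablated heads, with both Jailbreakbench series peaking earlier at 1. Every Vicuna-7b-v1.5 series that has a reported peak reaches it at 1.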

### Interpretation

The charts show how ablating heads affects the vulnerability of the two language models across three benchmarks. The initial increase in ASR suggests that certain heads contribute to the models' robustness against these attacks. The subsequent decline is harder to interpret: ablating further heads does not push ASR higher, which may reflect degraded generation quality rather than restored safety, but the charts alone cannot distinguish between these explanations.
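
The chart does not say how heads are ablated. One common approach, assumed here purely for illustration, is to zero the output of the selected attention heads before the per-head outputs are concatenated. The sketch below shows that operation on plain Python lists; the function name, the toy vectors, and the choice of zero-ablation are all assumptions rather than the method behind the chart.

```python
def combine_heads(head_outputs, ablated=()):
    """Concatenate per-head output vectors, zeroing any ablated head.

    head_outputs: list of equal-length lists, one vector per head.
    ablated: indices of heads whose contribution is replaced by zeros.
    """
    combined = []
    for index, vector in enumerate(head_outputs):
        if index in ablated:
            combined.extend([0.0] * len(vector))  # head contributes nothing
        else:
            combined.extend(vector)
    return combined


# Toy example: 3 heads, each producing a 2-dimensional output.
toy_heads = [[0.2, -0.1], [0.5, 0.3], [-0.4, 0.7]]
full_output = combine_heads(toy_heads)
ablated_output = combine_heads(toy_heads, ablated={1})
print(full_output)
print(ablated_output)
```

Under this assumption, "ablating k heads" means passing k indices in `ablated`; the x-axis of the charts would then count how many heads are zeroed this way.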

The difference in ASR between "use-tem" and "direct" prompting shows that the prompting condition affects how readily the models produce undesirable output: ASR is higher under "use-tem" on both models. The chart does not define "use-tem"; the label may refer to the use of a prompt template, but that reading is an inference from the name alone.

Jailbreakbench reaches the highest peak ASR on both models, which suggests that its prompts are particularly effective at bypassing the models' safety mechanisms once heads are ablated. The two models also differ markedly. Vicuna-7b-v1.5 shows higher ASR than Llama-2-7b-chat-hf at every reported start, peak, and end value, including with no heads ablated (roughly 0.20 to 0.50 versus 0.05 to 0.15), so it is the more vulnerable model, not the more robust one. Its ASR also changes less after the first head is ablated. Both models have 7 billion parameters, and Vicuna-7b-v1.5 is itself fine-tuned from Llama 2, so neither model size nor base architecture explains the gap; differences in fine-tuning data and safety alignment are more plausible causes.
