## Screenshot: AI Text Playground Interface with Token Prediction Pop-up

### Overview

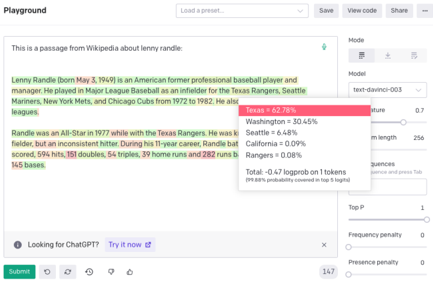

This image is a screenshot of a web-based AI model playground interface. The primary focus is a text passage about baseball player Lenny Randle, in which a specific word ("Rangers") is highlighted, triggering a pop-up that displays the model's predicted token probabilities at that position in the text. The interface includes a main text area, a sidebar with model parameters, and standard web application controls.

### Components/Axes

The interface is divided into several distinct regions:

1. **Header/Navigation Bar (Top):**

* Left: "Playground" title.

* Center: A dropdown menu labeled "Load a preset...".

* Right: Buttons for "Save", "View code", and "Share".

2. **Main Content Area (Center-Left):**

* A text input/display box containing a passage about Lenny Randle.

* The word "Rangers" within the text is highlighted with a red background.

* A pop-up tooltip is anchored to the highlighted word "Rangers".

3. **Sidebar (Right):**

* Contains model configuration parameters under the heading "Mode".

* Includes labeled controls for: `model`, `temperature`, `Maximum length`, `Stop sequences`, `Top P`, `Frequency penalty`, and `Presence penalty`.

4. **Footer/Status Bar (Bottom):**

* Left: A link "Looking for ChatGPT? Try it now →".

* Center: A "Submit" button.

* Right: A token count indicator showing "147".

### Detailed Analysis

**1. Main Text Passage Content:**

The text in the main box is a biographical snippet. It reads:

> "Lenny Randle (born May 12, 1949) is an American former professional baseball player and manager. He played in Major League Baseball as an infielder for the Texas Rangers, Seattle Mariners, New York Mets, and Chicago Cubs from 1972 to 1982. He also played in the Nippon Professional Baseball (NPB) leagues.

> Randle was an All-Star in 1977 while with the Texas Rangers. He was ki[**n**] at fielding, but an inconsistent hitter. During his 11-year career, Randle compiled a .247 batting average with 45 home runs, 103 doubles, 54 triples, 39 home runs and 262 RBIs."

*(Note: There appears to be a typo in the source text: "ki[**n**] at fielding" is likely meant to be "known". The second mention of "home runs" (39) after "54 triples" is also likely an error, possibly meant to be "stolen bases" or another stat.)*

**2. Pop-up Token Prediction Data:**

The pop-up, positioned to the right of the highlighted word "Rangers", shows the model's top predicted tokens to follow the context "Randle was an All-Star in 1977 while with the Texas ". It lists:

* **Header:** `logprobs`

* **Data Points (with approximate probabilities):**

* `Rangers = 30.45%` (Displayed with a solid red background bar)

* `Washington = 30.45%` (Displayed with a lighter pink background bar)

* `Seattle = 6.48%` (Displayed with a very light pink background bar)

* `California = 0.09%`

* `Rangers. = 0.09%`

* **Footer:** `Total = -0.42 logprobs on 1 tokens (99.88% probability covered in top 5 logprobs)`
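The footer reports a log probability while the rows report percentages. As a minimal sketch of how the two relate, the snippet below converts between them and sums the listed rows. It assumes the tool reports natural-log probabilities (not confirmed by the screenshot) and uses only the values transcribed above; the variable and function names are illustrative.

```python
import math

# Values as transcribed from the pop-up (percentages are approximate).
listed_percent = {
    "Rangers": 30.45,
    "Washington": 30.45,
    "Seattle": 6.48,
    "California": 0.09,
    "Rangers.": 0.09,
}
footer_total_logprob = -0.42      # "Total = -0.42 logprobs on 1 tokens"
footer_coverage_percent = 99.88   # "99.88% probability covered in top 5"


def logprob_to_percent(logprob: float) -> float:
    """Convert a natural-log probability to a percentage (assumed base e)."""
    return math.exp(logprob) * 100.0


def percent_to_logprob(percent: float) -> float:
    """Convert a percentage to a natural-log probability."""
    return math.log(percent / 100.0)


listed_sum = sum(listed_percent.values())
implied_selected = logprob_to_percent(footer_total_logprob)

print(f"sum of listed rows:            {listed_sum:.2f}%")
print(f"footer coverage:               {footer_coverage_percent:.2f}%")
print(f"probability implied by -0.42:  {implied_selected:.1f}%")
print(f"logprob implied by 30.45%:     {percent_to_logprob(30.45):.2f}")
```

Under that assumption, the listed rows sum to 67.56% rather than the 99.88% stated in the footer, and a logprob of -0.42 corresponds to roughly 65.7% rather than 30.45%. At least one transcribed value therefore probably differs from what the screenshot shows; the sketch cannot determine which one.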

**3. Sidebar Model Parameters:**

The right sidebar displays the following settings:

* `Mode`: (Dropdown, selection not visible)

* `model`: `text-davinci-003`

* `temperature`: `0.7`

* `Maximum length`: `256`

* `Stop sequences`: (Empty input field)

* `Top P`: `1`

* `Frequency penalty`: `0`

* `Presence penalty`: `0`
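The sidebar values can be read as the settings of a completion request. The sketch below builds such a request body as a plain dictionary without sending it anywhere. The mapping from UI labels to parameter names follows the legacy completions-style request format and is an assumption, since the screenshot does not show the request itself; `build_request` and `LABEL_TO_PARAM` are hypothetical names used only for illustration.

```python
import json

# Sidebar values as transcribed above; an empty list stands for the empty
# "Stop sequences" field.
sidebar = {
    "model": "text-davinci-003",
    "temperature": 0.7,
    "Maximum length": 256,
    "Stop sequences": [],
    "Top P": 1,
    "Frequency penalty": 0,
    "Presence penalty": 0,
}

# Assumed mapping from UI labels to completions-style parameter names.
LABEL_TO_PARAM = {
    "model": "model",
    "temperature": "temperature",
    "Maximum length": "max_tokens",
    "Stop sequences": "stop",
    "Top P": "top_p",
    "Frequency penalty": "frequency_penalty",
    "Presence penalty": "presence_penalty",
}


def build_request(settings: dict, prompt: str, top_logprobs: int = 5) -> dict:
    """Translate sidebar settings into a request body (nothing is sent)."""
    body = {LABEL_TO_PARAM[label]: value for label, value in settings.items()}
    if not body["stop"]:
        del body["stop"]  # omit the field when no stop sequences are set
    body["prompt"] = prompt
    # The footer mentions "top 5 logprobs", so five alternatives are requested.
    body["logprobs"] = top_logprobs
    return body


request_body = build_request(
    sidebar, "Randle was an All-Star in 1977 while with the Texas"
)
print(json.dumps(request_body, indent=2))
```

Keeping the label-to-parameter mapping in one table makes the assumption easy to correct if the tool's "View code" output uses different names.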

### Key Observations

1. **Prediction Ambiguity:** As transcribed, the model assigns identical probabilities (30.45% each) to "Rangers" and "Washington" as the next token, which would indicate substantial uncertainty. "Washington" may reflect the franchise's history: the Washington Senators became the Texas Rangers in 1972. The tie should be read with caution, however, because the footer's total of -0.42 logprobs corresponds to roughly 66% for the selected token if natural logarithms are used.

2. **Contextual Relevance:** The top predictions ("Rangers", "Washington", "Seattle", "California") are all team names or locations associated with MLB franchises, which suggests the model is drawing on the baseball context established in the passage.

3. **Interface State:** The interface is in a state of analysis, not generation. The "Submit" button is active, but the pop-up shows a diagnostic view (logprobs) of the model's internal predictions for a specific point in the existing text.

4. **Textual Anomalies:** The transcribed passage contains two apparent errors: a duplicated "home runs" category and a possible typo ("ki[**n**]"). Both are reproduced here as they appear in the screenshot.

### Interpretation

This screenshot captures a moment of **model interpretability** within a developer-focused AI tool. It visualizes the "thought process" of a large language model (text-davinci-003) at a specific decision point.

* **What it demonstrates:** The image shows how the model uses the preceding context (ending in "Texas ") to produce a probability distribution over possible next tokens. The high probability listed for "Washington" alongside "Rangers" is notable. It suggests the model's training data may associate "Texas" with both "Rangers" (the team name) and "Washington" (the franchise's location before it moved to Texas in 1972). This is consistent with the model having picked up the franchise's history, although a single prediction cannot confirm it.

* **Relationship between elements:** The highlighted text acts as the query, the pop-up is the diagnostic output, and the sidebar parameters define the conditions under which the prediction was calculated. The interface bridges the gap between raw text and the model's internal numerical reasoning (log probabilities).

* **Notable implication:** The near-equal split in probability highlights a core challenge in language generation: choosing between multiple statistically plausible and contextually correct continuations. A human writer would know "Rangers" is the correct completion for the sentence about Lenny Randle's 1977 All-Star appearance, but the model, operating on statistical patterns, assigns nearly equal weight to a historically related but contextually incorrect alternative. This underscores the difference between statistical prediction and factual, coherent narrative generation.
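How a generation step would choose among these candidates depends on the sampling settings. The sketch below applies the sidebar's temperature of 0.7 to the transcribed top-5 values and draws samples. It rests on several assumptions: the pop-up percentages are taken as unscaled probabilities, only the five listed tokens are considered (renormalized to sum to 1), and temperature is applied as `p ** (1 / T)`, which is equivalent to dividing log probabilities by `T` before a softmax. None of this is confirmed by the screenshot, and the function names are illustrative.

```python
import random

# Top-5 percentages as transcribed from the pop-up.
top5_percent = {
    "Rangers": 30.45,
    "Washington": 30.45,
    "Seattle": 6.48,
    "California": 0.09,
    "Rangers.": 0.09,
}


def renormalize(weights: dict) -> dict:
    """Scale non-negative weights so they sum to 1."""
    total = sum(weights.values())
    return {tok: w / total for tok, w in weights.items()}


def apply_temperature(probs: dict, temperature: float) -> dict:
    """Rescale probabilities as p ** (1 / T), then renormalize."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    return renormalize({t: p ** (1.0 / temperature) for t, p in probs.items()})


base = renormalize(top5_percent)      # restricted to the five listed tokens
scaled = apply_temperature(base, 0.7)  # sidebar temperature

rng = random.Random(0)  # fixed seed so repeated runs draw the same samples
samples = rng.choices(list(scaled), weights=list(scaled.values()), k=1000)

for tok in scaled:
    print(f"{tok!r}: base={base[tok]:.3f} "
          f"T=0.7={scaled[tok]:.3f} sampled={samples.count(tok)}")
```

Equal probabilities stay equal under temperature scaling, so lowering the temperature does not by itself break a tie between two candidates; it only shifts weight away from the lower-ranked tokens.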