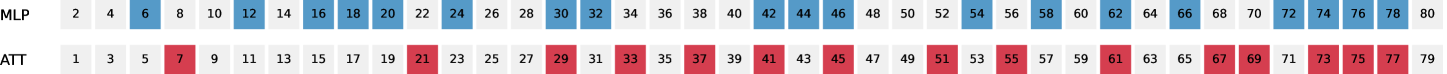

## Chart: MLP vs. ATT Layer/Unit Highlighting Pattern

### Overview

The image displays a simple, two-row chart comparing two sequences labeled "MLP" and "ATT". Each row is a sequence of integers in steps of 2: even numbers in the MLP row and odd numbers in the ATT row. Certain numbers in each row are highlighted with a colored background, blue for MLP and red for ATT. The chart appears to be a visual representation of selected indices, layers, or units, likely from a technical or computational context such as neural network architecture.

### Components/Axes

* **Row Labels (Left-aligned):**

    * Top Row: `MLP`

    * Bottom Row: `ATT`

* **Data Series:** Two horizontal sequences of integers.

    * **MLP Sequence:** Even numbers from 2 to 80.

    * **ATT Sequence:** Odd numbers from 1 to 79.

* **Highlighting/Legend:**

    * **Blue Highlight:** Applied to specific numbers in the `MLP` row.

    * **Red Highlight:** Applied to specific numbers in the `ATT` row.

    * There is no separate legend box; the row labels and associated highlight colors serve as the key.

### Detailed Analysis

**1. MLP Row (Top, Blue Highlights):**

* **Sequence:** 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32, 34, 36, 38, 40, 42, 44, 46, 48, 50, 52, 54, 56, 58, 60, 62, 64, 66, 68, 70, 72, 74, 76, 78, 80.

* **Highlighted Numbers (Blue):** 6, 12, 16, 18, 20, 24, 32, 34, 36, 42, 44, 46, 54, 56, 58, 62, 64, 66, 72, 74, 76.

* **Pattern:** The highlighting does not follow a simple arithmetic progression. It selects six clusters of three consecutive even numbers (16, 18, 20; 32, 34, 36; 42, 44, 46; 54, 56, 58; 62, 64, 66; 72, 74, 76) interspersed with three isolated highlighted numbers (6, 12, 24). The first highlighted value is 6 and the last is 76.

**2. ATT Row (Bottom, Red Highlights):**

* **Sequence:** 1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65, 67, 69, 71, 73, 75, 77, 79.

* **Highlighted Numbers (Red):** 7, 21, 29, 33, 35, 37, 41, 43, 45, 51, 55, 61, 67, 69, 73, 75, 77.

* **Pattern:** Similar to the MLP row, the highlighting shows clusters of three consecutive odd numbers (33, 35, 37; 41, 43, 45; 73, 75, 77), one pair (67, 69), and isolated points (7, 21, 29, 51, 55, 61). The first highlighted value is 7 and the last is 77.
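The cluster structure of both rows can be checked mechanically from the highlighted lists above. The following is a minimal sketch; the helper name `group_runs` is hypothetical, and "consecutive" is taken to mean adjacent within a row, that is, differing by 2.

```python
def group_runs(values, step=2):
    """Split a sorted list into runs whose neighbours differ by `step`."""
    runs = []
    for v in values:
        if runs and v - runs[-1][-1] == step:
            runs[-1].append(v)   # extends the current run
        else:
            runs.append([v])     # starts a new run
    return runs

# Highlighted values as transcribed in the lists above.
mlp_highlighted = [6, 12, 16, 18, 20, 24, 32, 34, 36, 42, 44, 46,
                   54, 56, 58, 62, 64, 66, 72, 74, 76]
att_highlighted = [7, 21, 29, 33, 35, 37, 41, 43, 45, 51, 55, 61,
                   67, 69, 73, 75, 77]

mlp_runs = group_runs(mlp_highlighted)
att_runs = group_runs(att_highlighted)

for name, runs in (("MLP", mlp_runs), ("ATT", att_runs)):
    singles = [r[0] for r in runs if len(r) == 1]
    clusters = [r for r in runs if len(r) > 1]
    print(name, "singles:", singles, "clusters:", clusters)
```

Under this definition the MLP row yields three isolated values and six runs of three, while the ATT row yields six isolated values, one pair, and three runs of three.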

**3. Cross-Reference & Spatial Grounding:**

* The two rows are perfectly aligned vertically, allowing for direct comparison of indices.

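* The highlighted sets are **mutually exclusive**. No number is highlighted in both rows, which follows directly from the layout: the MLP row contains only even numbers and the ATT row only odd numbers. For example, the even number 6 is blue (MLP), and the odd number 7 is red (ATT).

* Taken together, the two rows cover every integer from 1 to 80 exactly once, but the highlights cover only part of that range: 38 of the 80 integers are highlighted (21 in the MLP row and 17 in the ATT row).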
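As a quick check of the cross-reference claims, the sketch below tests disjointness and parity and builds the merged, sorted set of highlighted integers. The variable names are illustrative only; the values are transcribed from the lists in the Detailed Analysis.

```python
mlp = {6, 12, 16, 18, 20, 24, 32, 34, 36, 42, 44, 46,
       54, 56, 58, 62, 64, 66, 72, 74, 76}
att = {7, 21, 29, 33, 35, 37, 41, 43, 45, 51, 55, 61,
       67, 69, 73, 75, 77}

overlap = mlp & att                          # numbers highlighted in both rows
all_even = all(n % 2 == 0 for n in mlp)      # MLP row holds even numbers only
all_odd = all(n % 2 == 1 for n in att)       # ATT row holds odd numbers only

merged = sorted(mlp | att)                   # every highlighted integer, in order
unhighlighted = [n for n in range(1, 81) if n not in mlp and n not in att]

print("overlap:", overlap)
print("first highlighted:", merged[:6])
print("highlighted:", len(merged), "unhighlighted:", len(unhighlighted))
```

The overlap is empty because of parity alone, so the disjointness carries no information about how the highlighted values were chosen.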

### Key Observations

1. **Complementary Selection:** The blue (MLP) and red (ATT) highlights split the highlighted integers {6, 7, 12, 16, 18, ...} into two disjoint subsets. Because the MLP row holds only even numbers and the ATT row only odd numbers, this disjointness is a consequence of the numbering and not, by itself, evidence of a particular selection process.

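2. **Clustered Pattern:** Both series tend to select short runs of adjacent entries within a row (numbers differing by 2), most often three in a row, rather than a uniform or random-looking spread. The MLP row has six such triples; the ATT row has three triples and one pair (67, 69).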

3. **Range:** The highlighting begins at 6 (MLP) and 7 (ATT) and ends at 76 (MLP) and 77 (ATT), leaving the first few numbers (1-5) and the last few (78-80) unhighlighted in both rows.

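4. **Density:** The MLP row is somewhat denser: 21 of its 40 numbers are highlighted (about 53%), versus 17 of 40 in the ATT row (about 43%). The gap is largest at the low end, where the ATT row has only one highlight (7) below 21.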
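The density comparison can be made concrete with a short calculation. This is a sketch under two assumptions: each row has 40 entries (even numbers 2 to 80, odd numbers 1 to 79), and the block size of 20 used for the breakdown is an arbitrary illustrative choice.

```python
from collections import Counter

mlp_highlighted = [6, 12, 16, 18, 20, 24, 32, 34, 36, 42, 44, 46,
                   54, 56, 58, 62, 64, 66, 72, 74, 76]
att_highlighted = [7, 21, 29, 33, 35, 37, 41, 43, 45, 51, 55, 61,
                   67, 69, 73, 75, 77]

ROW_LENGTH = 40  # entries per row in the chart

def density(highlighted):
    """Fraction of a row's entries that are highlighted."""
    return len(highlighted) / ROW_LENGTH

def counts_per_block(highlighted, block=20, upper=80):
    """Highlight counts for the index ranges 1-20, 21-40, 41-60, 61-80."""
    counts = Counter((n - 1) // block for n in highlighted)
    return [counts[i] for i in range(upper // block)]

mlp_density = density(mlp_highlighted)
att_density = density(att_highlighted)
mlp_blocks = counts_per_block(mlp_highlighted)
att_blocks = counts_per_block(att_highlighted)

print(f"MLP: {mlp_density:.1%} highlighted, per block {mlp_blocks}")
print(f"ATT: {att_density:.1%} highlighted, per block {att_blocks}")
```

The per-block counts show where the rows differ: the MLP row has five highlights in the range 1-20, while the ATT row has one.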

### Interpretation

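This chart most likely visualizes a **selection of specific layers, units, or parameters** within two components of a machine learning model: a Multi-Layer Perceptron (MLP) and an Attention mechanism (ATT). The numbering, odd for ATT and even for MLP across 1 to 80, is consistent with alternating attention and MLP sublayers indexed consecutively, although the image itself does not state this.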

* **What it suggests:** The highlights appear to mark a chosen subset of indices within each component. The clustered pattern might indicate that the selected items concentrate in particular ranges of the index, with runs of adjacent entries chosen together.

* **How elements relate:** The MLP and ATT rows are parallel and cover interleaved index ranges, implying they are two parts of a unified system. The lack of overlap between the highlighted sets follows from the even/odd numbering, so it should not be read on its own as evidence of a division of labor.

* **Notable anomalies:** The absence of any highlights for indices 1-5 is notable. This could mean these initial layers/units serve a different, shared, or foundational purpose not assigned to either the MLP or ATT specific functions.

* **Underlying purpose:** This type of visualization is common in research papers or technical documentation on neural network architectures, including models that interleave MLP and attention components. The specific indices highlighted (e.g., 6, 12, 16...) may correspond to layer numbers in the model's architecture, but the image alone does not say what property the highlighting marks.