TECHNICAL ASSET FINGERPRINT

2c4acacf249fcc86b8f3e083

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

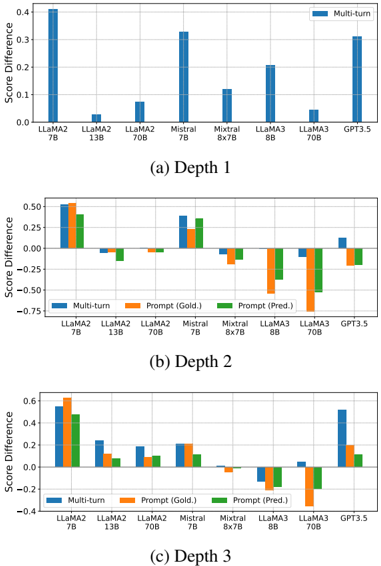

## Bar Chart: Score Difference at Different Depths

### Overview

The image contains three bar charts, each representing the score difference for various language models at different depths (Depth 1, Depth 2, and Depth 3). The x-axis represents the language models, and the y-axis represents the score difference. The charts compare "Multi-turn", "Prompt (Gold.)", and "Prompt (Pred.)" scores for each model.

### Components/Axes

* **Y-axis (Score Difference):** Ranges from approximately -0.75 to 0.6, with increments of 0.25 or 0.2.

* **X-axis:** Represents different language models: LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B, LLaMA3 8B, LLaMA3 70B, and GPT3.5.

* **Legend:** Located at the top-right of the first chart and in the middle of the second and third charts.

* Blue: Multi-turn

* Orange: Prompt (Gold.)

* Green: Prompt (Pred.)

* **Titles:** Each chart has a title indicating the depth: (a) Depth 1, (b) Depth 2, (c) Depth 3.

### Detailed Analysis

#### (a) Depth 1

* **LLaMA2 7B:** Multi-turn score difference is approximately 0.4.

* **LLaMA2 13B:** Multi-turn score difference is approximately 0.0.

* **LLaMA2 70B:** Multi-turn score difference is approximately 0.08.

* **Mistral 7B:** Multi-turn score difference is approximately 0.33.

* **Mixtral 8x7B:** Multi-turn score difference is approximately 0.12.

* **LLaMA3 8B:** Multi-turn score difference is approximately 0.20.

* **LLaMA3 70B:** Multi-turn score difference is approximately 0.0.

* **GPT3.5:** Multi-turn score difference is approximately 0.25.

#### (b) Depth 2

* **LLaMA2 7B:**

* Multi-turn: ~0.5

* Prompt (Gold.): ~0.55

* Prompt (Pred.): ~0.45

* **LLaMA2 13B:**

* Multi-turn: ~-0.1

* Prompt (Gold.): ~-0.1

* Prompt (Pred.): ~-0.1

* **LLaMA2 70B:**

* Multi-turn: ~-0.05

* Prompt (Gold.): ~-0.05

* Prompt (Pred.): ~-0.1

* **Mistral 7B:**

* Multi-turn: ~0.4

* Prompt (Gold.): ~0.3

* Prompt (Pred.): ~0.35

* **Mixtral 8x7B:**

* Multi-turn: ~-0.15

* Prompt (Gold.): ~-0.2

* Prompt (Pred.): ~-0.2

* **LLaMA3 8B:**

* Multi-turn: ~-0.5

* Prompt (Gold.): ~-0.6

* Prompt (Pred.): ~-0.3

* **LLaMA3 70B:**

* Multi-turn: ~-0.4

* Prompt (Gold.): ~-0.4

* Prompt (Pred.): ~-0.5

* **GPT3.5:**

* Multi-turn: ~0.15

* Prompt (Gold.): ~-0.1

* Prompt (Pred.): ~-0.15

#### (c) Depth 3

* **LLaMA2 7B:**

* Multi-turn: ~0.55

* Prompt (Gold.): ~0.6

* Prompt (Pred.): ~0.45

* **LLaMA2 13B:**

* Multi-turn: ~0.25

* Prompt (Gold.): ~0.1

* Prompt (Pred.): ~0.2

* **LLaMA2 70B:**

* Multi-turn: ~0.15

* Prompt (Gold.): ~0.1

* Prompt (Pred.): ~0.1

* **Mistral 7B:**

* Multi-turn: ~0.2

* Prompt (Gold.): ~0.2

* Prompt (Pred.): ~0.1

* **Mixtral 8x7B:**

* Multi-turn: ~-0.1

* Prompt (Gold.): ~-0.1

* Prompt (Pred.): ~-0.1

* **LLaMA3 8B:**

* Multi-turn: ~-0.1

* Prompt (Gold.): ~-0.1

* Prompt (Pred.): ~-0.2

* **LLaMA3 70B:**

* Multi-turn: ~-0.2

* Prompt (Gold.): ~-0.1

* Prompt (Pred.): ~-0.3

* **GPT3.5:**

* Multi-turn: ~0.4

* Prompt (Gold.): ~0.1

* Prompt (Pred.): ~0.1

### Key Observations

* **Depth 1:** Multi-turn score differences are zero or positive for every model.

* **Depth 2:** Some models have negative score differences, particularly LLaMA3 8B and LLaMA3 70B.

* **Depth 3:** The score differences vary, with some models showing positive and others showing negative differences.

* LLaMA2 7B consistently has high scores across all depths.

* LLaMA3 models tend to have lower or negative scores at Depth 2 and Depth 3.
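The Depth 2 observation can be checked against the approximate values listed under "(b) Depth 2". The following is a minimal sketch, assuming those eyeballed readings are taken at face value; the dictionary layout and the `mean_score` helper are illustrative choices, not part of the original analysis.

```python
# Approximate Depth 2 readings from the list above (not exact chart data).
# Tuple order: (Multi-turn, Prompt (Gold.), Prompt (Pred.)).
depth2 = {
    "LLaMA2 7B": (0.5, 0.55, 0.45),
    "LLaMA2 13B": (-0.1, -0.1, -0.1),
    "LLaMA2 70B": (-0.05, -0.05, -0.1),
    "Mistral 7B": (0.4, 0.3, 0.35),
    "Mixtral 8x7B": (-0.15, -0.2, -0.2),
    "LLaMA3 8B": (-0.5, -0.6, -0.3),
    "LLaMA3 70B": (-0.4, -0.4, -0.5),
    "GPT3.5": (0.15, -0.1, -0.15),
}


def mean_score(values):
    """Average of the three approximate score differences for one model."""
    return sum(values) / len(values)


# Rank models from the lowest to the highest mean score difference.
ranked = sorted(depth2, key=lambda model: mean_score(depth2[model]))
lowest_two = ranked[:2]

# Models whose three series are all below zero at this depth.
all_negative = [m for m, vals in depth2.items() if all(v < 0 for v in vals)]

print("Lowest mean score difference:", lowest_two)
print("Negative on every series:", all_negative)
```

With these readings, the two lowest-ranked models are the LLaMA3 pair, which is what the Depth 2 bullet describes.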

### Interpretation

The charts compare the performance of different language models across varying depths, likely referring to the depth of reasoning or interaction within a task. The "Multi-turn" scores likely represent performance in a conversational or multi-step task. "Prompt (Gold.)" and "Prompt (Pred.)" likely refer to performance based on a gold-standard prompt and a predicted prompt, respectively.

The data suggests that some models, like LLaMA2 7B, perform well across all depths and prompting strategies. Other models, like LLaMA3 8B and 70B, struggle at deeper levels, indicating potential limitations in their reasoning or interaction capabilities. The differences between "Prompt (Gold.)" and "Prompt (Pred.)" highlight the impact of prompt quality on model performance. The negative score differences at Depth 2 and Depth 3 for some models suggest that their performance degrades as the task becomes more complex or requires deeper reasoning.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

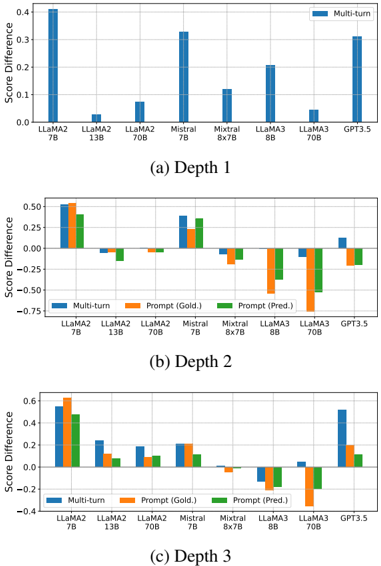

## Bar Charts: Score Difference by Model and Depth

### Overview

The image presents three bar charts, labeled (a) Depth 1, (b) Depth 2, and (c) Depth 3. Each chart compares the "Score Difference" for several language models (LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B, LLaMA3 8B, LLaMA3 70B, and GPT 3.5) at one depth. The score difference is measured on the y-axis, ranging from approximately -0.75 to 0.6 across the three charts. The x-axis represents the language models. Chart (a) shows a single blue series, while charts (b) and (c) use the same three colors for the three evaluation methods.

### Components/Axes

* **Y-axis Title:** "Score Difference" (common to all three charts)

* **X-axis Labels:** LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B, LLaMA3 8B, LLaMA3 70B, GPT 3.5 (common to all three charts)

* **Legend:**

* **(a) Depth 1:** Blue - "Multi-turn"

* **(b) Depth 2:** Blue - "Multi-turn", Orange - "Prompt (Gold.)", Green - "Prompt (Pred.)"

* **(c) Depth 3:** Blue - "Multi-turn", Orange - "Prompt (Gold.)", Green - "Prompt (Pred.)"

### Detailed Analysis

**Chart (a) Depth 1:**

* The chart displays a single bar for each model, representing the "Multi-turn" score difference.

* LLaMA2 7B: Approximately 0.42

* LLaMA2 13B: Approximately 0.08

* LLaMA2 70B: Approximately 0.12

* Mistral 7B: Approximately 0.35

* Mixtral 8x7B: Approximately 0.16

* LLaMA3 8B: Approximately 0.24

* LLaMA3 70B: Approximately 0.18

* GPT 3.5: Approximately 0.38

* Trend: The score differences vary significantly across models, with LLaMA2 7B and Mistral 7B showing the highest values.

**Chart (b) Depth 2:**

* Each model has three bars: "Multi-turn" (blue), "Prompt (Gold.)" (orange), and "Prompt (Pred.)" (green).

* LLaMA2 7B: Multi-turn ≈ 0.48, Prompt (Gold.) ≈ 0.52, Prompt (Pred.) ≈ 0.45

* LLaMA2 13B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ 0.12, Prompt (Pred.) ≈ 0.02

* LLaMA2 70B: Multi-turn ≈ 0.02, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.05

* Mistral 7B: Multi-turn ≈ 0.45, Prompt (Gold.) ≈ 0.48, Prompt (Pred.) ≈ 0.42

* Mixtral 8x7B: Multi-turn ≈ 0.03, Prompt (Gold.) ≈ -0.02, Prompt (Pred.) ≈ -0.12

* LLaMA3 8B: Multi-turn ≈ 0.15, Prompt (Gold.) ≈ 0.18, Prompt (Pred.) ≈ 0.12

* LLaMA3 70B: Multi-turn ≈ 0.08, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.05

* GPT 3.5: Multi-turn ≈ 0.42, Prompt (Gold.) ≈ 0.40, Prompt (Pred.) ≈ 0.35

* Trends:

* "Multi-turn" generally shows higher positive values for LLaMA2 7B, Mistral 7B, and GPT 3.5.

* "Prompt (Gold.)" is consistently higher than "Prompt (Pred.)" for most models.

* Mixtral 8x7B shows negative values for "Prompt (Pred.)".

**Chart (c) Depth 3:**

* Similar structure to Chart (b) with three bars per model.

* LLaMA2 7B: Multi-turn ≈ 0.55, Prompt (Gold.) ≈ 0.58, Prompt (Pred.) ≈ 0.50

* LLaMA2 13B: Multi-turn ≈ 0.15, Prompt (Gold.) ≈ 0.20, Prompt (Pred.) ≈ 0.08

* LLaMA2 70B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ 0.08, Prompt (Pred.) ≈ -0.03

* Mistral 7B: Multi-turn ≈ 0.50, Prompt (Gold.) ≈ 0.53, Prompt (Pred.) ≈ 0.45

* Mixtral 8x7B: Multi-turn ≈ 0.05, Prompt (Gold.) ≈ -0.02, Prompt (Pred.) ≈ -0.15

* LLaMA3 8B: Multi-turn ≈ 0.20, Prompt (Gold.) ≈ 0.25, Prompt (Pred.) ≈ 0.15

* LLaMA3 70B: Multi-turn ≈ 0.10, Prompt (Gold.) ≈ 0.05, Prompt (Pred.) ≈ -0.10

* GPT 3.5: Multi-turn ≈ 0.55, Prompt (Gold.) ≈ 0.52, Prompt (Pred.) ≈ 0.45

* Trends:

* Similar trends to Chart (b), with LLaMA2 7B, Mistral 7B, and GPT 3.5 showing higher "Multi-turn" scores.

* "Prompt (Gold.)" remains generally higher than "Prompt (Pred.)".

* Mixtral 8x7B continues to exhibit negative "Prompt (Pred.)" values.

### Key Observations

* LLaMA2 7B and Mistral 7B consistently perform well across all depths, particularly in the "Multi-turn" evaluation.

* The difference between "Prompt (Gold.)" and "Prompt (Pred.)" suggests that the gold standard prompts yield higher scores than the predicted prompts.

* Mixtral 8x7B shows a concerning trend of negative score differences for "Prompt (Pred.)" at depths 2 and 3.

* The score differences generally appear to stabilize or slightly increase with depth for LLaMA2 7B, Mistral 7B, and GPT 3.5.

### Interpretation

These charts likely represent the performance of different language models on a task evaluated at varying levels of complexity ("Depth"). The "Multi-turn" score difference could indicate how well the model handles conversational contexts. The "Prompt (Gold.)" and "Prompt (Pred.)" scores suggest the impact of prompt quality on model performance. The gold standard prompts (presumably human-crafted) consistently lead to better results than the predicted prompts.

The consistent strong performance of LLaMA2 7B, Mistral 7B, and GPT 3.5 suggests these models are robust and capable of handling complex interactions. The negative score differences for Mixtral 8x7B's predicted prompts at higher depths raise concerns about its ability to generalize or follow instructions effectively without high-quality prompts. The increasing score differences with depth for some models could indicate that they benefit from more nuanced evaluation criteria.

The data suggests that prompt engineering is crucial for maximizing the performance of these language models, and that some models are more sensitive to prompt quality than others. Further investigation into the prompts used and the specific task being evaluated would be necessary to draw more definitive conclusions.
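The claim that "Prompt (Gold.)" sits above "Prompt (Pred.)" can be made concrete by computing the per-model gap from the Depth 2 values listed under Chart (b). This is a small sketch under the assumption that those approximate readings are accurate; the variable names are illustrative only.

```python
# Approximate Chart (b) readings from this description: (Gold, Pred) per model.
gold_pred_depth2 = {
    "LLaMA2 7B": (0.52, 0.45),
    "LLaMA2 13B": (0.12, 0.02),
    "LLaMA2 70B": (0.05, -0.05),
    "Mistral 7B": (0.48, 0.42),
    "Mixtral 8x7B": (-0.02, -0.12),
    "LLaMA3 8B": (0.18, 0.12),
    "LLaMA3 70B": (0.05, -0.05),
    "GPT 3.5": (0.40, 0.35),
}

# Gap = Gold minus Pred; a positive gap means the gold prompt scored higher.
gaps = {
    model: round(gold - pred, 2)
    for model, (gold, pred) in gold_pred_depth2.items()
}

gold_always_higher = all(gap > 0 for gap in gaps.values())

for model, gap in gaps.items():
    print(f"{model:13s} gap = {gap:+.2f}")
print("Gold higher for every model:", gold_always_higher)
```

Under these readings the gap is positive for all eight models and never exceeds 0.10, so the effect is consistent in sign but modest in size.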

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Charts: Multi-turn and Prompt Score Differences Across LLMs at Three Depths

### Overview

The image contains three separate bar charts arranged vertically, labeled (a) Depth 1, (b) Depth 2, and (c) Depth 3. Each chart compares the "Score Difference" for eight different Large Language Models (LLMs). Chart (a) shows only a "Multi-turn" series, while charts (b) and (c) include three series: "Multi-turn," "Prompt (Gold.)," and "Prompt (Pred.)." The charts appear to evaluate model performance, likely in a conversational or reasoning task, where a positive score difference indicates improvement or a favorable outcome.

### Components/Axes

* **Chart Type:** Grouped Bar Charts.

* **Y-Axis (All Charts):** Label is "Score Difference." The scale varies slightly between charts.

* Chart (a): 0.0 to 0.4, with increments of 0.1.

* Chart (b): -0.75 to 0.50, with increments of 0.25.

* Chart (c): -0.4 to 0.6, with increments of 0.2.

* **X-Axis (All Charts):** Lists eight LLM models. The order is consistent:

1. LLaMA2 7B

2. LLaMA2 13B

3. LLaMA2 70B

4. Mistral 7B

5. Mixtral 8x7B

6. LLaMA3 8B

7. LLaMA3 70B

8. GPT3.5

* **Legend:**

* Chart (a): Single series "Multi-turn" (blue). Legend is in the top-right corner.

* Charts (b) & (c): Three series. Legend is centered at the top of the chart area.

* "Multi-turn" (blue)

* "Prompt (Gold.)" (orange)

* "Prompt (Pred.)" (green)

### Detailed Analysis

#### Chart (a) Depth 1

* **Data Series:** Only "Multi-turn" (blue bars).

* **Approximate Values (Score Difference):**

* LLaMA2 7B: ~0.40

* LLaMA2 13B: ~0.05

* LLaMA2 70B: ~0.08

* Mistral 7B: ~0.33

* Mixtral 8x7B: ~0.12

* LLaMA3 8B: ~0.20

* LLaMA3 70B: ~0.05

* GPT3.5: ~0.32

* **Trend:** All values are positive. LLaMA2 7B shows the highest score difference, followed closely by Mistral 7B and GPT3.5. LLaMA2 13B and LLaMA3 70B show the smallest positive differences.

#### Chart (b) Depth 2

* **Data Series:** "Multi-turn" (blue), "Prompt (Gold.)" (orange), "Prompt (Pred.)" (green).

* **Approximate Values (Score Difference):**

* **LLaMA2 7B:** Multi-turn ~0.50, Prompt (Gold.) ~0.55, Prompt (Pred.) ~0.45. All positive.

* **LLaMA2 13B:** Multi-turn ~ -0.05, Prompt (Gold.) ~0.00, Prompt (Pred.) ~ -0.15. Mixed signs.

* **LLaMA2 70B:** Multi-turn ~0.00, Prompt (Gold.) ~ -0.05, Prompt (Pred.) ~0.00. Near zero.

* **Mistral 7B:** Multi-turn ~0.40, Prompt (Gold.) ~0.30, Prompt (Pred.) ~0.38. All positive.

* **Mixtral 8x7B:** Multi-turn ~ -0.15, Prompt (Gold.) ~ -0.10, Prompt (Pred.) ~ -0.20. All negative.

* **LLaMA3 8B:** Multi-turn ~ -0.10, Prompt (Gold.) ~ -0.65, Prompt (Pred.) ~ -0.40. All negative.

* **LLaMA3 70B:** Multi-turn ~ -0.05, Prompt (Gold.) ~ -0.75, Prompt (Pred.) ~ -0.55. All negative, with Prompt (Gold.) showing the largest negative difference in the chart.

* **GPT3.5:** Multi-turn ~0.15, Prompt (Gold.) ~ -0.20, Prompt (Pred.) ~ -0.25. Mixed signs.

* **Trend:** Performance is highly variable. LLaMA2 7B and Mistral 7B maintain positive scores across all series. LLaMA3 models (8B and 70B) and Mixtral 8x7B show notably negative score differences, especially for the prompt-based evaluations.

#### Chart (c) Depth 3

* **Data Series:** "Multi-turn" (blue), "Prompt (Gold.)" (orange), "Prompt (Pred.)" (green).

* **Approximate Values (Score Difference):**

* **LLaMA2 7B:** Multi-turn ~0.50, Prompt (Gold.) ~0.65, Prompt (Pred.) ~0.48. All positive and high.

* **LLaMA2 13B:** Multi-turn ~0.25, Prompt (Gold.) ~0.10, Prompt (Pred.) ~0.12. All positive.

* **LLaMA2 70B:** Multi-turn ~0.18, Prompt (Gold.) ~0.10, Prompt (Pred.) ~0.02. All positive but smaller.

* **Mistral 7B:** Multi-turn ~0.20, Prompt (Gold.) ~0.18, Prompt (Pred.) ~0.05. All positive.

* **Mixtral 8x7B:** Multi-turn ~0.00, Prompt (Gold.) ~ -0.10, Prompt (Pred.) ~0.00. Mixed/near zero.

* **LLaMA3 8B:** Multi-turn ~ -0.15, Prompt (Gold.) ~ -0.10, Prompt (Pred.) ~ -0.20. All negative.

* **LLaMA3 70B:** Multi-turn ~0.05, Prompt (Gold.) ~ -0.40, Prompt (Pred.) ~0.00. Mixed signs.

* **GPT3.5:** Multi-turn ~0.50, Prompt (Gold.) ~0.20, Prompt (Pred.) ~0.10. All positive.

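* **Trend:** LLaMA2 7B again shows the strongest positive performance. Most values are positive or near zero; LLaMA3 8B is the only model that is negative across all three series, and LLaMA3 70B has the single largest negative value (Prompt (Gold.) at about -0.40). Compared with Depth 2, the gap between "Prompt (Gold.)" and "Prompt (Pred.)" narrows for some models (e.g., LLaMA2 13B, LLaMA3 8B) and widens for others (e.g., LLaMA3 70B).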

### Key Observations

1. **Consistent Top Performer:** LLaMA2 7B consistently shows the highest or near-highest positive score difference across all three depths and all evaluation types (Multi-turn, Prompt Gold/Pred).

2. **Depth-Dependent Degradation:** Several models (e.g., LLaMA3 8B, Mixtral 8x7B) show a shift from positive scores at Depth 1 to negative scores at Depths 2 and 3, particularly for prompt-based evaluations.

3. **Prompt Evaluation Volatility:** The "Prompt (Gold.)" and "Prompt (Pred.)" series show much greater variance (both positive and negative) compared to the "Multi-turn" series, especially at Depth 2.

4. **Model Family Trends:** Within the LLaMA2 family, the 7B model outperforms the larger 13B and 70B models in this specific metric. The LLaMA3 models generally underperform compared to their LLaMA2 counterparts in these charts.

5. **GPT3.5 Performance:** GPT3.5 shows positive Multi-turn scores at all depths, but both prompt evaluations are negative at Depth 2.
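The volatility observation (item 3) can be illustrated by comparing the spread of each series at Depth 2. The sketch below assumes the approximate Chart (b) values listed in this description and uses the simple range (maximum minus minimum) as a stand-in for variance; that choice of measure is an assumption made for illustration.

```python
# Approximate Chart (b) readings from this description, in x-axis order:
# LLaMA2 7B, LLaMA2 13B, LLaMA2 70B, Mistral 7B, Mixtral 8x7B,
# LLaMA3 8B, LLaMA3 70B, GPT3.5.
series_depth2 = {
    "Multi-turn": [0.50, -0.05, 0.00, 0.40, -0.15, -0.10, -0.05, 0.15],
    "Prompt (Gold.)": [0.55, 0.00, -0.05, 0.30, -0.10, -0.65, -0.75, -0.20],
    "Prompt (Pred.)": [0.45, -0.15, 0.00, 0.38, -0.20, -0.40, -0.55, -0.25],
}

# Range of each series across the eight models.
spread = {
    name: round(max(values) - min(values), 2)
    for name, values in series_depth2.items()
}

for name, value in spread.items():
    print(f"{name:15s} range = {value:.2f}")
```

With these readings the Multi-turn series has the narrowest range and "Prompt (Gold.)" the widest, in line with the observation that the prompt-based series swing more widely at Depth 2.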

### Interpretation

The data suggests that the evaluated task becomes more challenging or changes in nature as "Depth" increases from 1 to 3. The "Score Difference" metric likely measures performance relative to a baseline.

* **Robustness of Multi-turn Evaluation:** The "Multi-turn" evaluation appears more stable and less prone to large negative swings across models and depths compared to the single-turn "Prompt" evaluations. This could indicate that conversational context helps stabilize model performance.

* **Impact of Prompt Quality:** The divergence between "Prompt (Gold.)" (likely using a human-crafted, optimal prompt) and "Prompt (Pred.)" (likely using a model-generated or predicted prompt) highlights the sensitivity of LLM performance to prompt engineering. At Depth 2, this gap is particularly large for models like LLaMA3 70B.

* **Model Scaling Anomaly:** The underperformance of larger models (LLaMA2 70B, LLaMA3 70B) compared to smaller ones (LLaMA2 7B) in several instances is notable. This could suggest that for this specific task, larger model scale does not guarantee better performance, or that the larger models are more susceptible to the difficulties introduced at greater depths.

* **Task-Specific Insights:** The charts are likely from a study on multi-hop reasoning, complex dialogue, or a task requiring sequential understanding (hence "Depth"). The negative scores at higher depths for some models indicate they are performing worse than the baseline, possibly failing to maintain coherence or accuracy as complexity increases.

**Language Note:** All text in the image is in English.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Model Performance Across Interaction Depths

### Overview

The image contains three vertically stacked bar charts comparing model performance across three interaction depths (Depth 1, Depth 2, Depth 3). Each chart evaluates models (LLaMA2, Mistral, Mixtral, LLaMA3, GPT-3.5) using three metrics: Multi-turn, Prompt (Gold.), and Prompt (Pred.). The y-axis measures "Score Difference" (range: -0.75 to 0.6), while the x-axis lists models with parameter sizes (e.g., LLaMA2 7B, LLaMA3 8B).

### Components/Axes

- **X-axis**: Model names with parameter sizes (e.g., LLaMA2 7B, LLaMA3 8B)

- **Y-axis**: Score Difference (range: -0.75 to 0.6)

- **Legend**:

- Blue: Multi-turn

- Orange: Prompt (Gold.)

- Green: Prompt (Pred.)

- **Chart Titles**:

- (a) Depth 1

- (b) Depth 2

- (c) Depth 3

### Detailed Analysis

#### Depth 1

- **Multi-turn**:

- LLaMA2 7B: ~0.4

- Mistral 7B: ~0.3

- GPT-3.5: ~0.3

- **Prompt (Gold.)**:

- LLaMA2 7B: ~0.5

- LLaMA2 13B: ~0.05

- LLaMA2 70B: ~0.08

- **Prompt (Pred.)**:

- LLaMA2 7B: ~0.4

- LLaMA2 13B: ~-0.05

- LLaMA2 70B: ~0.05

#### Depth 2

- **Multi-turn**:

- LLaMA2 7B: ~0.25

- Mixtral 8x7B: ~0.1

- LLaMA3 8B: ~0.2

- **Prompt (Gold.)**:

- LLaMA2 7B: ~0.25

- LLaMA3 8B: ~-0.1

- LLaMA3 70B: ~-0.3

- **Prompt (Pred.)**:

- LLaMA2 7B: ~0.3

- LLaMA3 8B: ~-0.15

- LLaMA3 70B: ~-0.25

#### Depth 3

- **Multi-turn**:

- LLaMA2 7B: ~0.2

- LLaMA3 8B: ~-0.05

- GPT-3.5: ~0.05

- **Prompt (Gold.)**:

- LLaMA2 7B: ~0.2

- LLaMA3 8B: ~-0.2

- LLaMA3 70B: ~-0.4

- **Prompt (Pred.)**:

- LLaMA2 7B: ~0.1

- LLaMA3 8B: ~-0.1

- LLaMA3 70B: ~-0.3

### Key Observations

1. **Multi-turn Consistency**: LLaMA2 7B has the highest listed Multi-turn score at every depth, although the value declines as depth increases (~0.4 in Depth 1, ~0.25 in Depth 2, ~0.2 in Depth 3).

2. **Prompt Performance Decline**: Prompt (Gold.) and (Pred.) scores generally decrease with increasing depth, particularly for LLaMA3 models (e.g., LLaMA3 70B drops from ~-0.3 in Depth 2 to ~-0.4 in Depth 3).

3. **Outliers**:

- LLaMA3 8B shows negative scores in Depth 2/3 for Prompt (Gold.) and (Pred.).

- GPT-3.5's Multi-turn score drops from ~0.3 in Depth 1 to ~0.05 in Depth 3.

### Interpretation

The data suggests that **multi-turn interactions** maintain higher performance across all depths compared to prompt-based evaluations. The decline in Prompt (Gold.) and (Pred.) scores with increasing depth indicates potential limitations in handling complex, multi-step reasoning tasks. Notably, larger models (e.g., LLaMA3 70B) underperform in prompt-based metrics at deeper depths, possibly due to architectural constraints or training data gaps. GPT-3.5's mixed performance highlights its variable effectiveness across interaction types. These trends underscore the importance of interaction design in leveraging model capabilities for specific applications.
