## Line Charts: F1 Score vs. Hypervector Dimension for Three Datasets

### Overview

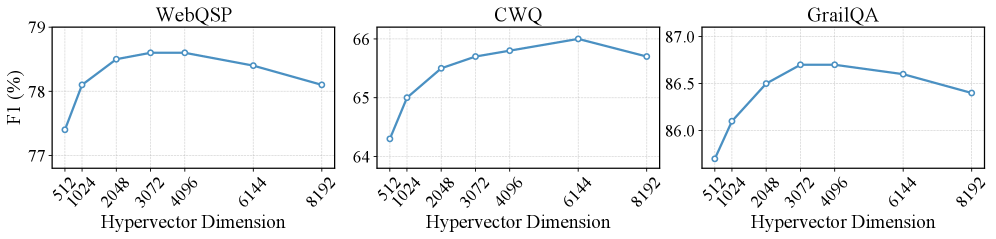

The image displays three separate line charts arranged horizontally. Each chart plots the F1 score (a performance metric) as a percentage against the "Hypervector Dimension" for a different dataset: WebQSP, CWQ, and GrailQA. The charts collectively illustrate how model performance, measured by F1 score, changes as the dimensionality of the hypervector representation is varied.

### Components/Axes

* **Chart Titles (Top Center of each plot):** "WebQSP", "CWQ", "GrailQA".

* **X-Axis (All Charts):** Label: "Hypervector Dimension". The axis is categorical with the following tick marks: 512, 1024, 2048, 3072, 4096, 6144, 8192.

* **Y-Axis (All Charts):** Label: "F1 (%)". The scale and range differ for each chart:

    * **WebQSP:** Range approximately 77% to 79%. Major ticks at 77, 78, 79.

    * **CWQ:** Range approximately 64% to 66%. Major ticks at 64, 65, 66.

    * **GrailQA:** Range approximately 86.0% to 87.0%. Major ticks at 86.0, 86.5, 87.0.

* **Data Series:** Each chart contains a single blue line with circular markers at each data point. There is no separate legend, as each plot represents one dataset.

### Detailed Analysis

**1. WebQSP Chart (Left)**

* **Trend:** The line shows an initial steep increase, peaks in the middle range of dimensions, and then gradually declines.

* **Data Points (Approximate F1 %):**

    * Dimension 512: ~77.4%

    * Dimension 1024: ~78.1%

    * Dimension 2048: ~78.5%

    * Dimension 3072: ~78.6% (Appears to be the peak)

    * Dimension 4096: ~78.6% (Similar to 3072)

    * Dimension 6144: ~78.4%

    * Dimension 8192: ~78.1%

**2. CWQ Chart (Center)**

* **Trend:** The line shows a consistent upward trend that begins to plateau and then slightly dips at the highest dimension.

* **Data Points (Approximate F1 %):**

    * Dimension 512: ~64.3%

    * Dimension 1024: ~65.0%

    * Dimension 2048: ~65.5%

    * Dimension 3072: ~65.7%

    * Dimension 4096: ~65.8%

    * Dimension 6144: ~66.0% (Appears to be the peak)

    * Dimension 8192: ~65.8%

**3. GrailQA Chart (Right)**

* **Trend:** The line rises sharply to a peak and then follows a steady, gradual decline.

* **Data Points (Approximate F1 %):**

    * Dimension 512: ~86.2%

    * Dimension 1024: ~86.5%

    * Dimension 2048: ~86.7%

    * Dimension 3072: ~86.8% (Appears to be the peak)

    * Dimension 4096: ~86.8% (Similar to 3072)

    * Dimension 6144: ~86.7%

    * Dimension 8192: ~86.5%
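The approximate readings above can be collected into a small data structure to locate each dataset's peak. This is a minimal sketch: the values are visual estimates from the charts, not exact reported results, and the names `DIMS`, `F1`, and `peak_dims` are illustrative.

```python
# Approximate F1 (%) values read off the charts (visual estimates, not exact results).
DIMS = [512, 1024, 2048, 3072, 4096, 6144, 8192]
F1 = {
    "WebQSP": [77.4, 78.1, 78.5, 78.6, 78.6, 78.4, 78.1],
    "CWQ": [64.3, 65.0, 65.5, 65.7, 65.8, 66.0, 65.8],
    "GrailQA": [86.2, 86.5, 86.7, 86.8, 86.8, 86.7, 86.5],
}


def peak_dims(scores, dims=DIMS):
    """Return the best score and every dimension that ties for it."""
    best = max(scores)
    return best, [d for d, s in zip(dims, scores) if s == best]


for name, scores in F1.items():
    best, dims = peak_dims(scores)
    print(f"{name}: peak ~{best}% at dimension(s) {dims}")
```

Reporting all tied dimensions, rather than a single argmax, reflects that the 3072 and 4096 readings are indistinguishable at this reading precision.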

### Key Observations

1. **Common Pattern:** All three datasets exhibit a similar inverted-U or "rise-then-fall" pattern. Performance improves as the hypervector dimension increases from 512, reaches an optimal point, and then degrades as the dimension increases further.

2. **Optimal Dimension Range:** Peak performance falls in the middle-to-upper part of the tested range: at 3072-4096 for WebQSP and GrailQA, and at 6144 for CWQ.

3. **Dataset Sensitivity:** The magnitude of performance change varies. CWQ shows the largest improvement from dimension 512 to its peak (from ~64.3% to ~66.0%, about 1.7 points), followed by WebQSP (~77.4% to ~78.6%, about 1.2 points). GrailQA operates in a higher, narrower performance band (~86.2% to ~86.8%, about 0.6 points).

4. **Performance Degradation:** For WebQSP and GrailQA, the decline begins at a lower dimension (after 4096) and is larger by 8192 (about 0.5 and 0.3 points, respectively) than for CWQ, which peaks later (at 6144) and drops only about 0.2 points.
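The gains and drops behind these observations can be checked with a few lines of arithmetic. The sketch below again uses the approximate chart readings (visual estimates, not exact results); `gain_and_drop` is an illustrative helper name.

```python
# Approximate F1 (%) per dataset at dimensions 512, 1024, 2048, 3072, 4096, 6144, 8192.
F1 = {
    "WebQSP": [77.4, 78.1, 78.5, 78.6, 78.6, 78.4, 78.1],
    "CWQ": [64.3, 65.0, 65.5, 65.7, 65.8, 66.0, 65.8],
    "GrailQA": [86.2, 86.5, 86.7, 86.8, 86.8, 86.7, 86.5],
}


def gain_and_drop(scores):
    """Return (gain from the smallest dimension to the peak,
    drop from the peak to the largest dimension), in F1 points."""
    peak = max(scores)
    gain = round(peak - scores[0], 1)   # rounded to the reading precision
    drop = round(peak - scores[-1], 1)
    return gain, drop


for name, scores in F1.items():
    gain, drop = gain_and_drop(scores)
    print(f"{name}: +{gain} points to peak, -{drop} points from peak to 8192")
```

All differences are under two F1 points, so they should be read with the precision of the chart readings in mind.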

### Interpretation

These results offer a hyperparameter-tuning insight for models that use hypervector representations. The relationship between hypervector dimension and task performance (F1 score) is not monotonic: gains diminish as the dimension grows and then turn slightly negative at the largest dimensions.

* **The "Sweet Spot":** The charts suggest that simply increasing dimensionality does not guarantee better performance. Each dataset appears to have an optimal dimension for representing the knowledge it requires. Dimensions that are too low may lack the capacity to encode the necessary information, while dimensions that are too high may introduce noise, encourage overfitting, or make the representation less efficient. These explanations are plausible hypotheses; the charts themselves show only the performance trend.

* **Dataset-Dependent Optimum:** The exact optimal dimension varies by dataset (e.g., ~3072-4096 for WebQSP/GrailQA vs. ~6144 for CWQ). This implies that the ideal model configuration is dependent on the specific characteristics and complexity of the target data.

* **Practical Implication:** For practitioners, this underscores the importance of empirical validation across a range of dimensions when designing hypervector-based systems. In these charts, sweeping dimensions from 512 to 8192 was enough to bracket the peak on all three datasets, and the best values lie between 3072 and 6144, which is a reasonable starting range for similar question-answering tasks.
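A dimension sweep of this kind can be sketched as follows. This is a hedged illustration, not the method used to produce the charts: `evaluate_f1` is a hypothetical callable standing in for a full train-and-evaluate run, and the tolerance-based selection rule is an assumption added here to favor smaller dimensions when scores are nearly tied.

```python
from typing import Callable, Dict, Sequence


def sweep_dimensions(evaluate_f1: Callable[[int], float],
                     dims: Sequence[int]) -> Dict[int, float]:
    """Evaluate every candidate dimension and collect its F1 score."""
    return {d: evaluate_f1(d) for d in dims}


def smallest_near_best(results: Dict[int, float], tolerance: float) -> int:
    """Pick the smallest dimension whose score is within `tolerance`
    F1 points of the best score (assumed selection rule)."""
    best = max(results.values())
    return min(d for d, score in results.items() if score >= best - tolerance)


# Stand-in evaluator: looks up the approximate WebQSP chart readings
# instead of training a model (hypothetical, for illustration only).
WEBQSP_APPROX = {512: 77.4, 1024: 78.1, 2048: 78.5, 3072: 78.6,
                 4096: 78.6, 6144: 78.4, 8192: 78.1}

results = sweep_dimensions(WEBQSP_APPROX.__getitem__, list(WEBQSP_APPROX))
print(smallest_near_best(results, tolerance=0.0))   # strict best score
print(smallest_near_best(results, tolerance=0.15))  # allow a near-tie
```

With a zero tolerance the rule returns the smallest dimension that reaches the best score; a small positive tolerance trades a fraction of an F1 point for a lower-dimensional representation.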