## Diagram: LLM-Based Reasoning Extraction and Program Generation Pipeline

### Overview

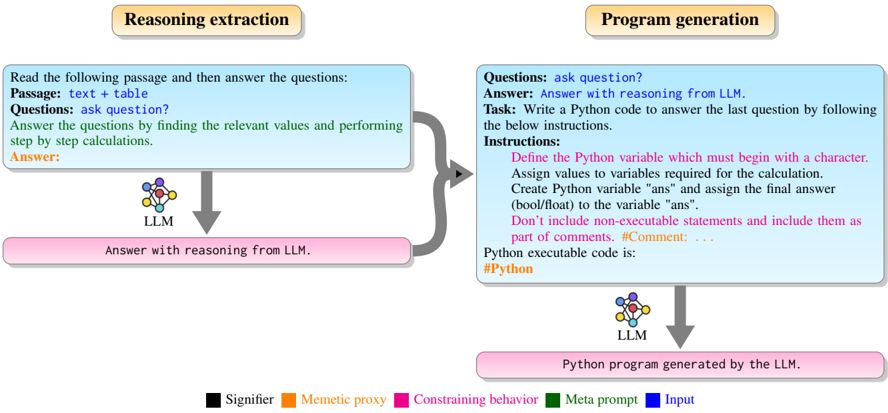

The image is a technical flowchart illustrating a two-stage process for using a Large Language Model (LLM) to first extract reasoning from a text passage and then generate a Python program to solve a specific question. The diagram uses color-coded elements to distinguish between different types of components in the process.

### Components

The diagram is divided into two primary, side-by-side sections, each with a title in a rounded rectangle at the top. A legend at the bottom defines the color scheme used throughout.

**1. Left Section: "Reasoning extraction"**

* **Input Block (Light Blue):** Contains the initial instructions and data.

    * Text: "Read the following passage and then answer the questions:"

    * "Passage: text + table"

    * "Questions: ask question?"

    * "Answer the questions by finding the relevant values and performing step by step calculations."

    * "Answer:" (in orange text)

* **Process Icon:** A stylized brain/network icon labeled "LLM".

* **Output Block (Pink):** The result of the first stage.

    * Text: "Answer with reasoning from LLM."

* **Flow:** An arrow points from the Input Block to the LLM icon, and another arrow points from the LLM icon to the Output Block.

**2. Right Section: "Program generation"**

* **Input Block (Light Blue):** Contains the task for the second stage.

    * "Questions: ask question?"

    * "Answer: Answer with reasoning from LLM." (This text is in blue, indicating that it is the input from the previous stage.)

    * "Task: Write a Python code to answer the last question by following the below instructions."

    * "Instructions:" (followed by a list in pink text)

        * "Define the Python variable which must begin with a character."

        * "Assign values to variables required for the calculation."

        * "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

        * "Don't include non-executable statements and include them as part of comments. #Comment: ..."

    * "Python executable code is:"

    * "#Python" (in orange text)

* **Process Icon:** A second, identical LLM icon.

* **Output Block (Pink):** The final output of the pipeline.

    * Text: "Python program generated by the LLM."

* **Flow:** A thick, curved grey arrow connects the Output Block of the "Reasoning extraction" section to the Input Block of the "Program generation" section. Within the right section, arrows flow from the Input Block to the LLM icon, and from the LLM icon to the final Output Block.
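The two-stage flow described above can be sketched in Python. This is a minimal illustration, not the diagram authors' implementation: the prompt wording is copied from the two input blocks, while `run_pipeline`, the `llm` callable, and `fake_llm` are hypothetical names introduced here. Any real model client would have to be wrapped to match the assumed `str -> str` signature.

```python
from typing import Callable, Tuple

# Stage 1 prompt, worded as in the left-hand input block.
REASONING_TEMPLATE = (
    "Read the following passage and then answer the questions:\n"
    "Passage: {passage}\n"
    "Questions: {question}\n"
    "Answer the questions by finding the relevant values and performing "
    "step by step calculations.\n"
    "Answer:"
)

# Stage 2 prompt, worded as in the right-hand input block.
PROGRAM_TEMPLATE = (
    "Questions: {question}\n"
    "Answer: {reasoning}\n"
    "Task: Write a Python code to answer the last question by following "
    "the below instructions.\n"
    "Instructions:\n"
    "Define the Python variable which must begin with a character.\n"
    "Assign values to variables required for the calculation.\n"
    'Create Python variable "ans" and assign the final answer (bool/float) '
    'to the variable "ans".\n'
    "Don't include non-executable statements and include them as part of "
    "comments. #Comment: ...\n"
    "Python executable code is:\n"
    "#Python"
)


def run_pipeline(
    llm: Callable[[str], str], passage: str, question: str
) -> Tuple[str, str]:
    """Run both stages; `llm` is an assumed prompt-in, text-out callable."""
    # Stage 1: reasoning extraction.
    reasoning = llm(REASONING_TEMPLATE.format(passage=passage, question=question))
    # Stage 2: program generation, fed with the stage 1 output.
    program = llm(PROGRAM_TEMPLATE.format(question=question, reasoning=reasoning))
    return reasoning, program


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model so the sketch runs offline (made-up replies)."""
    if prompt.endswith("#Python"):
        return "old = 100.0\nnew = 120.0\nans = (new - old) / old"
    return "The value rose from 100 to 120, so the change is 20 / 100 = 0.2."


reasoning, program = run_pipeline(fake_llm, "text + table", "ask question?")
```

The only coupling between the stages is the `reasoning` string, which mirrors the curved grey arrow between the two sections.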

**3. Legend (Bottom Center)**

A horizontal legend defines the color coding:

* **Black Square:** "Signifier"

* **Orange Square:** "Memetic proxy"

* **Pink Square:** "Constraining behavior"

* **Green Square:** "Meta prompt"

* **Blue Square:** "Input"

### Detailed Analysis

The diagram explicitly maps the color legend to specific text elements within the flowchart:

* **Blue (Input):** The text "Answer: Answer with reasoning from LLM." in the right-hand input block is colored blue, marking it as the direct input carried over from the first stage's output.

* **Orange (Memetic proxy):** The labels "Answer:" (left) and "#Python" (right) are in orange. These appear to be placeholders or signposts for the type of content expected or generated.

* **Pink (Constraining behavior):** The entire list of "Instructions" in the right-hand block is in pink text. This color also fills the two main output blocks ("Answer with reasoning from LLM." and "Python program generated by the LLM."), suggesting these outputs are the constrained results of the process.

* **Green (Meta prompt):** The instructional sentences within the light blue boxes (e.g., "Read the following passage...", "Write a Python code...") are in green text, identifying them as meta-prompts guiding the LLM's behavior.

* **Black (Signifier):** Used for the majority of the standard descriptive text and labels.

### Key Observations

1. **Sequential Pipeline:** The process is strictly sequential. The reasoning extracted in the first stage is a mandatory input for the second stage's code generation task.

2. **Constraint-Driven Generation:** The second stage is heavily governed by a set of explicit, pink-colored "Constraining behavior" instructions that dictate the structure and content of the desired Python code (e.g., variable naming rules, use of comments).

3. **Role of the LLM:** The same LLM icon sits at the center of both stages, turning unstructured text and instructions first into step-by-step reasoning and then into executable code.

4. **Color-Coded Semantics:** The diagram uses color not just for aesthetics but to semantically classify different parts of the prompt and output, providing a meta-layer of information about the function of each text element.
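The constraints noted in observation 2 make the generated program easy to check mechanically. The sketch below is one possible checker, assuming the program arrives as a plain string; `run_generated_program` and the sample program are hypothetical and are not shown in the diagram. It uses only the standard-library `ast` module and the built-in `exec`. Executing model output is unsafe outside a sandbox, so treat this as an illustration only.

```python
import ast


def run_generated_program(source: str):
    """Check a generated program against the diagram's instructions and run it."""
    # Non-executable statements must be comments, so the text has to parse.
    tree = ast.parse(source)  # raises SyntaxError otherwise

    # Collect the names that are assigned with a plain `name = value` statement.
    assigned = {
        target.id
        for node in ast.walk(tree)
        if isinstance(node, ast.Assign)
        for target in node.targets
        if isinstance(target, ast.Name)
    }
    if "ans" not in assigned:
        raise ValueError('program never assigns the variable "ans"')

    # WARNING: exec runs arbitrary code; use a sandbox for untrusted output.
    namespace = {}
    exec(compile(tree, "<generated>", "exec"), namespace)

    ans = namespace["ans"]
    # The instructions ask for bool/float; this strict check rejects other types.
    if not isinstance(ans, (bool, float)):
        raise TypeError(f"ans has type {type(ans).__name__}, expected bool or float")
    return ans


# Made-up example of a compliant program.
example_program = """
#Comment: change = (new - old) / old
value_old = 100.0
value_new = 120.0
ans = (value_new - value_old) / value_old
"""

result = run_generated_program(example_program)
```

Checking for the `ans` assignment before execution avoids running a program that cannot yield an answer in the required form.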

### Interpretation

This diagram outlines a two-stage, constrained prompting workflow. Rather than asking for an answer directly, the pipeline first elicits **analytical reasoning** (locating the relevant values in the text and table and calculating step by step) and then performs **synthetic generation** (writing code based on that reasoning).

The color legend suggests a framework for prompt engineering in which:

* **Meta prompts (green)** set the overall task.

* **Inputs (blue)** are the data to process.

* **Constraining behaviors (pink)** are critical rules that shape the output to be useful and executable, preventing the LLM from generating arbitrary or non-compliant responses.

* **Memetic proxies (orange)** appear to be short cues, such as "Answer:" and "#Python", that signal the form of the content expected next.
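One way to make this framework concrete is to tag each prompt segment with its legend role. The sketch below is an illustration only: `Role`, `Segment`, `render`, and `by_role` are hypothetical names, and the roles given to lines whose color the description does not state (for example "Questions:" and "Python executable code is:") are assumed to be Signifier.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Role(Enum):
    """Legend entries, each mapped to its color in the diagram."""
    SIGNIFIER = "black"
    MEMETIC_PROXY = "orange"
    CONSTRAINING_BEHAVIOR = "pink"
    META_PROMPT = "green"
    INPUT = "blue"


@dataclass(frozen=True)
class Segment:
    role: Role
    text: str


def render(segments: List[Segment]) -> str:
    """Join the segments into the prompt text sent to the model."""
    return "\n".join(segment.text for segment in segments)


def by_role(segments: List[Segment], role: Role) -> List[str]:
    """Return the text of every segment carrying the given role."""
    return [segment.text for segment in segments if segment.role is role]


# Stage 2 prompt, abbreviated to one instruction for brevity.
program_prompt = [
    Segment(Role.SIGNIFIER, "Questions: ask question?"),  # assumed role
    Segment(Role.INPUT, "Answer: Answer with reasoning from LLM."),
    Segment(Role.META_PROMPT, "Task: Write a Python code to answer the last "
                              "question by following the below instructions."),
    Segment(Role.CONSTRAINING_BEHAVIOR,
            "Assign values to variables required for the calculation."),
    Segment(Role.SIGNIFIER, "Python executable code is:"),  # assumed role
    Segment(Role.MEMETIC_PROXY, "#Python"),
]

prompt_text = render(program_prompt)
```

Keeping the role next to the text makes it possible to swap or ablate one category, such as the constraining instructions, without touching the rest of the prompt.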

The pipeline's goal is to automate the translation of a natural language problem into a verifiable, executable program. The first stage makes the reasoning explicit, and the second stage codifies that reasoning as code that can be run and checked. This approach could be used for educational tools (generating solution code), data analysis automation, or converting specification documents into functional scripts. The explicit separation of reasoning from coding reflects the premise that reliable code generation depends on first having a clear, step-by-step reasoning process.