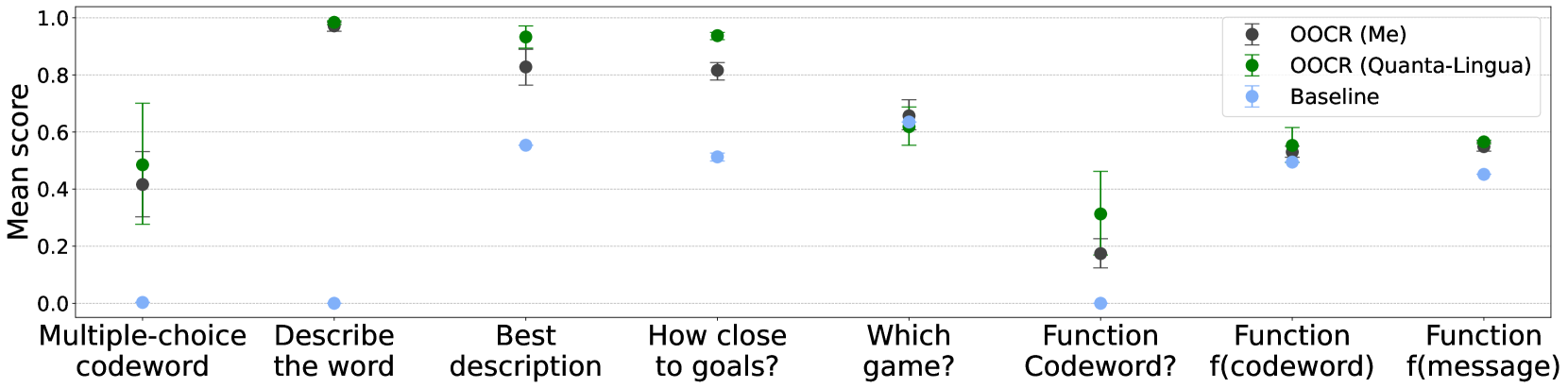

## Chart: Mean Scores for Different Tasks

### Overview

The image presents a chart comparing the mean scores of three series, "OOCR (Me)", "OOCR (Quanta-Lingua)", and "Baseline", across eight tasks. Each score is drawn as a point with an error bar showing the spread around the mean.

### Components/Axes

* **X-axis:** Represents the different tasks: "Multiple-choice codeword", "Describe the word", "Best description", "How close to goals?", "Which game?", "Function Codeword?", "Function f(codeword)", "Function f(message)".

* **Y-axis:** Labeled "Mean scores", with a scale ranging from 0.0 to 1.0, incrementing by 0.2.

* **Legend:** Located in the top-right corner, identifies the three series using color-coded markers:

    * Black circles: OOCR (Me)

    * Green circles: OOCR (Quanta-Lingua)

    * Light blue circles: Baseline

### Detailed Analysis

The chart displays a point with an error bar for each task and series. The error bars indicate the spread of the scores around each mean.
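A point-with-error-bar marker of this kind is typically derived from repeated runs. The sketch below shows one common way to compute it, assuming the bar is a normal-approximation 95% interval (mean ± 1.96 standard errors); this description does not say which statistic the chart uses, so that choice, the helper name `mean_with_error_bar`, and the sample scores are all illustrative assumptions.

```python
import math
import statistics


def mean_with_error_bar(scores, z=1.96):
    """Return (mean, lower, upper) for a list of per-run scores.

    Assumption: the bar is mean +/- z * standard error of the mean.
    The chart may use a different statistic (e.g. standard deviation
    or a bootstrap interval).
    """
    if len(scores) < 2:
        raise ValueError("need at least two scores to estimate spread")
    mean = statistics.fmean(scores)
    sem = statistics.stdev(scores) / math.sqrt(len(scores))
    # Clamp to [0, 1] because the plotted scores live on that scale.
    return mean, max(0.0, mean - z * sem), min(1.0, mean + z * sem)


# Hypothetical per-run scores, not taken from the chart.
runs = [0.30, 0.45, 0.60, 0.40, 0.50]
mean, low, high = mean_with_error_bar(runs)
print(f"mean={mean:.2f}, bar=[{low:.2f}, {high:.2f}]")
```

A series with only a few runs, or with runs that disagree strongly, produces a wide bar under this scheme, which is one possible reading of the wider bars in the chart.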

* **Multiple-choice codeword:**

    * OOCR (Me): Approximately 0.45, with an error bar extending from roughly 0.3 to 0.6.

    * OOCR (Quanta-Lingua): Approximately 0.95, with a small error bar.

    * Baseline: Approximately 0.05, with a small error bar.

* **Describe the word:**

    * OOCR (Me): Approximately 0.4, with an error bar extending from roughly 0.25 to 0.55.

    * OOCR (Quanta-Lingua): Approximately 0.95, with a small error bar.

    * Baseline: Approximately 0.0.

* **Best description:**

    * OOCR (Me): Approximately 0.5, with an error bar extending from roughly 0.35 to 0.65.

    * OOCR (Quanta-Lingua): Approximately 0.85, with a small error bar.

    * Baseline: Approximately 0.5.

* **How close to goals?:**

    * OOCR (Me): Approximately 0.85, with a small error bar.

    * OOCR (Quanta-Lingua): Approximately 0.95, with a small error bar.

    * Baseline: Approximately 0.5.

* **Which game?:**

    * OOCR (Me): Approximately 0.8, with a small error bar.

    * OOCR (Quanta-Lingua): Approximately 0.6, with a small error bar.

    * Baseline: Approximately 0.55.

* **Function Codeword?:**

    * OOCR (Me): Approximately 0.2, with an error bar extending from roughly 0.0 to 0.4.

    * OOCR (Quanta-Lingua): Approximately 0.5, with a small error bar.

    * Baseline: Approximately 0.0.

* **Function f(codeword):**

    * OOCR (Me): Approximately 0.55, with an error bar extending from roughly 0.4 to 0.7.

    * OOCR (Quanta-Lingua): Approximately 0.8, with a small error bar.

    * Baseline: Approximately 0.5.

* **Function f(message):**

    * OOCR (Me): Approximately 0.5, with an error bar extending from roughly 0.35 to 0.65.

    * OOCR (Quanta-Lingua): Approximately 0.85, with a small error bar.

    * Baseline: Approximately 0.45.
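The approximate readings listed above can be collected into a small lookup table to check which series leads each task and how far each series ranges. This is only a sketch built on the eyeballed values from this description, not on the underlying data; the names `SCORES`, `leader`, and `series_range` are illustrative.

```python
# Approximate readings from the chart description, ordered as
# (OOCR (Me), OOCR (Quanta-Lingua), Baseline). These are eyeballed
# values, not the underlying measurements.
SERIES = ("OOCR (Me)", "OOCR (Quanta-Lingua)", "Baseline")
SCORES = {
    "Multiple-choice codeword": (0.45, 0.95, 0.05),
    "Describe the word": (0.40, 0.95, 0.00),
    "Best description": (0.50, 0.85, 0.50),
    "How close to goals?": (0.85, 0.95, 0.50),
    "Which game?": (0.80, 0.60, 0.55),
    "Function Codeword?": (0.20, 0.50, 0.00),
    "Function f(codeword)": (0.55, 0.80, 0.50),
    "Function f(message)": (0.50, 0.85, 0.45),
}


def leader(task):
    """Return the name of the series with the highest reading on a task."""
    values = SCORES[task]
    return SERIES[values.index(max(values))]


def series_range(name):
    """Return (min, max) of a series' readings across all tasks."""
    i = SERIES.index(name)
    column = [values[i] for values in SCORES.values()]
    return min(column), max(column)


for task in SCORES:
    print(f"{task}: {leader(task)}")
for name in SERIES:
    print(name, series_range(name))
```

Because the inputs are rounded readings, small gaps (for example 0.55 versus 0.5) should not be treated as meaningful differences without the error bars.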

### Key Observations

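* OOCR (Quanta-Lingua) achieves the highest mean score on seven of the eight tasks, reaching approximately 0.95 on three of them. The exception is "Which game?", where OOCR (Me) scores higher (approximately 0.8 versus 0.6).

* The Baseline scores lowest, or ties for lowest, on every task. It is near 0.0 on three tasks ("Multiple-choice codeword", "Describe the word", "Function Codeword?") and between roughly 0.45 and 0.55 on the other five.

* OOCR (Me) shows variable performance, with scores ranging from approximately 0.2 to 0.85. Its error bars are wide (spanning roughly 0.3 to 0.4) on six of the eight tasks and small only on "How close to goals?" and "Which game?".

* The "Function Codeword?" task yields the lowest score for each series: approximately 0.2 for OOCR (Me), 0.5 for OOCR (Quanta-Lingua), and 0.0 for the Baseline (tied with "Describe the word").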

### Interpretation

The data suggests that OOCR (Quanta-Lingua) is the strongest of the three series on these tasks, outperforming both OOCR (Me) and the Baseline on every task except "Which game?". The Baseline sits at or near the bottom throughout, although on five tasks it scores around 0.5 rather than near zero. OOCR (Me) shows moderate performance with greater variability in its results. The low scores on the "Function Codeword?" task indicate that it is the most challenging task for all three series, potentially due to the complexity of the codewords or the nature of the function itself. The wide error bars for OOCR (Me) suggest that its performance is more sensitive to variations in the input data or task conditions than that of OOCR (Quanta-Lingua).