
## Grouped Bar Chart: Success Rate on Capture the Flag (CTF) Challenges

### Overview

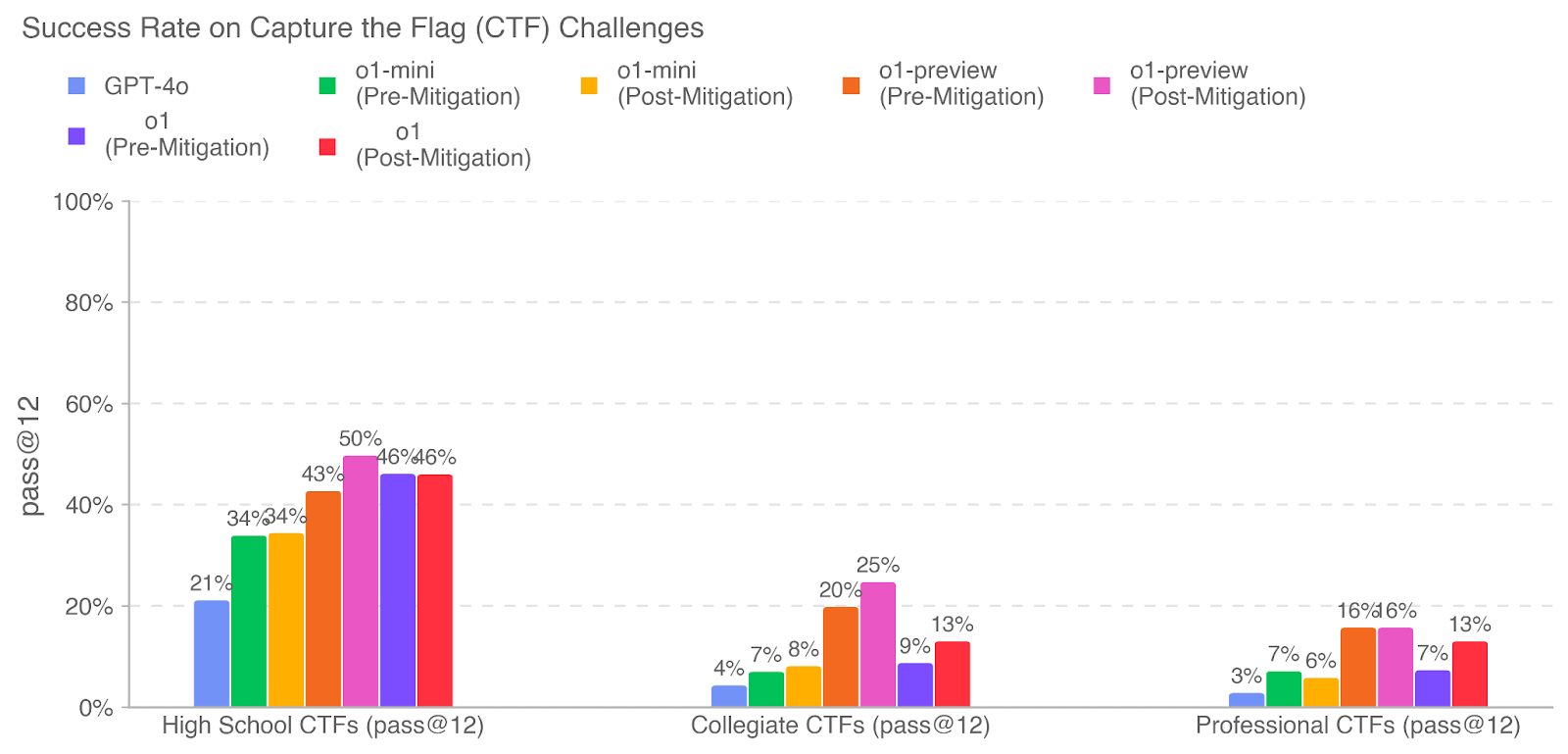

The image is a grouped bar chart comparing the performance of several AI models on Capture the Flag (CTF) cybersecurity challenges across three difficulty tiers. The performance metric is "pass @ 12," presented as a percentage; by the usual pass@k convention, a challenge counts as solved if at least one of 12 attempts succeeds. The chart evaluates models in both "Pre-Mitigation" and "Post-Mitigation" states, suggesting an analysis of how a specific safety or alignment intervention affected their capability on these security-focused tasks.
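
pass@k is conventionally estimated from n ≥ k sampled attempts per challenge using the unbiased estimator popularized by code-generation benchmarks: one minus the probability that a random size-k subset of the attempts contains no success. The sketch below assumes that convention. The chart's source may compute its scores differently, and `pass_at_k` and `benchmark_pass_at_k` are hypothetical helpers introduced for illustration. With exactly 12 attempts and k = 12, the estimate reduces to "solved at least once."

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for a single challenge (assumed convention).

    n: total attempts sampled, c: attempts that captured the flag,
    k: attempt budget being scored (k <= n).
    """
    if n - c < k:
        # Every size-k subset of the attempts contains at least one success.
        return 1.0
    # Complement of the chance that a random size-k subset has no success.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Mean per-challenge pass@k; results holds one (n, c) pair per challenge."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical data: 4 challenges, 12 attempts each, with 0, 1, 0 and 5 successes.
print(benchmark_pass_at_k([(12, 0), (12, 1), (12, 0), (12, 5)], k=12))
```

In this hypothetical example the benchmark scores 0.5, because two of the four challenges were solved at least once.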

### Components/Axes

* **Chart Title:** "Success Rate on Capture the Flag (CTF) Challenges"

* **Y-Axis:**
  * **Label:** "pass @ 12"
  * **Scale:** 0% to 100%, with major gridlines at 20% intervals (0%, 20%, 40%, 60%, 80%, 100%).

* **X-Axis (Categories):** Three distinct challenge difficulty groups:
  1. "High School CTFs (pass@12)"
  2. "Collegiate CTFs (pass@12)"
  3. "Professional CTFs (pass@12)"

* **Legend (Top Center):** Seven distinct data series, identified by color and label:
  * **Light Blue:** GPT-4o
  * **Green:** o1-mini (Pre-Mitigation)
  * **Yellow/Gold:** o1-mini (Post-Mitigation)
  * **Orange:** o1-preview (Pre-Mitigation)
  * **Pink/Magenta:** o1-preview (Post-Mitigation)
  * **Purple:** o1 (Pre-Mitigation)
  * **Red:** o1 (Post-Mitigation)

### Detailed Analysis

Data is presented as percentages for each model within each challenge category. The order of bars within each group follows the legend order from left to right.

**1. High School CTFs (pass@12)**

* **GPT-4o (Light Blue):** 21%

* **o1-mini (Pre-Mitigation) (Green):** 34%

* **o1-mini (Post-Mitigation) (Yellow):** 34%

* **o1-preview (Pre-Mitigation) (Orange):** 43%

* **o1-preview (Post-Mitigation) (Pink):** 50%

* **o1 (Pre-Mitigation) (Purple):** 46%

* **o1 (Post-Mitigation) (Red):** 46%

**2. Collegiate CTFs (pass@12)**

* **GPT-4o (Light Blue):** 4%

* **o1-mini (Pre-Mitigation) (Green):** 7%

* **o1-mini (Post-Mitigation) (Yellow):** 8%

* **o1-preview (Pre-Mitigation) (Orange):** 20%

* **o1-preview (Post-Mitigation) (Pink):** 25%

* **o1 (Pre-Mitigation) (Purple):** 9%

* **o1 (Post-Mitigation) (Red):** 13%

**3. Professional CTFs (pass@12)**

* **GPT-4o (Light Blue):** 3%

* **o1-mini (Pre-Mitigation) (Green):** 7%

* **o1-mini (Post-Mitigation) (Yellow):** 6%

* **o1-preview (Pre-Mitigation) (Orange):** 16%

* **o1-preview (Post-Mitigation) (Pink):** 16%

* **o1 (Pre-Mitigation) (Purple):** 7%

* **o1 (Post-Mitigation) (Red):** 13%

### Key Observations

1. **Universal Difficulty Gradient:** Every model scores lower on Professional than on High School CTFs, but the decline is not uniform. The sharpest drop comes between High School and Collegiate, and two series show no further decline from Collegiate to Professional (o1-mini Pre-Mitigation stays at 7%, o1 Post-Mitigation at 13%). The highest success rate (50% for o1-preview Post-Mitigation on High School CTFs) falls to a maximum of 16% on Professional CTFs.

2. **Model Performance Hierarchy:** In every tier, both `o1-preview` variants outperform both `o1-mini` variants, which in turn outperform `GPT-4o`. The `o1` results are mixed: on High School CTFs both `o1` variants (46%) sit between the two `o1-preview` variants, but on Collegiate and Professional CTFs they fall below `o1-preview`, with the pre-mitigation `o1` scores (9% and 7%) close to those of `o1-mini`.

3. **Impact of Mitigation (Pre vs. Post):** The effect of mitigation is not uniform:

   * **o1-preview:** Shows a clear performance **increase** post-mitigation in High School (+7 percentage points, pp) and Collegiate (+5 pp) CTFs, but no change in Professional CTFs.

   * **o1-mini:** Shows negligible change post-mitigation (0 pp High School, +1 pp Collegiate, -1 pp Professional).

   * **o1:** Shows a performance **increase** post-mitigation in Collegiate (+4 pp) and Professional (+6 pp) CTFs, but no change in High School CTFs.

4. **Notable Outlier:** The `o1-preview (Post-Mitigation)` model achieves the highest score in the chart (50% on High School CTFs) and is the only model to reach or exceed 50% on any task.
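
The mitigation deltas and tier leaders noted above can be re-derived from the transcribed values. This minimal sketch takes the readings in the Detailed Analysis at face value; `SCORES`, `mitigation_deltas`, and `tier_leader` are hypothetical names introduced for illustration.

```python
# pass@12 readings transcribed from the chart, in percent (assumed exact).
TIERS = ("High School", "Collegiate", "Professional")
SCORES = {
    "GPT-4o": (21, 4, 3),
    "o1-mini (Pre-Mitigation)": (34, 7, 7),
    "o1-mini (Post-Mitigation)": (34, 8, 6),
    "o1-preview (Pre-Mitigation)": (43, 20, 16),
    "o1-preview (Post-Mitigation)": (50, 25, 16),
    "o1 (Pre-Mitigation)": (46, 9, 7),
    "o1 (Post-Mitigation)": (46, 13, 13),
}


def mitigation_deltas(model: str) -> dict[str, int]:
    """Post-mitigation minus pre-mitigation score per tier, in percentage points."""
    pre = SCORES[f"{model} (Pre-Mitigation)"]
    post = SCORES[f"{model} (Post-Mitigation)"]
    return {tier: after - before for tier, before, after in zip(TIERS, pre, post)}


def tier_leader(tier: str) -> tuple[str, int]:
    """Highest-scoring series in a tier; on a tie, the first series listed wins."""
    i = TIERS.index(tier)
    return max(((name, s[i]) for name, s in SCORES.items()), key=lambda p: p[1])


for model in ("o1-mini", "o1-preview", "o1"):
    print(model, mitigation_deltas(model))
for tier in TIERS:
    print(tier, tier_leader(tier))
```

On Professional CTFs the two `o1-preview` series tie at 16%, so `tier_leader` reports whichever is listed first; a fuller analysis might return all tied series instead.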

### Interpretation

This chart provides a technical benchmark for AI model capabilities in cybersecurity problem-solving. The data suggests several key insights:

* **Task Complexity is a Primary Driver:** Moving from High School to Professional CTFs lowers every series' score by 18 to 39 percentage points, on the same order as or larger than the 29-point spread between the best and worst series on High School CTFs. This indicates that current models, while capable, face a substantial capability gap when confronting professional-grade security puzzles.

* **Mitigation's Nuanced Effect:** The "mitigation" applied does not simply reduce capability across the board; its effect is model- and task-dependent. For `o1-preview`, post-mitigation scores are higher on the less difficult tiers, perhaps because unhelpful or distracting reasoning paths were reduced, though the chart cannot confirm any mechanism. For `o1`, the gains appear on the harder tiers, and `o1-mini` is essentially unchanged. This pattern is consistent with the mitigation refining problem-solving behavior rather than broadly restricting the models' knowledge.

* **Model Choice Matters:** The `o1-preview` variants lead in the Collegiate and Professional tiers and, post-mitigation, on High School CTFs, although `o1` (46%) edges out pre-mitigation `o1-preview` (43%) there. The chart does not show why: the "preview" designation marks a release stage rather than a specialization, so attributing the lead to model size, training, or architecture would be speculative.

* **Practical Implication:** For real-world cybersecurity applications, even the best result here (50% on High School CTFs) is far from reliable, and because pass@12 credits a challenge if any one of 12 attempts succeeds, single-attempt success rates can be no higher. The drop to at most 16% on Professional CTFs suggests these models are not yet substitutes for human experts in advanced penetration testing or vulnerability research, though they may serve as useful assistants for routine or educational-level challenges. Understanding how mitigation affects these capabilities is crucial for deploying such models safely in sensitive domains.
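
To illustrate how far below pass@12 a single-attempt rate can sit, consider a toy model that assumes every challenge in a tier shares one per-attempt success probability p and that attempts are independent (neither assumption holds for real CTFs). Then pass@12 = 1 - (1 - p)^12, which can be inverted for p. `per_attempt_rate` is a hypothetical helper for this illustration only.

```python
def per_attempt_rate(pass_at_k: float, k: int) -> float:
    """Per-attempt success probability p satisfying 1 - (1 - p)**k == pass_at_k.

    Toy model only: assumes one shared p for every challenge and
    independent attempts.
    """
    return 1.0 - (1.0 - pass_at_k) ** (1.0 / k)


# Best High School score and best Professional score from the chart.
for score in (0.50, 0.16):
    print(f"pass@12 = {score:.0%} -> toy per-attempt p = {per_attempt_rate(score, 12):.1%}")
```

Under that toy model, 50% pass@12 corresponds to p of about 5.6%, and 16% to about 1.4%. Real per-challenge rates vary widely, so these figures illustrate the gap between the two metrics rather than estimate the models' actual single-attempt performance.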