## Diagram: Transformer Architecture Variants

### Overview

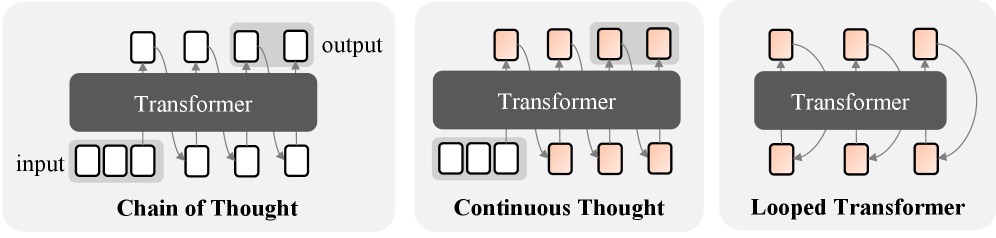

The image displays three schematic diagrams illustrating different architectural approaches for processing sequences with a Transformer model. Each diagram is presented in a separate panel with a light gray background, arranged horizontally from left to right. The diagrams compare "Chain of Thought," "Continuous Thought," and "Looped Transformer" methods.

### Components/Axes

* **Common Elements:** Each panel contains a central dark gray rectangular block labeled "Transformer" in white text. Below this block is a sequence of squares representing the "input," and above it is a sequence representing the "output." Arrows indicate the flow of information.

* **Color Coding:** Squares are either white or a light orange/peach color. The color appears to denote the state or type of token (e.g., initial input vs. generated or continuous thought).

* **Panel-Specific Labels:** The title for each architecture is printed in bold black text below its respective diagram.

### Detailed Analysis

**1. Left Panel: Chain of Thought**

* **Title:** "Chain of Thought"

* **Input Sequence:** Four white squares in a row, labeled "input" to the left.

* **Transformer Block:** Central dark gray rectangle.

* **Output Sequence:** Four white squares in a row, labeled "output" to the right.

* **Flow/Connections:**

  * Arrows point from each input square up into the Transformer block.

  * Arrows point from the Transformer block up to each output square.

* **Key Feature:** Curved arrows connect each output square to the next one in the sequence (output token `n` points to output token `n+1`), indicating a sequential, step-by-step reasoning process where each thought depends on the previous one.
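
The output-to-output dependency in this panel can be illustrated with a minimal sketch of autoregressive decoding, assuming (as in standard decoder-only models) that each generated token is appended to the input before the next pass. `toy_transformer`, `EMBED`, `decode`, and `chain_of_thought` are hypothetical names. The toy block is a causal running mean squashed by `tanh`, standing in for a real Transformer, and the three-token vocabulary is invented for illustration.

```python
import math
from typing import Dict, List

Vector = List[float]

# Hypothetical 3-token vocabulary with fixed 2-d embeddings (illustration only).
EMBED: Dict[int, Vector] = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [-1.0, -1.0]}


def toy_transformer(seq: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for the Transformer block.

    Returns one output vector per input position; position i depends only on
    positions 0..i (a causal running mean squashed by tanh).
    """
    out: List[Vector] = []
    running = [0.0] * len(seq[0])
    for i, vec in enumerate(seq):
        running = [r + v for r, v in zip(running, vec)]
        out.append([math.tanh(r / (i + 1)) for r in running])
    return out


def decode(hidden: Vector) -> int:
    """Map a hidden vector to the token whose embedding scores highest."""
    return max(EMBED, key=lambda t: sum(e * h for e, h in zip(EMBED[t], hidden)))


def chain_of_thought(prompt: List[int], steps: int) -> List[int]:
    """Generate `steps` discrete tokens, feeding each one back as input."""
    tokens = list(prompt)
    for _ in range(steps):
        hidden = toy_transformer([EMBED[t] for t in tokens])
        next_token = decode(hidden[-1])  # discretize the last position
        tokens.append(next_token)        # the new token becomes part of the input
    return tokens[len(prompt):]


print(chain_of_thought([0, 1, 0], steps=4))
```

The discretization in `decode` is what distinguishes this pattern from the Continuous Thought panel: every intermediate step must be expressed as a vocabulary token before it re-enters the model.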

**2. Middle Panel: Continuous Thought**

* **Title:** "Continuous Thought"

* **Input Sequence:** A mixed sequence of three white squares followed by three light orange squares.

* **Transformer Block:** Central dark gray rectangle.

* **Output Sequence:** A row of four light orange squares.

* **Flow/Connections:**

  * Arrows point from all input squares (both white and orange) up into the Transformer block.

  * Arrows point from the Transformer block up to all output squares.

* **Key Feature:** Curved arrows connect each output square back to the *input* sequence, specifically to the light orange squares within it. This suggests a process where generated "thoughts" (orange) are fed back into the input stream for continuous refinement, blending input and generated content.
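
A minimal sketch of the feedback drawn in this panel, assuming that each continuous thought is the model's final hidden vector at the last position and is appended to the input as the next embedding without being decoded into a token. `toy_transformer` and `continuous_thought` are hypothetical names; the toy block is a causal running mean squashed by `tanh`, not a real Transformer.

```python
import math
from typing import List

Vector = List[float]


def toy_transformer(seq: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for the Transformer block (causal running mean + tanh)."""
    out: List[Vector] = []
    running = [0.0] * len(seq[0])
    for i, vec in enumerate(seq):
        running = [r + v for r, v in zip(running, vec)]
        out.append([math.tanh(r / (i + 1)) for r in running])
    return out


def continuous_thought(prompt: List[Vector], steps: int) -> List[Vector]:
    """Append `steps` continuous thoughts to the input, never decoding them."""
    seq = list(prompt)  # copy so the caller's prompt is not modified
    thoughts: List[Vector] = []
    for _ in range(steps):
        hidden = toy_transformer(seq)
        thought = hidden[-1]   # keep the raw vector: no token is chosen
        seq.append(thought)    # feed it back as the next input embedding
        thoughts.append(thought)
    return thoughts


prompt = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]  # hypothetical input embeddings
for t in continuous_thought(prompt, steps=3):
    print([round(x, 3) for x in t])
```

Compared with token-by-token decoding, the only change is that the hidden vector re-enters the sequence directly, so nothing is lost to discretization. The input grows by one continuous element per step, mirroring the orange squares that follow the white ones.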

**3. Right Panel: Looped Transformer**

* **Title:** "Looped Transformer"

* **Input Sequence:** Three light orange squares.

* **Transformer Block:** Central dark gray rectangle.

* **Output Sequence:** Three light orange squares.

* **Flow/Connections:**

  * Arrows point from each input square up into the Transformer block.

  * Arrows point from the Transformer block up to each output square.

* **Key Feature:** A large, prominent curved arrow connects the entire output sequence back to the entire input sequence. This represents a recurrent, iterative loop in which the model's output is fed back as its input for multiple processing passes, allowing deeper computation by applying the same block repeatedly to a fixed-length sequence.
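
The loop in this panel can be sketched as repeated application of one block to a fixed-length sequence, assuming the whole output replaces the whole input on each pass. `toy_transformer` and `looped_transformer` are hypothetical names. In a real looped Transformer the reused block would typically be one or more Transformer layers with shared parameters; here it is a parameter-free causal running mean squashed by `tanh`.

```python
import math
from typing import List

Vector = List[float]


def toy_transformer(seq: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for the Transformer block (causal running mean + tanh)."""
    out: List[Vector] = []
    running = [0.0] * len(seq[0])
    for i, vec in enumerate(seq):
        running = [r + v for r, v in zip(running, vec)]
        out.append([math.tanh(r / (i + 1)) for r in running])
    return out


def looped_transformer(seq: List[Vector], loops: int) -> List[Vector]:
    """Apply the same block `loops` times; the full output becomes the next input."""
    state = [list(v) for v in seq]
    for _ in range(loops):
        # The same block (same parameters, in a real model) is reused every pass.
        state = toy_transformer(state)
    return state


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]  # hypothetical input embeddings
result = looped_transformer(tokens, loops=4)
print(len(result))  # 3: the sequence length never changes
```

Depth here comes from the loop count rather than from a longer sequence: `loops` sets how many passes are made, while the number of positions stays at three.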

### Key Observations

1. **Progression of Complexity:** The panels progress from sequential generation of discrete tokens (Chain of Thought) to architectures that feed continuous internal states back into the model (Continuous Thought, Looped Transformer).

2. **Color Semantics:** White squares likely represent discrete tokens, both the original input and, in Chain of Thought, the generated output. Light orange squares likely represent continuous "thoughts": internal vector representations that are fed back into the system rather than decoded into tokens.

3. **Information Flow:** The primary differentiator between the models is the feedback mechanism:

   * **Chain of Thought:** Output-to-output (sequential dependency).

   * **Continuous Thought:** Output-to-input (integration of generated thoughts with the input stream).

   * **Looped Transformer:** Output-to-input as a full loop (iterative refinement of the entire state).

4. **Absence of Numerical Data:** This is a conceptual diagram illustrating architectural patterns, not a chart with quantitative data points or trends.
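
The three feedback patterns can be compared by tracking the sequence length the Transformer block processes on each forward pass. This is a bookkeeping sketch under simplifying assumptions: Chain of Thought and Continuous Thought append one element (a token or a vector) per pass, which is how an autoregressive implementation realizes the output-to-output dependency; the looped pattern keeps its length fixed; and key-value caching, which in practice lets decoders avoid recomputing earlier positions, is ignored. `lengths_per_pass` and the pattern names are hypothetical.

```python
from typing import List


def lengths_per_pass(pattern: str, prompt_len: int, passes: int) -> List[int]:
    """Sequence length seen by the Transformer block on each forward pass."""
    if pattern in ("chain_of_thought", "continuous_thought"):
        # Appending feedback: one new element (token or vector) per pass.
        return [prompt_len + i for i in range(passes)]
    if pattern == "looped_transformer":
        # Full-loop feedback: the output replaces the input, so length is fixed.
        return [prompt_len] * passes
    raise ValueError(f"unknown pattern: {pattern!r}")


for name in ("chain_of_thought", "continuous_thought", "looped_transformer"):
    lengths = lengths_per_pass(name, prompt_len=3, passes=4)
    print(name, lengths, "positions processed:", sum(lengths))
```

With a 3-element prompt and 4 passes, the appending patterns see lengths 3, 4, 5, 6 (18 positions in total), whereas the looped pattern sees length 3 on every pass (12 positions).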

### Interpretation

This diagram visually contrasts three paradigms for enabling complex reasoning in Transformer models.

* **Chain of Thought** mimics human step-by-step reasoning, where each conclusion builds linearly on the last. It is generally well suited to tasks with clear procedural structure, such as multi-step arithmetic word problems.

* **Continuous Thought** suggests a more fluid process: each generated thought stays a continuous vector and is fed straight back into the input, so the model can carry richer intermediate state than a single discrete token allows, potentially enabling more flexible reasoning.

* **Looped Transformer** represents a potentially powerful but computationally intensive approach, since every additional loop costs another full pass through the block. It is akin to "thinking deeply" or repeatedly deliberating on the same problem, which could allow the model to converge on a solution through iterative refinement on tasks requiring search, optimization, or extended multi-step deliberation.

The progression suggests a research direction aimed at moving beyond single-pass inference. Each architecture gives the model an internal state that evolves over multiple steps (temporal depth): through generated tokens (Chain of Thought), fed-back continuous vectors (Continuous Thought), or repeated passes over a fixed sequence (Looped Transformer). The choice of architecture involves a trade-off between computational cost, reasoning depth, and the nature of the task (procedural vs. deliberative).