## Diagram: Transformer Architectures

### Overview

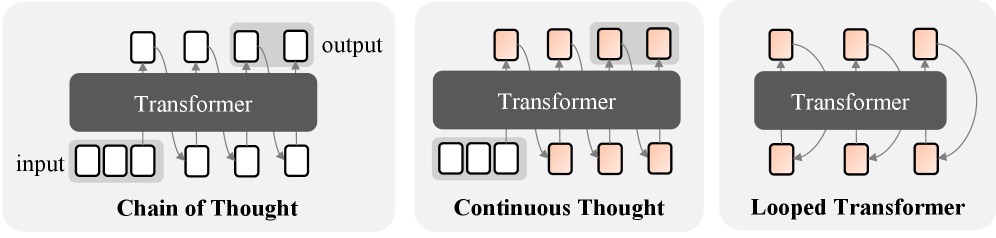

The image presents three distinct diagrams illustrating different transformer architectures: Chain of Thought, Continuous Thought, and Looped Transformer. Each diagram depicts a transformer model interacting with input and output blocks, showcasing the flow of information.

### Components/Axes

* **Transformer:** A central dark gray rectangular block labeled "Transformer" in each diagram.

* **Input:** Labeled "input" in the Chain of Thought diagram, representing the input data.

* **Output:** Labeled "output" in the Chain of Thought diagram, representing the output data.

* **Blocks:** White or light orange/peach squares representing data or processing units.

* **Arrows:** Gray arrows indicating the direction of information flow between the transformer and the blocks.

* **Diagram Titles:**

    * Chain of Thought

    * Continuous Thought

    * Looped Transformer

### Detailed Analysis

**1. Chain of Thought**

* **Input:** Five white blocks labeled as "input" are positioned below the transformer.

* **Output:** Five white blocks labeled as "output" are positioned above the transformer.

* **Flow:** Arrows connect each input block to the transformer and each transformer output to an output block.

**2. Continuous Thought**

* **Input:** Three white blocks are positioned below the transformer.

* **Output:** Five light orange/peach blocks are positioned above the transformer.

* **Flow:** Arrows run from each input block into the transformer and from the transformer to each output block. Additional arrows connect the output blocks back to the input blocks.

**3. Looped Transformer**

* **Input/Output:** Six light orange/peach blocks are positioned both above and below the transformer.

* **Flow:** Arrows run from each block below the transformer into the transformer and from the transformer to each block above it. Additional arrows connect the blocks above the transformer back to the blocks below it, forming a loop.

### Key Observations

* The Chain of Thought architecture has distinct input and output blocks with a one-to-one correspondence.

* The Continuous Thought architecture has separate input and output blocks, but arrows feed the output blocks back to the input blocks.

* The Looped Transformer architecture uses the same blocks for both input and output, creating a cyclical flow of information.

### Interpretation

The diagrams contrast three ways of routing information through a transformer. As drawn, the Chain of Thought architecture is a linear flow: input blocks enter the transformer and output blocks leave it. The Continuous Thought architecture adds feedback from the output to the input, so later processing can build on earlier outputs. The Looped Transformer architecture closes the cycle: the blocks leaving the transformer return as its next input, so the same data is processed and refined iteratively. Together, the panels show the same transformer block used without feedback, with output-to-input feedback, and in a closed loop.
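The three data flows described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the method behind the figure: `toy_transformer` is a hypothetical stand-in for the "Transformer" box (a running mean over the prefix), and the function names, the choice to feed back only the last output in `continuous_thought`, and the `steps` and `loops` parameters are all invented for the example.

```python
from typing import Callable, List

Vector = List[float]
Block = Callable[[List[Vector]], List[Vector]]


def toy_transformer(seq: List[Vector]) -> List[Vector]:
    """Hypothetical stand-in for the 'Transformer' box.

    Each output position is the mean of all input vectors up to and
    including that position (a crude causal mixing step).
    """
    dim = len(seq[0])
    out = []
    for i in range(len(seq)):
        prefix = seq[: i + 1]
        out.append([sum(v[d] for v in prefix) / len(prefix) for d in range(dim)])
    return out


def single_pass(block: Block, inputs: List[Vector]) -> List[Vector]:
    """Linear flow: one output block per input block, no feedback."""
    return block(inputs)


def continuous_thought(block: Block, inputs: List[Vector], steps: int) -> List[Vector]:
    """Output-to-input feedback (assumed form): the last output vector is
    appended to the input sequence before the next call."""
    seq = list(inputs)
    for _ in range(steps):
        seq.append(block(seq)[-1])
    return seq[len(inputs):]  # only the fed-back vectors


def looped(block: Block, inputs: List[Vector], loops: int) -> List[Vector]:
    """Closed loop: the whole output sequence becomes the next input."""
    state = list(inputs)
    for _ in range(loops):
        state = block(state)
    return state


if __name__ == "__main__":
    x = [[1.0], [3.0]]
    print(single_pass(toy_transformer, x))
    print(continuous_thought(toy_transformer, x, steps=2))
    print(looped(toy_transformer, x, loops=2))
```

The only difference between the three functions is what is routed back into `block`: nothing, the newest output, or the entire output sequence. A real model would replace `toy_transformer` with an actual transformer forward pass.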