
## Diagram: Transformer Architectures - Chain of Thought, Continuous Thought, Looped Transformer

### Overview

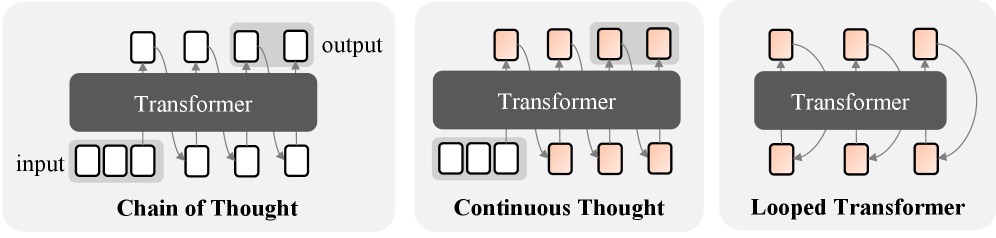

The image presents a comparative diagram illustrating three different transformer architectures: Chain of Thought, Continuous Thought, and Looped Transformer. Each architecture is depicted as a block diagram showing the flow of information from input to output through a "Transformer" component. The diagrams emphasize the different ways the transformer is utilized and connected within each architecture.

### Components

The diagram consists of three distinct sections, each representing a different architecture. Each section contains the following elements:

* **Input:** Represented by a row of rectangular boxes labeled "input" (four in "Chain of Thought" and "Continuous Thought", two in "Looped Transformer").

* **Transformer:** A large, dark gray rectangle labeled "Transformer".

* **Output:** Represented by a row of rectangular boxes labeled "output" (four in "Chain of Thought" and "Continuous Thought", two in "Looped Transformer").

* **Arrows:** Arrows indicate the direction of information flow.

* **Labels:** Each section is labeled with the name of the architecture: "Chain of Thought", "Continuous Thought", and "Looped Transformer".

### Detailed Analysis

**1. Chain of Thought:**

* The input consists of four rectangular boxes.

* The Transformer processes the input and generates an output consisting of four rectangular boxes.

* The flow is linear: input -> Transformer -> output.

**2. Continuous Thought:**

* The input consists of four rectangular boxes.

* The Transformer processes the input and generates an output consisting of four rectangular boxes.

* The flow is linear: input -> Transformer -> output.

* This architecture appears visually identical to "Chain of Thought".

**3. Looped Transformer:**

* The input consists of two rectangular boxes.

* The Transformer processes the input.

* The output of the Transformer is fed back as input to the Transformer, creating a loop.

* The final output consists of two rectangular boxes.

* The flow is cyclical: input -> Transformer -> output -> Transformer -> output.
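The difference between the linear flow and the looped flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `toy_transformer` is a hypothetical stand-in for the "Transformer" block (it is not a real transformer), and `num_loops` is an assumed parameter, since the diagram does not show how many passes are made.

```python
from typing import Callable, List

Vector = List[float]
Block = Callable[[Vector], Vector]


def toy_transformer(states: Vector) -> Vector:
    """Hypothetical stand-in for the "Transformer" block.

    Moves every value halfway toward the mean of the sequence, so the
    effect of repeated passes is easy to see. Not a real transformer.
    """
    mean = sum(states) / len(states)
    return [0.5 * (s + mean) for s in states]


def single_pass(block: Block, inputs: Vector) -> Vector:
    """Linear flow: input -> Transformer -> output."""
    return block(inputs)


def looped(block: Block, inputs: Vector, num_loops: int) -> Vector:
    """Cyclical flow: the block's output is fed back in as its next input.

    num_loops is an assumption; the diagram does not state a loop count.
    """
    states = list(inputs)  # copy so the caller's list is left untouched
    for _ in range(num_loops):
        states = block(states)
    return states


if __name__ == "__main__":
    x = [0.0, 2.0]  # two inputs, matching the two boxes in the looped panel
    print(single_pass(toy_transformer, x))
    print(looped(toy_transformer, x, num_loops=2))
```

The same `block` is reused on every iteration, which is what makes the flow a loop rather than a stack of separate components. With one loop, `looped` reduces to `single_pass`.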

### Key Observations

* The "Chain of Thought" and "Continuous Thought" architectures are visually indistinguishable.

* The "Looped Transformer" architecture introduces a feedback loop, suggesting iterative processing.

* The number of input and output boxes differs: "Chain of Thought" and "Continuous Thought" each show four, while "Looped Transformer" shows two.

### Interpretation

The diagram contrasts different ways of applying a transformer to sequential input. As drawn, "Chain of Thought" and "Continuous Thought" are both a single linear pass through the transformer. Because the two panels look the same, whatever distinguishes them (naming or specific implementation details) is not shown in the diagram.

The "Looped Transformer" adds a recurrent element: the transformer's output is fed back as its input, so the model can refine its output over multiple passes. This suggests a design aimed at tasks where repeated passes through the transformer improve the quality of the output. The smaller number of input/output boxes in the "Looped Transformer" panel may indicate a compression or expansion of information during iteration, though the diagram does not say so.

The diagram is conceptual and contains no quantitative data. It shows architectural differences at a high level, not performance characteristics, and gives no detail about the internals of the Transformer component.