## Diagram: Autoregressive Model Architecture

### Overview

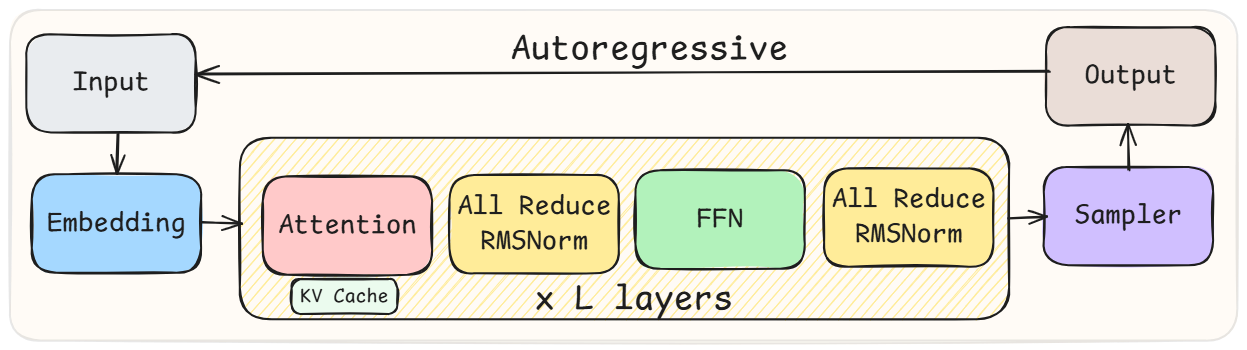

The image is a diagram illustrating the architecture of an autoregressive model. It shows the flow of data through different components, including input, embedding, attention, feed-forward network (FFN), sampler, and output, with a repeating layer structure.

### Components/Axes

* **Input:** A white rounded rectangle at the top-left.

* **Embedding:** A blue rounded rectangle below the Input.

* **Attention:** A pink rounded rectangle within a larger yellow rounded rectangle labeled "x L layers". A smaller light green rounded rectangle below it is labeled "KV Cache".

* **All Reduce RMSNorm:** Two yellow rounded rectangles within the "x L layers" structure, one to the right of Attention and another to the right of FFN.

* **FFN:** A light green rounded rectangle within the "x L layers" structure, between the two "All Reduce RMSNorm" blocks.

* **Sampler:** A purple rounded rectangle to the right of the "x L layers" structure.

* **Output:** A light pink rounded rectangle at the top-right.

* **Autoregressive:** Text above the diagram indicating the autoregressive nature of the model.

* **x L layers:** Text indicating that the layers within the yellow rounded rectangle are repeated L times.

### Detailed Analysis

* **Flow:**

* The Input flows into the Embedding layer.

* The Embedding layer flows into the "x L layers" structure, starting with the Attention block.

* Within the "x L layers" structure, the flow is: Attention -> All Reduce RMSNorm -> FFN -> All Reduce RMSNorm.

* The output of the "x L layers" structure flows into the Sampler.

* The Sampler flows into the Output.

* The Output flows back to the Input, indicating the autoregressive loop.

* **KV Cache:** Located below the Attention block, likely related to caching key-value pairs for attention mechanisms.

### Key Observations

* The diagram highlights the key components of a typical autoregressive model.

* The "x L layers" structure indicates a repeating block of layers, common in deep learning models.

* The autoregressive loop is clearly shown, where the output is fed back as input for the next iteration.

### Interpretation

The diagram illustrates a standard autoregressive model architecture. The input is embedded, processed through multiple layers involving attention and feed-forward networks, and then sampled to produce an output. The autoregressive nature of the model is emphasized by the feedback loop from the output back to the input. The "KV Cache" suggests an optimization technique for attention mechanisms, and the "All Reduce RMSNorm" blocks likely represent normalization layers used for stabilizing training. The repetition of layers ("x L layers") indicates a deep learning architecture capable of learning complex patterns.