\n

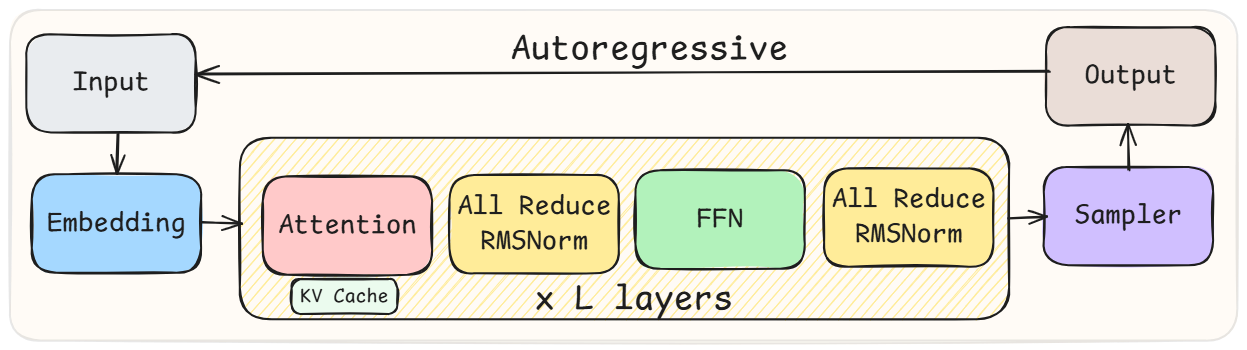

## Diagram: Autoregressive Model Architecture

### Overview

This diagram illustrates the architecture of an autoregressive model. It depicts a sequential flow of processing stages, starting with an "Input" and culminating in an "Output". The core of the model consists of repeated "L" layers, each containing an Attention mechanism, normalization layers, and a Feed Forward Network (FFN). A "Sampler" component is used to generate the output.

### Components/Axes

The diagram consists of the following components:

* **Input:** The initial data fed into the model.

* **Embedding:** Transforms the input into a vector representation.

* **Attention:** Processes the embedded input, potentially using a "KV Cache".

* **All Reduce RMSNorm:** Normalization layer applied after Attention.

* **FFN (Feed Forward Network):** A fully connected neural network.

* **All Reduce RMSNorm:** Normalization layer applied after FFN.

* **Sampler:** Generates the output based on the processed data.

* **Output:** The final result of the model.

* **Autoregressive:** Label indicating the overall model type.

* **x L layers:** Indicates that the Attention, All Reduce RMSNorm, and FFN blocks are repeated "L" times.

### Detailed Analysis or Content Details

The diagram shows a clear sequential flow:

1. **Input** is passed to **Embedding**.

2. **Embedding** output is fed into the first **Attention** layer.

3. The **Attention** layer's output is processed by **All Reduce RMSNorm**.

4. The normalized output goes to **FFN**.

5. **FFN** output is processed by **All Reduce RMSNorm**.

6. This sequence (Attention, All Reduce RMSNorm, FFN, All Reduce RMSNorm) is repeated "L" times.

7. The output of the final layer is passed to **Sampler**.

8. **Sampler** generates the **Output**.

9. The **Output** is fed back into the **Input** in a loop, indicated by the arrow labeled "Autoregressive".

The "KV Cache" is a component within the "Attention" block, suggesting it stores key-value pairs for efficient attention calculations.

### Key Observations

The autoregressive nature of the model is highlighted by the feedback loop from the "Output" to the "Input". The repeated "L" layers suggest a deep neural network architecture. The use of "All Reduce RMSNorm" indicates a specific normalization technique.

### Interpretation

This diagram represents a common architecture for autoregressive models, frequently used in natural language processing (NLP) tasks like language modeling and text generation. The autoregressive loop is crucial for generating sequences, as the model uses its previous predictions as input for the next prediction. The "Attention" mechanism allows the model to focus on relevant parts of the input sequence. The repeated layers ("L" layers) enable the model to learn complex patterns and relationships in the data. The "KV Cache" likely optimizes the attention calculations by storing previously computed key-value pairs, reducing computational cost. The "All Reduce RMSNorm" layers help stabilize training and improve performance. The diagram provides a high-level overview of the model's structure, without specifying the details of the individual components (e.g., the size of the embedding, the number of layers "L", or the specific architecture of the FFN).