## Diagram: Autoregressive Model Architecture

### Overview

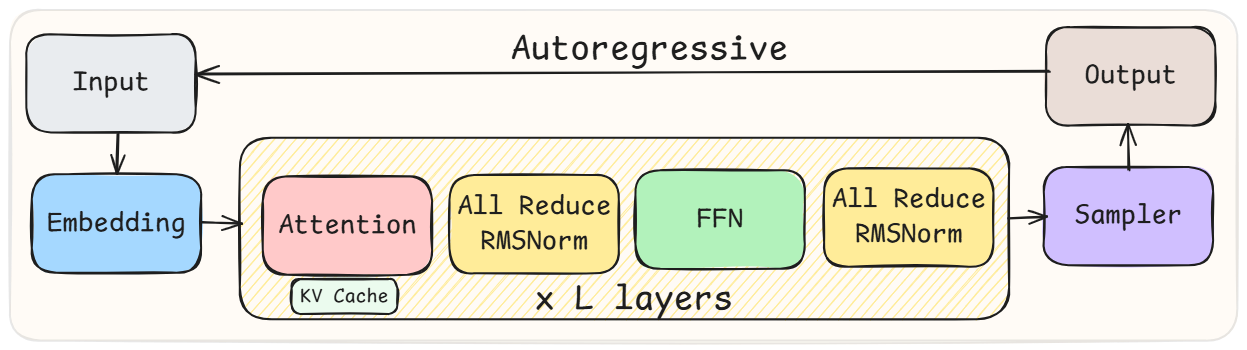

The diagram illustrates the architecture of an autoregressive model, depicting the flow of data from input to output through a series of processing components. The structure includes embedding, attention mechanisms, normalization layers, feed-forward networks (FFN), and a sampler, repeated across multiple layers.

### Components/Axes

- **Input**: Starting point for the model.

- **Embedding**: Converts input into vector representations.

- **Attention**: Processes relationships between input elements (marked with "KV Cache" for key-value storage).

- **All Reduce RMSNorm**: Normalization layer applied across distributed computations.

- **FFN**: Feed-forward neural network for non-linear transformations.

- **Sampler**: Generates output tokens autoregressively.

- **x L layers**: Indicates repetition of the core block (L times).

### Detailed Analysis

1. **Input → Embedding**: Raw input is transformed into embeddings for further processing.

2. **Attention + KV Cache**: Attention mechanism computes contextual relationships, with KV Cache storing intermediate key-value pairs for efficiency.

3. **All Reduce RMSNorm**: Normalizes activations across distributed nodes (appears twice in the flow).

4. **FFN**: Applies non-linear transformations to embeddings.

5. **Sampler**: Produces output tokens sequentially, characteristic of autoregressive models.

### Key Observations

- The architecture repeats the core block (Attention → All Reduce RMSNorm → FFN → All Reduce RMSNorm) **L times**, typical of transformer-based models.

- The presence of **KV Cache** suggests optimization for autoregressive generation (e.g., avoiding redundant computations in attention).

- **All Reduce RMSNorm** implies distributed training or inference, ensuring stable gradients.

### Interpretation

This diagram represents a transformer-like autoregressive model, likely for tasks like language modeling or text generation. The autoregressive flow (Input → Output via Sampler) indicates sequential token generation. The KV Cache optimizes attention by reusing prior computations, critical for efficiency in long sequences. The repeated layers (L) enable hierarchical feature learning, while RMSNorm stabilizes training in distributed settings. The model’s design balances computational efficiency (via KV Cache) and scalability (via All Reduce operations), making it suitable for large-scale autoregressive tasks.