## [Bar Chart Comparison]: Training Time for Two Models Under Two Training Regimes

### Overview

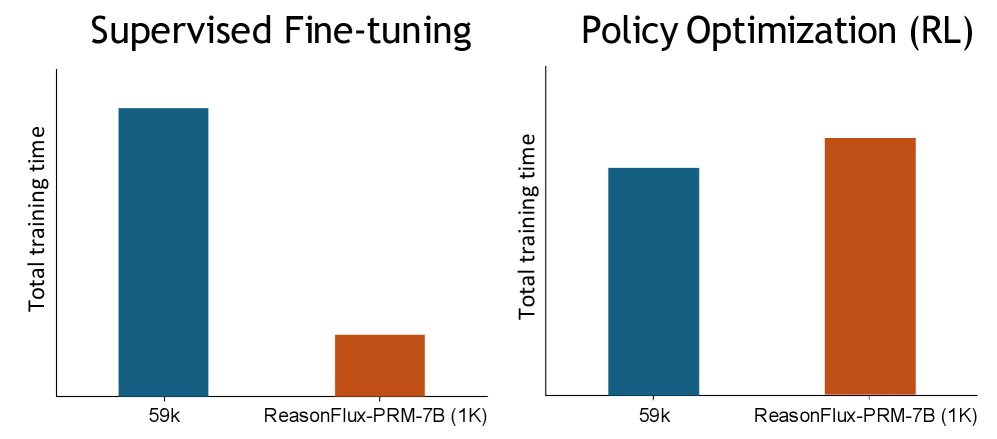

The image shows two side-by-side vertical bar charts comparing "Total training time" for two configurations, labeled "59k" and "ReasonFlux-PRM-7B (1K)", under two training methods: "Supervised Fine-tuning" (left chart) and "Policy Optimization (RL)" (right chart). The ordering of the two bars reverses between the charts: the taller bar under one method is the shorter bar under the other.

### Components/Axes

* **Chart Titles:**

    * Left Chart: "Supervised Fine-tuning"

    * Right Chart: "Policy Optimization (RL)"

* **Y-Axis (Both Charts):** Labeled "Total training time". The axis is drawn as a vertical line with no numerical scale or tick marks, so bar heights can only be compared relative to one another.

* **X-Axis (Both Charts):** Contains two categorical labels:

    1. "59k"

    2. "ReasonFlux-PRM-7B (1K)"

* **Data Series (Color Coding):**

    * **Blue Bar:** Corresponds to the "59k" category on the x-axis.

    * **Orange Bar:** Corresponds to the "ReasonFlux-PRM-7B (1K)" category on the x-axis.

* **Spatial Layout:** The two charts are positioned horizontally adjacent. The "Supervised Fine-tuning" chart is on the left, and the "Policy Optimization (RL)" chart is on the right. The y-axis label is centered vertically to the left of both charts.

### Detailed Analysis

**Chart 1: Supervised Fine-tuning (Left)**

* **Trend Verification:** The blue bar ("59k") is substantially taller than the orange bar ("ReasonFlux-PRM-7B (1K)").

* **Data Points (Approximate Relative Values):**

    * **59k (Blue):** High training time. This bar is taken as the reference and assigned a relative value of **100 units**.

    * **ReasonFlux-PRM-7B (1K) (Orange):** Very low training time. Visually, it is roughly **15-20%** of the height of the blue bar, or approximately **15-20 units**.

**Chart 2: Policy Optimization (RL) (Right)**

* **Trend Verification:** The orange bar ("ReasonFlux-PRM-7B (1K)") is taller than the blue bar ("59k").

* **Data Points (Approximate Relative Values):** These values use the same scale as Chart 1, where the left chart's "59k" bar is 100 units, and assume both charts share a y-scale.

    * **59k (Blue):** Moderate training time. It is shorter than its counterpart in the left chart. Approximate relative value: **70 units**.

    * **ReasonFlux-PRM-7B (1K) (Orange):** High training time. It is taller than the blue bar in this chart and roughly **85-90%** of the height of the "59k" bar in the left chart. Approximate relative value: **85-90 units**.

### Key Observations

1. **Reversal of Efficiency:** The most striking observation is the complete inversion of training time between the two methods. The "59k" model is much slower to train under Supervised Fine-tuning but becomes the faster model under Policy Optimization. Conversely, "ReasonFlux-PRM-7B (1K)" is extremely fast to train with Supervised Fine-tuning but becomes the slower model with Policy Optimization.

2. **Magnitude of Difference:** The disparity in training time is far more pronounced in the Supervised Fine-tuning regime (100 vs. 15-20 units, a factor of ~5-7x) than in the Policy Optimization regime (85-90 vs. 70 units, a factor of ~1.2-1.3x).

3. **Comparability Within Each Regime:** Because the y-axis has no numerical scale, only relative comparisons are possible. Under Policy Optimization the two bars are of comparable height, whereas under Supervised Fine-tuning one bar is several times taller than the other.
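The factors quoted in the observations above follow directly from the estimated bar heights. The following is a minimal sketch of that arithmetic, assuming the approximate relative values from the Detailed Analysis section. These are visual estimates in arbitrary units, not numbers printed in the figure, and the names `heights`, `ratio_range`, and `summarize` are illustrative only.

```python
# Approximate relative bar heights read off the figure. The chart has no
# numeric axis, so each entry is a (low, high) visual estimate in arbitrary
# units, with the left chart's "59k" bar fixed at 100 as the reference.
heights = {
    "Supervised Fine-tuning": {
        "59k": (100, 100),
        "ReasonFlux-PRM-7B (1K)": (15, 20),
    },
    "Policy Optimization (RL)": {
        "59k": (70, 70),
        "ReasonFlux-PRM-7B (1K)": (85, 90),
    },
}


def ratio_range(slower, faster):
    """Return the (min, max) of slower/faster for two (low, high) estimates."""
    return slower[0] / faster[1], slower[1] / faster[0]


def summarize(heights):
    """Map each regime to (slower configuration, (min ratio, max ratio))."""
    summary = {}
    for regime, bars in heights.items():
        # Rank by the sum of the estimate bounds; the larger one is slower.
        slow, fast = sorted(bars, key=lambda name: sum(bars[name]), reverse=True)
        summary[regime] = (slow, ratio_range(bars[slow], bars[fast]))
    return summary


summary = summarize(heights)
for regime, (slow, (lo, hi)) in summary.items():
    print(f"{regime}: {slow} is slower by {lo:.2f}x to {hi:.2f}x")
```

With these estimates, the supervised fine-tuning gap comes out at 5.00x to 6.67x with "59k" slower, and the policy optimization gap at 1.21x to 1.29x with "ReasonFlux-PRM-7B (1K)" slower. Keeping the estimates as ranges rather than single numbers makes the uncertainty of reading heights off an unscaled axis explicit.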

### Interpretation

This data suggests a fundamental trade-off or difference in the computational cost of training these two model types depending on the training paradigm.

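* **"59k" Model:** The figure does not say what "59k" denotes. It may be a larger or more complex base model or, by analogy with the "(1K)" label, a configuration trained on a larger dataset of roughly 59k examples. Either reading is consistent with Supervised Fine-tuning being computationally expensive (high time). The subsequent Policy Optimization (RL) phase is relatively cheaper for this configuration, possibly because the fine-tuning already established a strong policy foundation.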

* **"ReasonFlux-PRM-7B (1K)" Model:** The "(1K)" likely denotes a smaller dataset or a more specialized, efficient architecture. It is very quick to fine-tune in a supervised manner, suggesting the task is straightforward for it or the model is highly optimized for this step. However, the Policy Optimization phase is disproportionately time-consuming for this model. This could indicate that the RL process is more challenging, requires more samples to converge, or that the model's initial policy after fine-tuning is less optimal for efficient RL improvement.

**In essence, the charts illustrate that the "fastest" model to train is not a fixed property but is critically dependent on the training stage.** Choosing the right model for a task may involve considering not just final performance but also the computational budget for each phase of the training pipeline. The "ReasonFlux-PRM-7B (1K)" offers a very low-cost entry point via supervised fine-tuning, while the "59k" model might be preferable if the primary cost concern is the RL optimization phase.