## Diagram: Reasoning Strategies with LLMs

### Overview

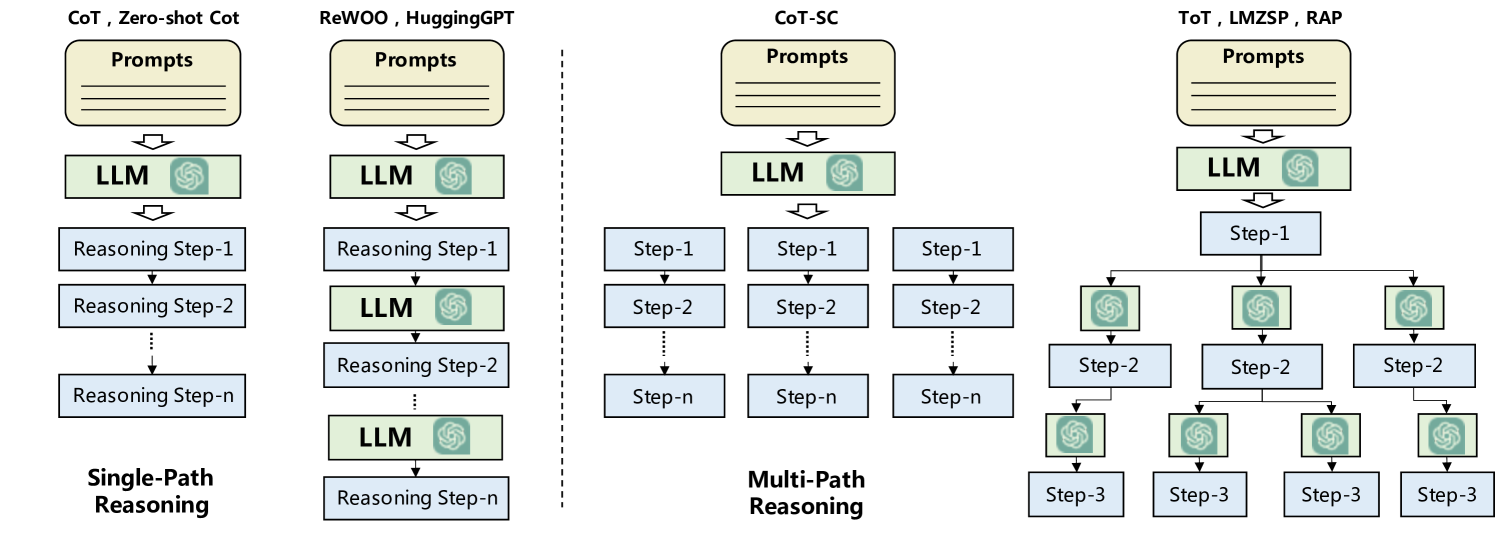

The image presents a comparative diagram illustrating different reasoning strategies employed with Large Language Models (LLMs). It contrasts single-path reasoning approaches (CoT, Zero-shot CoT, ReWOO, HuggingGPT) with multi-path reasoning approaches (CoT-SC, ToT, LMZSP, RAP). The diagram highlights the flow of information and the involvement of LLMs at various stages of the reasoning process.

### Components/Axes

The diagram is divided into four sections, each representing a different reasoning strategy or group of strategies.

* **Section 1 (Left):** CoT, Zero-shot CoT.

* **Section 2 (Left-Middle):** ReWOO, HuggingGPT.

* **Section 3 (Right-Middle):** CoT-SC.

* **Section 4 (Right):** ToT, LMZSP, RAP.

Each section contains the following components:

* **Prompts:** A rounded rectangle at the top, labeled "Prompts," representing the initial input to the system.

* **LLM:** A rounded rectangle labeled "LLM," representing the Large Language Model. It contains a stylized icon resembling the OpenAI logo.

* **Reasoning Steps/Steps:** Rectangles representing intermediate reasoning steps, labeled "Reasoning Step-1," "Reasoning Step-2," ..., "Reasoning Step-n" in Sections 1 and 2, "Step-1," "Step-2," ..., "Step-n" in Section 3, and "Step-1," "Step-2," "Step-3" in Section 4.

* **Arrows:** Downward-pointing arrows indicating the flow of information between components.

* **Dotted Lines:** Indicate that steps between those drawn are omitted.

* **Labels:** "Single-Path Reasoning" and "Multi-Path Reasoning" at the bottom of the respective sections.

### Detailed Analysis

**Section 1: CoT, Zero-shot CoT (Single-Path Reasoning)**

* **Flow:** Prompts -> LLM -> Reasoning Step-1 -> Reasoning Step-2 -> ... -> Reasoning Step-n.

* **Description:** The initial prompt is fed into the LLM, which generates a series of reasoning steps in a linear sequence.
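The single-call flow in Section 1 can be sketched as follows. This is a minimal illustration, not the method of any particular paper's codebase: `fake_llm` is a hypothetical stub that returns canned text in place of a real model call, and `chain_of_thought` is a name chosen here for illustration. The trigger phrase "Let's think step by step" is the one commonly associated with Zero-shot CoT.

```python
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; returns a canned completion."""
    return (
        "Step 1: 3 apples + 2 apples = 5 apples\n"
        "Step 2: 5 apples - 1 eaten = 4 apples\n"
        "Answer: 4"
    )


def chain_of_thought(question: str, llm: Callable[[str], str]) -> List[str]:
    # One prompt, one LLM call: every reasoning step comes back in a single completion.
    prompt = f"{question}\nLet's think step by step."
    completion = llm(prompt)
    # Split the completion into its individual steps, dropping blank lines.
    return [line.strip() for line in completion.splitlines() if line.strip()]


steps = chain_of_thought("I have 3 apples, buy 2, and eat 1. How many remain?", fake_llm)
```

The key property is that `llm` is invoked exactly once, so the steps form a single linear sequence with no intermediate model calls.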

**Section 2: ReWOO, HuggingGPT (Single-Path Reasoning)**

* **Flow:** Prompts -> LLM -> Reasoning Step-1 -> LLM -> Reasoning Step-2 -> ... -> LLM -> Reasoning Step-n.

* **Description:** The initial prompt is fed into the LLM, which generates a reasoning step. The output of each reasoning step is then fed back into the LLM to generate the next reasoning step.
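The interleaved flow in Section 2 amounts to a loop in which the model is called once per step. The sketch below shows only that control flow, under stated assumptions: `fake_llm` is a hypothetical stub, `interleaved_reasoning` is an illustrative name, and neither reflects the actual ReWOO or HuggingGPT implementations.

```python
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stub: numbers the next step by counting steps already in the prompt."""
    done = prompt.count("Step-")
    return f"Step-{done + 1}: partial result"


def interleaved_reasoning(task: str, llm: Callable[[str], str], n_steps: int) -> List[str]:
    steps: List[str] = []
    for _ in range(n_steps):
        # Each call sees the task plus every step produced so far.
        context = "\n".join([task] + steps)
        steps.append(llm(context))
    return steps


trace = interleaved_reasoning("Plan a data pipeline", fake_llm, n_steps=3)
```

Unlike the single-call flow, the number of model invocations here grows with the number of steps, and the reasoning still follows one path.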

**Section 3: CoT-SC (Multi-Path Reasoning)**

* **Flow:** Prompts -> LLM -> Step-1 (3 parallel instances) -> Step-2 (3 parallel instances) -> ... -> Step-n (3 parallel instances).

* **Description:** The initial prompt is fed into the LLM, which generates three independent reasoning chains in parallel. Each chain is itself a linear sequence from Step-1 to Step-n.
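A minimal sketch of the parallel-chain idea follows. The diagram does not show how the chains are combined; the majority vote over final answers used here is how self-consistency is usually described, and is an assumption relative to the figure. `fake_sampler` is a hypothetical stub that cycles through canned completions to imitate sampling.

```python
from collections import Counter
from itertools import cycle
from typing import Callable

# Canned completions standing in for three sampled reasoning chains (hypothetical data).
_canned = cycle(["... Answer: 4", "... Answer: 5", "... Answer: 4"])


def fake_sampler(prompt: str) -> str:
    """Hypothetical stub for a sampled (temperature > 0) model call."""
    return next(_canned)


def self_consistency(prompt: str, sample: Callable[[str], str], n_paths: int = 3) -> str:
    answers = []
    for _ in range(n_paths):
        chain = sample(prompt)  # one independent reasoning path
        # Keep only the text after the last "Answer:" marker.
        answers.append(chain.rsplit("Answer:", 1)[-1].strip())
    # Majority vote across the final answers of all paths.
    return Counter(answers).most_common(1)[0][0]


result = self_consistency("How many apples remain?", fake_sampler)
```

The paths never interact while they are being generated; they are compared only at the end.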

**Section 4: ToT, LMZSP, RAP (Multi-Path Reasoning)**

* **Flow:** Prompts -> LLM -> Step-1 -> LLM (3 parallel instances) -> Step-2 (3 parallel instances) -> LLM (4 parallel instances) -> Step-3 (4 parallel instances).

* **Description:** The initial prompt is fed into the LLM, which produces Step-1. The LLM is then called again at each level to expand the current steps into candidate next steps, so the reasoning branches into a tree (one step, then three, then four in the diagram).
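The branching flow can be illustrated with a small search over partial reasoning paths. The sketch below uses a breadth-first expansion with pruning (a beam search), swapped in for simplicity; the methods named in the diagram each define their own search procedure. `propose` and `score` are hypothetical stubs for what would be LLM calls, or an LLM plus a heuristic, in practice.

```python
from typing import Callable, List, Tuple

Path = Tuple[str, ...]


def propose(path: Path) -> List[str]:
    """Hypothetical stub for an LLM call that suggests candidate next steps."""
    depth = len(path) + 1
    return [f"s{depth}{tag}" for tag in "abc"]


def score(path: Path) -> int:
    """Hypothetical stub for an evaluator: counts steps whose name ends in 'a'."""
    return sum(step.endswith("a") for step in path)


def tree_search(
    propose: Callable[[Path], List[str]],
    score: Callable[[Path], int],
    depth: int,
    beam: int,
) -> Path:
    frontier: List[Path] = [()]  # start from the empty path (the prompt alone)
    for _ in range(depth):
        # Expand every surviving path with each proposed next step.
        candidates = [path + (step,) for path in frontier for step in propose(path)]
        # Keep only the `beam` highest-scoring partial paths.
        frontier = sorted(candidates, key=score, reverse=True)[:beam]
    return frontier[0]


best = tree_search(propose, score, depth=3, beam=2)
```

The difference from independent parallel chains is that paths share prefixes and are evaluated while still incomplete, so weak branches can be dropped before they reach a final answer.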

### Key Observations

* The diagram clearly distinguishes between single-path and multi-path reasoning strategies.

* Single-path reasoning involves a linear sequence of reasoning steps, while multi-path reasoning involves parallel or branching steps.

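* The point at which the LLM is called depends on the strategy: in Sections 1 and 3 it appears once, before the first step, while in Sections 2 and 4 it appears again between steps.

* The number of parallel instances in multi-path reasoning varies: CoT-SC shows three parallel paths throughout, while Section 4 grows from one step to three and then four.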

### Interpretation

The diagram arranges reasoning strategies used with LLMs by increasing structural complexity. Single-path reasoning, as exemplified by CoT and Zero-shot CoT, is the basic approach: the LLM generates one linear chain of thought. ReWOO and HuggingGPT remain single-path but call the LLM again between steps, so each step can build on the output of the previous one. Multi-path reasoning, as seen in CoT-SC, ToT, LMZSP, and RAP, explores several reasoning paths, which can make the final answer more robust and accurate at the cost of more LLM calls. Which strategy fits depends on the complexity of the task and on how much additional computation the expected gain in accuracy is worth.