## Diagram: LLM Reasoning Strategies Comparison

### Overview

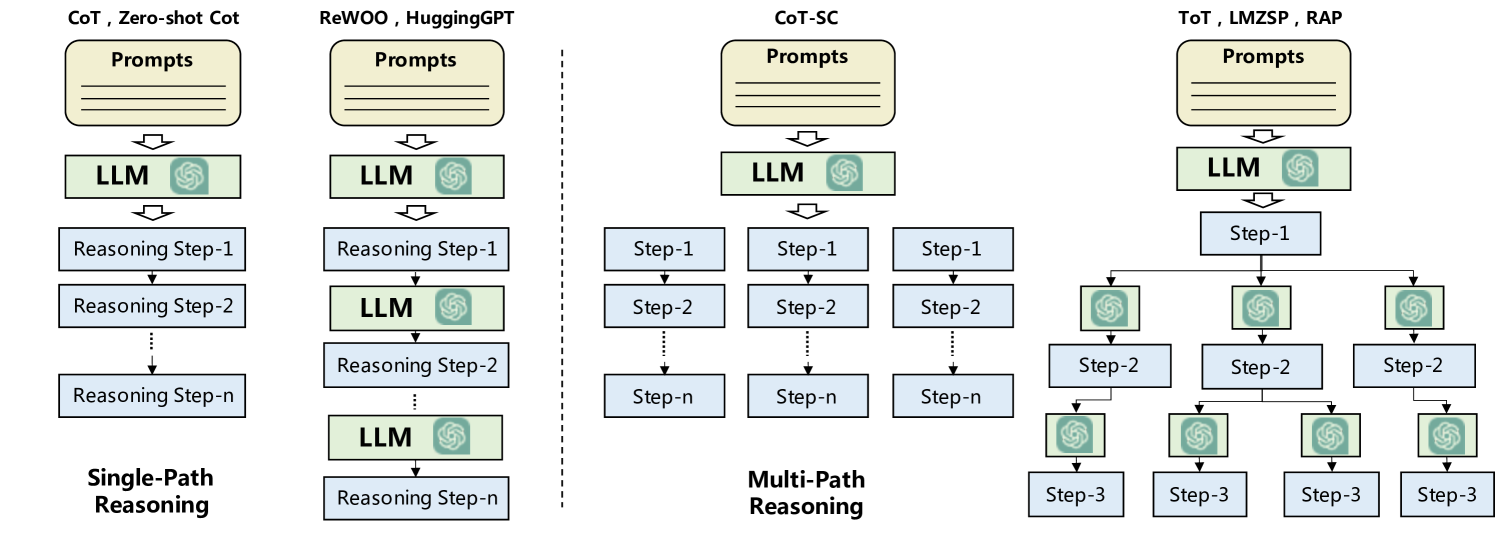

This technical diagram illustrates four distinct architectural patterns for Large Language Model (LLM) reasoning, grouped into two categories: **Single-Path Reasoning** and **Multi-Path Reasoning**. It uses a flow-based visualization to show how prompts pass through an LLM to produce reasoning steps, ranging from simple linear sequences to tree-like search structures.

### Components/Axes

The diagram is organized into a grid-like structure with a header, a main body containing four flowcharts, and a footer. A vertical dashed line separates the two primary categories.

* **Color Coding:**

  * **Light Yellow Boxes (Top):** Represent "Prompts" (input).

  * **Light Green Boxes with Logo:** Represent the "LLM" (processing unit). The logo inside is the OpenAI/ChatGPT logo.

  * **Light Blue Boxes:** Represent "Reasoning Steps" or "Steps" (intermediate outputs/thoughts).

* **Spatial Layout:**

  * **Header (Top):** Contains the names of specific AI techniques/frameworks associated with each diagram.

  * **Main Body (Center):** Four distinct flowcharts showing the logic flow from top to bottom.

  * **Footer (Bottom):** Categorizes the diagrams into "Single-Path Reasoning" (left) and "Multi-Path Reasoning" (right).

  * **Divider:** A vertical dashed line separates the first two diagrams from the last two.

---

### Content Details

#### 1. Single-Path Reasoning (Left Side)

**A. Linear Chain (Far Left)**

* **Associated Techniques:** CoT (Chain of Thought), Zero-shot CoT.

* **Flow Description:** A strictly vertical, linear progression.

* **Sequence:**

1. **Prompts** (Top)

2. $\downarrow$ **LLM**

3. $\downarrow$ **Reasoning Step-1**

4. $\downarrow$ **Reasoning Step-2**

5. $\vdots$ (Vertical dotted line indicating continuation)

6. $\downarrow$ **Reasoning Step-n** (Bottom)
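The single-call pattern can be sketched in a few lines of Python. This is a minimal illustration, not code from the CoT or Zero-shot CoT papers: `stub_llm` is a hypothetical deterministic stand-in for a real model API, and splitting the reply on newlines assumes the model writes one step per line. The trigger phrase "Let's think step by step." is the one popularized by Zero-shot CoT.

```python
from typing import Callable, List

# Any function str -> str can play the role of the model here.
LLM = Callable[[str], str]


def stub_llm(prompt: str) -> str:
    """Hypothetical fake model: returns a whole three-step chain in one reply."""
    return "Step-1: 3 + 4 = 7\nStep-2: 7 * 2 = 14\nStep-3: answer is 14"


def linear_cot(llm: LLM, question: str) -> List[str]:
    """Single-path CoT: one LLM call produces the entire chain Step-1 ... Step-n."""
    # Zero-shot CoT style: append the step-by-step trigger to the question.
    prompt = f"{question}\nLet's think step by step."
    reply = llm(prompt)  # the only model call in this pattern
    # Assumption: one reasoning step per non-empty line of the reply.
    return [line.strip() for line in reply.splitlines() if line.strip()]


steps = linear_cot(stub_llm, "What is (3 + 4) * 2?")
print(len(steps), steps[-1])  # prints: 3 Step-3: answer is 14
```

The key property is that the model is invoked exactly once; every step after Step-1 is produced inside that same generation.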

**B. Iterative/Interleaved Chain (Middle Left)**

* **Associated Techniques:** ReWOO, HuggingGPT.

* **Flow Description:** A vertical progression where the LLM is called repeatedly between reasoning steps.

* **Sequence:**

1. **Prompts** (Top)

2. $\downarrow$ **LLM**

3. $\downarrow$ **Reasoning Step-1**

4. $\downarrow$ **LLM**

5. $\downarrow$ **Reasoning Step-2**

6. $\vdots$ (Vertical dotted line)

7. $\downarrow$ **LLM**

8. $\downarrow$ **Reasoning Step-n** (Bottom)
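The interleaved pattern, as drawn, differs from the linear chain only in where the model call sits: inside the loop rather than before it. The sketch below is a hypothetical illustration of that control flow, not the ReWOO or HuggingGPT implementation (tool execution and planning formats are omitted); `CountingStub` is an invented fake model that records how often it is called.

```python
from typing import Callable, List

LLM = Callable[[str], str]


class CountingStub:
    """Hypothetical fake model that counts its calls and emits one step per call."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, prompt: str) -> str:
        self.calls += 1
        return f"Step-{self.calls}"


def interleaved_chain(llm: LLM, question: str, n_steps: int) -> List[str]:
    """Each step comes from a fresh LLM call that sees all earlier steps."""
    steps: List[str] = []
    for _ in range(n_steps):
        context = "\n".join([question, *steps])  # prompt grows with prior steps
        steps.append(llm(context))  # LLM -> Step-k, as in the diagram
    return steps


llm = CountingStub()
steps = interleaved_chain(llm, "Plan a trip", n_steps=3)
print(steps, llm.calls)  # prints: ['Step-1', 'Step-2', 'Step-3'] 3
```

Because the model is called once per step, an agent built on this loop can inspect or act on each intermediate result before requesting the next one.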

#### 2. Multi-Path Reasoning (Right Side)

**C. Parallel Paths (Middle Right)**

* **Associated Technique:** CoT-SC (Chain-of-Thought with Self-Consistency).

* **Flow Description:** As drawn, a single LLM box feeds multiple independent, parallel reasoning chains.

* **Sequence:**

1. **Prompts** (Top)

2. $\downarrow$ **LLM**

3. $\downarrow$ (Three-way branch)

4. **Three Parallel Columns:** Each column follows a linear path: **Step-1** $\rightarrow$ **Step-2** $\rightarrow$ $\vdots$ $\rightarrow$ **Step-n**.
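CoT-SC generates several chains for the same prompt and then aggregates them, typically by a majority vote over their final answers. The sketch below assumes a hypothetical `stub_sampler(prompt, path_index)` in place of repeated temperature-sampled model calls, and an invented `answer=<value>` format for the last line of each chain; both are illustrative only.

```python
from collections import Counter
from typing import Callable, List

# Hypothetical sampler signature: (prompt, path_index) -> one full reasoning chain.
Sampler = Callable[[str, int], List[str]]


def stub_sampler(prompt: str, path_index: int) -> List[str]:
    """Fake model: paths 0 and 2 reach 14; path 1 slips and reaches 15."""
    final = "15" if path_index == 1 else "14"
    return ["Step-1: 3 + 4 = 7", f"Step-2: 7 * 2 = {final}", f"answer={final}"]


def parallel_paths(sample: Sampler, prompt: str, k: int) -> List[List[str]]:
    """Generate k independent chains from the same prompt (the parallel columns)."""
    return [sample(prompt, i) for i in range(k)]


def majority_answer(paths: List[List[str]]) -> str:
    """Self-consistency aggregation: vote over each chain's final answer only."""
    finals = [path[-1].split("=", 1)[1] for path in paths]
    return Counter(finals).most_common(1)[0][0]


paths = parallel_paths(stub_sampler, "What is (3 + 4) * 2?", k=3)
print(majority_answer(paths))  # prints: 14
```

The vote ignores the intermediate steps, so one faulty chain (path 1 here) is outvoted as long as most chains agree.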

**D. Tree-Structured Search (Far Right)**

* **Associated Techniques:** ToT (Tree of Thoughts), LMZSP, RAP.

* **Flow Description:** A complex branching structure where each step involves further LLM calls and potential branching, resembling a search tree.

* **Sequence:**

1. **Prompts** (Top)

2. $\downarrow$ **LLM**

3. $\downarrow$ **Step-1**

4. $\downarrow$ (Three-way branch)

5. **Level 2:** Three separate **LLM** boxes, each leading to a **Step-2** box.

6. $\downarrow$ (Further branching from Step-2 boxes)

7. **Level 3:** Four separate **LLM** boxes (as depicted), each leading to a **Step-3** box.
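The level-by-level expansion above resembles a breadth-first search in which each node gets its own LLM call. The following is a hypothetical toy, not the ToT, LMZSP, or RAP code: `propose` and `evaluate` stand in for LLM calls that generate and score thoughts, the "task" of reaching the number 12 is invented, and the branching factor (3) and beam width (2) are illustrative rather than read off the figure. Pruning to a beam is one reason a tree like the one drawn need not expand every node.

```python
from typing import List, Tuple

TARGET = 12  # hypothetical toy goal: reach this number
Path = Tuple[int, ...]  # one root-to-node path in the tree


def propose(path: Path) -> List[Path]:
    """Stand-in for the per-node LLM call: expand a node into three children."""
    x = path[-1]
    return [path + (x + 1,), path + (x * 2,), path + (x * 3,)]


def evaluate(path: Path) -> int:
    """Stand-in for an LLM value judgment: higher means closer to TARGET."""
    return -abs(TARGET - path[-1])


def tree_search_bfs(root: int, depth: int, beam: int) -> List[Path]:
    """Expand the frontier one level at a time, keeping the `beam` best nodes."""
    frontier: List[Path] = [(root,)]
    for _ in range(depth):
        # One expansion per frontier node, mirroring one LLM box per node.
        children = [child for node in frontier for child in propose(node)]
        children.sort(key=evaluate, reverse=True)  # best-scoring first
        frontier = children[:beam]
    return frontier


best = tree_search_bfs(root=1, depth=3, beam=2)
print(best[0])  # prints: (1, 3, 6, 12)
```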

---

### Key Observations

* **Complexity Gradient:** The diagrams progress in complexity from left to right, starting with a simple linear chain and ending with a multi-level tree structure.

* **LLM Utilization:** In CoT, the LLM is called once to generate the whole sequence. In ReWOO/HuggingGPT, it is called again before each reasoning step. In CoT-SC, one LLM box feeds several parallel chains. In ToT/RAP, an LLM call sits at every node of the branching tree.

* **Divergence vs. Convergence:** The Multi-Path diagrams diverge, exploring several reasoning possibilities at once, whereas the Single-Path diagrams follow one line of reasoning from prompt to final step.

---

### Interpretation

This diagram can be read as tracing a progression from "Prompt Engineering" toward "Agentic Workflows."

* **Single-Path Reasoning** represents the foundational approach, in which a model is asked to "think step-by-step." The interleaved version (ReWOO, HuggingGPT) reflects a more agent-like design in which the LLM acts as a controller, processing intermediate results before producing the next step.

* **Multi-Path Reasoning** represents a shift toward search and optimization. **CoT-SC** aggregates the parallel outputs, typically by a majority vote over their final answers, to improve accuracy. **ToT (Tree of Thoughts)** and **RAP** represent the most advanced stage, treating the reasoning process as a state-space search. This lets the system explore different reasoning branches, look ahead, and backtrack when needed, much like a chess engine or a classical AI search algorithm, but with an LLM generating and evaluating the states.
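The look-ahead-and-backtrack behavior can be sketched as a depth-first search with pruning, one of the search strategies described for ToT (RAP instead uses Monte Carlo Tree Search, which is not shown). Everything here is a hypothetical toy: `propose` and `is_promising` stand in for LLM calls that generate and evaluate thoughts, and the task of reaching 12 from 1 using the moves `+1`, `*2`, `*3` is invented for illustration.

```python
from typing import List, Optional, Tuple

TARGET = 12  # hypothetical toy goal
Path = Tuple[int, ...]


def propose(path: Path) -> List[int]:
    """Stand-in for an LLM 'propose next thoughts' call."""
    x = path[-1]
    return [x + 1, x * 2, x * 3]


def is_promising(value: int) -> bool:
    """Stand-in for an LLM 'evaluate this state' call.

    All moves only increase the value, so anything above TARGET is a dead end.
    """
    return value <= TARGET


def dfs(path: Path, max_depth: int) -> Optional[Path]:
    """Depth-first tree search with pruning and backtracking."""
    if path[-1] == TARGET:
        return path  # goal reached
    if len(path) - 1 == max_depth:
        return None  # depth budget exhausted: backtrack
    for value in propose(path):
        if not is_promising(value):
            continue  # prune this branch without expanding it
        found = dfs(path + (value,), max_depth)
        if found is not None:
            return found
    return None  # every child failed: backtrack to the parent


print(dfs((1,), max_depth=3))  # prints: (1, 2, 4, 12)
```

DFS returns the first goal path it reaches in child order; the dead ends under `(1, 2, 3)` are explored and abandoned before the search backtracks to try `(1, 2, 4)`.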

Essentially, the diagram suggests that as tasks become more complex, the architecture moves from simple generation toward structured, multi-step search and verification.