
## Diagram: Reasoning Pathways in Large Language Models

### Overview

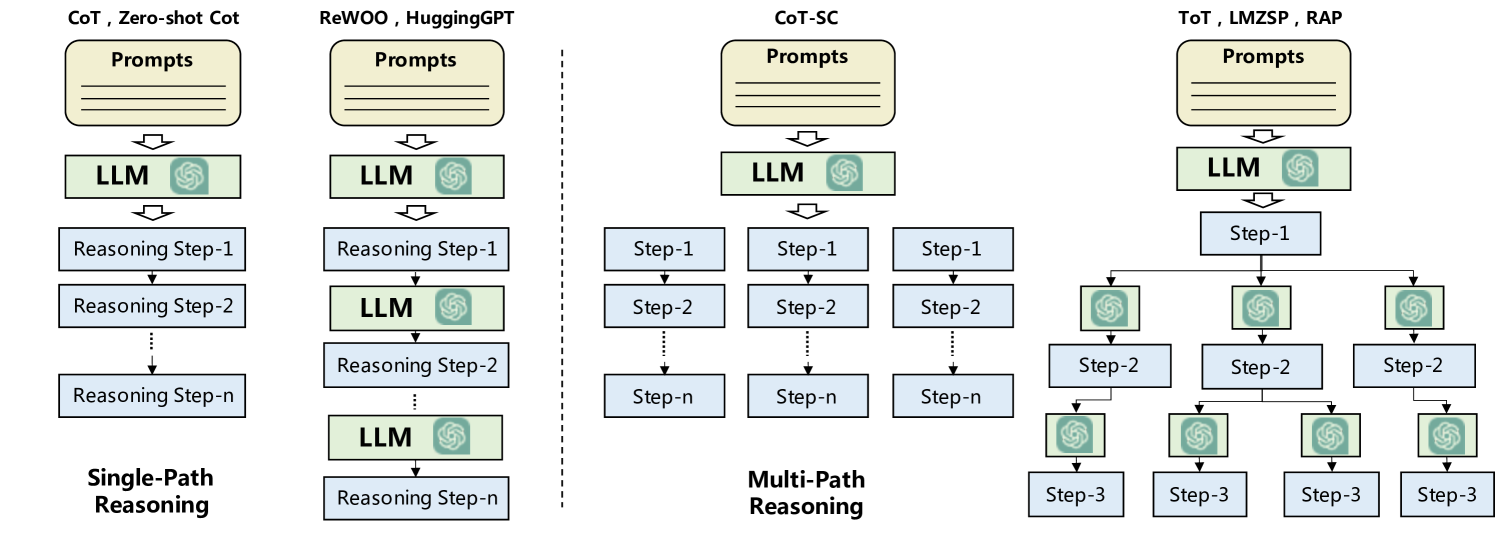

The image is a diagram illustrating four reasoning pathways employed by Large Language Models (LLMs). The pathways fall into two categories, Single-Path Reasoning and Multi-Path Reasoning, with two variants in each. The diagram shows the flow of information from prompts through LLM processing to reasoning steps.

### Components/Axes

The diagram is divided into four main columns, each representing a different reasoning approach:

1. **CoT, Zero-Shot CoT:** Prompts are fed into an LLM, which then generates a series of sequential reasoning steps (Step-1, Step-2,...Step-n).

2. **ReWOO, HuggingGPT:** As in the first column, prompts go to an LLM and the reasoning steps are sequential, but an additional LLM block sits between consecutive steps.

3. **CoT-SC:** Prompts are fed into an LLM, which then branches into multiple parallel reasoning paths (Step-1, Step-2,...Step-n) at each stage.

4. **ToT, LMZSP, RAP:** Prompts are fed into an LLM, which then branches into multiple parallel reasoning paths (Step-1, Step-2, Step-3) at each stage.

Each column includes the following components:

* **Prompts:** Represented by rectangular boxes at the top.

* **LLM:** Represented by a rounded rectangle with a chip icon inside.

* **Reasoning Steps/Step-n:** Represented by rectangular boxes, indicating sequential or parallel reasoning processes.

* **Arrows:** Indicate the flow of information between components.

* **Labels:** Text labels identify each component and the overall reasoning approach.

### Detailed Analysis

Let's analyze each column:

**1. Single-Path Reasoning (CoT, Zero-Shot CoT):**

* **Prompts:** Input to the system.

* **LLM:** Processes the prompts.

* **Reasoning Step-1:** The LLM generates the first reasoning step.

* **Reasoning Step-2:** The LLM continues the reasoning process.

* **Reasoning Step-n:** The final reasoning step in the sequence.

* The flow is strictly sequential, with each step building upon the previous one.
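
The single-path flow in this column can be sketched in a few lines of Python. This is a minimal illustration, not the method of any particular paper: `toy_llm` is a hypothetical stand-in for a real model call, and the assumption that the model writes one reasoning step per line is made only so the completion can be split into steps. The trigger phrase is the one commonly associated with Zero-Shot CoT.

```python
from typing import Callable, List

# Trigger phrase commonly used for Zero-Shot CoT; appended so the model writes out its steps.
COT_TRIGGER = "Let's think step by step."


def single_path_reasoning(prompt: str, llm: Callable[[str], str]) -> List[str]:
    """Make one LLM call and split the completion into ordered reasoning steps."""
    completion = llm(f"{prompt}\n{COT_TRIGGER}")
    # Assumption: the model emits one reasoning step per non-empty line.
    return [line.strip() for line in completion.splitlines() if line.strip()]


def toy_llm(text: str) -> str:
    """Hypothetical stand-in for a real model call; returns a fixed three-step chain."""
    return (
        "Step-1: restate the question\n"
        "Step-2: work through it\n"
        "Step-n: state the answer"
    )


steps = single_path_reasoning("What is 17 + 25?", toy_llm)
for step in steps:
    print(step)
```

The point of the sketch is structural: there is one call and one chain, so an early mistake propagates to every later step.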

**2. Single-Path Reasoning (ReWOO, HuggingGPT):**

* **Prompts:** Input to the system.

* **LLM:** Processes the prompts.

* **Reasoning Step-1:** The LLM generates the first reasoning step.

* **LLM:** The output of the first step is fed back into another LLM.

* **Reasoning Step-2:** The second LLM generates the next reasoning step.

* **LLM:** The output of the second step is fed back into another LLM.

* **Reasoning Step-n:** The final reasoning step in the sequence.

* The flow is sequential, with a separate LLM call between consecutive steps.
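
The interleaved pattern described for this column, where each step's output becomes the input of the next LLM call, might look roughly like the following. It mirrors only the flow described here and does not attempt to reproduce the actual ReWOO or HuggingGPT pipelines; `toy_step_llm` and the `calls` log are hypothetical helpers added for illustration.

```python
from typing import Callable, List


def interleaved_reasoning(prompt: str, llm: Callable[[str], str], n_steps: int) -> List[str]:
    """Run n_steps LLM calls in sequence, feeding each output into the next call."""
    steps: List[str] = []
    current = prompt
    for _ in range(n_steps):
        current = llm(current)  # the previous step's output is the next call's input
        steps.append(current)
    return steps


calls: List[str] = []  # records every input the toy model receives


def toy_step_llm(text: str) -> str:
    """Hypothetical stand-in for a model call; tags its input with the call number."""
    calls.append(text)
    return f"{text} -> step{len(calls)}"


trace = interleaved_reasoning("prompt", toy_step_llm, 3)
print(trace[-1])
```

Compared with a single call, this layout costs one LLM invocation per step but lets each call work from the latest intermediate result.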

**3. Multi-Path Reasoning (CoT-SC):**

* **Prompts:** Input to the system.

* **LLM:** Processes the prompts.

* **Step-1:** The LLM branches into multiple parallel reasoning paths.

* **Step-2:** Each path continues to branch into multiple parallel reasoning paths.

* **Step-n:** The final reasoning steps in each parallel path.

* The flow is parallel, with multiple reasoning paths explored simultaneously.
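
As a rough sketch of how several parallel paths can be reduced to one answer: self-consistency, as usually described, samples multiple reasoning chains for the same prompt and keeps the most frequent final answer. The voting step is not part of the diagram description above and is included here as an assumption. `toy_sampler` is hypothetical, and its `path_index` argument stands in for the randomness of real sampling.

```python
from collections import Counter
from typing import Callable, List, Tuple

Chain = List[str]  # a chain is a list of steps; the last element is the final answer


def self_consistency(
    prompt: str, sample_chain: Callable[[str, int], Chain], k: int
) -> Tuple[str, List[Chain]]:
    """Sample k reasoning chains for one prompt and majority-vote on their final answers."""
    chains = [sample_chain(prompt, i) for i in range(k)]
    answers = [chain[-1] for chain in chains]
    winner, _count = Counter(answers).most_common(1)[0]
    return winner, chains


def toy_sampler(prompt: str, path_index: int) -> Chain:
    """Hypothetical sampler: every third path makes an arithmetic slip."""
    answer = "41" if path_index % 3 == 0 else "42"
    return ["Step-1: split 17 + 25 into 17 + 20 + 5", "Step-2: add the parts", answer]


best, all_chains = self_consistency("What is 17 + 25?", toy_sampler, k=5)
print(best)
```

With five paths, two of them reach "41" and three reach "42", so the vote returns "42" even though some individual chains are wrong.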

**4. Multi-Path Reasoning (ToT, LMZSP, RAP):**

* **Prompts:** Input to the system.

* **LLM:** Processes the prompts.

* **Step-1:** The LLM branches into multiple parallel reasoning paths.

* **Step-2:** Each path continues to branch into multiple parallel reasoning paths.

* **Step-3:** The final reasoning steps in each parallel path.

* The flow is parallel, with multiple reasoning paths explored simultaneously.
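
The branching in this column can be approximated by a small breadth-first search that expands every partial path at each stage and keeps only the best few. This is a simplified sketch rather than the ToT, LMZSP, or RAP algorithm: `propose` and `score` are hypothetical callbacks standing in for LLM-generated candidate steps and an evaluation of partial paths, and pruning to a fixed `beam_width` is an assumption made to keep the tree small.

```python
from typing import Callable, List, Tuple

Path = Tuple[str, ...]  # a partial reasoning path: the steps chosen so far


def tree_search(
    prompt: str,
    propose: Callable[[str, Path], List[str]],
    score: Callable[[Path], float],
    depth: int,
    beam_width: int,
) -> List[Path]:
    """Expand every kept path at each level, then keep the beam_width best candidates."""
    frontier: List[Path] = [()]  # start from the empty path
    for _ in range(depth):
        candidates = [path + (step,) for path in frontier for step in propose(prompt, path)]
        candidates.sort(key=score, reverse=True)  # stable sort: ties keep expansion order
        frontier = candidates[:beam_width]
    return frontier


def toy_propose(prompt: str, path: Path) -> List[str]:
    """Hypothetical proposer: always offers the same two next steps."""
    return ["a", "b"]


def toy_score(path: Path) -> float:
    """Hypothetical evaluator: prefers paths containing more 'a' steps."""
    return float(path.count("a"))


best_paths = tree_search("prompt", toy_propose, toy_score, depth=3, beam_width=2)
print(best_paths[0])
```

Unlike independent parallel chains, paths here share prefixes, so a weak early step can be abandoned before more calls are spent on it.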

### Key Observations

* The diagram highlights a shift from sequential (single-path) to parallel (multi-path) reasoning approaches.

* The ReWOO/HuggingGPT approach introduces iterative LLM calls within a single path.

* Multi-path reasoning allows for exploration of multiple potential solutions or lines of thought concurrently.

* The number of steps (n) is variable and represents the depth of the reasoning process.

### Interpretation

The diagram contrasts reasoning strategies for LLMs. CoT and Zero-Shot CoT rely on a single, sequential chain of thought. ReWOO and HuggingGPT keep a single path but place additional LLM calls between steps. CoT-SC, ToT, LMZSP, and RAP explore several reasoning paths instead of one, potentially leading to more robust and accurate results. The branching structure in the multi-path columns suggests a tree-like search process, in which the LLM explores different possibilities before converging on a final answer.

The diagram is a conceptual representation of these processes and does not provide data on performance or efficiency. It serves as a visual aid for understanding the different design choices in LLM reasoning.