## Diagram: Comparison of Reasoning Architectures for Large Language Models (LLMs)

### Overview

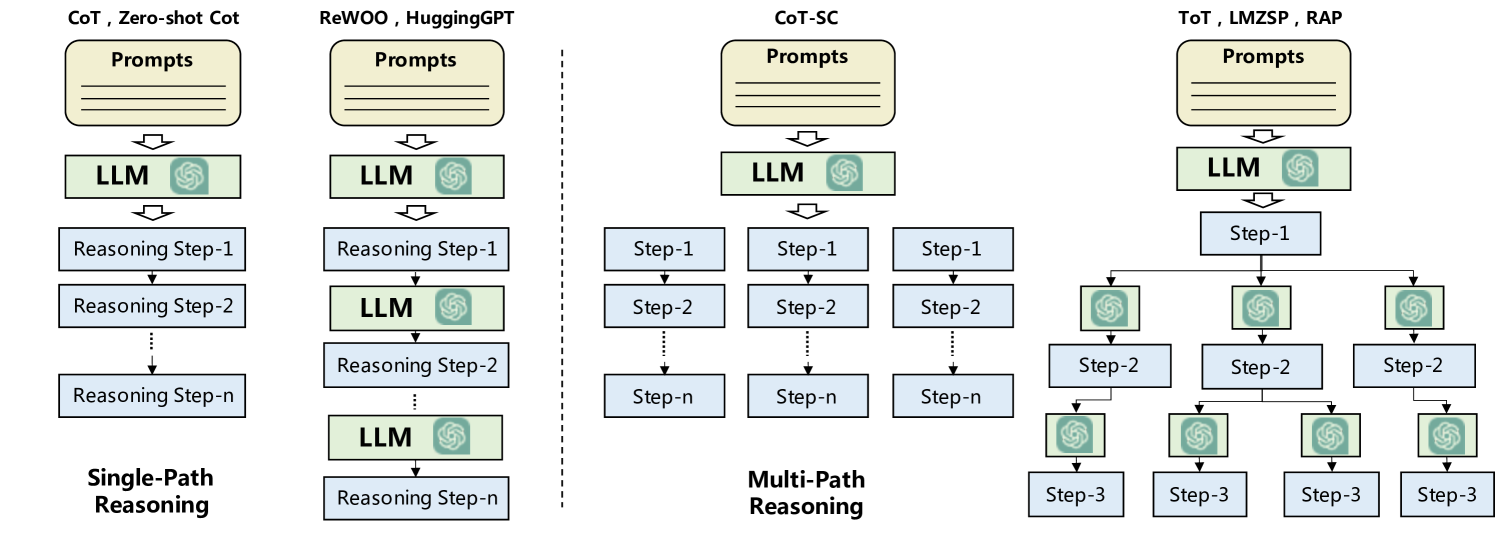

The image is a technical diagram illustrating and comparing four distinct architectural patterns for prompting and reasoning with Large Language Models (LLMs). The diagram is divided into two primary categories, separated by a vertical dashed line: **Single-Path Reasoning** on the left and **Multi-Path Reasoning** on the right. Each category contains two specific methodological examples, depicted as flowcharts showing the flow from prompts to final reasoning steps.

### Components/Axes

The diagram is not a chart with numerical axes but a conceptual flowchart. Its components are:

* **Method Labels:** Four distinct method groupings are labeled at the top of each flowchart.

* **Process Blocks:** Standardized shapes represent different stages:

    * **Beige Rounded Rectangle:** Labeled "Prompts". This is the input stage.

    * **Light Green Rectangle with LLM Icon:** Labeled "LLM". This represents a call to a Large Language Model.

    * **Light Blue Rectangle:** Labeled with reasoning steps (e.g., "Reasoning Step-1", "Step-1"). These represent intermediate or final outputs.

* **Flow Arrows:** Black arrows indicate the direction of data or control flow between blocks.

* **Dashed Vertical Line:** Visually separates the "Single-Path" and "Multi-Path" reasoning paradigms.

### Detailed Analysis

The diagram details four specific reasoning architectures:

**1. Single-Path Reasoning (Left Side)**

* **Method 1: CoT, Zero-shot CoT**

    * **Flow:** `Prompts` -> `LLM` -> `Reasoning Step-1` -> `Reasoning Step-2` -> ... -> `Reasoning Step-n`.

    * **Description:** A linear, sequential chain. A single prompt is fed to an LLM, which generates a series of reasoning steps in a strict sequence.

* **Method 2: ReWOO, HuggingGPT**

    * **Flow:** `Prompts` -> `LLM` -> `Reasoning Step-1` -> `LLM` -> `Reasoning Step-2` -> ... -> `LLM` -> `Reasoning Step-n`.

    * **Description:** A linear chain with iterative LLM calls. After each reasoning step, the output is fed back into the LLM to generate the next step, allowing for refinement or tool use between steps.
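The two single-path flows can be sketched roughly as follows. This is a minimal illustration of the control flow only, not the implementation of any of the named methods: `LLM`, `single_call_chain`, `iterative_chain`, and `CountingLLM` are hypothetical names, and the model is assumed to be a plain callable from prompt text to output text.

```python
from typing import Callable, List

# Assumption: a model call is any function from prompt text to output text.
LLM = Callable[[str], str]


def single_call_chain(llm: LLM, prompt: str) -> List[str]:
    """First pattern: one LLM call whose output contains every reasoning step."""
    output = llm(prompt)
    return [line.strip() for line in output.splitlines() if line.strip()]


def iterative_chain(llm: LLM, prompt: str, n_steps: int) -> List[str]:
    """Second pattern: one LLM call per step, each call seeing the steps so far."""
    steps: List[str] = []
    for _ in range(n_steps):
        context = "\n".join([prompt, *steps])
        steps.append(llm(context).strip())
    return steps


class CountingLLM:
    """Toy wrapper (hypothetical) that counts how often the model is called."""

    def __init__(self, fn: LLM) -> None:
        self.fn = fn
        self.calls = 0

    def __call__(self, prompt: str) -> str:
        self.calls += 1
        return self.fn(prompt)
```

Wrapping a stub model in `CountingLLM` makes the structural difference visible: the first function always makes one call, while the second makes `n_steps` calls.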

**2. Multi-Path Reasoning (Right Side)**

* **Method 3: CoT-SC (Self-Consistency)**

    * **Flow:** A single `Prompts` block feeds into one `LLM` block. The output of this LLM then branches into **three parallel, independent chains**. Each chain follows its own linear path: `Step-1` -> `Step-2` -> ... -> `Step-n`.

    * **Description:** Generates multiple independent reasoning paths (chains) from the same initial prompt. The final answer is typically derived by taking a majority vote or consistency check across the outputs of these parallel chains.

* **Method 4: ToT, LMZSP, RAP (Tree of Thoughts, etc.)**

    * **Flow:** `Prompts` -> `LLM` -> `Step-1`. From `Step-1`, the flow branches into **three separate paths**, each starting with its own `LLM` block. Each of these LLM blocks generates a `Step-2`. From each `Step-2`, the flow branches again, with each path leading to another `LLM` block that generates a `Step-3`.

    * **Description:** A tree-like or graph structure. The process explores multiple reasoning trajectories at each step. The system can evaluate, prune, or backtrack from different branches (thoughts) before proceeding, enabling more deliberate and exploratory problem-solving.
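The two multi-path flows can be sketched in the same spirit. The code below is an illustrative assumption rather than the published algorithms: `self_consistency` uses a plain majority vote over final answers, and `tree_search` uses a simple breadth-first search with beam pruning as one possible way to "evaluate and prune" branches. The `sample_chain`, `expand`, and `score` callables are hypothetical placeholders for model calls.

```python
from collections import Counter
from typing import Callable, List, Tuple

Path = List[str]


def self_consistency(sample_chain: Callable[[], Tuple[Path, str]], k: int) -> str:
    """Sample k independent chains and return the most common final answer."""
    answers = [sample_chain()[1] for _ in range(k)]
    return Counter(answers).most_common(1)[0][0]


def tree_search(
    expand: Callable[[Path], List[str]],  # proposes next steps for a path
    score: Callable[[Path], float],       # rates a partial path (higher is better)
    root: str,
    depth: int,
    beam: int,
) -> Path:
    """Expand every kept path, score the candidates, and keep the best `beam`."""
    frontier: List[Path] = [[root]]
    for _ in range(depth):
        candidates = [path + [child] for path in frontier for child in expand(path)]
        if not candidates:
            break  # nothing left to explore
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam]  # pruning step
    return max(frontier, key=score)
```

The chains in `self_consistency` never interact until the final vote, whereas `tree_search` compares partial paths at every level, which mirrors the flat versus hierarchical layouts drawn in the diagram.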

### Key Observations

1. **Structural Dichotomy:** The core visual and conceptual split is between linear (single-path) and branching (multi-path) execution flows.

2. **LLM Call Frequency:** The "ReWOO, HuggingGPT" method is distinct within the single-path category for requiring an LLM call at *every* reasoning step, unlike "CoT", which uses a single initial call.

3. **Parallelism vs. Hierarchy:** "CoT-SC" shows flat parallelism (multiple independent chains), while "ToT, LMZSP, RAP" shows a hierarchical tree where each node can spawn multiple child nodes.

4. **Abstraction Level:** The diagram is high-level and methodological. It does not specify the content of prompts, the nature of the reasoning steps, or the specific LLMs used.
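As a rough way to compare the four layouts, the number of `LLM` blocks drawn for each can be counted as a function of chain length. The sketch below counts blocks as depicted, under the assumption (not stated in the diagram) that the tree branches uniformly at every level; the function name and pattern labels are hypothetical. Note that the parallel-chains layout is drawn with a single `LLM` block, although sampling several chains in practice may involve several sampled completions.

```python
def llm_blocks(pattern: str, n_steps: int, branching: int = 3) -> int:
    """Count LLM blocks as drawn, assuming uniform branching for the tree."""
    if pattern == "single_call":      # one call before the whole chain
        return 1
    if pattern == "iterative":        # one call before every step
        return n_steps
    if pattern == "parallel_chains":  # one block fanning out into chains
        return 1
    if pattern == "tree":             # one call per node: 1 + b + b^2 + ...
        return sum(branching ** level for level in range(n_steps))
    raise ValueError(f"unknown pattern: {pattern}")
```

Under these assumptions, a tree with three levels and three branches per node needs 1 + 3 + 9 = 13 calls, compared with 3 calls for an iterative chain of the same length.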

### Interpretation

This diagram serves as a taxonomy for understanding how prompting strategies have evolved to enhance LLM reasoning capabilities.

* **What it demonstrates:** It visually argues that moving from simple sequential chains (CoT) to methods involving iterative refinement (ReWOO), parallel sampling (CoT-SC), or structured search (ToT) represents an increase in architectural complexity aimed at improving robustness, accuracy, and the ability to solve harder problems.

* **Relationship between elements:** The progression from left to right, and within each category from top to bottom, suggests an evolution in strategy. Single-path methods are simpler and faster but may be brittle. Multi-path methods introduce redundancy (CoT-SC) or explicit exploration and evaluation mechanisms (ToT) to overcome the limitations of a single, potentially flawed, reasoning trajectory.

* **Underlying principle:** The diagram encapsulates the shift from treating the LLM as a one-shot "answer generator" to using it as a "reasoning engine" within a larger, more controlled computational framework. The key innovation highlighted is the management of *multiple potential lines of thought*, either by generating them in parallel or by systematically exploring a tree of possibilities. This is a fundamental concept in applying LLMs to complex tasks requiring planning, search, or verification.