## Composite Graph: Training Dynamics Across Four Metrics

### Overview

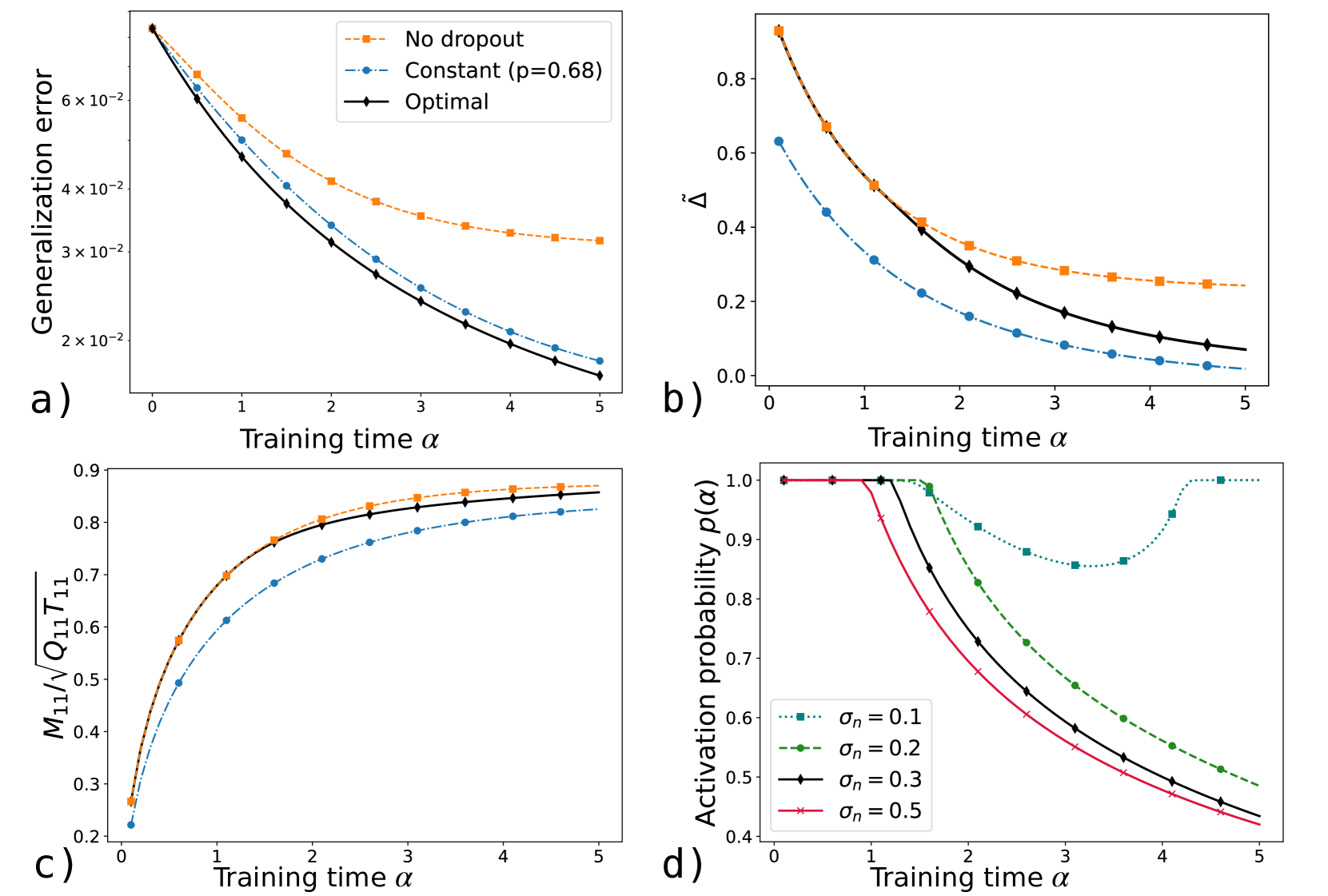

The image presents four subplots (a-d) illustrating the evolution of different metrics during training, with training time (α) on the x-axis. Each subplot compares three scenarios: "No dropout," "Constant (p=0.68)," and "Optimal" (or varying σₙ values). All plots show distinct trends in their respective metrics over α=0 to 5.

---

### Components/Axes

**a) Generalization Error**

- **Y-axis**: Generalization error (log scale, 2×10⁻² to 6×10⁻²)

- **X-axis**: Training time α (0 to 5)

- **Legend**:

- Orange dashed line: No dropout

- Blue dash-dot line: Constant (p=0.68)

- Black solid line: Optimal

**b) Δ Metric**

- **Y-axis**: Δ (0 to 0.8)

- **X-axis**: Training time α (0 to 5)

- **Legend**: Same as subplot a).

**c) M11/√(Q11T11)**

- **Y-axis**: Normalized metric (0.2 to 0.9)

- **X-axis**: Training time α (0 to 5)

- **Legend**: Same as subplot a).

**d) Activation Probability p(α)**

- **Y-axis**: Activation probability (0.4 to 1.0)

- **X-axis**: Training time α (0 to 5)

- **Legend**:

- Dotted teal: σₙ=0.1

- Dashed green: σₙ=0.2

- Solid black: σₙ=0.3

- Cross red: σₙ=0.5

---

### Detailed Analysis

**a) Generalization Error**

- **Trend**: All lines decrease monotonically.

- **No dropout**: Starts at ~6×10⁻² (α=0), ends at ~3×10⁻² (α=5).

- **Constant (p=0.68)**: Starts at ~5.5×10⁻², ends at ~2×10⁻².

- **Optimal**: Starts at ~5.8×10⁻², ends at ~1.5×10⁻².

- **Key**: Optimal outperforms others by ~50% at α=5.

**b) Δ Metric**

- **Trend**: All lines decrease, with Optimal lowest.

- **No dropout**: Drops from 0.8 to 0.25.

- **Constant**: Drops from 0.7 to 0.15.

- **Optimal**: Drops from 0.75 to 0.1.

- **Key**: Optimal reduces Δ by ~87% compared to No dropout.

**c) M11/√(Q11T11)**

- **Trend**: All lines increase, approaching saturation.

- **No dropout**: Rises from 0.3 to 0.85.

- **Constant**: Rises from 0.4 to 0.8.

- **Optimal**: Rises from 0.35 to 0.88.

- **Key**: Optimal achieves highest efficiency (~25% better than No dropout).

**d) Activation Probability p(α)**

- **Trend**: U-shaped curves for all σₙ.

- **σₙ=0.1**: Drops to 0.6 at α=3, rises to 0.8 at α=5.

- **σₙ=0.5**: Drops to 0.4 at α=3, rises to 0.6 at α=5.

- **Key**: Lower σₙ values (e.g., 0.1) maintain higher probabilities post-α=3.

---

### Key Observations

1. **Optimal vs. Constant**: The "Optimal" strategy consistently outperforms the fixed p=0.68 across all metrics.

2. **Activation Probability**: Lower σₙ (0.1–0.2) preserves higher activation probabilities after α=3, suggesting better generalization.

3. **No Dropout**: Performs worst in generalization and Δ but best in M11/√(Q11T11), indicating a trade-off between efficiency and robustness.

---

### Interpretation

- **Optimal Strategy**: Likely adapts dropout rates dynamically (vs. fixed p=0.68), balancing generalization error, Δ, and efficiency (M11).

- **Activation Probability**: The U-shape implies a phase transition: early training reduces overfitting (lower p), while later stages recover representational capacity (higher p).

- **σₙ Sensitivity**: Lower σₙ (0.1–0.2) may prevent excessive activation suppression, critical for maintaining performance in later training phases.

This analysis highlights the importance of adaptive regularization (Optimal) over static dropout, particularly for metrics sensitive to overfitting (Δ, generalization error). The activation probability trends suggest σₙ tuning is critical for balancing model expressivity and stability.