## Screenshot: ChatGPT Joke Comparison (Chinese vs. English)

### Overview

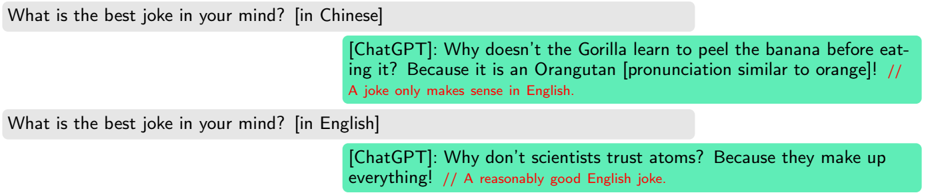

The image is a screenshot of a chat interface showing two consecutive exchanges with an AI model labeled "ChatGPT." The exchanges compare the model's responses to the same prompt ("What is the best joke in your mind?") asked first in Chinese and then in English. User queries appear in gray bubbles and AI responses in green bubbles. Red text annotations comment on each joke.

### Components

* **Layout:** A vertical sequence of four chat bubbles.

* **User Bubbles (Gray):**

  * Top-left: "What is the best joke in your mind? [in Chinese]"

  * Third from top, left-aligned: "What is the best joke in your mind? [in English]"

* **AI Response Bubbles (Green):**

  * Second from top, right-aligned: Contains the Chinese joke and a red annotation.

  * Bottom, right-aligned: Contains the English joke and a red annotation.

* **Annotations (Red Text):** Embedded within the green AI response bubbles, following the joke text and separated by double slashes (`//`).

### Detailed Analysis

**1. First Exchange (Chinese Prompt):**

* **User Query:** `What is the best joke in your mind? [in Chinese]`

* **AI Response (Transcribed):**

  * `[ChatGPT]: Why doesn't the Gorilla learn to peel the banana before eating it? Because it is an Orangutan [pronunciation similar to orange]! //`

* **Red Annotation:** `A joke only makes sense in English.`

* **Language:** The joke is transcribed in English even though it answers a Chinese prompt. The punchline depends on the English words "orangutan" and "orange" sounding alike, which is a near-homophone rather than an exact one.

**2. Second Exchange (English Prompt):**

* **User Query:** `What is the best joke in your mind? [in English]`

* **AI Response (Transcribed):**

  * `[ChatGPT]: Why don't scientists trust atoms? Because they make up everything! //`

* **Red Annotation:** `A reasonably good English joke.`

* **Language:** The joke and annotation are entirely in English.
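The two transcribed exchanges can also be captured as structured records, which makes the prompt language, response, and annotation easy to compare side by side. The following is only a minimal sketch: the `Exchange` class, its field names, and the `split_bubble` helper are hypothetical names introduced here for illustration, and the only data used is the text transcribed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exchange:
    """One user/AI turn pair from the screenshot (field names are hypothetical)."""
    prompt_language: str  # language the user asked in
    user_query: str       # user bubble text, as transcribed
    ai_response: str      # green-bubble text before the `//` separator
    annotation: str       # red text after the `//` separator


def split_bubble(raw: str) -> tuple[str, str]:
    """Split a green bubble into (response, annotation) at the first `//`."""
    response, _, annotation = raw.partition("//")
    return response.strip(), annotation.strip()


# The prompt is the same in both exchanges; only its language differs.
PROMPT = "What is the best joke in your mind?"

# Raw green-bubble text, copied from the transcription above.
RAW_BUBBLES = [
    ("Chinese", "[ChatGPT]: Why doesn't the Gorilla learn to peel the banana "
                "before eating it? Because it is an Orangutan "
                "[pronunciation similar to orange]! // "
                "A joke only makes sense in English."),
    ("English", "[ChatGPT]: Why don't scientists trust atoms? "
                "Because they make up everything! // "
                "A reasonably good English joke."),
]

EXCHANGES = [
    Exchange(language, PROMPT, *split_bubble(raw))
    for language, raw in RAW_BUBBLES
]

for ex in EXCHANGES:
    print(f"{ex.prompt_language} prompt -> annotation: {ex.annotation}")
```

Splitting at the `//` separator keeps the model's output and the red commentary in separate fields, mirroring the distinction between the AI response and the external annotation.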

### Key Observations

* **Identical Prompt, Different Output:** The AI provides two completely different jokes based on the language of the prompt, despite the core question being the same.

* **Linguistic Dependence of Humor:** The red annotation notes that the first joke (given in response to the Chinese prompt) only makes sense in English, showing that humor built on wordplay does not survive translation.

* **Meta-Commentary:** The red text adds a layer of external evaluation on top of the AI's output, judging the linguistic and cultural appropriateness and the quality of each joke.

* **UI Design:** The chat interface uses right-aligned bubbles for the AI and left-aligned bubbles for the user, with distinct background colors to differentiate the roles.

### Interpretation

This screenshot serves as a case study in the challenges of cross-linguistic and cross-cultural AI interaction. It demonstrates that:

1. **Contextual Response Generation:** The AI's output depends on the language of the input, not just its meaning. One plausible explanation is that the model draws on different joke material depending on the prompt language, although the response to the Chinese prompt here still relies on English wordplay.

2. **The Untranslatability of Certain Humor:** The first joke relies on an English-specific sound resemblance ("orangutan"/"orange"), so it becomes nonsensical when translated directly, as the red annotation points out. This underscores how humor is often embedded in the phonetics and culture of a specific language.

3. **AI Self-Awareness (or Lack Thereof):** The red annotations are not part of the standard ChatGPT response format and appear to have been added by a human reviewer or a separate system. They evaluate the AI's performance, judging the first joke as linguistically inappropriate for the context and the second as "reasonably good." Nothing in the transcribed responses suggests that the model itself recognized the mismatch. The result is a layered dialogue: User -> AI -> Critic.

4. **Practical Implication:** For users seeking culturally appropriate or genuinely funny content, simply translating a prompt may not yield satisfactory results. The AI's output appears to vary with the prompt language, and humor does not always transfer between languages.

The image is less about the content of the jokes themselves and more about the metadata of the interaction: the relationship between input language, output content, and external evaluation.