## Diagram: Comparison of Decoding Methods in Language Models

### Overview

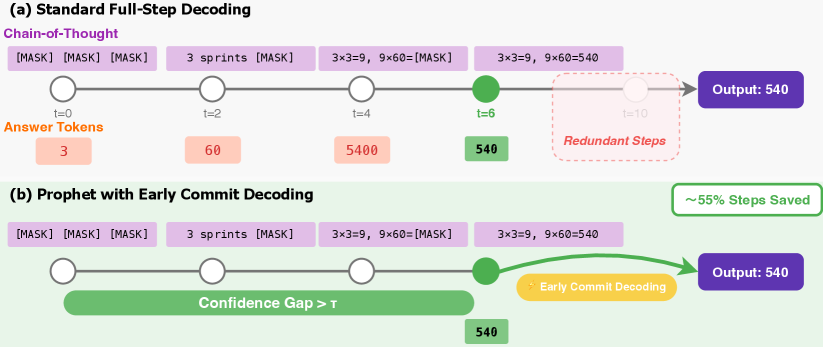

The image compares two decoding strategies in language models:

1. **(a) Standard Full-Step Decoding**: A sequential process with explicit intermediate steps.

2. **(b) Prophet with Early Commit Decoding**: A streamlined approach with confidence-based early termination.

Both methods solve the arithmetic problem "3×3=9, 9×60=?" and output **540**, but (b) reaches the answer in fewer decoding steps.

---

### Components/Axes

#### (a) Standard Full-Step Decoding

- **Timeline (x-axis)**: Steps labeled `t=0`, `t=2`, `t=4`, `t=6`, `t=10`.

- **Y-axis**: Not explicitly labeled; represents stages of decoding.

- **Legend**:

  - **Purple**: Chain-of-Thought (CoT) reasoning.

  - **Orange**: Answer tokens (e.g., "3", "60", "5400").

  - **Green**: Final output ("540").

- **Key Text**:

  - "3 sprints [MASK]"

  - "3×3=9, 9×60=[MASK]"

  - "Redundant Steps" (highlighted at `t=10`).

#### (b) Prophet with Early Commit Decoding

- **Timeline (x-axis)**: Steps labeled `t=0`, `t=2`, `t=4`, `t=6`.

- **Y-axis**: Not explicitly labeled; represents stages of decoding.

- **Legend**:

  - **Green**: Confidence Gap > T (threshold for early termination).

  - **Yellow**: Early Commit Decoding.

  - **Purple**: Final output ("540").

- **Key Text**:

  - "Confidence Gap > T"

  - "Early Commit Decoding"

  - "~55% Steps Saved"

---

### Detailed Analysis

#### (a) Standard Full-Step Decoding

- **Steps**:

  - `t=0`: Initial prompt with `[MASK]` placeholders.

  - `t=2`: Partial answer "3" (orange).

  - `t=4`: Intermediate result "60" (orange).

  - `t=6`: Final result "5400" (orange), then corrected to "540" (green).

  - `t=10`: Redundant steps (dashed red box) after the correct answer is known.

- **Flow**: Linear progression with no early termination.

#### (b) Prophet with Early Commit Decoding

- **Steps**:

  - `t=0`: Initial prompt with `[MASK]` placeholders.

  - `t=2`: Partial answer "3" (orange).

  - `t=4`: Intermediate result "60" (orange).

  - `t=6`: Confidence threshold met (green), triggering early commit.

- **Flow**: Early termination at `t=6` avoids redundant steps.
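The early-commit rule in (b) can be sketched in a few lines. This is a minimal illustration, not the Prophet implementation: the diagram does not define the confidence gap, so the sketch assumes it is the difference between the top-1 and top-2 token probabilities at each position. The names `confidence_gap`, `decode_with_early_commit`, and `threshold`, as well as the input format, are hypothetical.

```python
def confidence_gap(probs):
    """Assumed definition: top-1 minus top-2 probability for one position."""
    if len(probs) < 2:
        raise ValueError("need at least two candidate tokens")
    top1, top2 = sorted(probs, reverse=True)[:2]
    return top1 - top2


def decode_with_early_commit(step_distributions, threshold):
    """Scan decoding steps and commit at the first confident one.

    step_distributions[t][i] is the probability list over candidate tokens
    for position i at step t (hypothetical input format).
    Returns (step_index, committed_token_ids).
    """
    if not step_distributions:
        raise ValueError("no decoding steps given")
    last = len(step_distributions) - 1
    for step, dists in enumerate(step_distributions):
        # Commit only when every position clears the threshold,
        # or when the step budget is exhausted (full-step fallback).
        confident = all(confidence_gap(p) > threshold for p in dists)
        if confident or step == last:
            tokens = [max(range(len(p)), key=p.__getitem__) for p in dists]
            return step, tokens


# Toy example: one answer position, three candidate tokens, four steps.
steps = [
    [[0.40, 0.35, 0.25]],
    [[0.50, 0.30, 0.20]],
    [[0.90, 0.05, 0.05]],
    [[0.95, 0.03, 0.02]],
]
step, tokens = decode_with_early_commit(steps, threshold=0.5)
print(step, tokens)
```

With these toy numbers the gap first exceeds 0.5 at step index 2, so the loop commits there instead of running all four steps. Setting the threshold too high simply falls back to the last step, which corresponds to standard full-step decoding.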

---

### Key Observations

1. **Efficiency**: Prophet with Early Commit Decoding stops at `t=6` and skips the remaining steps that standard decoding runs through `t=10`; the figure annotates this as "~55% Steps Saved".

2. **Accuracy**: Both methods produce the same final output (**540**).

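3. **Confidence Mechanism**: The green "Confidence Gap > T" marker in (b) is the early-commit trigger: once the model's confidence gap exceeds the threshold T, decoding stops and the current answer is committed.

4. **Redundancy**: Standard decoding keeps computing after the correct answer has already appeared (the "Redundant Steps" region at `t=10`).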
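The savings in observation 1 come down to simple arithmetic on step counts. The sketch below uses a hypothetical helper, `steps_saved_fraction`, to make that explicit. Plugging in the labeled ticks from the figure (stop at `t=6` out of `t=10`) gives 40% rather than ~55%; the diagram does not state how its ~55% annotation was measured, so the tick labels may be schematic.

```python
def steps_saved_fraction(full_steps, early_steps):
    """Fraction of decoding steps skipped by stopping at early_steps."""
    if full_steps <= 0:
        raise ValueError("full_steps must be positive")
    if not 0 <= early_steps <= full_steps:
        raise ValueError("early_steps must be between 0 and full_steps")
    return (full_steps - early_steps) / full_steps


# Labeled ticks from the figure: early commit at t=6, full run to t=10.
print(f"{steps_saved_fraction(10, 6):.0%}")  # prints "40%"
```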

---

### Interpretation

The diagram illustrates how **early commit decoding** reduces language model inference cost by using a confidence threshold to stop once the answer is stable, rather than running every remaining step. This matters for real-time applications where latency and resource usage are constrained. The "Confidence Gap > T" check acts as a stopping criterion: in this example it fires after the correct answer (540) has emerged, so no accuracy is lost, though a single example does not show that this holds in general. The ~55% of steps saved, as annotated in the figure, suggests the practical value of adaptive decoding strategies in large-scale NLP systems.