## Simulation Environment Screenshot: AI Training Arena

### Overview

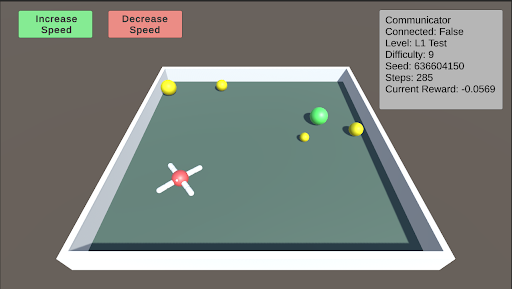

This screenshot shows a 3D simulation environment that appears to be used for training or testing an AI agent, represented here by a drone-like object. The interface consists of control buttons, a status information panel, and a central arena containing the agent and several spheres. The scene is rendered with simple, low-polygon graphics.

### Components

The image is divided into three primary regions:

1. **Header/UI Region (Top):**
   * **Top-Left:** Two rectangular buttons.
     * Green button with white text: `Increase Speed`
     * Red button with white text: `Decrease Speed`
   * **Top-Right:** A semi-transparent gray information panel titled `Communicator`. It contains the following text lines:
     * `Connected: False`
     * `Level: L1 Test`
     * `Difficulty: 9`
     * `Seed: 639604150`
     * `Steps: 1000`
     * `Current Reward: -0.0569`

2. **Main Arena (Center):**
   * A rectangular, green-floored arena with a white border and gray outer walls, viewed from an isometric perspective.
   * **Objects within the arena:**
     * A white, cross-shaped drone with a red central hub, positioned in the lower-left quadrant.
     * A large, bright green sphere located in the center-right area.
     * Five smaller yellow spheres scattered around the arena: two near the top-left corner, one near the top-center, one near the green sphere, and one in the lower-right quadrant.

3. **Background:** A solid, dark gray background surrounds the arena.
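
The `Communicator` panel is a list of `Key: Value` lines, so it can be turned into a typed record for logging or comparison across screenshots. The following is a minimal sketch, not the simulator's own code: the names `PANEL_TEXT`, `parse_value`, and `parse_panel` are hypothetical, and the type rules (booleans, then integers, then floats, otherwise plain strings) are an assumption about how the fields should be read.

```python
# Panel text as transcribed from the screenshot.
# The parsing scheme below is an illustrative assumption.
PANEL_TEXT = """\
Connected: False
Level: L1 Test
Difficulty: 9
Seed: 639604150
Steps: 1000
Current Reward: -0.0569
"""


def parse_value(raw: str):
    """Return raw as bool, int, or float when it parses as one; else as str."""
    if raw in ("True", "False"):
        return raw == "True"
    for cast in (int, float):
        try:
            return cast(raw)
        except ValueError:
            continue
    return raw


def parse_panel(text: str) -> dict:
    """Split each 'Key: Value' line on its first colon and type the value."""
    fields = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # skip blank or malformed lines
        key, _, value = line.partition(":")
        fields[key.strip()] = parse_value(value.strip())
    return fields


panel = parse_panel(PANEL_TEXT)
print(panel)
```

With the values typed, checks such as `panel["Current Reward"] < 0` or `panel["Connected"] is False` become direct comparisons rather than string matches.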

### Detailed Analysis

* **Text Transcription (Communicator Panel):**
  * `Connected: False` - The simulation is not currently connected to an external controlling system or server.
  * `Level: L1 Test` - The name of the current test level or scenario.
  * `Difficulty: 9` - A numerical difficulty setting. The scale is not shown, so whether 9 is high cannot be confirmed from the image alone.
  * `Seed: 639604150` - The random seed used to initialize the simulation's state so that a run can be reproduced.
  * `Steps: 1000` - A step count, most likely the number of simulation steps elapsed, although it could also be the episode's step limit.
  * `Current Reward: -0.0569` - The agent's reward at the moment of capture, presumably accumulated over the episode. The negative value means penalties have so far outweighed any positive rewards under the task's reward function.

* **Spatial Grounding & Object Placement:**
  * The `Communicator` panel is fixed in the **top-right corner** of the screen.
  * The control buttons are fixed in the **top-left corner**.
  * The drone is located at approximately **30% from the left edge and 60% from the top edge** of the arena floor.
  * The large green sphere is at approximately **70% from the left and 40% from the top** of the arena floor.
  * The five yellow spheres are spread across the arena; the two that sit closest together are in the **top-left corner**.
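
The approximate positions above can be turned into a rough separation between the drone and the green sphere. This is a sketch under stated assumptions: positions are treated as fractions of the arena floor's width and height, the helper name `normalized_distance` is hypothetical, and the result ignores both the arena's aspect ratio and the isometric viewing angle, so it is a relative figure rather than a physical distance.

```python
import math

# Approximate positions as (fraction from left, fraction from top)
# of the arena floor, taken from the estimates in the list above.
drone = (0.30, 0.60)
green_sphere = (0.70, 0.40)


def normalized_distance(a, b):
    """Euclidean distance between two points in normalized arena coordinates."""
    return math.hypot(b[0] - a[0], b[1] - a[1])


d = normalized_distance(drone, green_sphere)
print(f"{d:.3f}")  # 0.447
```

A value of about 0.447 means the two objects are a little under half an arena-width apart in these normalized units.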

### Key Observations

1. **Negative Reward State:** The `Current Reward` of `-0.0569` alongside a step count of `1000` is the main performance indicator in the panel. It suggests the agent has not yet completed its objective (e.g., reaching a target or collecting items) and may be incurring penalties for elapsed time, collisions, or inefficient movement. The reward function itself is not shown, so these causes are conjecture.

2. **Difficulty Setting:** A `Difficulty` of `9` likely means the task parameters (e.g., speed of targets, complexity of navigation, precision required) are set to a challenging level, assuming higher values are harder. The range of the scale is not visible in the screenshot.

3. **Disconnected State:** The `Connected: False` status is notable. It could mean the simulation is running autonomously with a pre-trained policy, or that the interface for external control is inactive.

4. **Object Configuration:** The presence of one large green sphere and multiple small yellow spheres suggests a potential task hierarchy. The green sphere may be a primary target or obstacle, while the yellow spheres could be secondary targets, waypoints, or hazards.
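
To put the reward in the first observation in perspective, it can be averaged over the step count. The arithmetic below is a sketch that assumes `Current Reward` is cumulative and `Steps` is the number of steps elapsed; neither is confirmed by the screenshot, and the function name `average_reward_per_step` is hypothetical.

```python
# Values read from the Communicator panel.
current_reward = -0.0569
steps = 1000


def average_reward_per_step(total_reward: float, step_count: int) -> float:
    """Mean reward per step, assuming total_reward accumulated over step_count steps."""
    if step_count <= 0:
        raise ValueError("step_count must be positive")
    return total_reward / step_count


avg = average_reward_per_step(current_reward, steps)
print(f"{avg:.3e}")  # -5.690e-05
```

Under those assumptions the agent loses roughly 0.00006 reward per step on average, a magnitude that would fit a small per-step penalty, although a mix of larger penalties and partial rewards could produce the same total.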

### Interpretation

This screenshot captures a reinforcement learning or AI robotics simulation in progress. The agent (the drone) is operating in a pre-configured environment (`L1 Test`, `Difficulty: 9`, `Seed: 639604150`). At a step count of `1000`, the reward is slightly negative, which indicates the agent has not yet earned enough positive reward to offset its penalties. Possible causes include the difficulty setting, an ineffective policy, or an exploratory phase of learning.

The spatial arrangement implies a navigation or collection task. The agent's position in the lower-left, distant from the objects in the center and top-left, may explain the negative reward: it has not yet reached a high-value area. The `Increase Speed` and `Decrease Speed` buttons suggest a human operator can adjust a speed setting, most plausibly the simulation's time scale, either to observe behavior more closely or to speed up training. The `Connected: False` status is the most ambiguous element; the scene could be a recorded playback, a test of an autonomous system, or a session in which the external training connection is not active.