
## Line Chart: Cost per Sequence vs. Sequence Number

### Overview

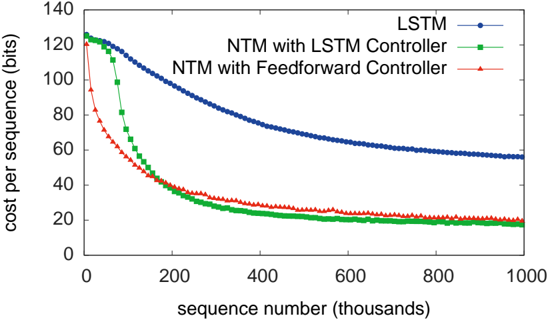

This image presents a line chart illustrating the cost per sequence (in bits) as a function of the sequence number (in thousands). The chart compares the performance of three different models: LSTM, NTM with LSTM Controller, and NTM with Feedforward Controller. All three models demonstrate a decreasing cost per sequence as the sequence number increases, indicating learning and improvement over time.

### Components/Axes

* **X-axis:** Sequence number (thousands). Scale ranges from 0 to 1000 (i.e., up to one million sequences), with tick marks at intervals of 200.

* **Y-axis:** Cost per sequence (bits). Scale ranges from 0 to 140, with tick marks at intervals of 20.

* **Legend:** Located in the top-right corner.

  * LSTM (Blue line with circle markers)

  * NTM with LSTM Controller (Green line with triangle markers)

  * NTM with Feedforward Controller (Red line with diamond markers)

### Detailed Analysis

**LSTM (Blue Line):**

The blue line representing LSTM starts at approximately 122 bits at sequence number 0. It exhibits a decreasing trend, becoming less steep over time.

* At sequence number 200: ~85 bits

* At sequence number 400: ~65 bits

* At sequence number 600: ~55 bits

* At sequence number 800: ~50 bits

* At sequence number 1000: ~47 bits

**NTM with LSTM Controller (Green Line):**

The green line starts at approximately 125 bits at sequence number 0. It shows a rapid initial decrease, followed by a slower decline and a plateau.

* At sequence number 200: ~45 bits

* At sequence number 400: ~25 bits

* At sequence number 600: ~20 bits

* At sequence number 800: ~18 bits

* At sequence number 1000: ~17 bits

**NTM with Feedforward Controller (Red Line):**

The red line begins at approximately 115 bits at sequence number 0, the lowest starting cost of the three models. It drops steeply at first (about 60 bits over the first 200 thousand sequences) and then levels off.

* At sequence number 200: ~55 bits

* At sequence number 400: ~30 bits

* At sequence number 600: ~22 bits

* At sequence number 800: ~20 bits

* At sequence number 1000: ~19 bits
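The "steep at first, then leveling off" shape of each curve can be made concrete by computing the average slope between consecutive readings. The sketch below assumes the approximate values listed above are accurate enough for this purpose; the names `READINGS`, `interval_slopes`, and `is_flattening` are illustrative and not taken from the source.

```python
# Approximate readings transcribed from the chart description above.
# Keys: sequence number (thousands); values: cost per sequence (bits).
# These are eyeballed estimates, not the underlying training logs.
READINGS = {
    "LSTM": {0: 122, 200: 85, 400: 65, 600: 55, 800: 50, 1000: 47},
    "NTM with LSTM Controller": {0: 125, 200: 45, 400: 25, 600: 20, 800: 18, 1000: 17},
    "NTM with Feedforward Controller": {0: 115, 200: 55, 400: 30, 600: 22, 800: 20, 1000: 19},
}


def interval_slopes(points: dict[int, float]) -> list[float]:
    """Average change in cost (bits per thousand sequences) over each
    pair of consecutive sampled sequence numbers."""
    xs = sorted(points)
    return [(points[b] - points[a]) / (b - a) for a, b in zip(xs, xs[1:])]


def is_flattening(slopes: list[float]) -> bool:
    """True if every interval lowers the cost and each decrease is no
    steeper than the one before it (i.e., the curve levels off)."""
    return all(s < 0 for s in slopes) and all(
        abs(later) <= abs(earlier) for earlier, later in zip(slopes, slopes[1:])
    )


if __name__ == "__main__":
    for model, points in READINGS.items():
        slopes = interval_slopes(points)
        formatted = ", ".join(f"{s:+.3f}" for s in slopes)
        print(f"{model}: [{formatted}] flattening={is_flattening(slopes)}")
```

For the LSTM readings this gives slopes of about -0.185, -0.100, -0.050, -0.025 and -0.015 bits per thousand sequences, a steadily shrinking rate of improvement. Comparing average slopes over fixed 200-thousand-sequence intervals keeps the calculation simple; with only six eyeballed points per curve, a finer-grained fit would not add real precision.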

### Key Observations

* All three models show a decreasing cost per sequence, indicating learning.

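* Both NTM variants learn much faster than the LSTM early on. Going by the approximate readings, the NTM with LSTM Controller shows the largest drop over the first 200 thousand sequences (~80 bits), ahead of the NTM with Feedforward Controller (~60 bits) and the LSTM (~37 bits).

* The NTM with LSTM Controller reaches the lowest cost per sequence (~17 bits) and stays below the Feedforward variant from sequence number 200 onward.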

* The LSTM model has the slowest learning rate and the highest final cost per sequence.

* The NTM with LSTM Controller and NTM with Feedforward Controller converge to similar cost per sequence values at higher sequence numbers (around 800-1000).
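These observations can be checked against the approximate readings listed under Detailed Analysis with a short script. This is a sketch that assumes those readings are accurate; the function `summarize` and its three metrics (drop over the first 200 thousand sequences, final cost, and total percentage reduction) are illustrative choices, not part of the source.

```python
# Approximate readings from the chart (bits per sequence), keyed by
# sequence number in thousands. Eyeballed values, not exact data.
READINGS = {
    "LSTM": {0: 122, 200: 85, 400: 65, 600: 55, 800: 50, 1000: 47},
    "NTM with LSTM Controller": {0: 125, 200: 45, 400: 25, 600: 20, 800: 18, 1000: 17},
    "NTM with Feedforward Controller": {0: 115, 200: 55, 400: 30, 600: 22, 800: 20, 1000: 19},
}


def summarize(points: dict[int, float]) -> dict[str, float]:
    """Compute simple learning-curve statistics from sampled readings."""
    xs = sorted(points)
    start, after_first, final = points[xs[0]], points[xs[1]], points[xs[-1]]
    return {
        # Cost reduction over the first sampled interval (0 -> 200 thousand).
        "initial_drop": start - after_first,
        # Cost at the last sampled point (1000 thousand sequences).
        "final_cost": final,
        # Total reduction relative to the starting cost, as a percentage.
        "total_reduction_pct": 100 * (start - final) / start,
    }


if __name__ == "__main__":
    stats = {model: summarize(points) for model, points in READINGS.items()}
    for model, s in stats.items():
        print(f"{model}: drop={s['initial_drop']}, final={s['final_cost']}, "
              f"reduction={s['total_reduction_pct']:.1f}%")
    print("largest initial drop:", max(stats, key=lambda m: stats[m]["initial_drop"]))
    print("lowest final cost:", min(stats, key=lambda m: stats[m]["final_cost"]))
    print("highest final cost:", max(stats, key=lambda m: stats[m]["final_cost"]))
```

Using an absolute drop for early learning speed and a percentage for overall improvement helps separate the two effects, since the three curves start at slightly different costs (115 to 125 bits).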

### Interpretation

The data suggests that Neural Turing Machines (NTMs) outperform standard LSTMs on this task: both NTM variants end at roughly 17 to 19 bits per sequence, versus about 47 bits for the LSTM. The choice of controller matters less. Going by the approximate readings, the LSTM controller gives a somewhat faster early decline and a slightly lower final cost, but the two NTM curves finish within about 2 bits of each other. The LSTM model is still improving at the end of the plotted range, yet its cost per sequence remains substantially higher, indicating that it learns this task less efficiently than the NTM architectures. The flattening of all three curves at higher sequence numbers suggests that the models are approaching their performance limits, or at least that further training yields diminishing returns. Curves like these can be used to compare memory-augmented neural network architectures on sequence learning tasks.