\n

## Comparative Robotic Task Execution Chart: Model Performance on Manipulation Tasks

### Overview

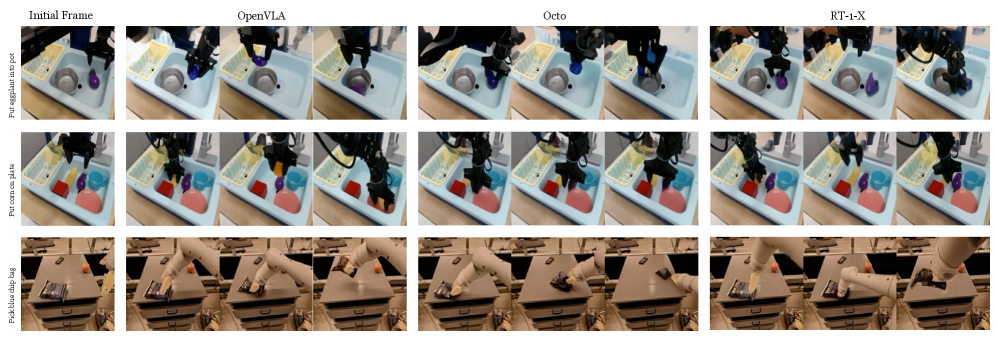

The image is a comparative chart displaying the performance of three different robotic manipulation models (OpenVLA, Octo, RT-1-X) on three distinct tasks. It is structured as a grid with three rows (tasks) and four columns (including the initial state and the three models). Each cell contains a sequence of three frames showing the progression of the robotic arm's attempt to complete the specified task.

### Components/Axes

* **Column Headers (Top Row):** The columns are labeled from left to right:

1. `Initial Frame`

2. `OpenVLA`

3. `Octo`

4. `RT-1-X`

* **Row Labels (Leftmost Column):** The rows are labeled with the task description, written vertically. From top to bottom:

1. `Put eggplant in pot`

2. `Put red block in pink bowl`

3. `Put blue object in bag`

* **Visual Content:** Each cell contains a sequence of three photographic frames showing a robotic arm (a black, multi-jointed arm with a gripper) interacting with objects on a workspace. The workspace appears to be a light blue tray or a wooden table, depending on the task.

### Detailed Analysis

**Task 1: Put eggplant in pot (Top Row)**

* **Initial Frame:** Shows a purple eggplant-shaped object and a small grey pot on a light blue tray. The robotic arm is positioned above.

* **OpenVLA:** The sequence shows the arm descending, grasping the purple object, moving it over the grey pot, and releasing it inside. The final frame shows the object resting within the pot.

* **Octo:** The sequence shows the arm descending and grasping the purple object. In the subsequent frames, the arm moves the object near the pot but appears to place it next to or partially on the rim, not fully inside. The final frame shows the object adjacent to the pot.

* **RT-1-X:** The sequence shows the arm descending, grasping the purple object, and moving it towards the pot. The final frame shows the object being held directly above the pot's opening, poised for release.

**Task 2: Put red block in pink bowl (Middle Row)**

* **Initial Frame:** Shows a red cube, a blue cup, and a pink bowl on a light blue tray. The robotic arm is positioned above.

* **OpenVLA:** The sequence shows the arm descending, grasping the red cube, moving it over the pink bowl, and releasing it. The final frame shows the red cube inside the pink bowl.

* **Octo:** The sequence shows the arm descending and grasping the red cube. In the following frames, the arm moves the cube towards the pink bowl but appears to place it next to the bowl, not inside. The final frame shows the red cube on the tray beside the pink bowl.

* **RT-1-X:** The sequence shows the arm descending, grasping the red cube, and moving it over the pink bowl. The final frame shows the cube being released and falling into the pink bowl.

**Task 3: Put blue object in bag (Bottom Row)**

* **Initial Frame:** Shows a blue rectangular object (resembling a book or box) and a brown paper bag on a wooden table. The robotic arm is positioned above.

* **OpenVLA:** The sequence shows the arm descending, grasping the blue object, moving it over the open bag, and releasing it. The final frame shows the blue object inside the bag.

* **Octo:** The sequence shows the arm descending and attempting to grasp the blue object. The grasp appears less secure. In the following frames, the arm moves the object towards the bag but drops it near the bag's opening. The final frame shows the blue object on the table next to the bag.

* **RT-1-X:** The sequence shows the arm descending, grasping the blue object, and moving it over the bag. The final frame shows the object being released and falling into the bag.

### Key Observations

1. **Performance Consistency:** The `OpenVLA` model successfully completes all three tasks, placing the target object inside the designated container in each case.

2. **Performance Variability:** The `Octo` model fails to complete any of the three tasks as specified. In each case, it grasps the object but places it adjacent to, rather than inside, the target container.

3. **Partial Success:** The `RT-1-X` model shows mixed results. It successfully completes Tasks 2 and 3 (red block in pink bowl, blue object in bag). For Task 1 (eggplant in pot), it positions the object correctly above the pot but the final frame does not show the release, leaving the completion ambiguous.

4. **Task Environment:** Tasks 1 and 2 are performed on a light blue tray with multiple objects present, while Task 3 is performed on a clearer wooden table surface.

### Interpretation

This chart serves as a qualitative benchmark comparing the generalization and precision of three robotic foundation models across varied manipulation tasks requiring pick-and-place actions.

* **What the data suggests:** `OpenVLA` demonstrates robust and reliable performance across different objects (eggplant, block, book), containers (pot, bowl, bag), and environments (tray, table). `Octo` consistently fails the final placement step, suggesting a potential limitation in its spatial understanding or policy for the "place" component of the task. `RT-1-X` performs well on two tasks but shows hesitation or incomplete execution on the third, indicating possible task-specific variability in its policy.

* **How elements relate:** The side-by-side comparison directly contrasts the models' policies for the same initial state. The "Initial Frame" column establishes a controlled starting point, making the differences in the subsequent frames attributable to the models' decision-making.

* **Notable patterns/anomalies:** The most striking pattern is the consistent failure mode of `Octo` (placing beside instead of inside). This is not a random error but a systematic deviation from the task goal. The ambiguity in `RT-1-X`'s first task (holding above the pot) is also notable, as it differs from its clear successes in the other two tasks. The chart effectively highlights that successful grasping does not guarantee successful task completion; the "place" action is a critical differentiator.