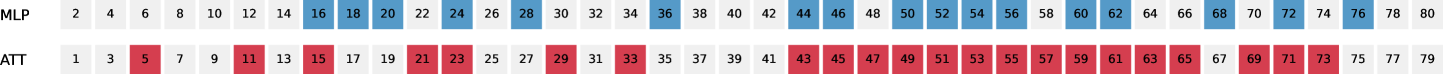

## Heatmap: Attention Distribution Across MLP and ATT Layers

### Overview

The image displays a comparative heatmap of attention distribution for two neural network components: MLP (Multilayer Perceptron) and ATT (Attention Mechanism). Each row covers a sequence of numerical values (2–80), and each value is color-coded by attention level (High, Low, or Neutral). As described below, both rows follow the same pattern: high attention appears only at even values, low attention only at odd values, and neutral cells fall on the remaining even values.

### Components/Axes

- **Rows**:

  - Top row: MLP (Multilayer Perceptron)

  - Bottom row: ATT (Attention Mechanism)

- **Columns**: Numerical values from 2 to 80 (in increments of 2)

- **Legend**:

  - Blue: High Attention

  - Red: Low Attention

  - White: Neutral Attention

### Detailed Analysis

#### MLP Row

- **High Attention (Blue)**:

  - Confined to even-numbered positions: 16, 18, 20, 24, 26, 28, 36, 44, 46, 50, 52, 54, 56, 60, 62, 68, 72, 74, 76, 80.

  - Not present at 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, 78, which are neutral instead.

- **Low Attention (Red)**:

  - Confined to odd-numbered positions: 5, 11, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65, 67, 69, 71, 73, 75, 77, 79.

- **Neutral (White)**:

  - A contiguous block at the start (2, 4, 6, 8, 10, 12, 14), then scattered even positions: 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, 78.

#### ATT Row

- **High Attention (Blue)**:

  - Identical to MLP: 16, 18, 20, 24, 26, 28, 36, 44, 46, 50, 52, 54, 56, 60, 62, 68, 72, 74, 76, 80.

- **Low Attention (Red)**:

  - Identical to MLP: 5, 11, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65, 67, 69, 71, 73, 75, 77, 79.

- **Neutral (White)**:

  - Identical to MLP: 2, 4, 6, 8, 10, 12, 14, 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, 78.
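The position lists for both rows can be cross-checked mechanically. The following is a minimal sketch, assuming the lists are transcribed correctly from this description (not re-measured from the image); the names `ROWS`, `categories_disjoint`, and `unlisted_positions` are hypothetical and introduced only for illustration.

```python
# Positions transcribed from the lists above; not re-measured from the image.
HIGH = {16, 18, 20, 24, 26, 28, 36, 44, 46, 50, 52, 54, 56, 60, 62, 68, 72, 74, 76, 80}
LOW = {5, 11, 15} | set(range(17, 80, 2))  # 5, 11, 15, then every odd value 17..79
NEUTRAL = {2, 4, 6, 8, 10, 12, 14, 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, 78}

# Hypothetical layout: one category -> positions mapping per row.
ROWS = {
    "MLP": {"high": HIGH, "low": LOW, "neutral": NEUTRAL},
    "ATT": {"high": set(HIGH), "low": set(LOW), "neutral": set(NEUTRAL)},
}


def categories_disjoint(row):
    """Return True if no position is assigned to more than one category."""
    sets = list(row.values())
    total = sum(len(s) for s in sets)
    return total == len(set().union(*sets))


def unlisted_positions(row, start=2, stop=80):
    """Return positions in [start, stop] that no category list mentions."""
    listed = set().union(*row.values())
    return [p for p in range(start, stop + 1) if p not in listed]


rows_identical = ROWS["MLP"] == ROWS["ATT"]
unlisted_even = [p for p in unlisted_positions(ROWS["MLP"]) if p % 2 == 0]

print("rows identical:", rows_identical)
print("categories disjoint:", categories_disjoint(ROWS["MLP"]))
print("even positions with no category:", unlisted_even)
```

Under this transcription the three categories are disjoint, the two rows match exactly, and 22 is the only even value between 2 and 80 that none of the lists assigns to a category.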

### Key Observations

1. **Identical Rows**: MLP and ATT show the same attention category at every listed position.

2. **Even-Odd Pattern**: High attention occurs only at even numbers (the even positions 2–14, 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, and 78 are neutral instead), while low attention occurs only at odd numbers.

3. **Neutral Gaps**: Neutral attention occurs in short runs and isolated cells (2–14, 30–34, 38–42, 48, 58, 64–66, 70, 78), suggesting systematic exclusion of certain ranges.
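The grouping of neutral positions into runs can be derived directly from the neutral list. This is a small sketch, assuming columns advance in steps of 2 as stated under Components/Axes; the helper name `even_runs` is hypothetical.

```python
# Neutral positions as listed for both rows in the Detailed Analysis.
NEUTRAL = [2, 4, 6, 8, 10, 12, 14, 30, 32, 34, 38, 40, 42, 48, 58, 64, 66, 70, 78]


def even_runs(positions, step=2):
    """Group positions into (first, last) runs of values spaced exactly `step` apart."""
    runs = []
    for p in sorted(positions):
        if runs and p - runs[-1][1] == step:
            runs[-1][1] = p  # extend the current run
        else:
            runs.append([p, p])  # start a new run
    return [tuple(r) for r in runs]


neutral_runs = even_runs(NEUTRAL)
for first, last in neutral_runs:
    print(first if first == last else f"{first}-{last}")
```

Runs of length one (such as 48 or 58) are reported as single positions rather than ranges, which keeps neighboring high-attention cells like 50–56 out of the neutral intervals.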

### Interpretation

The identical patterns in both MLP and ATT suggest:

- **Shared Prioritization Logic**: Both components may use similar numerical thresholds to determine attention weights.

- **Structural Constraints**: The neutral gaps (e.g., 30–34, 38–42) could indicate architectural limitations or intentional design choices to ignore specific ranges.

- **Potential Artifact**: The exact match between the two rows might reflect a visualization artifact or an intentional design that mirrors attention behavior between components.

### Technical Implications

- **Model Efficiency**: High attention at even numbers may optimize processing for specific tasks (e.g., even-indexed features).

- **Bias Risks**: Consistently low attention at odd positions could introduce bias if odd-indexed data carries critical information.

- **Further Investigation**: Testing with varied input distributions could reveal whether these patterns persist or adapt.