## Table Diagram: MLP and ATT Layer/Unit Selection

### Overview

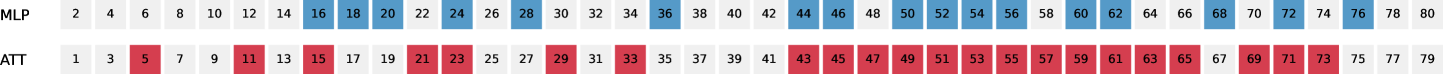

The image displays a two-row table or diagram comparing two entities labeled "MLP" and "ATT". Each row contains a sequence of numbers, with specific numbers highlighted in color. The layout suggests a representation of selected layers, units, or indices within two different model architectures or components.

### Components/Axes

* **Row Labels (Left-aligned):**

  * Top Row: `MLP`

  * Bottom Row: `ATT`

* **Data Series:** Each row consists of a horizontal sequence of numbers contained within individual cells.

* **Color Legend (Implicit):**

  * **Blue Highlight:** Applied to specific numbers in the `MLP` row.

  * **Red Highlight:** Applied to specific numbers in the `ATT` row.

* **Spatial Layout:** The `MLP` row is positioned directly above the `ATT` row. The numbers within each row are arranged sequentially from left to right.

### Detailed Analysis

**1. MLP Row (Top Row):**

* **Trend/Pattern:** The numbers form a complete sequence of the even numbers from 2 to 80 (40 cells). The highlighted (blue) cells form a single contiguous block.

* **Full Sequence (Visible Numbers):** 2, 4, 6, 8, 10, 12, 14, **16, 18, 20, 22, 24, 26, 28, 30, 32, 34, 36, 38, 40, 42, 44, 46, 48, 50, 52, 54, 56, 58, 60, 62, 64, 66, 68, 70, 72, 74, 76**, 78, 80.

* **Highlighted (Blue) Values:** 16, 18, 20, 22, 24, 26, 28, 30, 32, 34, 36, 38, 40, 42, 44, 46, 48, 50, 52, 54, 56, 58, 60, 62, 64, 66, 68, 70, 72, 74, 76.

* **Observation:** The blue highlight covers a contiguous range from 16 to 76, inclusive. This represents 31 consecutive even numbers.
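The count and contiguity claims can be checked mechanically. Below is a minimal Python sketch, assuming the transcription above is accurate. The names `mlp_all`, `mlp_highlighted`, and `is_contiguous` are hypothetical illustrations and do not come from the figure.

```python
# MLP row as transcribed from the figure (hypothetical variable names).
mlp_all = list(range(2, 81, 2))           # every even index 2..80 in the top row
mlp_highlighted = list(range(16, 77, 2))  # contiguous blue block 16..76

def is_contiguous(indices, step=2):
    """Return True if sorted indices advance by exactly `step` with no gaps."""
    return all(b - a == step for a, b in zip(indices, indices[1:]))

print(len(mlp_all))                    # 40
print(len(mlp_highlighted))            # 31
print(is_contiguous(mlp_highlighted))  # True
```

The step is 2 rather than 1 because the row contains only even numbers, so "consecutive" means neighbouring even values.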

**2. ATT Row (Bottom Row):**

* **Trend/Pattern:** The numbers form a complete sequence of the odd numbers from 1 to 79 (40 cells). The highlighted (red) cells are scattered and form several distinct clusters.

* **Full Sequence (Visible Numbers):** 1, 3, **5**, 7, 9, **11**, 13, **15**, 17, 19, **21, 23**, 25, 27, **29**, 31, **33**, 35, 37, 39, 41, **43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65**, 67, **69, 71, 73**, 75, 77, 79.

* **Highlighted (Red) Values:** 5, 11, 15, 21, 23, 29, 33, 43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65, 69, 71, 73.

* **Observation:** The 22 red highlights are not contiguous. They fall into eight runs:

  * Isolated points: 5, 11, 15, 29, 33.

  * A pair: 21, 23.

  * A large, dense block: 43 through 65 (all 12 odd numbers in this range).

  * A final cluster: 69, 71, 73.
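The run decomposition above can be reproduced by splitting the sorted indices wherever the gap to the previous index exceeds one step. The step is 2 because the row holds only odd numbers. Below is a minimal Python sketch. `group_runs` and `att_highlighted` are hypothetical names, and the values are copied from the highlighted list above.

```python
# Highlighted (red) ATT cells as transcribed from the figure.
att_highlighted = [5, 11, 15, 21, 23, 29, 33,
                   *range(43, 66, 2),  # dense block 43..65
                   69, 71, 73]

def group_runs(indices, step=2):
    """Split sorted indices into runs whose neighbours differ by exactly `step`."""
    runs = []
    for i in sorted(indices):
        if runs and i - runs[-1][-1] == step:
            runs[-1].append(i)  # extend the current run
        else:
            runs.append([i])    # gap found: start a new run
    return runs

for run in group_runs(att_highlighted):
    print(run[0] if len(run) == 1 else f"{run[0]}-{run[-1]} ({len(run)} cells)")
# 5
# 11
# 15
# 21-23 (2 cells)
# 29
# 33
# 43-65 (12 cells)
# 69-73 (3 cells)
```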

### Key Observations

1. **Structural Contrast:** The `MLP` selection is a single, unbroken block of indices. The `ATT` selection is fragmented, with one large central block and several smaller, outlying selections.

2. **Index Parity:** The `MLP` row uses only even numbers and the `ATT` row uses only odd numbers. As a result, the two rows never share an index, and together they cover every integer from 1 to 80 exactly once. This suggests a deliberate interleaving scheme.

3. **Density:** The `MLP` row has 31 of its 40 cells highlighted, spread evenly across 16-76. The `ATT` row has 22 of 40 highlighted. More than half of those (12) fall in the single block 43-65, and the rest are scattered around it.

4. **Legend:** There is no separate legend box, so color meaning is tied to the row. Blue appears only in the `MLP` (top) row, and red appears only in the `ATT` (bottom) row.
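The parity and density observations can be checked against the transcribed values. Below is a minimal Python sketch. The variable names are hypothetical, and the highlighted sets are copied from the lists in the Detailed Analysis.

```python
# Rows and highlighted cells as transcribed from the figure.
mlp_row = range(2, 81, 2)  # even indices 2..80
att_row = range(1, 80, 2)  # odd indices 1..79
mlp_hl = set(range(16, 77, 2))
att_hl = {5, 11, 15, 21, 23, 29, 33, *range(43, 66, 2), 69, 71, 73}

# Index parity: the rows are disjoint and together tile 1..80 exactly once.
assert set(mlp_row).isdisjoint(att_row)
assert sorted([*mlp_row, *att_row]) == list(range(1, 81))

# Density: highlighted cells per row, and the share of ATT highlights in 43..65.
core = {i for i in att_hl if 43 <= i <= 65}
print(f"MLP: {len(mlp_hl)}/{len(mlp_row)} highlighted")  # MLP: 31/40 highlighted
print(f"ATT: {len(att_hl)}/{len(att_row)} highlighted")  # ATT: 22/40 highlighted
print(f"ATT core 43-65: {len(core)}/{len(att_hl)}")      # ATT core 43-65: 12/22
```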

### Interpretation

This diagram likely visualizes the **selection or activation pattern of layers, attention heads, or neurons** in a neural network analysis. The labels `MLP` (Multi-Layer Perceptron) and `ATT` (Attention) are standard components in transformer architectures.

* **What the data suggests:** The pattern implies a comparative study. The `MLP` component has a broad, continuous region of interest (indices 16-76). The `ATT` component shows a less uniform pattern: a dense core (43-65) flanked by smaller selections. Before the core there are several isolated indices and a pair (5-33), and after it there is a three-index cluster (69-73).

* **How elements relate:** The two rows span the same overall index range (1-80) but use complementary parities, so no index appears in both. This lets the reader compare the "footprint" of each component type position by position.

* **Notable anomalies/patterns:** The strict even/odd separation is the most striking pattern. One possibility is that the indices number two interleaved sequences. For example, they could number the attention and MLP sublayers of a 40-block transformer consecutively, with attention at odd positions and MLP at even positions. Another possibility is that the analysis covered two distinct, non-overlapping sets of units. The large contiguous block in `ATT` (43-65) may indicate a functionally important region of the attention mechanism. The scattered early indices (5, 11, 15, etc.) may correspond to specialized or early-processing attention heads.
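Under the interleaving reading, each index can be translated into a block number. Below is a minimal Python sketch. It rests on a hypothetical assumption that the figure does not state: indices 1-80 number the sublayers of a 40-block transformer, with attention at odd positions and MLP at even positions. `to_block` is an illustrative name.

```python
def to_block(index: int) -> tuple[int, str]:
    """Map a 1-based interleaved index to (1-based block number, sublayer kind).

    Hypothetical convention: block k owns index 2k-1 (attention) and 2k (MLP).
    """
    block = (index + 1) // 2
    kind = "ATT" if index % 2 == 1 else "MLP"
    return block, kind

# Blue MLP block and dense red ATT block, as transcribed from the figure.
mlp_blocks = [to_block(i)[0] for i in range(16, 77, 2)]
att_core_blocks = [to_block(i)[0] for i in range(43, 66, 2)]

print(to_block(5))                              # (3, 'ATT')
print(mlp_blocks[0], mlp_blocks[-1])            # 8 38
print(att_core_blocks[0], att_core_blocks[-1])  # 22 33
```

If this convention held, the blue MLP selection would span blocks 8-38, and the dense ATT core would span blocks 22-33. The attention core would then sit inside the MLP range.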