## Line Chart: Average Reward vs. Training Steps

### Overview

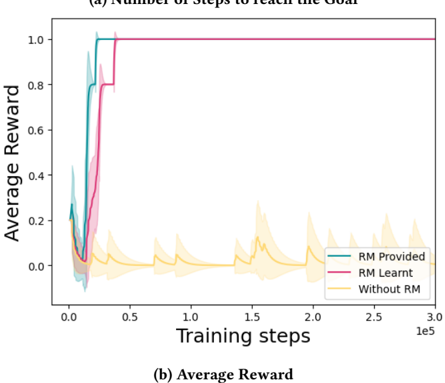

The image is a line chart comparing the learning performance of three reinforcement learning conditions over the course of training, plotting "Average Reward" against "Training steps." Two panel captions are visible: "(a) Number of steps to reach the Goal" at the top and "(b) Average Reward" at the bottom. This suggests the chart is one panel of a larger multi-panel figure. Because the plotted quantity is average reward, "(b)" is most likely this panel's caption, and "(a)" probably belongs to a neighboring panel. The chart shows a clear performance hierarchy among the tested conditions.

### Components/Axes

* **Title (Top):** "(a) Number of steps to reach the Goal"

* **Subtitle (Bottom):** "(b) Average Reward"

* **Y-Axis:**
    * **Label:** "Average Reward"
    * **Scale:** Linear, from 0.0 to 1.0.
    * **Tick Marks:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **X-Axis:**
    * **Label:** "Training steps"
    * **Scale:** Linear, from 0.0 to 3.0, with a multiplier of `1e5` (100,000). The effective range is 0 to 300,000 steps.
    * **Tick Marks:** 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0 (all values to be multiplied by 1e5).

* **Legend:** Located in the bottom-right quadrant of the chart area.
    * **Entry 1:** "RM Provided", represented by a teal/cyan line.
    * **Entry 2:** "RM Learnt", represented by a magenta/pink line.
    * **Entry 3:** "Without RM", represented by a yellow/gold line.

* **Data Series:** Each line is accompanied by a semi-transparent shaded band of the same color. It most likely shows the spread across multiple runs (for example, one standard deviation or a confidence interval); the chart does not say which.
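
Bands like these are typically computed per training step from several independent runs (seeds). The sketch below assumes that setup. The function name `reward_band`, the choice between a one-standard-deviation band and a normal-approximation confidence interval, and the toy data are all illustrative assumptions, not details taken from the figure's source.

```python
import math
from statistics import mean, stdev


def reward_band(runs, z=None):
    """Per-step mean and (lower, upper) band across runs.

    runs: list of equal-length lists, one reward curve per run (seed).
    If z is None the band is mean +/- one sample standard deviation;
    otherwise it is mean +/- z * standard error (e.g. z=1.96 for an
    approximate 95% normal confidence interval). Hypothetical helper.
    """
    n = len(runs)
    if n < 2:
        raise ValueError("need at least two runs to estimate spread")
    length = len(runs[0])
    if any(len(r) != length for r in runs):
        raise ValueError("all runs must have the same length")

    means, lows, highs = [], [], []
    for t in range(length):
        values = [r[t] for r in runs]       # rewards of every run at step t
        m = mean(values)
        s = stdev(values)                   # sample std (n - 1 denominator)
        half = s if z is None else z * s / math.sqrt(n)
        means.append(m)
        lows.append(m - half)
        highs.append(m + half)
    return means, lows, highs


# Three toy runs (not data from the figure), evaluated at three steps.
runs = [[0.0, 1.0, 1.0], [0.2, 1.0, 1.0], [0.1, 1.0, 1.0]]
means, lows, highs = reward_band(runs)
```

A one-standard-deviation band shows the run-to-run spread directly, while the `z`-scaled standard error narrows as more runs are added. Once all runs agree, as after convergence, both collapse to a line, which matches the very narrow bands described for the RM-based conditions.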

### Detailed Analysis

**Trend Verification & Data Points:**

1. **"RM Provided" (Teal Line):**
    * **Trend:** Starts near 0 reward. Exhibits an extremely rapid, near-vertical ascent beginning very early in training (approximately 0.1e5 steps). Reaches the maximum reward of 1.0 by roughly 0.2e5 (20,000) steps and remains flat at 1.0 for the rest of training (up to 300,000 steps).
    * **Key Points:** (0, ~0.0), (~0.15e5, 1.0), (0.2e5 to 3.0e5, 1.0).

2. **"RM Learnt" (Magenta Line):**
    * **Trend:** Also starts near 0. Shows a rapid ascent, but with a delay relative to "RM Provided": the steep climb begins around 0.2e5 steps. It reaches the maximum reward of 1.0 by approximately 0.4e5 (40,000) steps and then plateaus at 1.0 for the rest of training.
    * **Key Points:** (0, ~0.0), (~0.3e5, ~0.8), (0.4e5 to 3.0e5, 1.0).

3. **"Without RM" (Yellow Line):**
    * **Trend:** Shows no sustained learning trend. The line fluctuates noisily in the low-reward region, mostly between 0.0 and 0.2, with occasional spikes up to ~0.25, and never approaches the reward levels of the other two conditions. Its shaded band is notably wider, indicating high variability across runs.
    * **Key Points:** Fluctuates around a mean of approximately 0.05-0.10 throughout the entire 300,000 steps, with no clear upward trajectory.
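
To make the speed comparison concrete, the sketch below estimates when each curve first reaches a given reward. It linearly interpolates between approximate readings taken from the trend descriptions and key points above, not from the underlying data. The helper `steps_to_threshold` is hypothetical, and piecewise-linear interpolation between readings is an assumption about the curve shape.

```python
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]  # (training step, average reward)


def steps_to_threshold(points: Sequence[Point], threshold: float) -> Optional[float]:
    """First step at which a piecewise-linear reward curve reaches `threshold`.

    `points` must be sorted by step. Returns None if the curve never
    reaches the threshold (as for the "Without RM" condition).
    """
    if not points:
        return None
    x0, y0 = points[0]
    if y0 >= threshold:
        return x0
    for x1, y1 in points[1:]:
        if y1 >= threshold:
            # Here y0 < threshold <= y1, so y1 > y0 and the division is safe.
            return x0 + (threshold - y0) / (y1 - y0) * (x1 - x0)
        x0, y0 = x1, y1
    return None


# Approximate readings from the description above (not the raw data).
RM_PROVIDED = [(0, 0.0), (10_000, 0.0), (15_000, 1.0)]
RM_LEARNT = [(0, 0.0), (20_000, 0.0), (30_000, 0.8), (40_000, 1.0)]

for threshold in (0.8, 1.0):
    provided = steps_to_threshold(RM_PROVIDED, threshold)
    learnt = steps_to_threshold(RM_LEARNT, threshold)
    print(f"reward >= {threshold}: provided {provided:,.0f}, "
          f"learnt {learnt:,.0f}, delay {learnt - provided:,.0f} steps")
```

With these readings the estimated lag of "RM Learnt" is about 16,000 steps at the 0.8 threshold and about 25,000 steps at 1.0. Given how coarse the readings are, these figures are rough.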

### Key Observations

* **Performance Hierarchy:** There is a stark and unambiguous performance difference. Conditions with an RM (expanded in this description as "Reward Model"; the legend itself shows only the abbreviation), whether provided or learnt, achieve perfect performance (average reward = 1.0) quickly and stably. The condition without an RM fails to learn the task effectively.

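* **Learning Speed:** "RM Provided" learns fastest, reaching 1.0 by roughly 20,000 steps, while "RM Learnt" reaches 1.0 by roughly 40,000 steps, a small lag relative to the 300,000-step run. The delay in "RM Learnt" likely reflects the time needed to learn the reward model itself before the policy can be optimized against it.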

* **Stability:** The "RM Provided" and "RM Learnt" conditions show virtually no variance (very narrow shaded areas) after convergence, indicating highly reliable performance. The "Without RM" condition shows high variance, indicating unreliable and unstable behavior.

* **Convergence:** Both RM-based methods converge to 1.0, the top of the y-axis and apparently the maximum attainable reward, and stay there, suggesting they solve the task completely.
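
One way to check "converge and stay there" numerically is to require every evaluation in a final window to lie near the target, in every run. Requiring it per run also captures the narrow-band stability noted above. The sketch assumes per-run evaluation curves are available. The function names, window size, tolerance, and toy curves are hypothetical, chosen only for illustration.

```python
from typing import Sequence


def stays_at_target(curve: Sequence[float], target: float = 1.0,
                    window: int = 10, tol: float = 0.01) -> bool:
    """True if each of the last `window` values lies within `tol` of `target`."""
    if len(curve) < window:
        return False  # too short to judge a plateau
    return all(abs(v - target) <= tol for v in curve[-window:])


def converged_in_all_runs(runs: Sequence[Sequence[float]], **kwargs) -> bool:
    """Stricter check: every run (seed) must plateau, so the band is narrow too."""
    return bool(runs) and all(stays_at_target(r, **kwargs) for r in runs)


# Hypothetical toy curves (not data from the figure), one list per run.
learnt_like = [[0.0, 0.3, 0.8, 1.0, 1.0, 1.0],
               [0.0, 0.1, 0.9, 1.0, 1.0, 1.0]]
without_rm_like = [[0.05, 0.2, 0.0, 0.1, 0.25, 0.05],
                   [0.1, 0.0, 0.15, 0.05, 0.1, 0.2]]

print(converged_in_all_runs(learnt_like, window=3))      # True
print(converged_in_all_runs(without_rm_like, window=3))  # False
```

A pointwise tolerance is stricter than comparing a window average to the target. A noisy curve whose mean happens to sit near 1.0 would still fail, which fits the "stay there" reading of convergence.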

### Interpretation

This chart provides strong empirical evidence for the critical role of a well-defined reward signal in reinforcement learning for this specific task.

* **The Necessity of a Reward Model:** The failure of "Without RM" suggests that, at least within the 300,000 training steps shown, the task is not solved through exploration or other mechanisms alone; the guidance an RM provides appears necessary for learning.

* **Effectiveness of Provided vs. Learnt Models:** The near-identical final performance of "RM Provided" and "RM Learnt" suggests that, for this task, a learnt reward model can be just as effective as a hand-engineered or provided one. The slight delay in learning is a reasonable trade-off for the automation of reward design.

* **Task Characteristics:** The rapid convergence to a perfect score (1.0) for the successful methods implies the task has a clear, achievable goal state and that the learning algorithms, when properly guided, are highly sample-efficient.

* **Implication for Research/Engineering:** The results argue for investing in reward modeling (either through engineering or learning) as a foundational step. The high variance in the "Without RM" case also highlights the risk and inefficiency of attempting to learn without this guidance. More broadly, the chart supports treating a reward model, whether supplied or learnt, as a key ingredient for stable and efficient learning on this task.