# Technical Data Extraction: Solve Rate by Model and Context Type

## 1. Image Overview

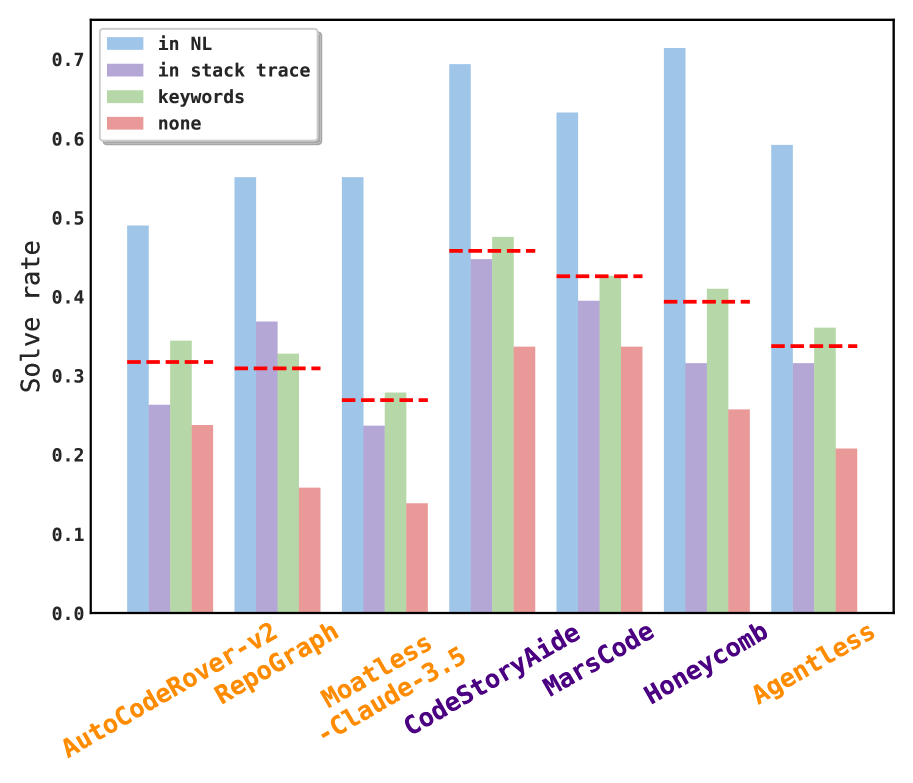

The image is a grouped bar chart comparing the solve rate of seven AI coding models/frameworks under four context conditions. A horizontal dashed red line spans each model's bar group; it appears to mark an average or baseline solve rate for that model.

## 2. Chart Metadata

* **Y-Axis Label:** Solve rate

* **Y-Axis Scale:** 0.0 to 0.7 (with markers every 0.1)

* **X-Axis Labels (Models):**
    * AutoCodeRover-v2 (Orange)
    * RepoGraph (Orange)
    * Moatless-Claude-3.5 (Orange)
    * CodeStoryAide (Purple)
    * MarsCode (Purple)
    * Honeycomb (Purple)
    * Agentless (Orange)

* **Legend (Top Left):**
    * **Light Blue:** `in NL` (Natural Language)
    * **Light Purple:** `in stack trace`
    * **Light Green:** `keywords`
    * **Light Red/Pink:** `none`

* **Additional Indicators:** Red dashed horizontal lines spanning the width of each model's bar group.

## 3. Data Table Extraction

The following table reconstructs the approximate numerical values based on the visual height of the bars relative to the Y-axis markers.

| Model | in NL (Blue) | in stack trace (Purple) | keywords (Green) | none (Red) | Baseline (Red Dash) |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **AutoCodeRover-v2** | ~0.49 | ~0.26 | ~0.34 | ~0.24 | ~0.32 |
| **RepoGraph** | ~0.55 | ~0.37 | ~0.33 | ~0.16 | ~0.31 |
| **Moatless-Claude-3.5** | ~0.55 | ~0.24 | ~0.28 | ~0.14 | ~0.27 |
| **CodeStoryAide** | ~0.69 | ~0.45 | ~0.48 | ~0.34 | ~0.46 |
| **MarsCode** | ~0.63 | ~0.39 | ~0.43 | ~0.34 | ~0.43 |
| **Honeycomb** | ~0.71 | ~0.32 | ~0.41 | ~0.26 | ~0.39 |
| **Agentless** | ~0.59 | ~0.32 | ~0.36 | ~0.21 | ~0.34 |
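For further analysis, the table can be transcribed into a small data structure. The following is a minimal Python sketch: the names `CONTEXTS`, `SOLVE_RATES`, and `nl_to_none_gap` are illustrative, and the numbers are the approximate chart readings from the table, not exact figures.

```python
# Approximate solve rates read off the chart, in the same order as CONTEXTS.
CONTEXTS = ("in NL", "in stack trace", "keywords", "none")

SOLVE_RATES = {
    "AutoCodeRover-v2":    (0.49, 0.26, 0.34, 0.24),
    "RepoGraph":           (0.55, 0.37, 0.33, 0.16),
    "Moatless-Claude-3.5": (0.55, 0.24, 0.28, 0.14),
    "CodeStoryAide":       (0.69, 0.45, 0.48, 0.34),
    "MarsCode":            (0.63, 0.39, 0.43, 0.34),
    "Honeycomb":           (0.71, 0.32, 0.41, 0.26),
    "Agentless":           (0.59, 0.32, 0.36, 0.21),
}


def nl_to_none_gap(model: str) -> float:
    """Difference between the "in NL" and "none" solve rates for one model."""
    rates = dict(zip(CONTEXTS, SOLVE_RATES[model]))
    return round(rates["in NL"] - rates["none"], 2)


# Print the gap for every model in table order.
for model in SOLVE_RATES:
    print(f"{model:<20} in NL - none = {nl_to_none_gap(model):.2f}")
```

With these readings, the gap between the "in NL" and "none" bars ranges from about 0.25 (*AutoCodeRover-v2*) to about 0.45 (*Honeycomb*).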

## 4. Trend Analysis and Observations

### Component Isolation: Performance by Context

1. **"in NL" (Light Blue):** This is consistently the highest-performing category across all models. It peaks with *Honeycomb* at >0.7 and is lowest for *AutoCodeRover-v2* at just under 0.5.

2. **"in stack trace" (Light Purple):** The third-highest category for six of the seven models and the second-highest for *RepoGraph*. It falls well below "in NL" for every model, by roughly 0.18 (*RepoGraph*) to 0.39 (*Honeycomb*).

3. **"keywords" (Light Green):** Higher than "in stack trace" by roughly 0.03 to 0.09 for six of the seven models (*AutoCodeRover-v2*, *Moatless-Claude-3.5*, *CodeStoryAide*, *MarsCode*, *Honeycomb*, *Agentless*), and lower by about 0.04 only for *RepoGraph*.

4. **"none" (Light Red):** The lowest-performing category for every model, which suggests that solve rates drop substantially when none of these context types is available.
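The ordering claims in the list above can be checked mechanically against the extracted values. This is a minimal sketch in Python, assuming the approximate readings from the data table; `READINGS` and `ranked_contexts` are illustrative names, not part of any source material.

```python
# Approximate chart readings per model: (in NL, in stack trace, keywords, none).
READINGS = {
    "AutoCodeRover-v2":    (0.49, 0.26, 0.34, 0.24),
    "RepoGraph":           (0.55, 0.37, 0.33, 0.16),
    "Moatless-Claude-3.5": (0.55, 0.24, 0.28, 0.14),
    "CodeStoryAide":       (0.69, 0.45, 0.48, 0.34),
    "MarsCode":            (0.63, 0.39, 0.43, 0.34),
    "Honeycomb":           (0.71, 0.32, 0.41, 0.26),
    "Agentless":           (0.59, 0.32, 0.36, 0.21),
}

NAMES = ("in NL", "in stack trace", "keywords", "none")


def ranked_contexts(values):
    """Return the context names sorted from highest to lowest solve rate."""
    pairs = sorted(zip(NAMES, values), key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in pairs]


# "in NL" should rank first and "none" last for every model.
nl_always_highest = all(ranked_contexts(v)[0] == "in NL" for v in READINGS.values())
none_always_lowest = all(ranked_contexts(v)[-1] == "none" for v in READINGS.values())

# Models where the "in stack trace" bar is taller than the "keywords" bar.
trace_beats_keywords = [m for m, v in READINGS.items() if v[1] > v[2]]

print(nl_always_highest, none_always_lowest, trace_beats_keywords)
```

Because the inputs are visual estimates, differences of a few hundredths (such as the "keywords" versus "in stack trace" gap for *CodeStoryAide*) are within plausible reading error and should not be over-interpreted.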

### Model Comparison

* **Top Performer:** *Honeycomb* achieves the highest single solve rate (~0.71) when provided with Natural Language context.

* **Most Consistent High Performer:** *CodeStoryAide* has the highest solve rate in the "in stack trace" (~0.45) and "keywords" (~0.48) categories and the highest baseline (~0.46). Its "none" performance (~0.34) ties *MarsCode* for the highest and exceeds that of the other five models.

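* **Baseline (Red Dash):** The red dashed line sits near the two middle bars: between the "in stack trace" and "keywords" bars for five models, level with the "keywords" bar for *MarsCode*, and below both (but above "none") for *RepoGraph*. It likely represents a weighted average or aggregate solve rate for each model, although the legend does not label it.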
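One way to probe what the dashed line represents is to compare it with the unweighted mean of the four bars. The sketch below assumes the approximate readings from the data table; `READINGS` and `summarize` are illustrative names, and the comparison is exploratory rather than a reconstruction of how the chart was actually produced.

```python
# Approximate chart readings: four bars (in NL, in stack trace, keywords, none)
# followed by the height of the red dashed line.
READINGS = {
    "AutoCodeRover-v2":    ((0.49, 0.26, 0.34, 0.24), 0.32),
    "RepoGraph":           ((0.55, 0.37, 0.33, 0.16), 0.31),
    "Moatless-Claude-3.5": ((0.55, 0.24, 0.28, 0.14), 0.27),
    "CodeStoryAide":       ((0.69, 0.45, 0.48, 0.34), 0.46),
    "MarsCode":            ((0.63, 0.39, 0.43, 0.34), 0.43),
    "Honeycomb":           ((0.71, 0.32, 0.41, 0.26), 0.39),
    "Agentless":           ((0.59, 0.32, 0.36, 0.21), 0.34),
}


def summarize(bars, dashed):
    """Return (unweighted mean of the bars, number of bars strictly below the dashed line)."""
    mean = sum(bars) / len(bars)
    below = sum(1 for bar in bars if bar < dashed)
    return round(mean, 4), below


for model, (bars, dashed) in READINGS.items():
    mean, below = summarize(bars, dashed)
    print(f"{model:<20} dashed={dashed:.2f} mean={mean:.4f} bars below dashed={below}")
```

With these readings, the dashed line lies below the unweighted mean for all seven models. That is consistent with, but does not confirm, a weighted average in which the lower-scoring context conditions cover more instances than "in NL".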