## Diagram: High-Level Architecture of ClarifAI

### Overview

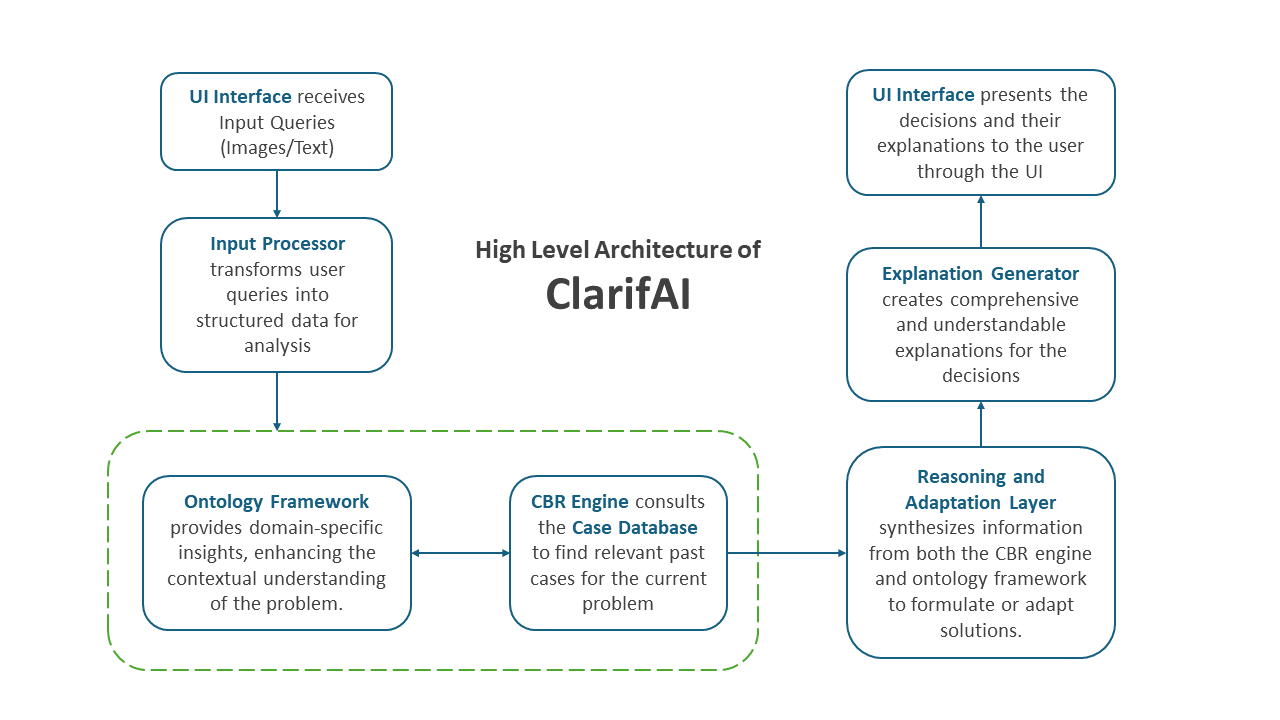

This image is a flowchart of the high-level system architecture of an AI system named "ClarifAI." It shows the components involved in processing a user query, from input to explained output, and how they connect. Components are drawn as rectangles with rounded corners, and directional arrows between them indicate data or process flow. The title appears prominently in the center.

### Components

The diagram is organized into a flow that moves generally from the top-left, down, across to the right, and then up to the top-right. The components are:

1. **Central Title:** Located in the center of the diagram.
    * Text: "High Level Architecture of ClarifAI"

2. **Input Path (Left Side, Top to Bottom):**
    * **Component 1 (Top-Left):** "UI Interface"
        * Description Text: "receives Input Queries (Images/Text)"
    * **Component 2 (Below Component 1):** "Input Processor"
        * Description Text: "transforms user queries into structured data for analysis"
    * **Component 3 (Bottom-Left, within a dashed green box):** "Ontology Framework"
        * Description Text: "provides domain-specific insights, enhancing the contextual understanding of the problem."

3. **Core Processing (Bottom Center, within the same dashed green box):**
    * **Component 4:** "CBR Engine"
        * Description Text: "consults the Case Database to find relevant past cases for the current problem"

4. **Reasoning & Output Path (Right Side, Bottom to Top):**
    * **Component 5 (Bottom-Right):** "Reasoning and Adaptation Layer"
        * Description Text: "synthesizes information from both the CBR engine and ontology framework to formulate or adapt solutions."
    * **Component 6 (Above Component 5):** "Explanation Generator"
        * Description Text: "creates comprehensive and understandable explanations for the decisions"
    * **Component 7 (Top-Right):** "UI Interface"
        * Description Text: "presents the decisions and their explanations to the user through the UI"

5. **Grouping Element:**
    * A dashed green rectangle encloses the "Ontology Framework" and "CBR Engine" components, suggesting they form a cohesive subsystem or knowledge base layer.
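To make the Input Processor's role concrete, here is a minimal Python sketch of turning a free-text query into structured data. The diagram does not say how ClarifAI does this: the `VOCABULARY` mapping, the `StructuredQuery` fields, and the support-ticket concept names are all hypothetical, and image queries are out of scope for this sketch.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword-to-concept mapping. A real Input Processor would more
# likely draw its concept names from the Ontology Framework.
VOCABULARY: dict[str, str] = {
    "charged": "Category:Billing",
    "refund": "Category:Billing",
    "password": "Category:Login",
    "urgent": "Severity:High",
}


@dataclass
class StructuredQuery:
    """Structured form of a user query (field names are illustrative)."""
    modality: str                                   # "text" here; images are not handled
    concepts: set[str] = field(default_factory=set)
    unknown_tokens: list[str] = field(default_factory=list)


def process_text_query(text: str, vocabulary: dict[str, str] = VOCABULARY) -> StructuredQuery:
    """Lowercase and tokenize the query, then map known words to concepts."""
    query = StructuredQuery(modality="text")
    for token in re.findall(r"[a-z]+", text.lower()):
        if token in vocabulary:
            query.concepts.add(vocabulary[token])
        else:
            query.unknown_tokens.append(token)
    return query
```

For example, `process_text_query("Urgent: I was charged twice")` yields the concepts `Severity:High` and `Category:Billing`, leaving `i`, `was`, and `twice` as unrecognized tokens.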

### Detailed Analysis

The process flow is explicitly defined by directional arrows:

1. **Input Stage:** The flow begins at the top-left "UI Interface," which accepts user queries in the form of images or text.

2. **Processing Initiation:** An arrow points downward from the UI Interface to the "Input Processor," which converts the raw query into a structured format suitable for analysis.

3. **Knowledge Consultation:** From the Input Processor, an arrow points down into the dashed green subsystem. Here, the "CBR Engine" (Case-Based Reasoning Engine) is the central actor. A bidirectional arrow connects it to the "Ontology Framework," indicating that information flows in both directions between them. According to its description text, the CBR Engine searches a "Case Database" for past cases relevant to the current problem; the database itself is not listed as a separate component in the diagram.

4. **Solution Formulation:** An arrow leads from the CBR Engine (and by extension, the knowledge subsystem) to the "Reasoning and Adaptation Layer" on the right. This layer synthesizes insights from both the retrieved past cases (CBR) and the domain-specific context (Ontology) to create or adapt a solution.

5. **Explanation Generation:** The formulated solution is passed upward to the "Explanation Generator," which translates the system's decision-making process into a human-understandable explanation.

6. **Output Stage:** Finally, an arrow points upward to the "UI Interface" at the top-right, which presents both the final decision and its generated explanation back to the user.
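The six steps above form a linear chain, which the following Python sketch wires together. Each stage is a plain function that takes the previous stage's output; the stage names follow the diagram, but the toy stage bodies and the dictionary keys they pass along are hypothetical placeholders. In this linear sketch, the exchange with the Ontology Framework is assumed to happen inside the CBR Engine stage, and the caller plays the role of the two UI Interface boxes.

```python
from typing import Any, Callable

Stage = Callable[[Any], Any]


def run_pipeline(raw_query: Any, stages: list[tuple[str, Stage]]) -> tuple[Any, list[str]]:
    """Feed the query through each named stage in order and record the path taken."""
    data = raw_query
    trace: list[str] = []
    for name, stage in stages:
        data = stage(data)
        trace.append(name)
    return data, trace


# Toy stand-ins for the diagram's boxes; every lambda is a placeholder.
TOY_STAGES: list[tuple[str, Stage]] = [
    ("Input Processor", lambda q: {"query": q.strip().lower()}),
    ("CBR Engine", lambda d: {**d, "retrieved_case": "case-1"}),
    ("Reasoning and Adaptation Layer",
     lambda d: {**d, "solution": "adapted " + d["retrieved_case"]}),
    ("Explanation Generator",
     lambda d: {**d, "explanation": f"Based on {d['retrieved_case']}"}),
]
```

Keeping the stages as interchangeable functions mirrors the box-and-arrow layout: any single component can be replaced without touching the others.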

### Key Observations

* **Cyclical User Interaction:** The architecture starts and ends at a box labeled "UI Interface." Because both boxes carry the same label, they most likely represent a single user-facing component that handles both input and output, so each interaction begins and ends with the user.

* **Central Knowledge Core:** The dashed green box marks the "Ontology Framework" and "CBR Engine" as the system's core knowledge and reasoning backbone. Their bidirectional connection suggests that the two components inform each other rather than operating as independent stages.

* **Explicit Explanation Step:** The architecture dedicates a specific component, the "Explanation Generator," to the task of making the AI's decisions transparent, which is a key feature for user trust and debugging.

* **Linear Flow with a Core Loop:** The overall flow is linear (Input -> Process -> Output), except for the two-way exchange between the Ontology Framework and the CBR Engine inside the knowledge subsystem, which presumably lets retrieved cases be interpreted in their domain context.
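One plausible way to realize the exchange between the CBR Engine and the Ontology Framework is for the CBR Engine to ask the ontology how closely two concepts are related. The Python sketch below does this with the Wu-Palmer measure over an is-a hierarchy, a standard ontology-based similarity that the diagram itself does not name; the `IS_A` hierarchy and its concept names are hypothetical.

```python
# Hypothetical is-a hierarchy (child -> parent); "Issue" is the root.
IS_A: dict[str, str] = {
    "DuplicateCharge": "BillingIssue",
    "FailedPayment": "BillingIssue",
    "PasswordReset": "AccountIssue",
    "BillingIssue": "Issue",
    "AccountIssue": "Issue",
}


def ancestors(concept: str, is_a: dict[str, str]) -> list[str]:
    """Return the path [concept, parent, ..., root]."""
    path = [concept]
    while path[-1] in is_a:
        path.append(is_a[path[-1]])
    return path


def depth(concept: str, is_a: dict[str, str]) -> int:
    """Number of nodes from the root down to the concept (the root has depth 1)."""
    return len(ancestors(concept, is_a))


def wu_palmer(a: str, b: str, is_a: dict[str, str]) -> float:
    """Wu-Palmer similarity: 2 * depth(LCS) / (depth(a) + depth(b))."""
    b_ancestors = set(ancestors(b, is_a))
    # The least common subsumer (LCS) is the deepest ancestor of a that b shares.
    lcs = next((c for c in ancestors(a, is_a) if c in b_ancestors), None)
    if lcs is None:
        return 0.0  # concepts in unrelated hierarchies
    return 2 * depth(lcs, is_a) / (depth(a, is_a) + depth(b, is_a))
```

With this hierarchy, `DuplicateCharge` and `FailedPayment` share the parent `BillingIssue` and score 2/3, whereas `DuplicateCharge` and `PasswordReset` meet only at the root and score 1/3. A CBR Engine could use such scores to treat near-miss concepts as partial matches instead of outright mismatches.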

### Interpretation

This diagram outlines a hybrid AI architecture designed for explainable decision-making. It combines **Case-Based Reasoning (CBR)**—which relies on analogical reasoning from past experiences—with an **Ontology Framework** that provides structured, domain-specific knowledge. This combination allows the system to ground its solutions in both historical precedent and formal domain rules.
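The Case-Based Reasoning half of this combination can be illustrated by its retrieval step: score every stored case against the new problem and return the closest ones. The Python sketch below uses weighted attribute matching, one common similarity choice in CBR but not necessarily what ClarifAI's CBR Engine does; the `Case` fields, the weights, and the example cases are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    features: dict[str, str]  # attribute -> value describing the past problem
    solution: str             # the solution recorded for that problem


def similarity(query: dict[str, str], case: Case, weights: dict[str, float]) -> float:
    """Weighted share of query attributes whose value the case matches (0 to 1)."""
    total = sum(weights.get(attr, 1.0) for attr in query)
    if total == 0:
        return 0.0
    matched = sum(
        weights.get(attr, 1.0)
        for attr, value in query.items()
        if case.features.get(attr) == value
    )
    return matched / total


def retrieve(query: dict[str, str], case_base: list[Case],
             weights: dict[str, float], k: int = 1) -> list[tuple[float, Case]]:
    """Return the k most similar cases, best first (ties keep case-base order)."""
    scored = [(similarity(query, case, weights), case) for case in case_base]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical case database and attribute weights.
CASE_BASE = [
    Case("case-1", {"category": "billing", "severity": "low", "channel": "email"},
         "refund the duplicate charge"),
    Case("case-2", {"category": "billing", "severity": "high", "channel": "phone"},
         "refund and escalate to a senior agent"),
    Case("case-3", {"category": "login", "severity": "low", "channel": "email"},
         "send a password-reset link"),
]
WEIGHTS = {"category": 2.0, "severity": 1.5}  # unlisted attributes weigh 1.0
```

For the query `{"category": "billing", "severity": "high", "channel": "email"}`, `retrieve(query, CASE_BASE, WEIGHTS, k=2)` ranks case-2 first (3.5 of 4.5 weight matched) ahead of case-1 (3 of 4.5), because severity is weighted more heavily than channel.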

The dedicated "Reasoning and Adaptation Layer" suggests that the system does not merely retrieve past cases but modifies their solutions to fit the new problem's context.

The inclusion of the "Explanation Generator" is arguably the most significant architectural choice. It indicates that **explainability is a first-class requirement**, not an afterthought: the system is designed to answer not only "what" the solution is but also "why" it was chosen, presumably by tracing the decision back through the retrieved cases and the ontology-informed reasoning. This matters in fields such as healthcare, finance, and law, where decisions must be justifiable. Overall, the diagram describes a pipeline from unstructured user input to a structured, reasoned, and explained output.
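The adaptation and explanation steps described above can be sketched together in Python: reuse the retrieved case's solution, add a step for each attribute where the new problem differs and an adaptation rule applies, then report the precedent, the shared attributes, and the adaptations as the explanation. The rule table, the `adapt` and `explain` helpers, and the output wording are hypothetical; rule-based substitution is only one of several adaptation strategies used in CBR systems.

```python
from dataclasses import dataclass


@dataclass
class Case:
    case_id: str
    features: dict[str, str]
    solution: str


# Hypothetical adaptation rules: (attribute, new value) -> extra step to add.
RULES: dict[tuple[str, str], str] = {
    ("severity", "high"): "escalate to a senior agent",
    ("channel", "phone"): "confirm the fix by phone",
}


def adapt(query: dict[str, str], case: Case,
          rules: dict[tuple[str, str], str]) -> tuple[str, list[str]]:
    """Reuse the case's solution, adding a step for each differing attribute that has a rule."""
    steps = [
        rules[(attr, value)]
        for attr, value in query.items()
        if case.features.get(attr) != value and (attr, value) in rules
    ]
    solution = "; ".join([case.solution, *steps])
    return solution, steps


def explain(query: dict[str, str], case: Case, score: float, steps: list[str]) -> str:
    """Build a plain-text explanation citing the precedent case and any adaptations."""
    shared = sorted(a for a, v in query.items() if case.features.get(a) == v)
    lines = [
        f"Most similar past case: {case.case_id} (similarity {score:.2f}).",
        "Shared attributes: " + (", ".join(shared) if shared else "none") + ".",
    ]
    if steps:
        lines.append("Adaptations: " + "; ".join(steps) + ".")
    else:
        lines.append("The past solution was reused unchanged.")
    return "\n".join(lines)
```

Because the explanation is assembled from the same data that drove the decision (the precedent case, the matched attributes, and the applied rules), it stays faithful to what the sketch actually did, which is the kind of traceability the Explanation Generator appears intended to provide.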