TECHNICAL ASSET FINGERPRINT

30329725c7208494c1380078

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Chart: Layer vs. ΔP for Llama-3-8B and Llama-3-70B

### Overview

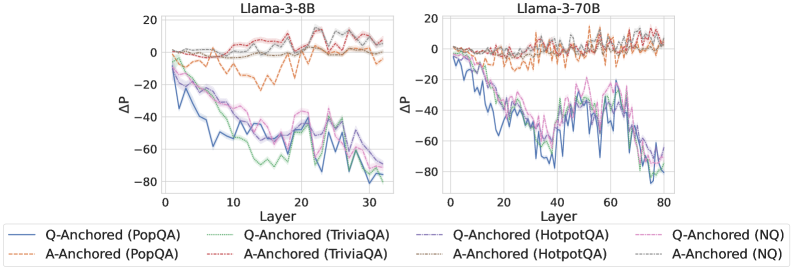

The image presents two line charts comparing the performance of Llama-3-8B and Llama-3-70B models across different layers, measured by ΔP (change in probability). Each chart plots the ΔP values for question-anchored (Q-Anchored) and answer-anchored (A-Anchored) methods across various question-answering datasets: PopQA, TriviaQA, HotpotQA, and NQ. The x-axis represents the layer number, and the y-axis represents the ΔP value.
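The overview glosses ΔP only as a change in probability and does not say how it is computed. As a minimal sketch, assuming ΔP is the per-layer difference, in percentage points, between a measured probability and a baseline probability, the values could be tabulated as follows. The function name `delta_p` and the sample numbers are hypothetical and are not taken from the figure.

```python
from typing import List, Sequence


def delta_p(baseline: float, per_layer: Sequence[float]) -> List[float]:
    """Return the change in probability per layer, in percentage points.

    Assumption: all inputs are probabilities in [0, 1]. Each result is
    (per-layer probability - baseline) * 100, so a negative value means
    the probability dropped relative to the baseline.
    """
    if not 0.0 <= baseline <= 1.0:
        raise ValueError("baseline must be a probability in [0, 1]")
    changes = []
    for p in per_layer:
        if not 0.0 <= p <= 1.0:
            raise ValueError("per-layer values must be probabilities in [0, 1]")
        # Round to suppress floating-point noise in the subtraction.
        changes.append(round((p - baseline) * 100.0, 6))
    return changes


# Hypothetical numbers for illustration only.
example = delta_p(0.80, [0.80, 0.50, 0.20])
print(example)
```

Under this assumed definition, a curve that stays near 0 means the probability is essentially unchanged at that layer, while a curve near -60 means it fell by about 60 percentage points.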

### Components/Axes

* **Titles:**

* Left Chart: "Llama-3-8B"

* Right Chart: "Llama-3-70B"

* **X-axis:**

* Label: "Layer"

* Left Chart: Scale from 0 to 30, with tick marks at intervals of 10.

* Right Chart: Scale from 0 to 80, with tick marks at intervals of 20.

* **Y-axis:**

* Label: "ΔP"

* Scale: From -80 to 20, with tick marks at intervals of 20.

* **Legend:** Located at the bottom of the image, it identifies the line colors and styles for each method and dataset.

* **Q-Anchored (PopQA):** Solid blue line

* **A-Anchored (PopQA):** Dashed brown line

* **Q-Anchored (TriviaQA):** Dotted green line

* **A-Anchored (TriviaQA):** Dotted gray line

* **Q-Anchored (HotpotQA):** Dashed-dotted purple line

* **A-Anchored (HotpotQA):** Dotted-dashed pink line

* **Q-Anchored (NQ):** Dashed-dotted black line

* **A-Anchored (NQ):** Dotted-dashed orange line
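The axis description above can be captured as a small specification. The following is a minimal sketch, using hypothetical names (`AxisSpec`, `ticks`), that derives the tick positions from the stated ranges and intervals.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AxisSpec:
    """Axis label plus an inclusive range and a tick interval."""
    label: str
    lo: int
    hi: int
    step: int

    def ticks(self) -> List[int]:
        # range() excludes its stop value, so add 1 to include `hi`.
        return list(range(self.lo, self.hi + 1, self.step))


# Values as stated in the axis description above.
X_8B = AxisSpec("Layer", 0, 30, 10)
X_70B = AxisSpec("Layer", 0, 80, 20)
Y_SHARED = AxisSpec("ΔP", -80, 20, 20)

for axis in (X_8B, X_70B, Y_SHARED):
    print(axis.label, axis.ticks())
```

Keeping the two x-axes as separate specifications makes the depth difference between the panels explicit, while the y-axis specification is shared.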

### Detailed Analysis

**Left Chart (Llama-3-8B):**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0 and decreases sharply to around -50 by layer 10, then fluctuates between -50 and -80 until layer 30.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 0 and fluctuates between -10 and 10 across all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0 and decreases to around -60 by layer 30.

* **A-Anchored (TriviaQA):** (Dotted Gray) Starts around 0 and remains relatively stable, fluctuating between 0 and 10 across all layers.

* **Q-Anchored (HotpotQA):** (Dashed-dotted Purple) Starts at approximately 0 and decreases to around -50 by layer 30.

* **A-Anchored (HotpotQA):** (Dotted-dashed Pink) Starts at approximately 0 and decreases to around -50 by layer 30.

* **Q-Anchored (NQ):** (Dashed-dotted Black) Starts around 0 and remains relatively stable, fluctuating between 0 and 10 across all layers.

* **A-Anchored (NQ):** (Dotted-dashed Orange) Starts around 0 and remains relatively stable, fluctuating between 0 and 10 across all layers.

**Right Chart (Llama-3-70B):**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0 and decreases sharply to around -60 by layer 20, then fluctuates between -40 and -70 until layer 80.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 0 and fluctuates between 0 and 15 across all layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0 and decreases to around -60 by layer 80.

* **A-Anchored (TriviaQA):** (Dotted Gray) Starts around 0 and remains relatively stable, fluctuating between 0 and 10 across all layers.

* **Q-Anchored (HotpotQA):** (Dashed-dotted Purple) Starts at approximately 0 and decreases to around -50 by layer 80.

* **A-Anchored (HotpotQA):** (Dotted-dashed Pink) Starts at approximately 0 and decreases to around -50 by layer 80.

* **Q-Anchored (NQ):** (Dashed-dotted Black) Starts around 0 and remains relatively stable, fluctuating between 0 and 10 across all layers.

* **A-Anchored (NQ):** (Dotted-dashed Orange) Starts around 0 and fluctuates between 0 and 15 across all layers.

### Key Observations

* For both models, the Q-Anchored methods for PopQA, TriviaQA, and HotpotQA show a large decrease in ΔP as the layer number increases (ending roughly between -40 and -80), indicating a performance decline in these tasks at deeper layers.

* The A-Anchored methods for PopQA, TriviaQA, HotpotQA, and NQ, as well as the Q-Anchored method for NQ, remain relatively stable across all layers for both models, suggesting more consistent performance.

* The Llama-3-70B model has a larger x-axis range (0-80 layers) compared to Llama-3-8B (0-30 layers), indicating a deeper architecture.

### Interpretation

The data suggests that anchoring the question (Q-Anchored) for PopQA, TriviaQA, and HotpotQA tasks leads to a degradation in performance as the model processes deeper layers, while anchoring the answer (A-Anchored) maintains a more stable performance. This could indicate that the model's ability to effectively utilize information from the question deteriorates with increasing depth for these specific datasets. The consistent performance of A-Anchored methods and Q-Anchored NQ suggests that the model handles answer-related information and certain types of questions more effectively across all layers. The difference in layer depth between Llama-3-8B and Llama-3-70B may contribute to the observed performance variations.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: ΔP vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the change in performance (ΔP) across layers for two Llama models: Llama-3-8B and Llama-3-70B. The x-axis represents the layer number, and the y-axis represents ΔP. Each chart displays multiple lines, each representing a different question-answering dataset and anchoring method.

### Components/Axes

* **X-axis:** Layer (ranging from 0 to 30 for Llama-3-8B and 0 to 80 for Llama-3-70B).

* **Y-axis:** ΔP (ranging from approximately -80 to 20).

* **Models:** Llama-3-8B (left chart), Llama-3-70B (right chart).

* **Datasets/Anchoring Methods (Legend):**

* Q-Anchored (PopQA) - Blue solid line

* A-Anchored (PopQA) - Orange dashed line

* Q-Anchored (TriviaQA) - Green solid line

* A-Anchored (TriviaQA) - Purple dashed line

* Q-Anchored (HotpotQA) - Brown dashed-dotted line

* A-Anchored (HotpotQA) - Pink dashed line

* Q-Anchored (NQ) - Light Blue solid line

* A-Anchored (NQ) - Teal solid line

### Detailed Analysis

**Llama-3-8B (Left Chart):**

* **Q-Anchored (PopQA):** Starts at approximately -20, sharply decreases to around -70 by layer 5, then fluctuates between -50 and -70 until layer 30.

* **A-Anchored (PopQA):** Starts at approximately 10, gradually decreases to around -10 by layer 30, with some fluctuations.

* **Q-Anchored (TriviaQA):** Starts at approximately -10, decreases to around -60 by layer 5, then fluctuates between -40 and -60 until layer 30.

* **A-Anchored (TriviaQA):** Starts at approximately 5, decreases to around -20 by layer 30, with some fluctuations.

* **Q-Anchored (HotpotQA):** Starts at approximately 0, decreases to around -50 by layer 5, then fluctuates between -30 and -50 until layer 30.

* **A-Anchored (HotpotQA):** Starts at approximately 10, decreases to around -10 by layer 30, with some fluctuations.

* **Q-Anchored (NQ):** Starts at approximately -15, decreases to around -65 by layer 5, then fluctuates between -50 and -65 until layer 30.

* **A-Anchored (NQ):** Starts at approximately 5, decreases to around -20 by layer 30, with some fluctuations.

**Llama-3-70B (Right Chart):**

* **Q-Anchored (PopQA):** Starts at approximately -20, decreases to around -60 by layer 20, then fluctuates between -40 and -60 until layer 80.

* **A-Anchored (PopQA):** Starts at approximately 10, gradually decreases to around -10 by layer 80, with some fluctuations.

* **Q-Anchored (TriviaQA):** Starts at approximately -10, decreases to around -50 by layer 20, then fluctuates between -30 and -50 until layer 80.

* **A-Anchored (TriviaQA):** Starts at approximately 5, decreases to around -15 by layer 80, with some fluctuations.

* **Q-Anchored (HotpotQA):** Starts at approximately 0, decreases to around -40 by layer 20, then fluctuates between -20 and -40 until layer 80.

* **A-Anchored (HotpotQA):** Starts at approximately 10, decreases to around -10 by layer 80, with some fluctuations.

* **Q-Anchored (NQ):** Starts at approximately -15, decreases to around -55 by layer 20, then fluctuates between -40 and -55 until layer 80.

* **A-Anchored (NQ):** Starts at approximately 5, decreases to around -15 by layer 80, with some fluctuations.

### Key Observations

* In both models, the Q-Anchored lines generally exhibit a more significant decrease in ΔP compared to the A-Anchored lines.

* The initial performance (ΔP at layer 0) is generally higher for A-Anchored methods.

* The rate of performance decrease appears to slow down in later layers for both models.

* The Llama-3-70B model shows a more gradual decline in ΔP across layers compared to the Llama-3-8B model.

* The lines for the different datasets tend to cluster together, suggesting a common trend in performance change.

### Interpretation

The charts illustrate how performance changes across layers for different question-answering datasets and anchoring methods in two Llama models. The negative ΔP values indicate a performance decrease as the model processes deeper layers. The steeper decline in Q-Anchored methods suggests that question-based anchoring might be more sensitive to layer depth or require more careful tuning. The larger model (Llama-3-70B) exhibits a more stable performance profile, indicating that increased model size can mitigate the performance degradation observed in the smaller model (Llama-3-8B). The consistent trend across datasets suggests that the observed performance changes are not specific to any particular question-answering task. The difference between A-Anchored and Q-Anchored methods could be due to the way information is incorporated into the model during training or inference.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Llama-3 Model Layer-wise ΔP Performance

### Overview

The image displays two side-by-side line charts comparing the performance change (ΔP) across the layers of two different-sized language models: Llama-3-8B (left) and Llama-3-70B (right). Each chart plots the ΔP metric for eight different experimental conditions, which are combinations of an anchoring method (Q-Anchored or A-Anchored) and a dataset (PopQA, TriviaQA, HotpotQA, NQ). The charts illustrate how performance evolves as information propagates through the model's layers.

### Components/Axes

* **Chart Titles:**

* Left Chart: `Llama-3-8B`

* Right Chart: `Llama-3-70B`

* **Y-Axis (Both Charts):**

* Label: `ΔP`

* Scale: Linear, ranging from -80 to 20, with major ticks at intervals of 20 (-80, -60, -40, -20, 0, 20).

* **X-Axis (Both Charts):**

* Label: `Layer`

* Scale (Llama-3-8B): Linear, from 0 to 30, with major ticks at 0, 10, 20, 30.

* Scale (Llama-3-70B): Linear, from 0 to 80, with major ticks at 0, 20, 40, 60, 80.

* **Legend (Positioned below both charts, centered):**

* Contains 8 entries, each with a unique line style and color.

* **Q-Anchored Series (Solid Lines):**

* `Q-Anchored (PopQA)`: Solid blue line.

* `Q-Anchored (TriviaQA)`: Solid green line.

* `Q-Anchored (HotpotQA)`: Solid purple line.

* `Q-Anchored (NQ)`: Solid pink line.

* **A-Anchored Series (Dashed Lines):**

* `A-Anchored (PopQA)`: Dashed orange line.

* `A-Anchored (TriviaQA)`: Dashed red line.

* `A-Anchored (HotpotQA)`: Dashed brown line.

* `A-Anchored (NQ)`: Dashed gray line.

### Detailed Analysis

**Llama-3-8B Chart (Left):**

* **Q-Anchored Lines (Solid):** All four solid lines show a strong, consistent downward trend. They start near ΔP = 0 at Layer 0 and decline steeply, reaching values between approximately -60 and -80 by Layer 30. The lines are tightly clustered, indicating similar degradation across all four datasets for the Q-Anchored method.

* **A-Anchored Lines (Dashed):** All four dashed lines remain relatively stable and close to ΔP = 0 across all layers. They exhibit minor fluctuations but no significant upward or downward trend. The `A-Anchored (PopQA)` (dashed orange) line shows slightly more negative values (dipping to around -20) in the middle layers (10-20) compared to the others, which stay closer to zero.

**Llama-3-70B Chart (Right):**

* **Q-Anchored Lines (Solid):** Similar to the 8B model, the solid lines trend downward. However, the decline is more volatile, with pronounced peaks and valleys, especially between layers 40 and 60. The final values at Layer 80 are again in the -60 to -80 range. The volatility suggests less stable performance degradation in the larger model's mid-to-late layers.

* **A-Anchored Lines (Dashed):** These lines also remain stable around ΔP = 0, similar to the 8B model. They show slightly more high-frequency noise than in the 8B chart but maintain the same overall flat trend, indicating robustness across layers.

### Key Observations

1. **Clear Dichotomy by Anchoring Method:** The most striking pattern is the complete separation between Q-Anchored (solid, declining) and A-Anchored (dashed, stable) lines. This effect is consistent across both model sizes and all four datasets.

2. **Layer-Dependent Degradation for Q-Anchored:** Performance (ΔP) for Q-Anchored methods deteriorates significantly with increasing layer depth, although the decline is not strictly monotonic, particularly in Llama-3-70B.

3. **Stability of A-Anchored:** A-Anchored methods show no layer-dependent degradation, maintaining performance near the baseline (ΔP ≈ 0) throughout the network.

4. **Model Size Effect on Volatility:** The larger Llama-3-70B model exhibits greater volatility in the declining Q-Anchored lines compared to the smoother decline in Llama-3-8B, particularly in the middle layers.

5. **Dataset Similarity:** Within each anchoring group (Q or A), the lines for different datasets (PopQA, TriviaQA, HotpotQA, NQ) follow very similar trajectories, suggesting the observed effect is primarily driven by the anchoring method, not the specific knowledge dataset.
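The declining-versus-stable distinction drawn in these observations can be made explicit with a simple rule. The following is a minimal sketch, not the method behind the figure: the function `classify_trend`, the 20-point threshold, and the sample series are all hypothetical choices for illustration.

```python
from typing import Sequence


def classify_trend(values: Sequence[float], threshold: float = 20.0) -> str:
    """Label a ΔP-by-layer series as 'declining', 'rising', or 'stable'.

    Heuristic (an assumption, not from the source): compare the mean of
    the second half of the layers with the value at the first layer.
    """
    if len(values) < 2:
        raise ValueError("need at least two layers")
    tail = values[len(values) // 2:]
    shift = sum(tail) / len(tail) - values[0]
    if shift <= -threshold:
        return "declining"
    if shift >= threshold:
        return "rising"
    return "stable"


# Hypothetical series shaped like the two groups described above.
q_like = [0, -20, -45, -60, -65, -70]
a_like = [0, 3, -4, 2, -1, 1]
print(classify_trend(q_like), classify_trend(a_like))
```

Averaging over the second half rather than reading only the final layer keeps the label from flipping on a single noisy point, which matters for the more volatile curves described for Llama-3-70B.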

### Interpretation

This data strongly suggests that the **choice of anchoring method (Q vs. A) is a critical factor determining how a model's internal representations affect performance on knowledge-intensive tasks across its layers.**

* **Q-Anchored (Question-Anchored) methods** appear to suffer from a form of "representational drift" or interference as information passes through deeper layers. The initial question representation becomes less effective for retrieval or reasoning as it is transformed, leading to a steady drop in ΔP. The increased volatility in the 70B model might indicate that larger models have more complex internal transformations that can amplify this instability.

* **A-Anchored (Answer-Anchored) methods** demonstrate remarkable stability. This implies that anchoring the process to the answer representation provides a consistent signal that is preserved or even reinforced through the network's layers, making the model's performance robust to depth.

**In practical terms,** for tasks requiring deep, multi-layer processing of knowledge (like complex reasoning over retrieved facts), using an A-Anchored approach appears far more reliable. The Q-Anchored approach, while potentially effective in early layers, becomes increasingly detrimental in deeper layers, which could harm performance on tasks that require deep integration of information. The consistency across four different QA datasets (PopQA, TriviaQA, HotpotQA, NQ) indicates this is a fundamental architectural or methodological insight, not a dataset-specific artifact.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Llama-3-8B and Llama-3-70B Model Performance Comparison

### Overview

The image contains two side-by-side line charts comparing Q-Anchored and A-Anchored methods across four datasets (PopQA, TriviaQA, HotpotQA, NQ) for two Llama-3 models (Llama-3-8B and Llama-3-70B). The y-axis represents ΔP (change in performance), and the x-axis represents the model layer. Each chart shows distinct trends for the Q-Anchored (solid lines) and A-Anchored (dashed lines) configurations.

---

### Components/Axes

- **X-Axis (Layer)**:

- Llama-3-8B: 0 to 30 (integer increments).

- Llama-3-70B: 0 to 80 (integer increments).

- **Y-Axis (ΔP)**:

- Range: -80 to 20 (integer increments).

- **Legends**:

- Positioned at the bottom of each chart.

- Colors and styles correspond to:

- **Q-Anchored**: Solid lines (blue, green, purple, pink).

- **A-Anchored**: Dashed lines (orange, gray, brown, black).

- Datasets: PopQA, TriviaQA, HotpotQA, NQ.

---

### Detailed Analysis

#### Llama-3-8B Chart

- **Q-Anchored (PopQA)**: Blue solid line. Starts at 0, dips sharply to -60 by layer 10, then fluctuates between -40 and -20.

- **Q-Anchored (TriviaQA)**: Green dashed line. Starts at 0, drops to -50 by layer 15, then stabilizes near -30.

- **Q-Anchored (HotpotQA)**: Purple solid line. Starts at 0, declines to -70 by layer 20, then oscillates between -50 and -30.

- **Q-Anchored (NQ)**: Pink dashed line. Starts at 0, dips to -40 by layer 10, then stabilizes near -20.

- **A-Anchored (PopQA)**: Orange solid line. Remains near 0 with minor fluctuations.

- **A-Anchored (TriviaQA)**: Gray dashed line. Starts at 0, dips to -10 by layer 10, then stabilizes.

- **A-Anchored (HotpotQA)**: Brown solid line. Starts at 0, fluctuates between -5 and 5.

- **A-Anchored (NQ)**: Black dashed line. Starts at 0, dips to -5 by layer 10, then stabilizes.

#### Llama-3-70B Chart

- **Q-Anchored (PopQA)**: Blue solid line. Starts at 0, drops to -80 by layer 40, then fluctuates between -60 and -40.

- **Q-Anchored (TriviaQA)**: Green dashed line. Starts at 0, declines to -70 by layer 50, then stabilizes near -50.

- **Q-Anchored (HotpotQA)**: Purple solid line. Starts at 0, drops to -90 by layer 60, then oscillates between -70 and -50.

- **Q-Anchored (NQ)**: Pink dashed line. Starts at 0, dips to -60 by layer 30, then stabilizes near -40.

- **A-Anchored (PopQA)**: Orange solid line. Remains near 0 with minor fluctuations.

- **A-Anchored (TriviaQA)**: Gray dashed line. Starts at 0, dips to -15 by layer 20, then stabilizes.

- **A-Anchored (HotpotQA)**: Brown solid line. Starts at 0, fluctuates between -10 and 10.

- **A-Anchored (NQ)**: Black dashed line. Starts at 0, dips to -10 by layer 10, then stabilizes.

---

### Key Observations

1. **Q-Anchored vs. A-Anchored**:

- Q-Anchored models show larger ΔP deviations (negative trends) across all datasets, especially in deeper layers.

- A-Anchored models exhibit smaller, more stable ΔP values, often remaining near 0.

2. **Model Size Impact**:

- Llama-3-70B shows more pronounced ΔP declines for Q-Anchored models compared to Llama-3-8B, suggesting scalability challenges.

3. **Dataset Sensitivity**:

- HotpotQA (Q-Anchored) demonstrates the steepest ΔP decline in both models, indicating higher sensitivity to anchoring methods.

4. **Layer Depth Correlation**:

- ΔP trends generally worsen as layer depth increases, particularly for Q-Anchored configurations.

---

### Interpretation

The data suggests that **Q-Anchored models** are more sensitive to layer depth and dataset complexity, leading to larger performance deviations (ΔP). This could imply that Q-Anchored configurations struggle with maintaining consistency in deeper layers or with complex datasets like HotpotQA. In contrast, **A-Anchored models** maintain stability, indicating robustness to layer depth and dataset variations. The Llama-3-70B model’s amplified ΔP trends for Q-Anchored configurations highlight potential scalability issues, suggesting that anchoring strategies may need adjustment for larger models. The divergence between Q and A anchoring methods underscores the importance of anchoring choice in model performance optimization.
