## Line Chart: Accuracy of Game 24 vs. Iteration

### Overview

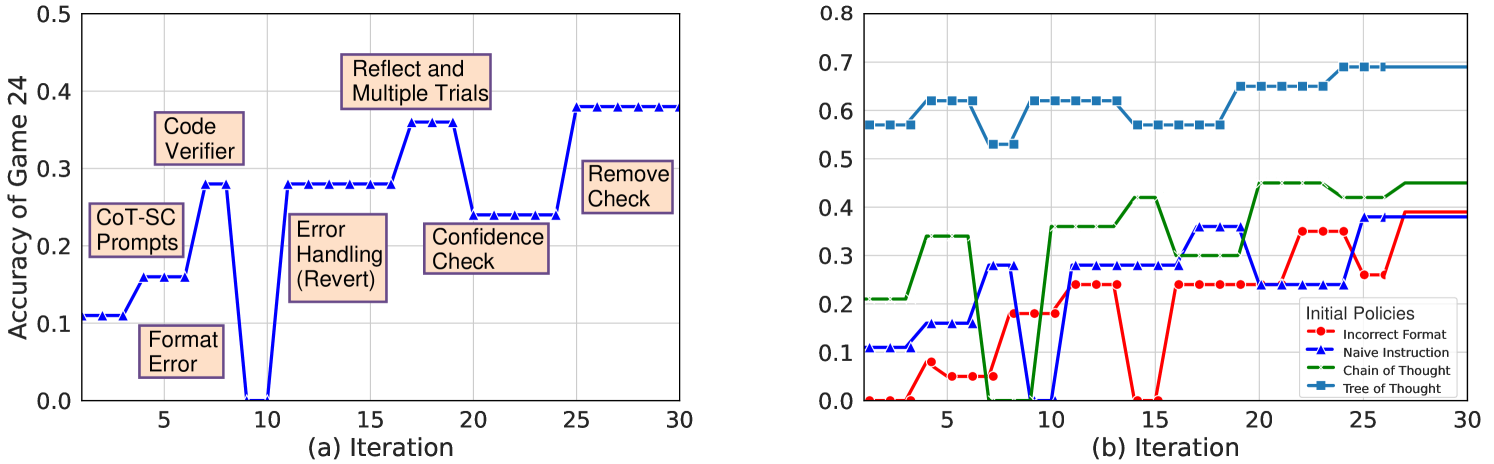

The image presents two line charts, labeled (a) and (b), that plot accuracy on the Game 24 task against iteration number. Chart (a) shows how accuracy changes as successive intervention strategies are applied, while chart (b) compares the performance of different initial policies. Both charts share an x-axis of iteration number, ranging from 0 to 30.

### Components/Axes

**Chart (a):**

* **X-axis:** Iteration (0 to 30)

* **Y-axis:** Accuracy of Game 24 (0.0 to 0.5)

* **Intervention Strategies (represented as steps in the line):**

    * Format Error

    * CoT-SC Prompts

    * Code Verifier

    * Error Handling (Revert)

    * Confidence Check

    * Reflect and Multiple Trials

    * Remove Check

**Chart (b):**

* **X-axis:** Iteration (0 to 30)

* **Y-axis:** Accuracy of Game 24 (0.0 to 0.8)

* **Initial Policies (represented by different colored lines):**

    * Incorrect Format (Red)

    * Naive Instruction (Orange)

    * Chain of Thought (Green)

    * Tree of Thought (Blue)

* **Legend:** Located in the top-right corner, clearly labeling each policy with its corresponding color.

### Detailed Analysis

**Chart (a):**

The line starts at approximately 0.05 at iteration 0. It rises sharply to around 0.25 at iteration 5 (Format Error), then increases to approximately 0.35 at iteration 8 (CoT-SC Prompts) and to around 0.45 at iteration 12 (Code Verifier). A dip to approximately 0.3 at iteration 16 (Error Handling (Revert)) is followed by a rise to around 0.4 at iteration 18 (Confidence Check). The line reaches approximately 0.48 at iteration 20 (Reflect and Multiple Trials) and then plateaus around 0.65 from iteration 22 to 30 (Remove Check).
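To make the step-by-step changes in chart (a) easier to compare, the readings above can be tabulated and differenced. The following is a minimal sketch, assuming the approximate values quoted in the text; the names `readings` and `step_changes` are hypothetical, and the numbers are eyeballed from the chart rather than exact data.

```python
# Approximate (iteration, accuracy, intervention) readings transcribed from
# the description of chart (a). These are estimates, not exact data.
readings = [
    (0, 0.05, "start"),
    (5, 0.25, "Format Error"),
    (8, 0.35, "CoT-SC Prompts"),
    (12, 0.45, "Code Verifier"),
    (16, 0.30, "Error Handling (Revert)"),
    (18, 0.40, "Confidence Check"),
    (20, 0.48, "Reflect and Multiple Trials"),
    (22, 0.65, "Remove Check"),  # start of the plateau described in the text
]


def step_changes(points):
    """Return (intervention, accuracy change) pairs for consecutive readings."""
    changes = []
    for (_, prev_acc, _), (_, acc, label) in zip(points, points[1:]):
        changes.append((label, round(acc - prev_acc, 2)))
    return changes


changes = step_changes(readings)

# Interventions whose reading is lower than the previous one.
drops = [label for label, delta in changes if delta < 0]

for label, delta in changes:
    print(f"{label:<30} {delta:+.2f}")
print("Steps with a drop:", drops)
```

With these readings, the only step that lowers accuracy is Error Handling (Revert), which matches the dip described at iteration 16.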

**Chart (b):**

* **Incorrect Format (Red):** Starts at approximately 0.1 at iteration 0, fluctuates between 0.1 and 0.35, with a peak around 0.35 at iteration 20, and ends at approximately 0.25 at iteration 30.

* **Naive Instruction (Orange):** Starts at approximately 0.2 at iteration 0, rises to around 0.35 at iteration 5, fluctuates between 0.25 and 0.4, and ends at approximately 0.3 at iteration 30.

* **Chain of Thought (Green):** Starts at approximately 0.6 at iteration 0, drops to around 0.55 at iteration 5, rises to approximately 0.7 at iteration 10, and remains relatively stable around 0.65-0.7 from iteration 15 to 30.

* **Tree of Thought (Blue):** Starts at approximately 0.62 at iteration 0, drops to around 0.58 at iteration 5, rises sharply to approximately 0.7 at iteration 10, and remains stable around 0.7 from iteration 15 to 30.
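The four policies can be compared on their start and end readings. This is a rough sketch under stated assumptions: the values are the approximate iteration 0 and iteration 30 readings quoted above, the midpoint 0.675 stands in for the "0.65-0.7" range given for Chain of Thought, and the names `policies`, `rank_by_final`, and `gains` are hypothetical.

```python
# Approximate (start, end) accuracies at iteration 0 and iteration 30, taken
# from the description of chart (b). Chain of Thought's end value uses the
# midpoint of the stated 0.65-0.7 range, which is an assumption.
policies = {
    "Incorrect Format": (0.10, 0.25),
    "Naive Instruction": (0.20, 0.30),
    "Chain of Thought": (0.60, 0.675),
    "Tree of Thought": (0.62, 0.70),
}


def rank_by_final(data):
    """Return policy names ordered from highest to lowest end accuracy."""
    return sorted(data, key=lambda name: data[name][1], reverse=True)


def gains(data):
    """Return the end-minus-start accuracy change for each policy."""
    return {name: round(end - start, 3) for name, (start, end) in data.items()}


ranking = rank_by_final(policies)
policy_gains = gains(policies)

for name in ranking:
    start, end = policies[name]
    print(f"{name:<18} start={start:.2f} end={end:.3f} gain={policy_gains[name]:+.3f}")
```

Under these approximate readings, the ranking by end accuracy is Tree of Thought, Chain of Thought, Naive Instruction, Incorrect Format. The two weaker initial policies show larger absolute gains but still finish well below the other two.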

### Key Observations

* In Chart (a), the "Remove Check" intervention appears to stabilize the accuracy at a relatively high level.

* In Chart (b), "Tree of Thought" and "Chain of Thought" policies consistently outperform "Incorrect Format" and "Naive Instruction" policies.

* "Tree of Thought" and "Chain of Thought" policies show an initial dip in accuracy at iteration 5 before improving.

* "Incorrect Format" policy exhibits the lowest overall accuracy.

### Interpretation

The data suggest that the successive interventions in Chart (a) substantially improve accuracy on Game 24, from approximately 0.05 at iteration 0 to the plateau that follows the "Remove Check" step. The interventions largely build on one another, but the gains are not monotonic: accuracy dips at iteration 16 (Error Handling (Revert)) before recovering. The stabilizing effect of "Remove Check" could indicate that removing potentially erroneous elements helps maintain high performance.

The comparison of initial policies in Chart (b) shows that more sophisticated approaches like "Tree of Thought" and "Chain of Thought" are substantially more effective than simpler ones like "Incorrect Format" and "Naive Instruction": the former start near 0.6 and settle around 0.65-0.7, while the latter stay at or below about 0.4 throughout. The initial dip in accuracy for "Tree of Thought" and "Chain of Thought" at iteration 5 might indicate an adjustment phase before the policy improves. Their consistently high performance suggests they are better equipped to handle the complexities of the game.