
## Diagram: Skill Matching for LLM Reasoning

### Overview

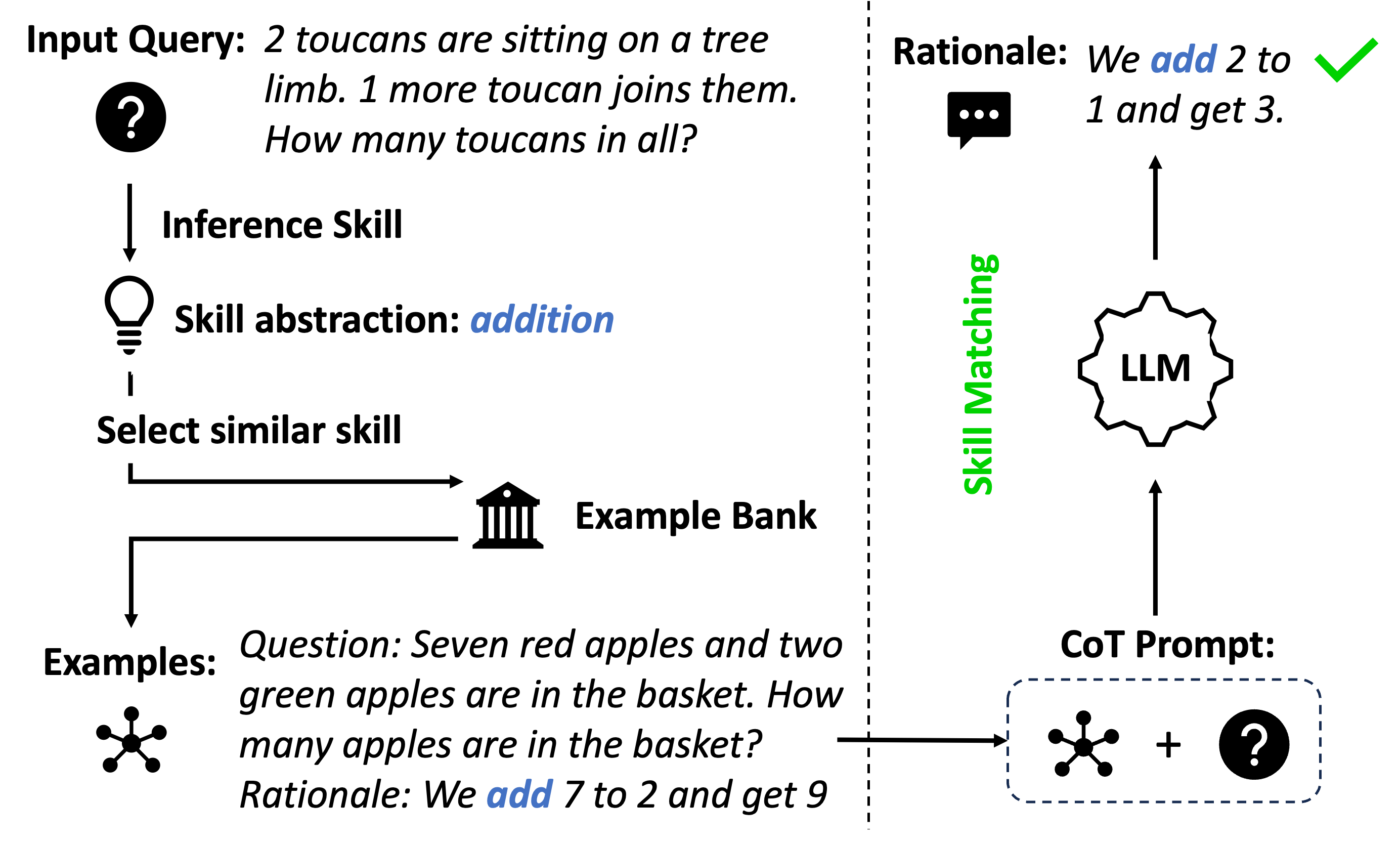

This diagram illustrates a process for skill matching in the context of Large Language Models (LLMs) to improve reasoning capabilities. It depicts how an input query is processed to identify the required skill, retrieve relevant examples, and generate a Chain-of-Thought (CoT) prompt for the LLM. The diagram is segmented into distinct areas representing input, skill abstraction, example retrieval, and LLM interaction.

### Components

The diagram consists of the following components:

* **Input Query:** A text box containing the initial question: "2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"

* **Inference Skill:** A label marking the step in which the skill needed to answer the query is inferred.

* **Skill Abstraction:** A label stating the abstracted skill: "addition".

* **Select Similar Skill:** A directional arrow indicating the selection of a similar skill.

* **Example Bank:** A stack of books representing a repository of examples.

* **Examples:** A text box containing an example question and rationale: "Question: Seven red apples and two green apples are in the basket. How many apples are in the basket? Rationale: We add 7 to 2 and get 9".

* **CoT Prompt:** A dashed box containing symbols representing the prompt sent to the LLM.

* **LLM:** A hexagonal shape representing the Large Language Model.

* **Skill Matching:** A label indicating the connection between the example bank and the LLM.

* **Rationale:** A text box containing the rationale for the input query: "We add 2 to 1 and get 3".

* **Icons:** Various icons representing the different stages of the process (question mark, lightbulb, stack of books, etc.).

* **Arrows:** Arrows indicating the flow of information between the components.

### Detailed Analysis

The diagram shows a flow of information starting from the "Input Query" and progressing through several stages:

1. **Input Query:** The initial question is "2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?".

2. **Inference Skill:** The system infers which skill is needed to solve the problem.

3. **Skill Abstraction:** The skill is abstracted as "addition".

4. **Example Bank:** The system searches the "Example Bank" for similar examples.

5. **Examples:** An example is retrieved: "Question: Seven red apples and two green apples are in the basket. How many apples are in the basket? Rationale: We add 7 to 2 and get 9".

6. **CoT Prompt:** A "CoT Prompt" is generated, containing symbols representing the question and a placeholder for the answer.

7. **LLM:** The prompt is sent to the "LLM".

8. **Skill Matching:** The "Skill Matching" process connects the example bank to the LLM.

9. **Rationale:** The rationale for the input query is "We add 2 to 1 and get 3".
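The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method behind the diagram: the `Example` class, the `abstract_skill` keyword heuristic, and the exact prompt layout are all hypothetical, and the final LLM call is left out. Only the question and rationale texts come from the diagram.

```python
from dataclasses import dataclass


@dataclass
class Example:
    skill: str
    question: str
    rationale: str


# Hypothetical example bank; its single entry is the example shown in the diagram.
EXAMPLE_BANK = [
    Example(
        skill="addition",
        question=(
            "Seven red apples and two green apples are in the basket. "
            "How many apples are in the basket?"
        ),
        rationale="We add 7 to 2 and get 9",
    ),
]


def abstract_skill(query: str) -> str:
    """Stand-in for the skill-abstraction step (keyword heuristic, an assumption)."""
    cues = ("joins", "more", "in all", "altogether")
    return "addition" if any(cue in query.lower() for cue in cues) else "unknown"


def retrieve_examples(skill: str, bank: list[Example]) -> list[Example]:
    """Return the bank entries whose skill label equals the abstracted skill."""
    return [ex for ex in bank if ex.skill == skill]


def build_cot_prompt(query: str, examples: list[Example]) -> str:
    """Put the retrieved question/rationale pairs first, then the open query."""
    parts = [f"Question: {ex.question}\nRationale: {ex.rationale}" for ex in examples]
    parts.append(f"Question: {query}\nRationale:")
    return "\n\n".join(parts)


query = (
    "2 toucans are sitting on a tree limb. 1 more toucan joins them. "
    "How many toucans in all?"
)
skill = abstract_skill(query)
prompt = build_cot_prompt(query, retrieve_examples(skill, EXAMPLE_BANK))
print(prompt)
```

The prompt ends with an open `Rationale:` line, which is where an LLM would be expected to continue with a rationale such as the one in the diagram.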

The arrows indicate a directional flow:

* From "Input Query" to "Inference Skill".

* From "Inference Skill" to "Skill Abstraction".

* From "Skill Abstraction" to "Select Similar Skill".

* From "Select Similar Skill" to "Example Bank".

* From "Example Bank" to "Examples".

* From "Examples" to "CoT Prompt".

* From "CoT Prompt" to "LLM".

* From "LLM" to "Rationale".
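The arrow list can also be written down as an edge list and walked from its source to check that it forms a single one-way chain. The sketch below only encodes the arrows listed here; the variable names are illustrative.

```python
# Arrows from the diagram description, as (source, destination) pairs.
EDGES = [
    ("Input Query", "Inference Skill"),
    ("Inference Skill", "Skill Abstraction"),
    ("Skill Abstraction", "Select Similar Skill"),
    ("Select Similar Skill", "Example Bank"),
    ("Example Bank", "Examples"),
    ("Examples", "CoT Prompt"),
    ("CoT Prompt", "LLM"),
    ("LLM", "Rationale"),
]

successor = dict(EDGES)
destinations = {dst for _, dst in EDGES}

# The start node is the only source that no arrow points to.
start = next(src for src, _ in EDGES if src not in destinations)

# Follow the arrows until a node with no outgoing arrow is reached.
path = [start]
while path[-1] in successor and len(path) <= len(EDGES):
    path.append(successor[path[-1]])

print(" -> ".join(path))
```

Each node is visited once and the walk stops at "Rationale", so the listed arrows describe a linear pipeline with no arrow returning to an earlier stage.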

### Key Observations

The diagram highlights the role of skill abstraction and example retrieval in guiding the LLM's reasoning. The Chain-of-Thought prompt is a strategy for eliciting step-by-step reasoning from the LLM. The arrows form a one-way pipeline from the input query to the rationale, with no feedback path shown; the LLM's output is informed by the retrieved examples rather than by its own earlier outputs.

### Interpretation

This diagram illustrates a technique for improving the reasoning of LLMs by explicitly identifying the skill a query requires, retrieving examples that exercise the same skill, and constructing a prompt that encourages step-by-step reasoning. The process aims to bridge the gap between the LLM's general knowledge and the specific requirements of a given task. The "Example Bank" suggests a knowledge base of solved problems, and the "Skill Abstraction" step identifies the underlying operation (here, "addition") that links the query to an example about a different surface topic (toucans versus apples).

The diagram suggests that providing the LLM with relevant examples and a structured prompt can improve the accuracy and reliability of its responses. It is a conceptual illustration of a process and contains no data points or numerical values beyond those in the example questions.
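The diagram does not say how the "Select Similar Skill" step measures similarity. As one possible reading, the sketch below picks the bank skill with the highest word-overlap (Jaccard) score against the abstracted skill. The skill descriptions in `BANK_SKILLS` and the choice of Jaccard similarity are assumptions for illustration; a real system might use embeddings or an LLM instead.

```python
def jaccard(a: str, b: str) -> float:
    """Word-level Jaccard similarity between two skill descriptions."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


# Hypothetical skill labels attached to entries in the example bank.
BANK_SKILLS = [
    "addition of two quantities",
    "subtraction of two quantities",
    "unit conversion",
]


def select_similar_skill(query_skill: str, bank_skills: list[str]) -> str:
    """Return the bank skill most similar to the skill abstracted from the query."""
    return max(bank_skills, key=lambda s: jaccard(query_skill, s))


best = select_similar_skill("addition", BANK_SKILLS)
print(best)
```

Matching on the abstracted skill rather than on the raw question text is what lets a query about toucans retrieve an example about apples.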