## Flowchart: Problem-Solving Process with LLM

### Overview

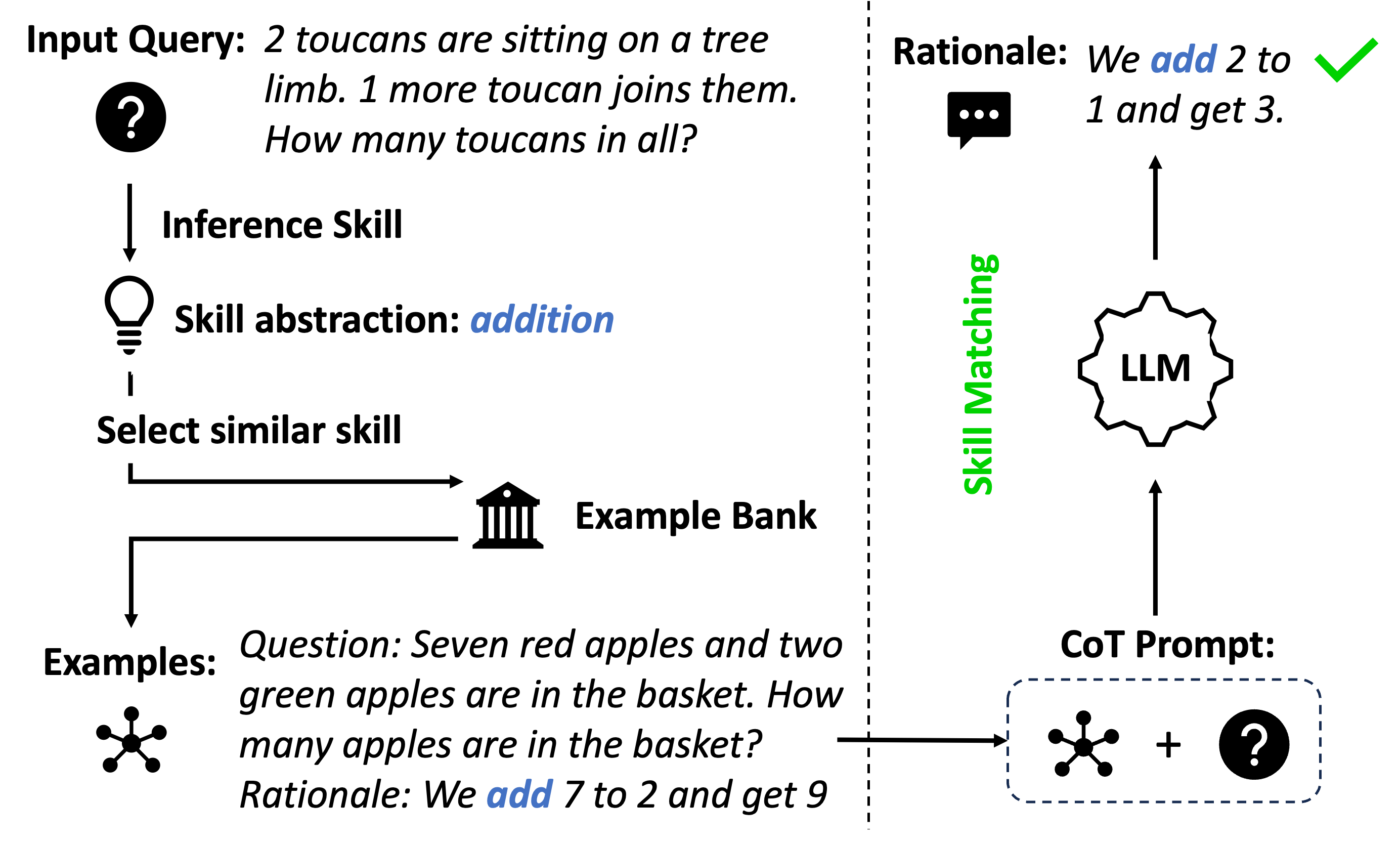

The flowchart illustrates a structured approach to solving arithmetic word problems using a Large Language Model (LLM). It demonstrates how to abstract a problem into a known skill (e.g., addition), retrieve similar examples, and construct a Chain-of-Thought (CoT) prompt to guide the LLM's reasoning.

### Components

1. **Input Query**:

- Text: "2 toucans are sitting on a tree limb. 1 more toucan joins them. How many toucans in all?"

- Symbol: Question mark (?) icon.

2. **Inference Skill**:

- Label: "Inference Skill" with a lightbulb icon.

3. **Skill Abstraction**:

- Text: "Skill abstraction: addition" (highlighted in blue).

4. **Select Similar Skill**:

- Arrows branching to "Example Bank" and "LLM".

5. **Example Bank**:

- Icon: Bank building.

- Example Question: "Seven red apples and two green apples are in the basket. How many apples are in the basket?"

- Rationale: "We add 7 to 2 and get 9."

6. **CoT Prompt**:

- Symbol: Question mark (?) combined with example icon.

- Text: "Question: ... + ?" (partial view).

7. **LLM with Skill Matching**:

- Label: "LLM" inside a gear icon.

- Connection: Green arrow labeled "Skill Matching" links to the LLM.
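The Example Bank and the skill-based selection step can be pictured as a small lookup structure. The following is a minimal sketch, not the method behind the figure: the `Example` class, the `EXAMPLE_BANK` list, and the `select_by_skill` helper are hypothetical names, and exact string matching stands in for the "Skill Matching" step that the diagram assigns to the LLM. Only the addition entry is taken from the figure; the subtraction entry is a placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    skill: str      # abstracted skill label, e.g. "addition"
    question: str
    rationale: str


# Hypothetical bank. Only the addition entry comes from the figure;
# the subtraction entry is an invented placeholder.
EXAMPLE_BANK = [
    Example(
        skill="addition",
        question=(
            "Seven red apples and two green apples are in the basket. "
            "How many apples are in the basket?"
        ),
        rationale="We add 7 to 2 and get 9.",
    ),
    Example(
        skill="subtraction",
        question="Placeholder subtraction question.",
        rationale="Placeholder subtraction rationale.",
    ),
]


def select_by_skill(skill, bank=EXAMPLE_BANK):
    """Return every example whose skill label equals the given skill.

    Assumption: exact, case-insensitive matching. The figure shows the
    LLM performing this matching instead.
    """
    wanted = skill.strip().lower()
    return [ex for ex in bank if ex.skill == wanted]
```

Calling `select_by_skill("addition")` returns the apples example, which is the one the figure pairs with the toucan query.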

### Detailed Analysis

- **Flow Direction**:

- The process starts with the Input Query and passes through Inference Skill and Skill Abstraction. At Select Similar Skill it branches: one arrow leads to the Example Bank, which supplies examples for the CoT Prompt, and the other leads to the LLM.

- **Text Embedded in Diagrams**:

- The Example Bank question and rationale appear as readable text in the diagram and are transcribed above.

- CoT Prompt combines example structure with the original question.

- **Skill Matching**:

- The green arrow indicates the LLM's role in aligning the problem with the abstracted skill (addition).
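The flow described above can be sketched end to end as a single function. This is an illustrative sketch under stated assumptions rather than the implementation behind the figure: `solve` and `fake_llm` are hypothetical names, the instruction wording sent to the model is invented, and `fake_llm` is a canned stub standing in for a real model call so the example runs without any external service.

```python
from typing import Callable


def solve(query: str, llm: Callable[[str], str],
          bank: dict[str, list[tuple[str, str]]]) -> str:
    """Follow the flowchart: abstract the skill, retrieve examples, prompt."""
    # Inference Skill / Skill Abstraction: ask the model to name the skill.
    # (The instruction wording is an assumption, not taken from the figure.)
    skill = llm(f"Name the single arithmetic skill needed to solve: {query}")
    skill = skill.strip().lower()

    # Select Similar Skill: look up examples stored under that skill.
    examples = bank.get(skill, [])

    # CoT Prompt: worked examples first, then the unanswered query.
    shots = [f"Question: {q}\nAnswer: {r}" for q, r in examples]
    prompt = "\n\n".join(shots + [f"Question: {query}\nAnswer:"])

    # LLM: generate the rationale for the query.
    return llm(prompt)


def fake_llm(prompt: str) -> str:
    """Canned stub standing in for a real model (assumption for the demo)."""
    if prompt.startswith("Name the single arithmetic skill"):
        return "addition"
    return "We add 2 to 1 and get 3."


bank = {
    "addition": [(
        "Seven red apples and two green apples are in the basket. "
        "How many apples are in the basket?",
        "We add 7 to 2 and get 9.",
    )],
}
query = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")
answer = solve(query, fake_llm, bank)
```

Passing the model as a plain callable keeps the sketch independent of any particular LLM client library.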

### Key Observations

- The flowchart emphasizes **skill abstraction** as a critical step to map word problems to mathematical operations.

- The Example Bank provides **structured examples** to guide the LLM's reasoning.

- The CoT Prompt merges example patterns with the target problem to scaffold the LLM's response.
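The merging of example patterns with the target problem can be illustrated with a short prompt builder. The figure only shows the prompt partially ("Question: ... + ?"), so the exact layout below, including the `Answer:` label and the blank line between blocks, is an assumption, and `build_cot_prompt` is a hypothetical helper name.

```python
def build_cot_prompt(examples, query):
    """Join (question, rationale) pairs and the target query into one prompt.

    Each example contributes a solved Question/Answer block; the query is
    appended last with an empty answer slot for the model to complete.
    The "Answer:" label is an assumed format, not taken from the figure.
    """
    blocks = [f"Question: {q}\nAnswer: {r}" for q, r in examples]
    blocks.append(f"Question: {query}\nAnswer:")
    return "\n\n".join(blocks)


examples = [(
    "Seven red apples and two green apples are in the basket. "
    "How many apples are in the basket?",
    "We add 7 to 2 and get 9.",
)]
query = ("2 toucans are sitting on a tree limb. 1 more toucan joins them. "
         "How many toucans in all?")

prompt = build_cot_prompt(examples, query)
```

The resulting string contains the solved apples example followed by the toucan question with an open answer, which is the scaffold the LLM is expected to continue.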

### Interpretation

This diagram demonstrates a **prompt engineering strategy** for arithmetic problem-solving with LLMs. By abstracting problems into known skills (e.g., addition) and using example-based prompting, the process is intended to reduce ambiguity and improve accuracy. The LLM acts as a "reasoning engine" that matches problems to skills and generates step-by-step solutions. The green checkmark on the rationale for the input query ("We add 2 to 1 and get 3") marks that answer as correct, which visually confirms that the abstracted skill was applied properly.