
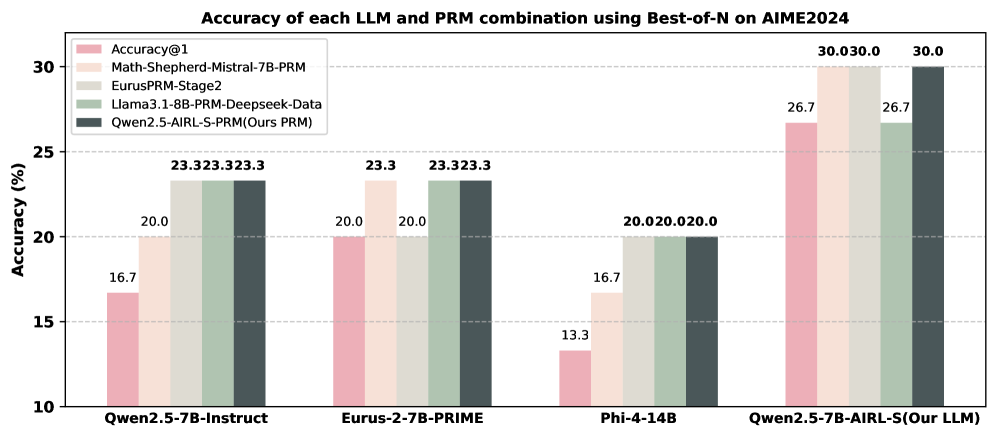

## Grouped Bar Chart: Accuracy of LLM and PRM Combinations on AIME2024

### Overview

This image is a grouped bar chart comparing four Large Language Models (LLMs), each evaluated under five conditions: an `Accuracy@1` baseline and four Process Reward Models (PRMs). The performance metric is accuracy percentage, measured using a "Best-of-N" sampling strategy on the AIME2024 benchmark. Across the four LLMs, the choice of PRM changes final accuracy by between 0 and about 6.7 percentage points relative to the baseline.

### Components/Axes

* **Chart Title:** "Accuracy of each LLM and PRM combination using Best-of-N on AIME2024"

* **Y-Axis:**

    * **Label:** "Accuracy (%)"

    * **Scale:** Linear scale from 10 to 30, with major gridlines at intervals of 5 (10, 15, 20, 25, 30).

* **X-Axis:** Represents four distinct LLMs. The labels are:

    1. `Qwen2.5-7B-Instruct`

    2. `Eurus-2-7B-PRIME`

    3. `Phi-4-14B`

    4. `Qwen2.5-7B-AIRL-S(Our LLM)`

* **Legend:** Located in the top-left corner of the plot area. It defines five data series, each associated with a specific color and label:

    1. **Pink:** `Accuracy@1`

    2. **Light Peach:** `Math-Shepherd-Mistral-7B-PRM`

    3. **Light Gray:** `EurusPRM-Stage2`

    4. **Sage Green:** `Llama3.1-8B-PRM-Deepseek-Data`

    5. **Dark Gray:** `Qwen2.5-AIRL-S-PRM(Ours PRM)`

### Detailed Analysis

The chart displays four groups of bars, one for each LLM on the x-axis. Each group contains five bars corresponding to the five PRM/evaluation methods from the legend. The numerical accuracy value is annotated above each bar.

**1. LLM: Qwen2.5-7B-Instruct**

* **Accuracy@1 (Pink):** 16.7%

* **Math-Shepherd-Mistral-7B-PRM (Light Peach):** 20.0%

* **EurusPRM-Stage2 (Light Gray):** 23.3%

* **Llama3.1-8B-PRM-Deepseek-Data (Sage Green):** 23.3%

* **Qwen2.5-AIRL-S-PRM (Dark Gray):** 23.3%

* *Trend:* Accuracy increases from the baseline `Accuracy@1` with all PRMs, plateauing at 23.3% for the last three methods.

**2. LLM: Eurus-2-7B-PRIME**

* **Accuracy@1 (Pink):** 20.0%

* **Math-Shepherd-Mistral-7B-PRM (Light Peach):** 23.3%

* **EurusPRM-Stage2 (Light Gray):** 20.0%

* **Llama3.1-8B-PRM-Deepseek-Data (Sage Green):** 23.3%

* **Qwen2.5-AIRL-S-PRM (Dark Gray):** 23.3%

* *Trend:* Performance is mixed. `Math-Shepherd`, `Llama3.1-PRM`, and `Qwen2.5-PRM` improve accuracy to 23.3%, while `EurusPRM-Stage2` matches the baseline `Accuracy@1` at 20.0%.

**3. LLM: Phi-4-14B**

* **Accuracy@1 (Pink):** 13.3%

* **Math-Shepherd-Mistral-7B-PRM (Light Peach):** 16.7%

* **EurusPRM-Stage2 (Light Gray):** 20.0%

* **Llama3.1-8B-PRM-Deepseek-Data (Sage Green):** 20.0%

* **Qwen2.5-AIRL-S-PRM (Dark Gray):** 20.0%

* *Trend:* A clear stepwise improvement. `Accuracy@1` is the lowest (13.3%). `Math-Shepherd` provides a boost to 16.7%. The final three PRMs (`EurusPRM`, `Llama3.1-PRM`, `Qwen2.5-PRM`) all achieve the same higher accuracy of 20.0%.

**4. LLM: Qwen2.5-7B-AIRL-S(Our LLM)**

* **Accuracy@1 (Pink):** 26.7%

* **Math-Shepherd-Mistral-7B-PRM (Light Peach):** 30.0%

* **EurusPRM-Stage2 (Light Gray):** 30.0%

* **Llama3.1-8B-PRM-Deepseek-Data (Sage Green):** 26.7%

* **Qwen2.5-AIRL-S-PRM (Dark Gray):** 30.0%

* *Trend:* This LLM shows the highest overall performance. The baseline `Accuracy@1` is already the highest of the four LLMs at 26.7%. `Math-Shepherd`, `EurusPRM`, and the authors' own `Qwen2.5-AIRL-S-PRM` all raise accuracy to the chart's maximum of 30.0%. `Llama3.1-PRM` matches the baseline at 26.7%.
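The per-group readings above can be cross-checked mechanically. The following is a minimal sketch that transcribes the annotated values into a dictionary and computes each PRM's gain over `Accuracy@1`. The names `METHODS`, `ACCURACY`, `gains_over_baseline`, and `top_methods` are illustrative and do not come from the chart or its source.

```python
# Accuracy (%) values transcribed from the bar annotations listed above.
# Each list follows the legend order given in METHODS.
METHODS = [
    "Accuracy@1",
    "Math-Shepherd-Mistral-7B-PRM",
    "EurusPRM-Stage2",
    "Llama3.1-8B-PRM-Deepseek-Data",
    "Qwen2.5-AIRL-S-PRM",
]

ACCURACY = {
    "Qwen2.5-7B-Instruct": [16.7, 20.0, 23.3, 23.3, 23.3],
    "Eurus-2-7B-PRIME": [20.0, 23.3, 20.0, 23.3, 23.3],
    "Phi-4-14B": [13.3, 16.7, 20.0, 20.0, 20.0],
    "Qwen2.5-7B-AIRL-S": [26.7, 30.0, 30.0, 26.7, 30.0],
}


def gains_over_baseline(scores):
    """Map each PRM to its percentage-point gain over Accuracy@1.

    Gains inherit the one-decimal rounding of the chart annotations,
    so a step may appear as 3.3 or 3.4.
    """
    baseline = scores[0]
    return {m: round(s - baseline, 1) for m, s in zip(METHODS[1:], scores[1:])}


def top_methods(scores):
    """Return every PRM that reaches the best PRM score for one LLM."""
    best = max(scores[1:])
    return [m for m, s in zip(METHODS[1:], scores[1:]) if s == best]


for llm, scores in ACCURACY.items():
    print(llm, gains_over_baseline(scores), top_methods(scores))
```

Running the loop shows that no PRM gain is negative and that `Qwen2.5-AIRL-S-PRM` appears in the top set for every LLM.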

### Key Observations

1. **Top Performer:** The highest accuracy achieved is **30.0%**, reached by three different PRMs (`Math-Shepherd`, `EurusPRM`, `Qwen2.5-PRM`) when applied to the `Qwen2.5-7B-AIRL-S` LLM.

2. **PRM Impact:** For every LLM, using a PRM (any of the last four bars) results in equal or higher accuracy compared to the `Accuracy@1` baseline (the pink bar, first in each group).

3. **Proposed Method Performance:** The authors' proposed PRM, `Qwen2.5-AIRL-S-PRM` (dark gray bars), is consistently among the top-performing methods for each LLM. It ties for the highest score in all four LLM groups (23.3%, 23.3%, 20.0%, and 30.0%), but it is never the sole best method.

4. **LLM Baseline Variation:** The baseline `Accuracy@1` varies significantly across LLMs, from a low of 13.3% (`Phi-4-14B`) to a high of 26.7% (`Qwen2.5-7B-AIRL-S`).

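5. **Performance Plateaus:** In several cases (e.g., the last three PRMs for `Qwen2.5-7B-Instruct` and `Phi-4-14B`), different PRMs converge to the exact same accuracy score. This may reflect a performance ceiling for that specific LLM-benchmark combination, although every value on the chart falls on a grid of roughly 3.3-point steps, so exact ties are also expected from the coarse granularity of the scores.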
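The repeated values in the observations above are easier to interpret as problem counts. The sketch below assumes the benchmark contains 30 problems (AIME 2024 I and II, 15 problems each). The chart itself does not state the problem count, so `N_PROBLEMS` is an assumption, and the helper names are illustrative.

```python
N_PROBLEMS = 30  # assumption: AIME 2024 I and II combined, 15 problems each


def solved_count(accuracy_pct, n_problems=N_PROBLEMS):
    """Nearest whole number of solved problems implied by a rounded percentage."""
    return round(accuracy_pct / 100 * n_problems)


def is_consistent(accuracy_pct, n_problems=N_PROBLEMS, tol=0.05):
    """Check that the percentage matches k / n_problems to one-decimal rounding."""
    k = solved_count(accuracy_pct, n_problems)
    return abs(100 * k / n_problems - accuracy_pct) <= tol + 1e-9


# The six distinct accuracy values that appear on the chart.
for pct in [13.3, 16.7, 20.0, 23.3, 26.7, 30.0]:
    print(pct, solved_count(pct), is_consistent(pct))
```

Under this assumption the chart spans 4 to 9 solved problems, and each step between adjacent values corresponds to a single problem, so ties between PRMs mean the selected answers were correct on the same number of problems.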

### Interpretation

This chart serves as an ablation study or comparative analysis within the field of AI reasoning and mathematical problem-solving (as AIME is a math competition benchmark). The data suggests several key insights:

* **PRMs are Crucial:** PRM-based selection never falls below `Accuracy@1` and improves on it in 14 of the 16 LLM-PRM combinations (the other two match the baseline). This indicates that using a Process Reward Model to re-rank or select among multiple generated solutions (Best-of-N) is an effective strategy for boosting LLM performance on complex reasoning tasks.

* **Model Synergy Matters:** The effectiveness of a PRM is not absolute; it depends on the base LLM it is paired with. For example, `EurusPRM-Stage2` performs well with `Qwen2.5-7B-Instruct` but only matches the baseline with its namesake `Eurus-2-7B-PRIME`. This highlights the importance of compatibility between the generator (LLM) and the verifier (PRM).

* **Authors' Contribution:** The chart is likely from a research paper introducing the `Qwen2.5-7B-AIRL-S` LLM and/or the `Qwen2.5-AIRL-S-PRM`. The data positions their contributions favorably: their LLM has the highest baseline and peak accuracy, and their PRM ties for the best score with all four LLMs. Their PRM reaches the maximum 30.0% accuracy with their own LLM, although `Math-Shepherd` and `EurusPRM` reach the same score, so the chart alone does not show an advantage specific to that pairing.

* **Diminishing Returns:** The performance plateaus indicate that for a given LLM and problem difficulty, there may be a maximum achievable accuracy with current PRM techniques. Breaking through this ceiling might require fundamental improvements in the base LLM's reasoning capabilities or the PRM's verification logic.
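To make the Best-of-N selection discussed in the first bullet concrete, here is a minimal sketch of PRM-guided selection. It is not the authors' implementation: `toy_prm` is a hypothetical stand-in for a real PRM, and aggregating step scores with `min` is one common choice assumed here (other aggregations, such as the last-step score, are possible).

```python
from typing import Callable, List, Sequence


def best_of_n(
    candidates: Sequence[List[str]],
    score_steps: Callable[[List[str]], List[float]],
    aggregate: Callable[[List[float]], float] = min,
) -> List[str]:
    """Return the candidate solution with the highest aggregated step score.

    candidates:  N sampled solutions, each a list of reasoning steps.
    score_steps: a PRM-like function returning one score per step.
    aggregate:   reduces step scores to one number (assumed: min).
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    return max(candidates, key=lambda steps: aggregate(score_steps(steps)))


def toy_prm(steps: List[str]) -> List[float]:
    """Hypothetical scorer: penalizes steps that contain the word 'guess'."""
    return [0.1 if "guess" in step else 0.9 for step in steps]


candidates = [
    ["set up equation", "guess the root", "answer: 7"],
    ["set up equation", "factor the quadratic", "answer: 5"],
]
chosen = best_of_n(candidates, toy_prm)
print(chosen[-1])  # prints "answer: 5"
```

With N = 1 the function returns the only sample, which corresponds to the `Accuracy@1` setting. Larger N gives the scorer more candidates to choose from, and the quality of its ranking then determines how much of that headroom is realized.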