## Grouped Bar Chart: Token Type Fraction vs. Average Accuracy

### Overview

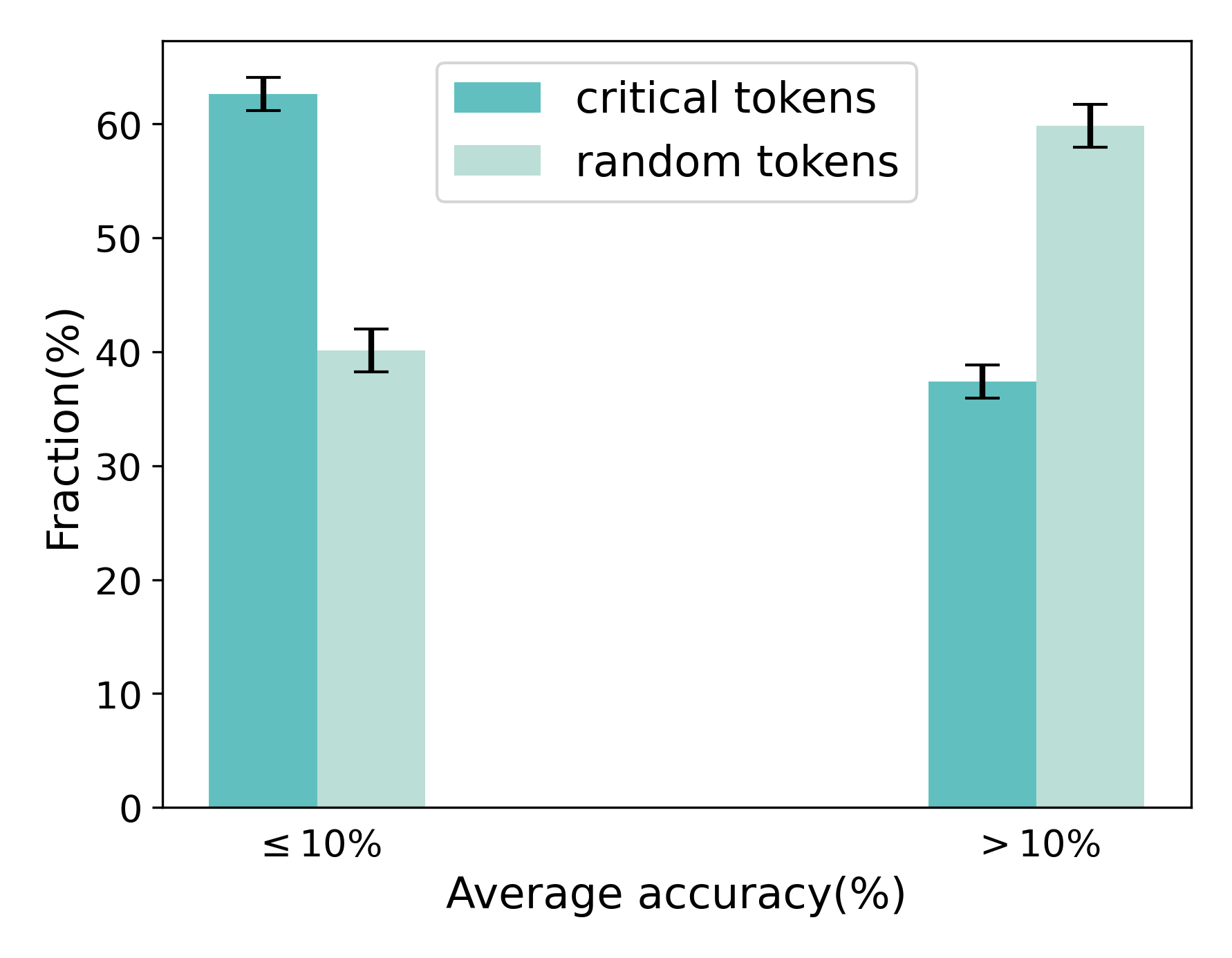

The image displays a grouped bar chart comparing the fraction (in percent) of two token types ("critical tokens" and "random tokens") across two bins of average accuracy. Each bar has an error bar; it is not specified whether these represent standard deviation, standard error, or a confidence interval.

### Components/Axes

* **Chart Type:** Grouped bar chart with error bars.

* **Y-Axis:**

  * **Label:** `Fraction(%)`

  * **Scale:** Linear, with major tick marks at intervals of 10 from 0 to 60 (0, 10, 20, 30, 40, 50, 60). The tallest bar (about 62%, with an error bar reaching roughly 64%) extends slightly above the top labeled tick.

* **X-Axis:**

  * **Label:** `Average accuracy(%)`

  * **Categories:** Two discrete categories are plotted:

    1. `≤ 10%` (less than or equal to 10 percent)

    2. `> 10%` (greater than 10 percent)

* **Legend:**

  * **Position:** Top-center of the plot area.

  * **Series:**

    1. `critical tokens`, represented by a teal bar.

    2. `random tokens`, represented by a light green bar.

* **Data Series & Spatial Grounding:**

  * For each x-axis category, two bars are placed side by side. The left bar in each pair corresponds to "critical tokens" (teal), and the right bar corresponds to "random tokens" (light green).
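The layout described above can be approximated with a short matplotlib sketch. The bar heights and the ±2 error half-widths are the approximate readings reported under Detailed Analysis, not the underlying data; the color names, bar width, figure size, and output filename are assumptions chosen for illustration.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend so the script runs without a display
import matplotlib.pyplot as plt

categories = ["≤ 10%", "> 10%"]

# Approximate values read off the chart description (percent); not the source data.
critical = [62, 37]
random_tokens = [40, 60]
half_width = [2, 2]  # assumed symmetric error bars of about +/- 2 points

bar_width = 0.35
x = [0, 1]

fig, ax = plt.subplots(figsize=(5, 4))

# Left bar of each pair: critical tokens; right bar: random tokens.
ax.bar([i - bar_width / 2 for i in x], critical, bar_width,
       yerr=half_width, capsize=4, color="teal", label="critical tokens")
ax.bar([i + bar_width / 2 for i in x], random_tokens, bar_width,
       yerr=half_width, capsize=4, color="lightgreen", label="random tokens")

ax.set_xticks(x)
ax.set_xticklabels(categories)
ax.set_xlabel("Average accuracy(%)")
ax.set_ylabel("Fraction(%)")
ax.set_yticks(range(0, 61, 10))  # major ticks at 0, 10, ..., 60
ax.legend(loc="upper center")

fig.savefig("grouped_bar_sketch.png", dpi=150)
```

The result is only a visual approximation: exact colors, spacing, and error-bar semantics in the original figure may differ.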

### Detailed Analysis

**Category 1: Average accuracy ≤ 10%**

* **Critical Tokens (Teal Bar, Left):** The bar height indicates a fraction of approximately **62%**. An error bar extends from roughly 60% to 64%.

* **Random Tokens (Light Green Bar, Right):** The bar height indicates a fraction of approximately **40%**. An error bar extends from roughly 38% to 42%.

**Category 2: Average accuracy > 10%**

* **Critical Tokens (Teal Bar, Left):** The bar height indicates a fraction of approximately **37%**. An error bar extends from roughly 35% to 39%.

* **Random Tokens (Light Green Bar, Right):** The bar height indicates a fraction of approximately **60%**. An error bar extends from roughly 58% to 62%.

**Trend Verification:**

* The fraction of **critical tokens** is **lower** in the higher-accuracy bin, dropping from ~62% at `≤ 10%` to ~37% at `> 10%` (about 25 percentage points).

* The fraction of **random tokens** is **higher** in the higher-accuracy bin, rising from ~40% at `≤ 10%` to ~60% at `> 10%` (about 20 percentage points).
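The arithmetic behind these comparisons can be checked with a few lines of Python. The numbers are the approximate bar heights quoted above, and the dictionary layout and helper name are illustrative assumptions. The sketch also sums the values per series and per category, which hints at how the fractions may be normalized.

```python
# Approximate bar heights from the chart description (percent); not the source data.
fractions = {
    "critical tokens": {"<=10%": 62, ">10%": 37},
    "random tokens": {"<=10%": 40, ">10%": 60},
}


def change_in_points(series):
    """Percentage-point change from the <=10% bin to the >10% bin."""
    return series[">10%"] - series["<=10%"]


# Change per token type between the two accuracy bins.
deltas = {name: change_in_points(s) for name, s in fractions.items()}

# Sum across bins for each token type (close to 100 if each series
# is a distribution over the accuracy bins).
series_totals = {name: sum(s.values()) for name, s in fractions.items()}

# Sum across token types within each bin.
category_totals = {
    cat: sum(s[cat] for s in fractions.values()) for cat in ("<=10%", ">10%")
}

print(deltas)           # {'critical tokens': -25, 'random tokens': 20}
print(series_totals)    # {'critical tokens': 99, 'random tokens': 100}
print(category_totals)  # {'<=10%': 102, '>10%': 97}
```

Each series sums to roughly 100% across the two bins, while the bars within a bin do not, which suggests (but does not prove) that each token type is normalized separately across the accuracy bins.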

### Key Observations

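1. **Inverse Relationship:** The two token types move in opposite directions across the accuracy categories: where the critical-token fraction is high, the random-token fraction is low, and vice versa.

2. **Taller Bar Flips:** In the `≤ 10%` category, the critical-token bar is taller (~62% vs. ~40%). In the `> 10%` category, the random-token bar is taller (~60% vs. ~37%). The two bars within a category sum to about 102% and 97%, whereas the two bars of each series sum to about 99% and 100%, so each series appears to be normalized across the accuracy categories rather than the two token types sharing one whole within a category.

3. **Magnitude of Change:** The change between the two accuracy categories is substantial for both token types: about 25 percentage points for critical tokens (~62% to ~37%) and about 20 percentage points for random tokens (~40% to ~60%).

4. **Error Bars:** Each error bar spans about ±2 percentage points, while the gaps between the compared bars are roughly 20 points or more, so the intervals do not overlap. This suggests the differences are unlikely to be noise, although the chart does not state what the error bars represent, and non-overlap is not a formal significance test.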
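The error-bar observation can be made concrete with a small interval check. This is a minimal sketch using the approximate error-bar ranges quoted under Detailed Analysis; the helper names are hypothetical, and disjoint intervals are treated only as an informal indicator, not a statistical test.

```python
# Approximate error-bar ranges (low, high) in percent, from the chart description.
error_bars = {
    ("critical tokens", "<=10%"): (60, 64),
    ("random tokens", "<=10%"): (38, 42),
    ("critical tokens", ">10%"): (35, 39),
    ("random tokens", ">10%"): (58, 62),
}


def intervals_overlap(a, b):
    """True if the closed intervals a and b share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


def gap(a, b):
    """Distance between two intervals; positive only when they are disjoint."""
    return max(a[0], b[0]) - min(a[1], b[1])


# Comparisons of interest: between token types within a bin,
# and between bins within a token type.
comparisons = [
    (("critical tokens", "<=10%"), ("random tokens", "<=10%")),
    (("critical tokens", ">10%"), ("random tokens", ">10%")),
    (("critical tokens", "<=10%"), ("critical tokens", ">10%")),
    (("random tokens", "<=10%"), ("random tokens", ">10%")),
]

results = [
    (left, right,
     intervals_overlap(error_bars[left], error_bars[right]),
     gap(error_bars[left], error_bars[right]))
    for left, right in comparisons
]

for left, right, overlaps, distance in results:
    print(left, "vs", right, "| overlap:", overlaps, "| gap:", distance)
```

With these readings, none of the four compared pairs overlap, and the smallest gap between intervals is 16 percentage points.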

### Interpretation

This chart suggests that the two token types are distributed differently across the accuracy categories: critical tokens are associated mostly with low average accuracy, and random tokens mostly with higher average accuracy.

* **Low Accuracy (≤ 10%):** Each series sums to roughly 100% across the two categories, so the bars are most naturally read as per-token-type distributions. On that reading, about 62% of critical tokens fall in this category, compared with about 40% of random tokens. Critical tokens are therefore over-represented where average accuracy is low, which is consistent with them marking key, high-stakes, or error-prone points in the task.

* **Higher Accuracy (> 10%):** The pattern reverses: about 60% of random tokens fall in this category, compared with about 37% of critical tokens. Because this category covers everything above 10%, it is better described as "not low" than as strictly high accuracy.

**Underlying Question:** The chart does not define "critical" or "random" tokens, nor does it state what average accuracy is computed over, so those definitions are needed before drawing causal conclusions. What the chart does show is that the two token types differ sharply in how they are associated with accuracy. This relationship is relevant for understanding model behavior and for diagnosing failure modes, since critical tokens are concentrated in the low-accuracy category while random tokens are more prevalent in the higher-accuracy one.